SAP Hybris - Data Hub Installation


former_member29


03-14-2017

10:56 AM

SAP Integrations

SAP integrations provide a framework for connecting the Omni-commerce capabilities of SAP Hybris Commerce with other SAP products.

Architecture of SAP Integrations

SAP integrations involve various possible system landscapes, types of communication, data transfer processes, and SAP-specific configuration settings.

For now, let's focus on the Hybris Data Hub concept.

Data Hub

- Data hubs are an important component in information architecture.

- A data hub is a database which is populated with data from one or more sources and from which data is taken to one or more destinations.

- A database that is situated between one source and one destination is more appropriately termed a “staging area”.

Hybris Data Hub

- It receives data from and sends data to SAP ERP or hybris (data replication in both directions).

Example: Hybris Data Hub can replicate product master data from SAP ERP to hybris, or send orders from hybris to SAP ERP.

- It can be used to connect hybris to non-SAP systems.

Execution takes place in the following major steps:

- Load (raw format)

- Composition (canonical format)

- Publication (target format)

- It also acts as a staging area where external data can be analysed for errors and corrected before being fed into hybris.

1. Raw items come directly from the source system.

- The data they contain may undergo some preprocessing before entering the Data Hub.

2. Canonical items have been processed by Data Hub and no longer resemble their raw origins.

- They are organised, consistent, and ready for use by a target system.

3. Target items are transition items.

- The process of creating target items reconciles the canonical items to target system requirements.
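The three stages above can be pictured with a small toy model. This is only an illustrative Python sketch, not Data Hub's API: the item fields (`sku`, `name`, `price`), the function names, and the target attribute names are all hypothetical.

```python
from collections import defaultdict

# Hypothetical raw fragments, as they might arrive from a source system.
RAW_ITEMS = [
    {"sku": "1001", "name": " Camera "},
    {"sku": "1001", "price": "199.90"},
    {"sku": "1002", "name": "Tripod", "price": "35"},
]


def compose(raw_items):
    """Composition: merge raw fragments that share a key into canonical items."""
    grouped = defaultdict(dict)
    for raw in raw_items:
        grouped[raw["sku"]].update(raw)
    canonical = []
    for sku, merged in sorted(grouped.items()):
        canonical.append({
            "sku": sku,
            "name": merged.get("name", "").strip(),  # clean up whitespace
            "price": float(merged["price"]) if "price" in merged else None,
        })
    return canonical


def publish(canonical_items):
    """Publication: reshape canonical items into the layout a target expects."""
    return [
        {"code": item["sku"], "displayName": item["name"], "basePrice": item["price"]}
        for item in canonical_items
    ]


canonical = compose(RAW_ITEMS)
target = publish(canonical)
print(target)
```

The two fragments for `1001` end up as one canonical item, which is then renamed field by field for the (hypothetical) target system.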

Feeds and Pools

- Data Hub enables the management of data load and composition with the use of feeds and pools.

- Fragmented items can be loaded into distinct data pools using any number of separate feeds.

- Feeds and Pools allow a fine level of control over how data is segregated and processed.

Data Feeds

- Data feeds enable you to configure how raw fragments enter Data Hub.

- When data is loaded using one of the available input channels, it is passed on to a data feed as specified in the load payload.

- The data feed then delivers raw items for processing to a specified data pool.

Data Pools

- Data pools enable you to isolate and manage your data within Data Hub, for composition and publishing.

- During the composition of raw items into canonical items, only data isolated within each pool is used.
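To make the isolation between pools concrete, here is a minimal Python sketch of feeds routing raw items into pools. It is a toy model under stated assumptions, not Data Hub code: the feed names, the `ERP_POOL` pool, and the `load` and `compose_pool` helpers are hypothetical (only the `GLOBAL` pool name appears in this post's configuration).

```python
from collections import defaultdict

# Hypothetical mapping: each feed delivers its raw items to exactly one pool.
FEED_TO_POOL = {"ERP_FEED": "ERP_POOL", "CSV_FEED": "GLOBAL"}

pools = defaultdict(list)


def load(feed_name, raw_item):
    """Route a raw item to the pool configured for the given feed."""
    pool_name = FEED_TO_POOL[feed_name]
    pools[pool_name].append(raw_item)
    return pool_name


def compose_pool(pool_name):
    """Compose only the raw items held in one pool, merging fragments by key."""
    merged = defaultdict(dict)
    for raw in pools[pool_name]:
        merged[raw["sku"]].update(raw)
    return [merged[sku] for sku in sorted(merged)]


load("ERP_FEED", {"sku": "1001", "name": "Camera"})
load("ERP_FEED", {"sku": "1001", "price": "199.90"})
load("CSV_FEED", {"sku": "1001", "name": "Camera (CSV import)"})

print(compose_pool("ERP_POOL"))
print(compose_pool("GLOBAL"))
```

Although all three fragments describe the same `sku`, the item composed in `ERP_POOL` never sees the fragment loaded into `GLOBAL`, and vice versa.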

Step-by-step procedure for setting up Data Hub on a local system.

Prerequisites:

1. Hybris v6.2.0 (the version I'm using)

2. Apache Tomcat 7.x

3. JDK 1.8

4. MySQL

Step 1:

Create the following folder structure on any drive:

datahub6.2

- config

- crm

- erp

- others

- Open your Tomcat folder -> go to conf -> create a Catalina folder -> inside it, a localhost folder -> create the file datahub-webapp.xml
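If you prefer not to create the folders by hand, the layout from Step 1 could be scripted roughly as follows. This is a sketch under assumptions: `create_datahub_layout` is a hypothetical helper, and it assumes Tomcat's configuration folder is named `conf`, as in a standard Tomcat installation.

```python
from pathlib import Path


def create_datahub_layout(base_dir, tomcat_home):
    """Create the datahub6.2 folders and Tomcat's Catalina/localhost folder."""
    base = Path(base_dir) / "datahub6.2"
    created = []
    for name in ("config", "crm", "erp", "others"):
        folder = base / name
        folder.mkdir(parents=True, exist_ok=True)  # safe to run repeatedly
        created.append(folder)
    # Folder that will hold the datahub-webapp context file.
    context_dir = Path(tomcat_home) / "conf" / "Catalina" / "localhost"
    context_dir.mkdir(parents=True, exist_ok=True)
    created.append(context_dir)
    return created
```

For example, `create_datahub_layout("D:/", "D:/apache-tomcat-7.0.73")` would create the four Data Hub folders under `D:/datahub6.2`; both paths here are placeholders for your own locations.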

Step 2:

Copy the following content into the datahub-webapp XML file:

<Context antiJARLocking="true" docBase="<YOUR_PATH>\hybris\bin\ext-integration\datahub\web-app/datahub-webapp-6.2.0.2-RC13.war" reloadable="true">
  <Loader className="org.apache.catalina.loader.VirtualWebappLoader"
          virtualClasspath="<YOUR_PATH>/datahub6.2/config;
                            <YOUR_PATH>/datahub6.2/crm/*.jar;
                            <YOUR_PATH>/datahub6.2/erp/*.jar;
                            <YOUR_PATH>/datahub6.2/others/*.jar"/>
</Context>

Step 3:

<YOUR_PATH>\hybris\bin\ext-integration\datahub\web-app

- Go to the path above and copy both the path and the name of the datahub-webapp WAR file

Step 4:

Paste the copied path and file name into the docBase attribute of the datahub-webapp XML file in the Tomcat folder:

Open your Tomcat folder -> go to conf -> Catalina folder -> localhost folder -> datahub-webapp
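Steps 2 to 4 amount to filling two locations into a fixed XML template, which can also be generated. The following Python sketch assumes hypothetical install locations (`C:\sap\hybris` and `C:\sap\datahub6.2`) and writes all paths with forward slashes; `build_context_xml` is an illustrative helper, and the WAR file name is the one used in Step 2.

```python
from pathlib import PureWindowsPath
from xml.sax.saxutils import quoteattr

WAR_NAME = "datahub-webapp-6.2.0.2-RC13.war"  # file name taken from Step 2


def build_context_xml(hybris_home, datahub_home):
    """Return the text of the datahub-webapp context file for the given paths."""
    war = PureWindowsPath(
        hybris_home, "bin", "ext-integration", "datahub", "web-app", WAR_NAME
    )
    datahub = PureWindowsPath(datahub_home)
    classpath = ";".join([
        (datahub / "config").as_posix(),
        (datahub / "crm").as_posix() + "/*.jar",
        (datahub / "erp").as_posix() + "/*.jar",
        (datahub / "others").as_posix() + "/*.jar",
    ])
    # quoteattr adds the surrounding quotes and escapes XML special characters.
    return (
        f'<Context antiJARLocking="true" docBase={quoteattr(war.as_posix())} reloadable="true">\n'
        f'  <Loader className="org.apache.catalina.loader.VirtualWebappLoader"\n'
        f'          virtualClasspath={quoteattr(classpath)}/>\n'
        f'</Context>\n'
    )


# Hypothetical install locations; replace them with your own <YOUR_PATH>.
print(build_context_xml(r"C:\sap\hybris", r"C:\sap\datahub6.2"))
```

The printed text can then be saved as the datahub-webapp XML file in Tomcat's Catalina\localhost folder.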

Step 5:

Now start Tomcat.

Go to the following path

<YOUR_PATH>\apache-tomcat-7.0.73\apache-tomcat-7.0.73\bin

- Run startup.bat from the command prompt

- Tomcat then starts automatically in a new command prompt window

Step 6:

Observe the change in the folders after the server starts

Before:

After:

Step 7:

Now go to the config folder inside the datahub6.2 folder created in Step 1:

<YOUR_PATH>\datahub6.2\config

Step 8:

Create the following files:

encryption-key.txt ----------- text file

local.properties ----------- properties file

- Copy the 32-digit key and paste it into the encryption-key.txt file

- Copy the following code into the local.properties file

#DB Setup

dataSource.className=com.mysql.jdbc.jdbc2.optional.MysqlDataSource

dataSource.driverClass=com.mysql.jdbc.Driver

dataSource.jdbcUrl=jdbc:mysql://localhost/datahub?useConfigs=maxPerformance

dataSource.username=root

dataSource.password=root

#media storage

mediaSource.className=com.mysql.jdbc.jdbc2.optional.MysqlDataSource

mediaSource.driverClass=com.mysql.jdbc.Driver

mediaSource.jdbcUrl=jdbc:mysql://localhost/datahub?useConfigs=maxPerformance

mediaSource.username=root

mediaSource.password=root

#Not sure why we need to set it here.

datahub.extension.exportURL=http://localhost:9001/datahubadapter

datahub.extension.username=admin

datahub.extension.password=nimda

#Not sure why we need to set it here.

targetsystem.hybriscore.url=http://localhost:9001/datahubadapter

targetsystem.hybriscore.username=admin

targetsystem.hybriscore.password=nimda

# Encryption

datahub.encryption.key.path=encryption-key.txt

# enable/disable secured attribute value masking

datahub.secure.data.masking.mode=true

# set the masking value

datahub.secure.data.masking.value=*******

kernel.autoInitMode=create-drop

#kernel.autoInitMode=update

#====

sapcoreconfiguration.pool=SAPCONFIGURATION_POOL

#sapcoreconfiguration.autocompose.pools=GLOBAL,SAPCONFIGURATION_POOL

#sapcoreconfiguration.autopublish.targetsystemsbypools=GLOBAL.HybrisCore

datahub.publication.saveImpex=true

datahub.server.url=http://localhost:8080/datahub-webapp/v1
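Step 8 asks for a 32-digit key but does not say where it comes from. For a local test system, one option is to generate a random one. The sketch below assumes the key is meant to be 32 hexadecimal digits (128 bits), which the post does not state explicitly; `write_encryption_key` is a hypothetical helper, and the file name matches `datahub.encryption.key.path` above.

```python
import secrets
from pathlib import Path


def write_encryption_key(config_dir, filename="encryption-key.txt"):
    """Write a random 32-hex-digit key to the config folder and return it."""
    key = secrets.token_hex(16)  # 16 random bytes -> 32 hexadecimal digits
    path = Path(config_dir) / filename
    path.write_text(key, encoding="ascii")
    return key
```

Calling `write_encryption_key("<YOUR_PATH>/datahub6.2/config")` with your real path would create the key file next to local.properties.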

Step 10:

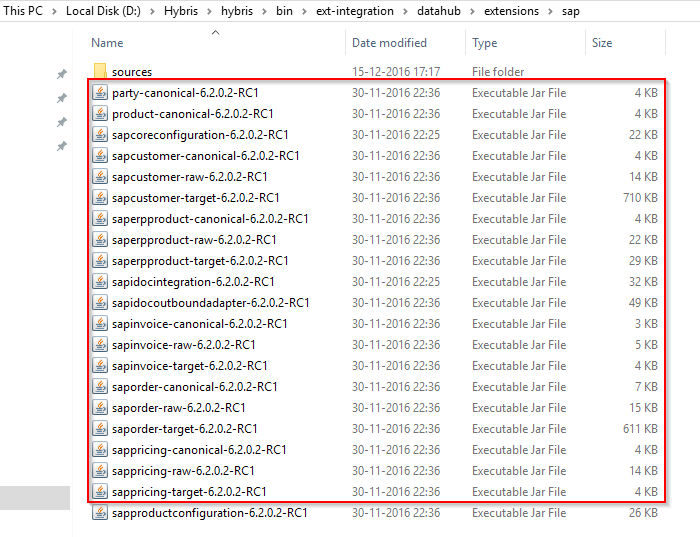

Go to the following path

<YOUR_PATH>\hybris\bin\ext-integration\datahub\extensions\sap

Step 11:

Copy the highlighted JAR files into the erp folder of datahub6.2.

Step 12:

Go to the others folder.

Download the MySQL connector ZIP file, extract it, and copy only the mysql-connector-java-5.1.30-bin.jar file into the others folder.

Step 13:

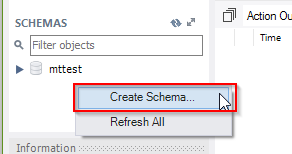

Log in to MySQL Workbench.

- Create a schema named datahub (the name used in the jdbcUrl in local.properties), as below

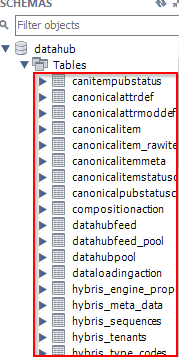

Step 14:

Step 15:

Start the Tomcat server.

Step 16:

Go to localhost:8080/datahub-webapp/v1/data-feeds

- To disable Spring Security, stop the server

Step 17:

Go to the following path

<YOUR_PATH>\apache-tomcat-7.0.73\apache-tomcat-7.0.73\webapps\datahub-webapp\WEB-INF

- Open the web.xml file and comment out the following code

Step 18:

Now start the server.

- Go to localhost:8080/datahub-webapp/v1/data-feeds

- The default feeds and pools will be displayed
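Instead of opening the URL in a browser, the same check can be scripted. This is a minimal sketch using only Python's standard library; `data_feeds_url` and `probe` are hypothetical helper names, and the base URL is the `datahub.server.url` value from local.properties.

```python
import urllib.error
import urllib.request

# Base URL taken from datahub.server.url in local.properties.
BASE_URL = "http://localhost:8080/datahub-webapp/v1"


def data_feeds_url(base_url=BASE_URL):
    """Build the data-feeds URL from the Data Hub base URL."""
    return base_url.rstrip("/") + "/data-feeds"


def probe(url, timeout=5):
    """Return the HTTP status code, or None if the server cannot be reached."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # the server answered, but with an error status
    except OSError:
        return None  # connection refused, timeout, or similar
```

Calling `probe(data_feeds_url())` returns `None` while Tomcat is down and an HTTP status code once the web application answers.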

Step 19:

The Data Hub tables will now be present in the schema that was created in Step 13.

In the next blog post, I will show how to load data into Data Hub using IDoc and CSV, and then how to send IDocs directly from SAP ERP to Hybris (Asynchronous Order Management).

Thanks for reading 🙂
