Cloud Integration - How to configure Transaction H...


This blog discusses how to configure transaction handling for JMS and JDBC transacted resources in your integration flow. It describes the available configuration options, the allowed combinations, and existing limitations, and it provides sample configurations that explain when to use which option and why.

Transaction Handling Configuration in Integration Flow

Many integration scenarios in Cloud Integration use transacted resources, such as data stores or message queues, that have to be executed in a single end-to-end transaction to ensure data consistency. Before describing the options, we need to understand the basics and why transaction handling is required at all.

Transactional Processing

Some flow steps and adapters use persistency to store data in the database or in JMS queues. To ensure this is done consistently for the whole process, a transaction handler is required that makes sure the whole transaction is either committed or, in case of an error, rolled back.

For example, in a simple scenario a variable is written during message processing, and afterwards some data is deleted from a data store in the same process. If an error occurs later in the processing, both steps are rolled back, so that neither is the variable stored nor is the data deleted from the data store. Without a transaction manager, each of these steps would have been committed individually at step level, even if the overall processing of the message ended with an error.
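The difference between one end-to-end transaction and step-level commits can be sketched with Python's built-in sqlite3 module. This is only a conceptual model under stated assumptions: Cloud Integration configures transactions in the modeler, not in code, and the tables `variables` and `datastore` as well as the functions `setup`, `process`, and `state` are hypothetical stand-ins for a global variable and a data store entry.

```python
import sqlite3
from typing import Tuple


def setup() -> sqlite3.Connection:
    # isolation_level=None: the sqlite3 module issues no implicit BEGIN,
    # so every statement commits on its own unless we open a transaction.
    conn = sqlite3.connect(":memory:", isolation_level=None)
    conn.execute("CREATE TABLE variables (name TEXT PRIMARY KEY, value TEXT)")
    conn.execute("CREATE TABLE datastore (entry_id TEXT PRIMARY KEY, payload TEXT)")
    conn.execute("INSERT INTO datastore VALUES ('msg-1', '<order/>')")
    return conn


def process(conn: sqlite3.Connection, end_to_end: bool, fail_later: bool) -> bool:
    """Write a variable, delete a data store entry, then possibly fail."""
    if end_to_end:
        conn.execute("BEGIN")  # one transaction for the whole process
    try:
        conn.execute("INSERT OR REPLACE INTO variables VALUES ('lastRun', 'x')")  # Write Variables
        conn.execute("DELETE FROM datastore WHERE entry_id = 'msg-1'")           # Data Store Delete
        if fail_later:
            raise RuntimeError("error in a later processing step")
    except RuntimeError:
        if end_to_end:
            conn.execute("ROLLBACK")  # neither step is persisted
        return False                  # without a transaction, both steps stay committed
    if end_to_end:
        conn.execute("COMMIT")
    return True


def state(conn: sqlite3.Connection) -> Tuple[int, int]:
    """Return (number of stored variables, number of data store entries)."""
    variables = conn.execute("SELECT COUNT(*) FROM variables").fetchone()[0]
    entries = conn.execute("SELECT COUNT(*) FROM datastore").fetchone()[0]
    return variables, entries
```

With `end_to_end=True` and a later failure, `state` reports no variable and the entry still present; with `end_to_end=False`, the variable is stored and the entry is gone although the message failed.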

The transaction handler can handle either a JMS transaction or a JDBC transaction, but not both. There is no configuration option for distributed transactions between JMS and JDBC resources in Cloud Integration.

JDBC Transacted Resources

In Cloud Integration, some flow steps and adapters use JDBC persistency, which requires a JDBC transaction handler to ensure transactional end-to-end processing. This applies to the following flow steps and adapters:

- Data Store Operations (Write, Delete)

- Write Variables (Local and Global)

- Aggregator

- XI Sender and Receiver Adapter using EO with Data Store as temporary storage (available as of the June 2018 release)

All of these flow steps and adapters execute transactions on the database. To ensure end-to-end consistency of the data processed in the scenario, configure a JDBC transaction manager for them. The XI adapter only needs a JDBC transaction manager if it is used on the receiver side in multicast scenarios (splitter, sequential multicast). Some specific considerations are described in section 'Recommendations and Restrictions' below.

JMS Transacted Resources

In Cloud Integration, some adapters use JMS persistency. These adapters are only available to Cloud Integration customers with an Enterprise License. This applies to the following adapters:

- JMS Sender and Receiver Adapter

- AS2 Sender Adapter

- XI Sender and Receiver Adapter using EO with JMS as temporary storage (available as of the June 2018 release)

For all of these adapters a JMS transaction manager can be useful, but in some cases it is not needed. The section 'Recommendations and Restrictions' walks through the different cases in more detail.

Configuration Options in Cloud Integration

In an integration flow, transaction handling can be configured in two places: in the main process and in local processes.

Configuration in Main Process

Normally, the end-to-end transaction for a scenario is configured in the main process to ensure that the transaction is either committed or rolled back as a whole.

Select the integration process to display its properties. On the Processing tab you can configure the transaction handling.

Three configuration options are available; the default value is Required for JDBC.

- Not Required: If you don't use any transactional resources in your scenario or don't need end-to-end transactional behavior, select this option.

- Required for JDBC: If you use flow steps with JDBC transacted resources, or several XI receiver adapters in a multicast scenario, that need an end-to-end transaction in the process, select this option.

- Required for JMS: If you use a JMS sender adapter, several JMS receiver adapters, or a JMS adapter in a Send step, select this option. This option, like the JMS adapter itself, is only available to Cloud Integration customers with an Enterprise License.

Configuration in Local Process

If local processes are used in the integration flow, transaction handling can additionally be configured at this level.

Select the local process to display its properties. On the Processing tab you can configure the transaction handling.

Three configuration options are available; the default value is From Calling Process.

- From Calling Process: If you want to inherit the setting from the main process, select this option. If the main process has no transaction handler defined, the local process does not get an end-to-end transaction either. If the main process has a transaction handler defined, the local process joins the transaction of the main process. For consistent end-to-end handling, this is the recommended option.

- Required for JDBC: If you use flow steps with JDBC transacted resources in the local process that need a transaction, it may be useful to select this option, for example, if the calling main process does not have transaction handling defined. Note that in this case the transaction is committed or rolled back after execution of the local process, not after execution of the main process. If the main process also has a JDBC transaction manager configured, this option is equivalent to From Calling Process: the transaction of the main process is joined.

- Required for JMS: If you use a JMS adapter in a Send step in the local process, it may be useful to select this option, for example, if the calling main process does not have transaction handling defined. Note that in this case the transaction is committed or rolled back after execution of the local process, not after execution of the main process. If the main process also has a JMS transaction manager configured, this option is equivalent to From Calling Process: the transaction of the main process is joined. This option, like the JMS adapter itself, is only available to Cloud Integration customers with an Enterprise License.

Important: It is not allowed to configure a JMS transaction in the main process and a JDBC transaction in a local process, or vice versa, because Cloud Integration does not support distributed transactions.
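The rules above for combining main-process and local-process settings can be summarized as a small decision function. This is a hypothetical Python model written for illustration, not part of any Cloud Integration API: `Tx`, `resolve_local`, and the returned labels are assumptions. It returns which transaction type applies to a local process and whether it is committed together with the main process ("main") or at the end of the local process ("local").

```python
from enum import Enum
from typing import Optional, Tuple


class Tx(Enum):
    NOT_REQUIRED = "Not Required"          # main process only
    JDBC = "Required for JDBC"
    JMS = "Required for JMS"
    FROM_CALLING = "From Calling Process"  # local process only (default)


MAIN_OPTIONS = {Tx.NOT_REQUIRED, Tx.JDBC, Tx.JMS}
LOCAL_OPTIONS = {Tx.FROM_CALLING, Tx.JDBC, Tx.JMS}
KIND = {Tx.JDBC: "JDBC", Tx.JMS: "JMS"}


def resolve_local(main: Tx, local: Tx) -> Tuple[Optional[str], Optional[str]]:
    """Return (transaction kind, where it is committed) for a local process.

    "main": the local process joins the main process transaction.
    "local": its own transaction ends when the local process ends.
    None: no end-to-end transaction.
    """
    if main not in MAIN_OPTIONS or local not in LOCAL_OPTIONS:
        raise ValueError("option not available at this level")
    if local is Tx.FROM_CALLING:
        if main is Tx.NOT_REQUIRED:
            return None, None          # no end-to-end transaction
        return KIND[main], "main"      # join the main process transaction
    if main is Tx.NOT_REQUIRED:
        return KIND[local], "local"    # transaction ends with the local process
    if main is local:
        return KIND[local], "main"     # equivalent to From Calling Process
    # JMS in main + JDBC in local (or vice versa) would need a distributed transaction.
    raise ValueError("mixing JMS and JDBC transactions is not allowed")
```

For example, `resolve_local(Tx.NOT_REQUIRED, Tx.JMS)` yields `("JMS", "local")`, while `resolve_local(Tx.JMS, Tx.JDBC)` raises a `ValueError`.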

Old Version of Processes without Transaction Handling Configuration

In the past, older versions of processes had no option to configure transaction handling at process level. This means that if you open existing integration flows and select the process or local process, you may not see the option to configure the transaction manager. In these versions, a JDBC transaction manager is always used.

You need at least version 1.1 of the process or local process to get the configuration options. Either add a new process to your integration flow and remodel it, or, to avoid remodeling the whole process, use the migration of the process or sub-process that is available with the 12-December-2017 update.

The migration is described in detail in the blog 'Versioning and Migration of Components of an Integration Flow'.

Recommendations and Restrictions

There are several important restrictions on configuring transactions. Some flow steps are not supported with transacted resources, some require transactions, and specific combinations are not allowed or not recommended. Carefully check the restrictions and recommendations listed below.

Data Store Operations and Write Variables

Data store operations and the writing of variables can benefit from a JDBC transaction manager, but can also be used without one. In that case, each database operation is committed at the level of the single step; no end-to-end transaction is held.

Note that the 'Delete After Fetch' option in data store Get and Select operations is always executed at the end of the processing: the message is deleted after successful processing, or not deleted if the message processing failed. This is independent of the configured transaction handling.

Aggregator

For the Aggregator flow step, a JDBC transaction handler is mandatory, because otherwise aggregations cannot be executed consistently. Therefore, you get a check error if you configure an Aggregator without a JDBC transaction manager.

JMS Receiver Adapter

If only one JMS Receiver channel is used, without a splitter or sequential multicast, no JMS transaction handler is required, because the JMS transaction can be committed directly. For such flows, configure no transaction or, if required, a JDBC transaction. Note, however: if a JDBC transaction manager is used in an integration flow with one JMS Receiver and a failure occurs during the final JDBC commit, the JMS transaction may already have committed the data, because distributed transactions between JMS and JDBC are not supported. This can lead to duplicate messages, because the message gets a failed status and is normally retried by the sender. Make sure your scenario can handle such duplicates, or do not mix JMS receivers with JDBC transacted resources in your integration flows.

If several JMS Receiver channels, or a sequential multicast or splitter followed by a JMS Receiver adapter, are used in one integration process, a JMS transaction handler ensures that the data is consistently updated in all JMS queues. If no JMS transaction handler is defined for these scenarios, a message processing error that occurs after some of the messages have already been written to a queue can cause a large number of duplicate messages.
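Why a splitter followed by a JMS receiver produces duplicates without a transaction handler can be shown with a toy model. The sketch below is plain Python, not a JMS client: `ToyQueue`, `split_and_send`, and `run_with_retry` are hypothetical, and a "transaction" is simulated by holding writes back until the end of the processing. The first attempt fails at the third split message and the sender then retries the whole message.

```python
from typing import List, Optional


class ToyQueue:
    """In-memory stand-in for a JMS queue (hypothetical, not a JMS client)."""

    def __init__(self) -> None:
        self.messages: List[str] = []


def split_and_send(queue: ToyQueue, items: List[str],
                   fail_at: Optional[int], transacted: bool) -> bool:
    """Splitter followed by a JMS receiver; returns False on an unhandled error."""
    staged: List[str] = []  # writes held back until the transaction commits
    for index, item in enumerate(items):
        if index == fail_at:
            # Unhandled error: staged writes are discarded (rollback),
            # non-transacted writes are already in the queue.
            return False
        if transacted:
            staged.append(item)
        else:
            queue.messages.append(item)  # committed on each receiver call
    queue.messages.extend(staged)  # commit at the end of the processing
    return True


def run_with_retry(items: List[str], transacted: bool) -> List[str]:
    queue = ToyQueue()
    split_and_send(queue, items, fail_at=2, transacted=transacted)     # first attempt fails
    split_and_send(queue, items, fail_at=None, transacted=transacted)  # sender retries
    return queue.messages
```

`run_with_retry(["a", "b", "c", "d"], transacted=False)` returns `["a", "b", "a", "b", "c", "d"]`, so the split messages written before the error are duplicated, whereas with `transacted=True` each split message appears once.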

If a JMS Receiver channel is used in a Send step, a JMS transaction handler is needed to ensure that the data is consistently updated in the JMS queue at the end of the processing. If an unhandled error occurs in the integration flow processing after the Send step, the whole transaction is rolled back.

If a JMS Sender channel and one or more JMS Receiver adapters are used in one integration flow, you can reduce the number of transactions used in the JMS instance by using a JMS transaction handler, because then only one transaction is opened for the whole processing. For such configurations, select the JMS transaction handler.

JMS, XI and AS2 Sender Adapter

Select the transaction manager as required by the scenario configured in your integration flow, keeping in mind the following additional points:

If you use flow steps with JDBC resources (data store, variables, aggregator) together with a JMS, XI, or AS2 sender, you may select the JDBC transaction manager. Note, however: if a failure occurs during the final JMS commit (removing the message from the queue), the JDBC transaction may already have committed the data, because distributed transactions between JMS and JDBC are not supported. This can lead to duplicate messages, because the message stays in retry in the JMS queue although the JDBC resources have already been updated. Make sure your scenario can handle such duplicates, or do not mix JMS, XI, or AS2 senders with JDBC transacted resources in your integration flows.

If a JMS, XI, or AS2 Sender channel is used in an integration flow, a JMS transaction handler can be useful in certain error cases, for example, if message processing fails and the message needs to be moved to its error queue. Moving the message in a single transaction ensures that the message is not duplicated if a disruptive event occurs between reading it from the processing queue and writing it to the error queue.

If a JMS, XI, or AS2 Sender channel and one or more JMS Receiver adapters are used in one integration flow, you can reduce the number of transactions used in the JMS instance by using a JMS transaction handler, because then only one transaction is opened for the whole processing. For such integration flows, select the JMS transaction handler.

XI Receiver Adapter

The XI Receiver adapter with Quality of Service Exactly Once can use either JMS or a data store for temporary message storage.

If only one XI Receiver channel is used, without a splitter or sequential multicast, no JMS or JDBC transaction handler is required, because the transaction can be committed directly. For such flows, configure no transaction or, if required, a JDBC transaction. Note, however: if a JDBC transaction manager is used in an integration flow with one XI Receiver that uses JMS storage and a failure occurs during the final JDBC commit, the JMS transaction may already have committed the data, because distributed transactions between JMS and JDBC are not supported. This can lead to duplicate messages, because the message gets a failed status and is normally retried by the sender. Make sure your scenario can handle such duplicates, or do not mix XI receivers using JMS storage with JDBC transacted resources in your integration flows.

If several XI Receiver adapters are used in a sequential multicast, or a splitter followed by an XI Receiver adapter is used in one integration process, a JMS or JDBC transaction handler (depending on the storage option used in the XI adapter) ensures that the data is consistently updated in all JMS queues or data stores. If no transaction handler is defined for these scenarios, a message processing error that occurs after some of the messages have already been written to a queue or data store can cause a large number of duplicate messages.

If an XI Receiver channel is used in a Send step (available with the 30-September-2018 update), a JMS or JDBC transaction handler (depending on the storage option used in the XI adapter) is needed to ensure that the data is consistently updated in the JMS queue or data store at the end of the processing. If an unhandled error occurs in the integration flow processing after the Send step, the whole transaction is rolled back.

If a JMS Sender channel and one or more XI Receiver adapters with a JMS queue as temporary storage are used in one integration flow, you can reduce the number of transactions used in the JMS instance by using a JMS transaction handler, because then only one transaction is opened for the whole processing. For such configurations, select the JMS transaction handler.

If the error occurs while sending the message to the receiver backend, no rollback is done, because the whole processing up to storing the message in the temporary storage was successful. A retry is done only from the temporary storage, and this processing runs in a new transaction.

Splitter

General and iterating splitters with Parallel Processing switched on are not allowed if transacted resources follow the splitter, neither with JMS nor with JDBC transactions. If a splitter is needed with either a JMS or a JDBC transaction, do not select Parallel Processing.

Multicast

Parallel multicast is not allowed with transacted resources within the multicast branches, neither with JMS nor with JDBC transactions. If a multicast is needed with either a JMS or a JDBC transaction, use a sequential multicast.
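The restrictions for Aggregator, Splitter, and Multicast can be expressed as a simplified design-time check. The sketch below is a hypothetical Python model, not the actual check implemented in Cloud Integration: the classes `Step` and `Process`, the flag `transacted_resources` (meaning transacted resources follow the step or sit in its branches), and the error texts are all assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import List

NONE = "Not Required"
JDBC = "Required for JDBC"
JMS = "Required for JMS"


@dataclass
class Step:
    kind: str                           # e.g. "Aggregator", "Splitter", "Multicast"
    parallel: bool = False              # Parallel Processing / parallel multicast
    transacted_resources: bool = False  # transacted resources after or inside the step


@dataclass
class Process:
    transaction: str                    # one of NONE, JDBC, JMS
    steps: List[Step] = field(default_factory=list)


def check(process: Process) -> List[str]:
    """Return the check errors for a simplified process model."""
    errors: List[str] = []
    transacted = process.transaction in (JDBC, JMS)
    for step in process.steps:
        if step.kind == "Aggregator" and process.transaction != JDBC:
            errors.append("Aggregator requires a JDBC transaction handler")
        if (step.kind in ("Splitter", "Multicast") and step.parallel
                and transacted and step.transacted_resources):
            errors.append(f"{step.kind}: parallel processing not allowed with transacted resources")
    return errors
```

In this model, a parallel splitter under a JMS transaction is flagged, while the same splitter with Parallel Processing switched off passes the check.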

Guideline for Using Transactions

There are four important guidelines to follow:

1. Configure the transaction as short as possible!

Transactions always consume resources on the persistency used, because the transaction needs to be kept open during the whole processing it is configured for. When configured in the main process, the transaction is opened at the beginning of the overall process and kept open until the whole processing ends. In complex scenarios and/or with large messages, this may cause transaction log issues on the database or exhaust the number of available connections.

To avoid this, keep transactions as short as possible!

2. Configure the transaction as long as needed for a consistent runtime execution!

As already explained, for end-to-end transactional behavior you need to make sure that all steps that belong together are executed in one transaction, so that data is either persisted or completely rolled back in all transactional resources.

3. Configure only one transaction if multiple JMS components are used!

As already explained, if a JMS, XI, or AS2 Sender channel and one or more JMS Receiver adapters are used in one integration flow, you can reduce the number of transactions used in the JMS instance by using a JMS transaction handler, because then only one transaction is opened for the whole processing.

4. Avoid mixing JDBC and JMS transactions!

Cloud Integration does not provide distributed transactions, so JMS and JDBC operations cannot be executed together in one transaction. In error cases, the JDBC transaction may already be committed; if the JMS transaction cannot be committed afterwards, the message either stays in the inbound queue or is not committed to the outbound queue. In such cases the message is normally retried from the inbound queue, the sender system, or the sender adapter, which can cause duplicate messages.

Either the backend must be able to handle duplicates, or you must not mix JMS and JDBC resources.
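To illustrate guideline 4, here is a toy Python model (not Cloud Integration code) of a JMS sender combined with a JDBC data store write. The names `World`, `process_once`, and `run` are hypothetical. The JDBC side commits first; the JMS commit that removes the message from the inbound queue then fails once, so the message is retried and the JDBC effect is applied twice. The `idempotent` flag shows one possible way a backend can tolerate such duplicates, by skipping a row it has already stored.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class World:
    inbound: List[str] = field(default_factory=lambda: ["order-42"])  # JMS inbound queue
    datastore_rows: List[str] = field(default_factory=list)           # JDBC resource


def process_once(world: World, jms_commit_fails: bool, idempotent: bool) -> bool:
    msg = world.inbound[0]  # read from the queue; not removed until the JMS commit
    row = f"processed {msg}"
    # 1) The JDBC transaction commits first (e.g. a data store write).
    if not (idempotent and row in world.datastore_rows):
        world.datastore_rows.append(row)
    # 2) The JMS transaction commits afterwards; there is no common commit.
    if jms_commit_fails:
        return False  # message stays in the inbound queue and is retried
    world.inbound.pop(0)
    return True


def run(idempotent: bool) -> List[str]:
    world = World()
    process_once(world, jms_commit_fails=True, idempotent=idempotent)  # first attempt
    while world.inbound:
        process_once(world, jms_commit_fails=False, idempotent=idempotent)  # retry
    return world.datastore_rows
```

`run(idempotent=False)` ends with two identical rows for `order-42`; `run(idempotent=True)` ends with one.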

Check out the sample configurations below for more information.

Sample Scenarios using Data Store Operations

In the following section, we show some simple sample configurations using JDBC transacted resources and explain the recommended settings.

Scenario 1: Using Data Store in Main Process

This scenario uses a Timer to trigger the processing and a data store Get step in the main process; then a Script is executed, and afterwards the message is deleted from the data store using a Delete step and sent to the receiver. To ensure that the message is only deleted from the data store if it is successfully sent to the receiver, use the JDBC transaction handler for this process.

Without a JDBC transaction handler, the message would be deleted from the data store when the data store Delete step is executed, not at the end of the whole process.

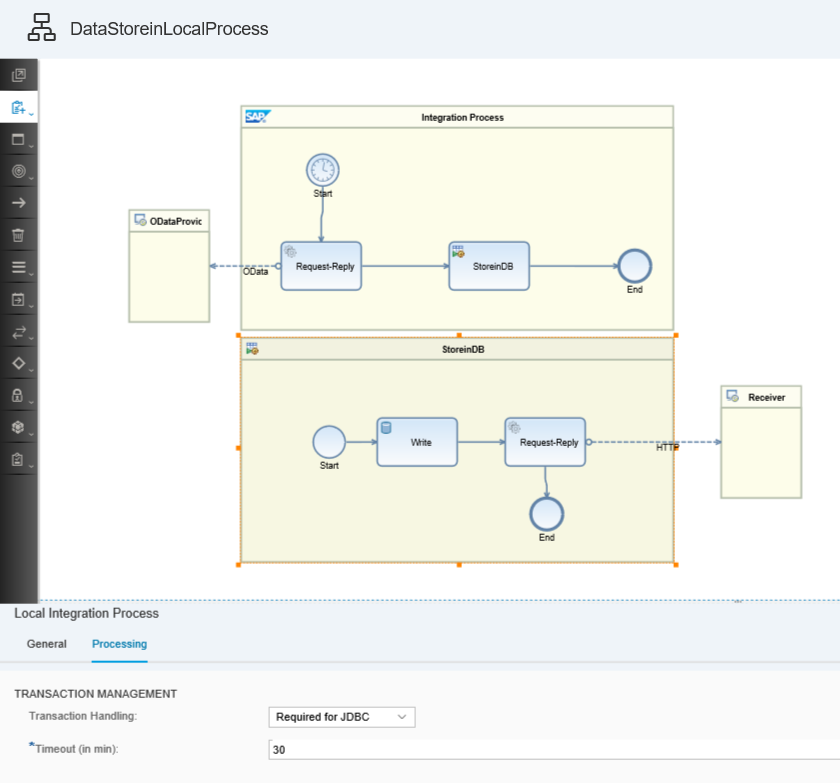

Scenario 2: Using Data Store in Local Process

In this scenario, the timer is followed by an OData call to fetch some data, and afterwards a local process is called in which the message is stored in a data store via a data store Write step and a confirmation message is sent to the receiver system. Both steps, including the call to the receiver system, are executed in the same local process.

For such a scenario, you can configure the JDBC transaction either in the main process or in the local process. The difference is that, when configured in the main process, the database transaction is opened at the beginning of the overall process and kept open until the whole processing ends. In complex scenarios and/or with large messages, this may cause transaction log issues on the database, which should be avoided.

The recommendation is therefore to:

- ensure that all steps that need to run in one transaction, the data store operation and the call to the receiver, are contained in the local process,

- select Required for JDBC in the local process, and

- select Not Required in the main process.

The first steps do not need to be part of the transaction, because nothing has been persisted yet, and in case of an error the next timer start event triggers the processing again.

With this configuration the transaction on the database is kept open only as long as needed and not for the whole processing of the message.
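The recommended setup for Scenario 2 can be sketched with Python's sqlite3 module as a stand-in for the Cloud Integration database. This is an illustrative model under assumptions: `make_db`, `local_process`, and `main_process` are hypothetical, and `fetch` and `send_to_receiver` are placeholders for the OData call and the receiver call. The main process ("Not Required") runs the fetch outside any transaction, while the local process ("Required for JDBC") opens the transaction, writes to the data store, calls the receiver, and commits or rolls back.

```python
import sqlite3
from typing import Callable


def make_db() -> sqlite3.Connection:
    # isolation_level=None: no implicit transactions; we issue BEGIN ourselves.
    conn = sqlite3.connect(":memory:", isolation_level=None)
    conn.execute("CREATE TABLE datastore (payload TEXT)")
    return conn


def local_process(conn: sqlite3.Connection, payload: str,
                  send_to_receiver: Callable[[str], None]) -> None:
    """Local process with 'Required for JDBC': the transaction spans only this process."""
    conn.execute("BEGIN")
    try:
        conn.execute("INSERT INTO datastore (payload) VALUES (?)", (payload,))  # data store Write
        send_to_receiver(payload)  # confirmation call to the receiver system
        conn.execute("COMMIT")
    except Exception:
        conn.execute("ROLLBACK")  # the write is undone if the receiver call fails
        raise


def main_process(conn: sqlite3.Connection, fetch: Callable[[], str],
                 send_to_receiver: Callable[[str], None]) -> None:
    """Main process with 'Not Required': no transaction is open during the fetch."""
    payload = fetch()  # stand-in for the OData call
    local_process(conn, payload, send_to_receiver)
```

If the receiver call raises, the data store write is rolled back and the error propagates, so the next timer run can start over; `conn.in_transaction` is `False` during the fetch and `True` only inside the local process.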

Sample Scenarios using JMS Adapter

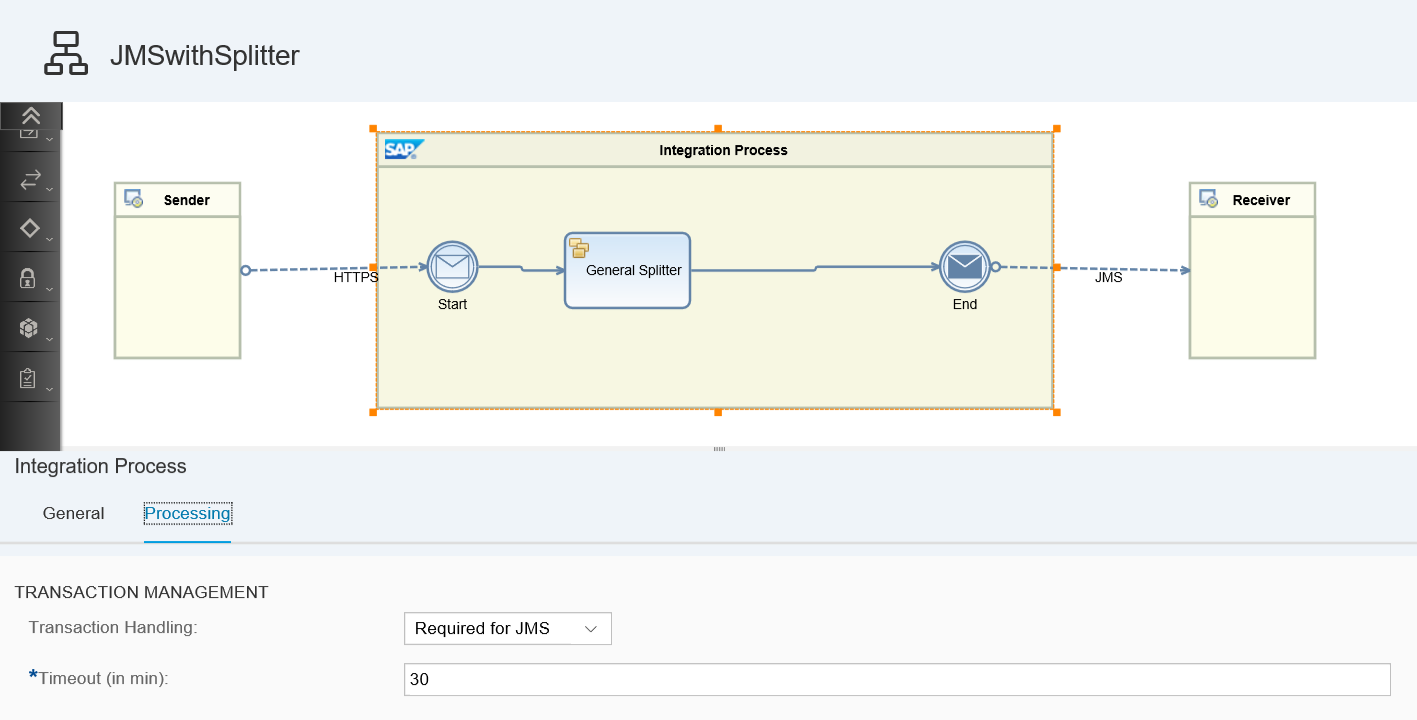

Scenario 3: Using Splitter with JMS Receiver Adapter in Main Process

In this scenario, a Splitter step followed by a JMS receiver adapter is configured in the main process. To ensure that the split messages are only persisted in the message queue if the whole message was split successfully, use a JMS transaction handler for this process.

Furthermore, make sure that Parallel Processing is not selected in the splitter.

Without a JMS transaction handler, the single split messages would be saved to the message queue on each JMS receiver call, not at the end of the whole processing. This could lead to a state in which some split messages are already in the queue but the overall process ended with an error, so the whole original message is reprocessed, leading to duplicate messages.

Scenario 4: Using JMS Adapter in Send Step in Local Process

In this scenario, a JMS receiver is used in a Send step in the local process to store messages in a JMS queue. This local process is reused at several places in the main process. To ensure that the message is sent to the queue only if the overall processing in the main process ends successfully, an end-to-end JMS transaction is needed, so in the main process select the JMS transaction handler.

In the local process, join the transaction of the main process.

Without a JMS transaction handler, messages could be persisted in the JMS queue in error cases even if the call to the receiver was not successful and the overall message status is Failed.

Scenario 5: Using JMS Sender and Receiver Adapter in Main Process

A sample scenario using the JMS sender and receiver adapters is described in detail in the blog 'Configure Asynchronous Messaging using JMS Adapter'.

Scenario 6: Using XI Adapter in Main Process

A sample scenario using the XI adapter is described in detail in the blogs 'Configuring Scenario Using the XI Receiver Adapter' and 'Configuring Scenario Using the XI Sender Adapter'.
