How to perform Data Aging in S/4HANA

BJarkowski

10-03-2016

9:03 AM

Overview

Recently I experimented with the Data Aging concept in an S/4HANA system, and today I would like to show you how to perform the initial configuration based on SFLIGHT data. This sample data model delivered by SAP lets us understand the data aging process without having to worry about creating sample business documents, because data generation is simple and straightforward.

A system that has been running for months or years needs proper maintenance. We need to ensure that it is available during business hours and that performance stays at the required level. The system grows together with your company, and at some point you have to decide what to do with data that is no longer used. So far the best solution has been data archiving, which allows us to store data on separate storage outside the SAP system.

Data aging is a new way to manage outdated information: it moves sets of data within the database according to a data temperature. Hot data resides in the current area of the database, while warm / cold data is moved to the historical area.

System preparation and SFLIGHT data generation

For data aging with SFLIGHT data, SAP has prepared a separate set of tables:

DAAG_SPFLI – Flight schedule

DAAG_SFLIGHT – Flights

DAAG_SBOOK – Single Flight Booking

You can either generate the data using SAPBC_DATA_GENERATOR and copy the content of the tables, or copy the whole report and change the target tables directly in the source code (I used the second approach).

Update:

You can copy the SFLIGHT model data to the DAAG* tables using report RDAAG_COPY_SFLIGHT_DATA. Thanks to guenther.hasel for sharing this tip!

We can check the table contents directly in SE16N:

When our data is ready, we can prepare the system to support Data Aging. First, we need to enable a new profile parameter:

The second preparation step is to activate the business function DAAG_DATA_AGING in SFW5.

Creating partitions

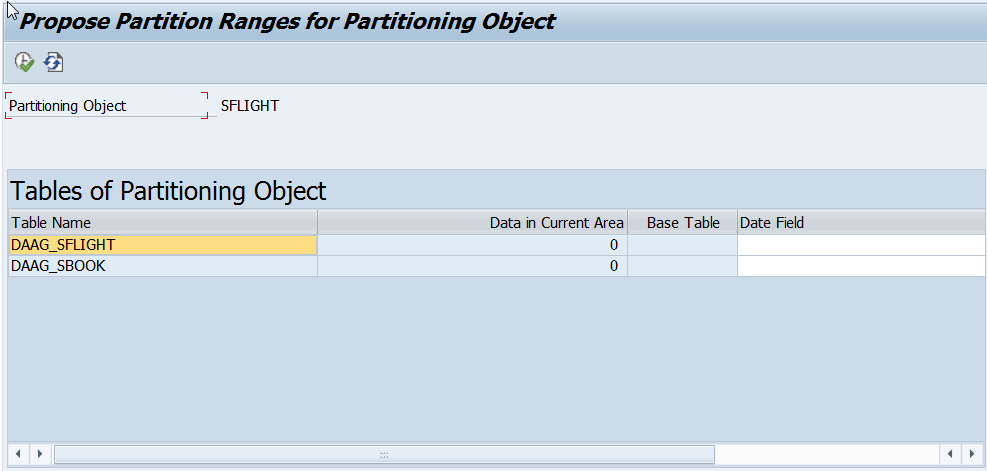

The first Data Aging transaction we are going to use is DAGPTM – Manage Partitions, where we can create and modify partitions for all partitioning objects available in the system. A partitioning object is a set of tables that are partitioned together. In our case it consists of two tables, DAAG_SBOOK and DAAG_SFLIGHT:

Currently there are no partitions defined in our system for SFLIGHT. There are two ways of creating partitions: we can do it manually or use an additional tool delivered by SAP, Partition Proposal.

To enter Partition Proposal, click on the desired partitioning object and choose “Propose Partition Ranges” from the menu (or press F9).

Depending on the partitioning object settings, the Date Field value may already be filled. In our case let’s choose FLDATE as the date field for DAAG_SFLIGHT and DAAG_SBOOK. After execution, we can see the Proposed Partition Ranges and the Data Volume for each year / month.

In Proposed Partition Ranges we can easily simulate the creation of new partitions, and the tool shows the projected data volume for each of them.
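To get a feel for what the proposal tool reports, here is a minimal Python sketch that counts rows per month and per year of a date field. It is only an illustration of the idea, not how the SAP tool is implemented; the function names and the sample FLDATE values are hypothetical.

```python
from collections import Counter
from datetime import date


def volume_by_month(dates):
    """Count rows per (year, month) of the chosen date field."""
    counts = Counter((d.year, d.month) for d in dates)
    return dict(sorted(counts.items()))


def volume_by_year(dates):
    """Count rows per calendar year of the chosen date field."""
    counts = Counter(d.year for d in dates)
    return dict(sorted(counts.items()))


# Hypothetical FLDATE values standing in for rows of DAAG_SFLIGHT.
sample_fldates = [
    date(2015, 3, 14),
    date(2015, 3, 20),
    date(2015, 11, 2),
    date(2016, 1, 5),
]

print(volume_by_month(sample_fldates))
print(volume_by_year(sample_fldates))
```

Looking at the yearly totals next to the monthly ones is a quick way to judge whether yearly partition ranges would be balanced or whether a finer split is worth simulating.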

This time we will create the partitions manually. Go back to Manage Partitions and click the Period button.

After confirming the dialog box we can run the partitioning.

The background job needed only a few seconds to create the partitions.

You can see there is one partition created without a Start and End date defined. This partition stores current (hot) data; the others are for the historical area.
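The layout described above can be sketched in Python as a simple routing rule. This is a conceptual model only, under two assumptions that are not taken from the screenshots: each row carries an aging date that is empty for hot data, and range end dates are exclusive. The names `partition_for`, `CURRENT` and `yearly_ranges` are hypothetical.

```python
from datetime import date

CURRENT = "CURRENT"  # hypothetical label for the partition without start/end date


def partition_for(aging_date, ranges):
    """Return the partition a row belongs to.

    aging_date: None for hot data, otherwise the date assigned by the aging run.
    ranges: list of (start, end) tuples; end is treated as exclusive (assumption).
    """
    if aging_date is None:
        return CURRENT
    for start, end in ranges:
        if start <= aging_date < end:
            return f"{start.isoformat()}..{end.isoformat()}"
    raise ValueError(f"no historical partition covers {aging_date}")


# Hypothetical yearly ranges, similar to what the Period button creates.
yearly_ranges = [
    (date(2015, 1, 1), date(2016, 1, 1)),
    (date(2016, 1, 1), date(2017, 1, 1)),
]

print(partition_for(None, yearly_ranges))
print(partition_for(date(2015, 6, 1), yearly_ranges))
```

The `ValueError` branch mirrors why the ranges should be defined before the aging run: a row whose aging date falls outside every defined range has no historical partition to land in.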

When we expand the partitioning object and select a table, we can click the ‘eye’ button to check how much data is in the current and historical areas. As we haven’t executed aging yet, all data resides in the current area.

Let’s have a look at what happened in the HANA database. Choose the DAAG_SFLIGHT table and display its runtime information:

We can see our table is partitioned at database level according to the requirements defined in the previous steps. At the moment there are no records in any partition other than the current one, which is expected. We can also check whether a partition is loaded into memory (last column).

Now we also need to decide what the residence time for our documents should be. We can set the value in table DAAG_RT_SFLIGHT. It’s quite flexible: we can set different values based on Carrier and/or Flight Connection. For our testing scenario I want all objects older than one day to be moved to the historical area.
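The residence time logic can be illustrated with a short Python sketch. It assumes that the most specific rule wins (carrier plus connection over carrier only over a generic entry) and that a flight becomes eligible once its flight date plus the residence time lies in the past. Both are assumptions made for illustration, not a description of the delivered implementation class; the rule values and function names are hypothetical.

```python
from datetime import date, timedelta

# Hypothetical residence time rules; None acts as a wildcard.
RULES = [
    {"carrid": None, "connid": None, "days": 1},
    {"carrid": "LH", "connid": None, "days": 30},
    {"carrid": "LH", "connid": "0400", "days": 365},
]


def residence_days(carrid, connid, rules):
    """Pick the residence time of the most specific matching rule."""
    best, best_score = None, -1
    for rule in rules:
        if rule["carrid"] not in (None, carrid):
            continue
        if rule["connid"] not in (None, connid):
            continue
        # One point per field that is matched explicitly rather than by wildcard.
        score = (rule["carrid"] is not None) + (rule["connid"] is not None)
        if score > best_score:
            best, best_score = rule, score
    if best is None:
        raise LookupError(f"no residence rule for {carrid}/{connid}")
    return best["days"]


def is_eligible(fldate, carrid, connid, today, rules):
    """True if the flight date plus its residence time lies before today."""
    return fldate + timedelta(days=residence_days(carrid, connid, rules)) < today


today = date(2016, 10, 3)
print(is_eligible(date(2016, 9, 1), "AA", "0017", today, RULES))
print(is_eligible(date(2016, 9, 1), "LH", "0400", today, RULES))
```

With a single generic entry of one day, as used in this test scenario, practically every flight in the generated data qualifies for the historical area.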

Activating Data Aging object

To display all data aging objects, go to transaction DAGOBJ.

When we double-click the object name, we can see details such as the participating tables or the implementation class (which actually drives the data aging run by selecting the objects to be moved to another partition).

To activate the object, select DAAG_SFLIGHT on the previous screen and click the Activate button in the menu.

During activation the system runs various checks, such as data object consistency or the existence of partitions at database level.

Data aging run

Once our partitions are created and the data aging object is activated, we can execute the actual Data Aging run. To do that, enter transaction DAGRUN.

Now we need to define a data aging group for our objects. In the menu select Goto -> Edit data aging groups. On the screen create the following entry:

Once saved, go to Data Aging Objects and select the DAAG_SFLIGHT model:

If you are not able to save your entries and an error message says “DAAG_SFLIGHT is not an application data aging object”, one correction is needed. Update table DAAG_OBJECTS by setting DAAG_OBJ_TYPE = “A” for data object DAAG_SFLIGHT.

Finally, we have finished the preparation steps and can schedule the Data Aging run.

After we confirm the dialog box, the job will start at the chosen date / time.

When it’s done, we will find a green light in the status column.

In the statistics for the Data Aging run we can see that 66 308 rows were processed:

Let’s see what happened to our data. Go to Manage Partitions to display Hot and Cold Data for Partitions of Table DAAG_SBOOK.

We can also go to HANA Studio and check Runtime Information for table

Success! Our data is now aged!

Standard SAP records have this functionality already implemented, but what happens if we want to access the historical data in our custom reports? In SE16N we can see only the current data:

Based on a blog post, I was able to write a small report that counts rows after setting the data temperature.
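The original report is not reproduced here. As a stand-in, the following Python sketch models the effect of a data temperature on what a query sees. It assumes the commonly described semantics: without a temperature only hot rows are visible, and with a temperature date the hot rows plus all cold rows whose aging date is on or after that date are visible. The function and the sample rows are hypothetical and do not call any SAP API.

```python
from datetime import date


def visible_rows(rows, temperature=None):
    """Return the keys visible for a given data temperature.

    rows: list of (key, aging_date); aging_date None means hot data.
    temperature: None reads only hot data; a date additionally reads
    cold rows whose aging_date is on or after it (assumed semantics).
    """
    result = []
    for key, aging_date in rows:
        if aging_date is None:
            result.append(key)  # hot data is always visible
        elif temperature is not None and aging_date >= temperature:
            result.append(key)  # cold data inside the requested window
    return result


# Hypothetical bookings: one hot row and two aged rows.
bookings = [
    ("B1", None),
    ("B2", date(2016, 5, 1)),
    ("B3", date(2015, 3, 1)),
]

print(len(visible_rows(bookings)))                    # default: current data only
print(len(visible_rows(bookings, date(2016, 1, 1))))  # hot plus data aged since 2016
print(len(visible_rows(bookings, date.min)))          # everything
```

This also explains the SE16N observation above: a tool that does not set a temperature behaves like the first call and counts only the current area.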
