Handling Low Latency Scenarios with PI

Scenario

I was involved in a very challenging project where I had to deal with real-time scenarios under low latency constraints. This blog assumes you are working on SAP PI 7.1 EHP1, since that release enables ABAP proxy processing via the SOAP adapter. The actors involved in the scenarios are SAP EWM, SAP PI and a Java controller for pallet movement. Once the main process is started, messages are exchanged between the actors continuously, and each message created by a sender actor must be processed by the receiver actor within a maximum time frame. Any message that misses this deadline is discarded from an application perspective and must be sent again. There is no need to persist any message, and restarting messages after a failure is not required.

The turnaround time, measured from the moment the sender actor sends a message until its application reply message has been processed, must be less than 500 ms.
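The deadline rule above can be sketched in a few lines of Java. This is only an illustration under my own assumptions: the class name `TurnaroundTracker`, its methods and the message IDs are hypothetical and are not taken from the project code. The sender records when a message leaves, and when the application reply arrives it checks whether the budget was met; a late reply is treated as discarded.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Hypothetical helper: tracks outstanding requests and flags late replies. */
final class TurnaroundTracker {
    private final long budgetNanos;
    private final Map<String, Long> sentAt = new ConcurrentHashMap<>();

    TurnaroundTracker(long budgetMillis) {
        this.budgetNanos = budgetMillis * 1_000_000L;
    }

    /** Record the moment a request leaves the sender (a System.nanoTime()-style value). */
    void onSend(String messageId, long nowNanos) {
        sentAt.put(messageId, nowNanos);
    }

    /**
     * Returns true if the reply arrived within the budget.
     * A late or unknown reply returns false: the caller discards it and sends again.
     */
    boolean onReply(String messageId, long nowNanos) {
        Long start = sentAt.remove(messageId);
        return start != null && (nowNanos - start) < budgetNanos;
    }
}
```

In a real sender the clock values would come from `System.nanoTime()`; they are passed in as parameters here only to keep the sketch easy to test.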

Solutions

I want to share all the solutions I worked on, to give a wider overview of this topic and of the technology approach finally chosen. For the tests I worked on a PI environment with oversized hardware and without any additional message load. On the sender side I developed an ABAP proxy method that sends out messages via proxy after filling a payload with a single field. Messages arriving in PI are simply routed on without any payload transformation, and the quality of service is Exactly Once. The goal is to process messages as fast as possible within the time constraint.

The Java Controller was developed with Java 1.6, whose integrated Web Services feature allows easy and fast web service (server/client) development. This blog only covers how to deal with low latency scenarios; it does not describe the code development. The Java Controller processes messages within 10-15 ms, so I assume this component adds no significant latency to the complete scenario. The SAP EWM and SAP PI systems were tuned in order to get the best results from the systems involved.

The messages exchanged in this scenario are asynchronous: EWM or the Java Controller sends a message and processes the application reply without any blocking task. The sender actor expects an application reply from the receiver actor for each message, but in the meantime other types of messages can be exchanged. From a PI perspective there is one scenario from EWM to the Java Controller and one from the Java Controller to EWM.

Solution 1: ABAP Proxy - Integration Server - HTTP Adapter (Asynchronous)

This solution is handled by the Integration Server, and messages are processed only on the ABAP stack. The Java Controller is designed to work as an HTTP proxy server, so it exposes one or more HTTP communication channels to post and get data. Unfortunately this solution was not fast enough: 1200 ms is the average turnaround time when the system is working exclusively on this scenario. The turnaround time is measured by an EWM standard transaction as the difference between the time the message is sent from EWM and the time its application reply message, sent from the Java Controller, has been processed. After analyzing the Performance Header section of the messages, I discovered that most of the time was spent in the qRFC schedulers of both EWM and PI, that is, the time needed to get a message from the queue and dispatch it. Setting a proper filter for queue prioritization (transaction SXMB_ADM) gave no significant improvement in performance either.
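To give an idea of what an HTTP endpoint on the controller side can look like, here is a minimal sketch based on the HTTP server that ships with the JDK (`com.sun.net.httpserver`). It is not the project's controller: the class name `ControllerHttpEndpoint`, the `/pallet` path and the `ACK` reply format are assumptions made for illustration, and `readAllBytes()` needs Java 9 or later, so it would not compile on the Java 1.6 used in the project.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetAddress;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;

/** Hypothetical stand-in for the controller's HTTP endpoint; not the project's code. */
final class ControllerHttpEndpoint {
    private final HttpServer server;

    ControllerHttpEndpoint(int port) throws IOException {
        // Port 0 lets the OS pick a free port, which is convenient for local tests.
        server = HttpServer.create(
                new InetSocketAddress(InetAddress.getLoopbackAddress(), port), 0);
        // "/pallet" is an assumed path, chosen only for this sketch.
        server.createContext("/pallet", exchange -> {
            // Read the posted payload fully, then acknowledge it right away.
            byte[] payload = exchange.getRequestBody().readAllBytes();
            byte[] ack = ("ACK " + payload.length).getBytes(StandardCharsets.UTF_8);
            exchange.sendResponseHeaders(200, ack.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(ack);
            }
        });
    }

    void start() { server.start(); }

    int port() { return server.getAddress().getPort(); }

    void stop() { server.stop(0); }
}
```

The handler does as little as possible before answering, which matches the idea that the controller itself should add only a few milliseconds to the turnaround time.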

Solution 2: ABAP Proxy (SOAP) - Advanced Adapter Engine - SOAP Adapter (Asynchronous)

Solution 2 was designed to bypass the PI qRFC scheduler and to work only on the Advanced Adapter Engine. For this reason the Java Controller was changed into a SOAP web server application, and the HTTP adapter (ABAP) was replaced by the SOAP adapter (Java). On the EWM side, the ABAP proxy messages are dispatched via the SOAP adapter using the XI protocol, which is enabled by PI 7.1 EHP1. To configure both scenarios (outbound and inbound) I created two Integrated Configuration objects (ICO) to enable a faster asynchronous solution. Unfortunately solution 2 was not fast enough either: 700 ms is the average turnaround time. To enhance performance I also followed the tuning approach described well in Mike Sibler's blog, Tuning the PI/PO Messaging System Queues, which improved performance even though it did not remove the qRFC scheduler limitation. To summarize, the lesson learned is that the qRFC scheduler is designed to maximize throughput, not to minimize latency!

I observed that when EWM has to manage several messages, the qRFC scheduler has a serious impact on overall performance: the retention time inside a message queue is not uniform, and it increases significantly as the number of qRFC queues increases. The message pipeline step that contains the time spent inside a queue is DB_ENTRY_QUEUING. The picture below shows the difference in time between messages sent from EWM; it was clear that this approach could never meet the project requirement either. The only way forward was to remove further persistence steps, so I turned to a synchronous solution.
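The effect of the number of queues on waiting time can be illustrated with a deliberately simple model. This is a toy sketch and not the real qRFC scheduler algorithm: I assume one message waiting at the head of each queue, a scheduler that visits the queues one after another, and a fixed dispatch cost per visit. The class name `QueueWaitModel` and the 50 ms figure are hypothetical.

```java
/** Toy round-robin model; NOT the real qRFC scheduler algorithm. */
final class QueueWaitModel {
    /**
     * One message sits at the head of each of `queues` queues. The scheduler
     * visits the queues in order and spends `dispatchMillis` on each visit.
     * Returns how long each message waits before its own dispatch starts.
     */
    static long[] waitTimes(int queues, long dispatchMillis) {
        long[] waits = new long[queues];
        for (int i = 0; i < queues; i++) {
            waits[i] = i * dispatchMillis; // i earlier queues must be served first
        }
        return waits;
    }

    /** Arithmetic mean of the given values, or 0.0 for an empty array. */
    static double mean(long[] values) {
        long sum = 0;
        for (long v : values) {
            sum += v;
        }
        return values.length == 0 ? 0.0 : (double) sum / values.length;
    }
}
```

With an assumed dispatch cost of 50 ms, `QueueWaitModel.waitTimes(4, 50)` gives waits of 0, 50, 100 and 150 ms (mean 75 ms), while eight queues give a mean of 175 ms and a worst case of 350 ms. Even in this simplified picture the wait is not uniform across messages and grows with the number of queues, while the total work done per cycle stays the same.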

Solution 3: ABAP Proxy (SOAP) - Advanced Adapter Engine - SOAP Adapter (Synchronous)

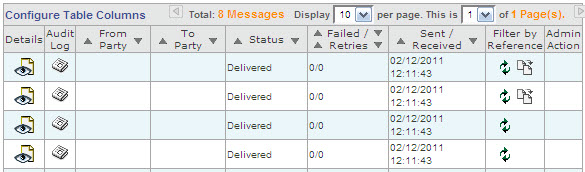

The reason for choosing the synchronous approach was to reduce the number of persistence steps. An interesting point is that, apart from changing the mode attribute of the Service Interface in the ESR to synchronous, there was no need to adjust the developments on either the EWM side or the Java Controller side. The picture below shows two messages delivered from EWM to the Java Controller and vice versa, with the two response messages generated as SOAP responses.

The turnaround time with this approach is 400 ms, finally meeting the project requirement.
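On the caller side, a synchronous request with a hard time budget can be sketched as follows. This is only an illustration under my own assumptions, not the EWM or controller code: the class `DeadlineCall` and its method `callWithin` are hypothetical, and the `Callable` stands in for whatever blocking request/response call is actually made. If no reply arrives within the budget, the call is cancelled and the caller is expected to discard the message and send it again.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

/** Hypothetical helper: runs a blocking call under a time budget. */
final class DeadlineCall {
    /**
     * Runs a blocking request/response call and returns its result,
     * or null if no reply arrived within budgetMillis.
     */
    static <T> T callWithin(ExecutorService pool, Callable<T> call, long budgetMillis) {
        Future<T> future = pool.submit(call);
        try {
            return future.get(budgetMillis, TimeUnit.MILLISECONDS);
        } catch (TimeoutException e) {
            future.cancel(true); // interrupt the late call
            return null;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt flag
            future.cancel(true);
            return null;
        } catch (ExecutionException e) {
            throw new IllegalStateException("call failed", e.getCause());
        }
    }
}
```

A caller would pass the 500 ms budget, for example `DeadlineCall.callWithin(pool, () -> sendAndWait(msg), 500)`, where `sendAndWait` is a placeholder for the real synchronous call.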

Summary and Improvements

I hope this blog helps you get a qualitative, general overview of this topic. Frankly, I do not think PI is meant to work as a real-time system integrator; its main goal is to handle a huge volume of messages delivered within a reasonable time. The results I achieved also include network delay, which can be significant in such a setup and must be taken into account.

Despite these network delay peaks, the solution was tested with successful results. Before adopting it as a stable productive solution, however, one more change was needed: not in the development done, but in the PI runtime components. After stress test sessions, the scenarios were moved from the Central Adapter Engine to a Decentral Adapter Engine. When PI has to manage a heavy load of master data messages (e.g. MATMAS) involving the Integration Server and the Advanced Adapter Engine, the synchronous solution, too, must be discarded for performance reasons. In the end, the result achieved in terms of performance and stability is great (approx. 200 ms), but the Decentral Adapter Engine setup is mandatory for this case. This topic will be described in a separate blog.

Many thanks to Sergio Cipolla and Sandro Garofano for their valuable support.
