
- Advanced Event Mesh and BTP : Getting Events to Wo...


BarisBuyuktanir

Explorer


05-24-2023

12:53 PM

Some readers may remember one of my favorite childhood animated TV series, “Voltron”. It was a science fiction cartoon featuring a team of pilots who controlled lion-shaped robots that combined to form a larger, “more powerful” robot called Voltron.

This is how I see BTP: “Voltron”. (BTP and the BTP services / Voltron and the lions). In fact BTP is and was already a very effective PaaS, but now more effective with the addition of Advanced Event Mesh, a very powerful Event Broker that joined the team (Advanced Event Mesh in fact promises more but this we'll discuss later..)

While formulating the architecture and design of the integrations, you have to know and make use of best combination of possible solutions you have in hand. Keeping in mind the pros and cons of each solution and having making use of powerful parts of each one is the ultimate target. Today in the cloud with SAP BTP, we have a very powerful combination / platform, which can add together the power of API based service oriented architecture with the relatively new kid on the block : Event Driven Architecture.

By combining services and solutions like Cloud Integration, Advanced Event Mesh and CAP, (and with other options I won’t be mentioning here); BTP forms and serves the Voltron, surpassing the sum of its underlying components.

I won’t be mentioning about some of these in detail as there are lots of good technical articles & blogs where the benefits and implementations of Cloud Integration, CAP Development are already presented. There are also various blogs covering the advantages of event driven architecture which you can check for introduction.

Instead, I will focus on a scenario where you can combine the power of these three(BTP CI, CAP and AEM), while mostly focusing on the “Advanced Event Mesh” technically, assuming that the other parts’ are already covered and readers have good knowledge.

And also the sample payload data has been modified for privacy reasons, without affecting the functionality.

Imagine a use case where you have a Sales System(C4C), integrated to multiple target/consumer applications that you need to notify about what is happening about the “Accounts”.(Obviously you can prefer other objects as well. In this use-case Account is picked as it's a master data that is requested often in many scenarios)

One very traditional method to handle this would be a file based async approach, or your target applications' querying your upstream application(C4C) in a pull based approach. This means multiple calls to your application(high cost) because you have multiple consumers. Anothe side effect is tight-coupling your consumers to the source application etc.

What if not all your consumers are interested in "all" the Account changes you will have. How this situation is handled?

This would mean different queries, different routing rules or intermediate data storage problems .. and much more.

Or..

What if our source system notifies the world about the changes in the “Accounts” via events once; and multiple possible consumers can pick whatever they are interested in. And not tightly couple producers with consumers (in fact they are not aware of eachother) so that adding and removing each one will be easier.

Very basicly the main steps of the use-case is as follows:

Below is the high level architecture where three third party application are the targets(interested in the same type of data but from different perspective) and C4C being the source system. The good thing with these scenario is all parties are loosely coupled and in fact source system doesn’t even know who the consumers are.

As the source of the events, in C4C, you first need to register a system to publish the events to and register the specific events to be published via “Subscriptions”.

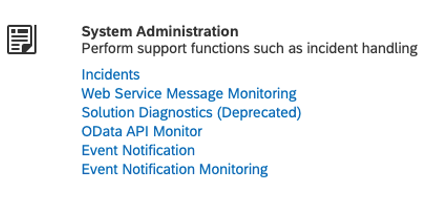

Administrator > General Settings > System Administration > Event Notification

This is for C4C to publish events to other applications. ( in our case it’s the BTP, Cloud Integration iFlow Endpoint)

In this case I will only be publishing the changes in the Account object's Root node (all create, update and delete operations). Therefore whenever a change-delete-insert happens in this particular object, an event is triggered.

Below you can see some of many subscription options from C4C (you can pick among many and in which detail)

When you make a change to the object, as per design, below event is triggered from C4C (a sample) and published.

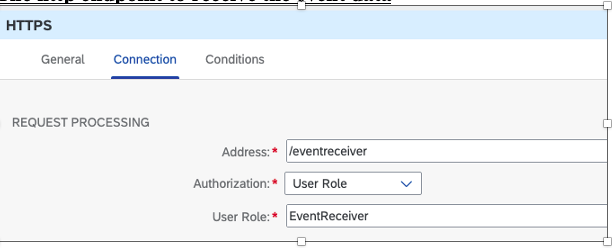

This event is published to the BTP, CI iFlow which acts as the orchestrator. For C4C, it’s an endpoint (an external consumer) as configured below

!! Replace the hostname and endpoint URL according to your requirements (whatever you configured in the iFlow)

With these settings, the publishing (source part) is finished.

In order to simplify the scenario, basic version of BTP, CI iFlow is demonstrated(many steps for logging, security, configuration-scripting for specific requirements are removed from the iflow), as the purpose of the blog is to present the overall architecture and the Event Broker : Advanced Event Mesh portion.

Our iFlow “IFLOW_EVENTRECEIVER_TO_CONSUMERS” will be doing the orchestration of the scenario and will be receiving current and as an extension possible future events from current / new source systems.

The main integration process is simply;

The http endpoint to receive the event data

The main and the sub-process

Advanced Event Mesh Connection Details (AMQP Adapter)

The sub process "Process Topics one by one" is doing the Advanced Event Mesh publishing part, where pCurrentTopicToBePublished is the property we set per each iteration as the topic name.

This topic is published to Advanced Event Mesh with the message payload. (We receive these two from the CAP service(API) described below.)

Bear in mind that there can be a single topic or even zero if we decide not to publish the information. It's all up to you, in this case I prefer 0..n topics and iterate it. In the last part we'll be discussing about the fun part (how topics can be used to route messages in Advanced Event Mesh in a very flexible manner)

In this scenario the responsibility for the CAP service is

We won’t be looking at the details for the CAP Service but the logic is simply:

Below is a sample request and response for the CAP Service:

Request

Response

Now it comes to the fun part, Advanced Event Mesh, event broker configuration and topic structure.

Advanced Event Mesh is an enterprise segment event broker in many cases compared to other event brokers such as Kafka, Rabbit MQ,..

The name with the SAP’s other solution Event Mesh is causing confusion as if Advanced Event Mesh is the same solution with Event Mesh but with more capabilities. The fact is, only the last part of this sentence is correct. (Obviously both EM and AEM are event brokers, providing messaging-eventing services, using queues and topics and subscriptions.. But, when it comes to different capabilities like “meshing” the broker services, easily combining async-sync scenarios, and filtering / routing capabilities using topics in a very effective way (which we will be using in this blog and scenario); Advanced Event Mesh behaves very different and shines with what it offers additionaly as an Enterprise Level Event Broker (and more).

Some of the capabilities are already demonstrated in events/blogs/webinars. Along with today's presented features, I will also try to demonstrate these in the following blog posts.

The fun part starts just after Cloud Integration (iFlow) publishes the message to the smart topic(s).(described below)

Assuming that you have already provisioned your Advanced Event Mesh Broker service within your BTP Tenant, you will be facing user interfaces such as below when you have done for the first time(subject to change with the new versions, but the idea is the same)

Let’s return back to our scenario where C4C is publishing “notifications” about the Accounts’ data to BTP.

In the scenario we have 3 different downstream applications expecting new/changed/deleted Account data.

APP_1

APP_2

APP_3

Topic / Topic Subscriptions

In event-driven architecture (EDA), topics serve as a way to categorize the data conveyed in event messages. Events are sent to one or multiple topics, and endpoints (destinations) can subscribe to one or more topics to receive events from publishers. Practically topics are nothing but additional information (free format string) attached to message (like an attribute / header of the message). However when efficiently designed, they are very powerful. (you can organize routing of messages (events) to multiple different consumers in a very flexible manner.)

We make use of topics via topic subscriptions. Topic subscriptions are to attract messages, for telling the world which messages are you interested in as a consumer end-point.

Advanced Event Mesh can make use of wildcards and more in topic subscriptions which gives you a lot flexibility with wildcards "*" and special ">" character. Our scenario is a small demonstration of this usage. (In fact AEM has more, might be a "topic" of another article about EDA / AEM)

Usage will be more clear with our example below..

Below is a sample topic structure that I used (see the placeholder attributes in {{XYZ}} to be replaced by the real values )

Topic Structure

c4c/account/{{version}}/{{salesorganization}/{{country}/{{division}}

Lets assume that 4 events are triggered from C4C. Based on the data within the event, our smart CAP service fills the topics as below:

So the topics formulated by the CAP services will be:

Now this is where the magic happens.

We have three consumers expecting only the messages that they are interested.

Based on the topics, Advanced Event Mesh routes this messages to zero or multiple subscribers.

Advanced Event Mesh uses wildcards and special symbols while making this filtering possible.

How does this happen?

c4c/account/v1/ECOM1/>

c4c/account/v1/ECOM2/>

c4c/account/v1/*/TR/>

or similarly

c4c/account/v1/*/TR/*

c4c/account/v1/*/*/*

or simply

c4c/account/v1/>

Below are our queues in the beginning:

And the topic subscriptions for these queues:

By this setup;

as they subscribed to.

Once you make your design, the extensions would be much easier in the future such as additional source and target systems' onboarding and/or additional new requirements for the current subscribers.

Lets say our new scenario requires APP2 to extend the coverage for the accounts of the France also. In this case The only thing you need to do in this scenario is adding a subscription to the queue for APP2 as below that’s it.

c4c/account/v1/*/FR/>

Once changes are done in accounts for FR, these are published to APP2 as well along with APP3 which is already receiving all countries' accounts.

Onboarding of new consumer:

Lets say a fourth application(APP4) would like to receive account-related information for a particular sales organization (stores with code(STR1)). Easily done in two steps: You assign this app a queue and adjust the subscription for this queue as

c4c/account/v1/STR1/>

and Voila! Start enjoying how easy the related data is routed to the application.

Although we simplify the scenario to demonstrate the intended points:

Now it's easier to consider other combinations of sync/async, combinations of API based and event driven scenarios depending on the target and source systems' capabilities.

Advanced Event Mesh and BTP has many more capabilities to add on top and in the next couple of articles and blogs, my intention is to demonstrate scenarios with some "advanced" features of Advanced Event Mesh and show how it's possible to modernize integrations with the use of Event Driven Architecture.

Please do not hesitate to contact me in case of questions / comments / recommended use-cases.

This is how I see BTP: as “Voltron” (BTP and its services as Voltron and the lions). BTP already was a very effective PaaS, but it is now even more effective with the addition of Advanced Event Mesh, a very powerful event broker that has joined the team. (Advanced Event Mesh actually promises more, but we'll discuss that later.)

When formulating the architecture and design of integrations, you have to know the possible solutions at hand and use the best combination of them. The ultimate target is to keep the pros and cons of each solution in mind and make use of the strongest parts of each. Today, in the cloud with SAP BTP, we have a very powerful combination/platform that brings together the power of API-based, service-oriented architecture and the relatively new kid on the block: event-driven architecture.

By combining services and solutions like Cloud Integration, Advanced Event Mesh and CAP (and other options I won't mention here), BTP forms the Voltron, surpassing the sum of its underlying components.

I won't go into some of these in detail, as there are many good technical articles and blogs that already present the benefits and implementation of Cloud Integration and CAP development. There are also various blogs covering the advantages of event-driven architecture, which you can check for an introduction.

Instead, I will focus on a scenario that combines the power of these three (BTP CI, CAP and AEM), focusing technically mostly on Advanced Event Mesh and assuming that readers already have good knowledge of the other parts.

Note that the sample payload data has been modified for privacy reasons, without affecting functionality.

USE CASE

Imagine a use case where you have a sales system (C4C) integrated with multiple target/consumer applications that you need to notify about what is happening to “Accounts”. (You could choose other objects as well; Account is picked here because it is master data that is requested often in many scenarios.)

One very traditional way to handle this would be a file-based async approach, or having your target applications query your upstream application (C4C) in a pull-based approach. With multiple consumers, this means multiple calls to your application (high cost). Another side effect is tight coupling between your consumers and the source application.

What if not all of your consumers are interested in "all" the Account changes? How would that be handled?

This would mean different queries, different routing rules, intermediate data storage problems, and much more.

Or...

What if our source system notified the world about changes to “Accounts” via events, once, and multiple consumers could pick whatever they are interested in? Producers would not be tightly coupled with consumers (in fact, they are not even aware of each other), so adding and removing either would be easier.

Very basically, the main steps of the use case are as follows:

- Operations in C4C trigger events, and C4C publishes the new/changed information via these events (mostly via what are called notification events).

- BTP Cloud Integration, as the orchestrator not only of today's C4C events but also of possible future events from other source systems, manages the flow and makes the necessary calls.

- BTP Advanced Event Mesh, as the event broker and router, manages the event stream and communicates with multiple targets, routing the correct information to interested consumers.

- BTP CAP services implement the smart logic (data rules, filtering, etc.) and expose it to CI as microservices.

ARCHITECTURE

Below is the high-level architecture, with C4C as the source system and three third-party applications as the targets (interested in the same type of data, but from different perspectives). The good thing about this scenario is that all parties are loosely coupled; in fact, the source system doesn't even know who the consumers are.

Architecture

COMPONENTS

Cloud For Customer (C4C)

As the source of the events, in C4C you first need to register a target system to publish the events to, and register the specific events to be published via “Subscriptions”.

Administrator > General Settings > System Administration > Event Notification

This is how C4C publishes events to other applications (in our case, to the BTP Cloud Integration iFlow endpoint).

Target for Event Notification and Subscriptions of Events

In this case, I only publish changes to the Account object's Root node (all create, update and delete operations). Therefore, whenever a create, update or delete happens on this object, an event is triggered.

Below you can see some of the many subscription options in C4C (you can choose among many events and at which level of detail).

Registration of the target system and events contd.

When you change the object, the event below (a sample) is triggered and published by C4C, as per the design.

```json
{
  "specversion": "1.0",
  "type": "/sap.c4c/Account.Root.Updated",
  "source": "/XAF/sap.c4c/000000000123456788",
  "id": "bb19819d-66xx-1eed-bdc5-0c04a378e286",
  "event-type": "Account.Root.Updated",
  "event-type-version": "v1",
  "event-id": "bb19819d-66xx-1eed-bdc5-0c04a378e286",
  "event-time": "1981-04-13T03:30:30Z",
  "data": {
    "root-entity-id": "001981D0E1M2E3T4CA1EEC8AF58A7D84",
    "entity-id": "001981D0E1M2E3T4CA1EEC8AF58A7D84"
  }
}
```
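To make the payload shape concrete, the sketch below parses such a notification in Python and keeps only the fields the rest of the flow needs. It is a minimal illustration, not part of the original scenario: `AccountNotification` and `parse_notification` are hypothetical names, and the code assumes the field names shown in the sample above. Note that the notification carries only identifiers of the changed Account, not its data, which is why an enrichment step follows later.

```python
import json
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class AccountNotification:
    """Hypothetical subset of the C4C notification fields used later in the flow."""
    event_type: str      # e.g. "Account.Root.Updated"
    event_id: str
    event_time: datetime
    entity_id: str       # key of the changed Account; no business data


def parse_notification(raw: str) -> AccountNotification:
    doc = json.loads(raw)
    # Fall back to the CloudEvents "id" and strip stray whitespace defensively.
    event_id = doc.get("event-id", doc.get("id", "")).strip()
    # Older Python versions' fromisoformat() does not accept a trailing "Z".
    time_text = doc["event-time"].replace("Z", "+00:00")
    return AccountNotification(
        event_type=doc["event-type"],
        event_id=event_id,
        event_time=datetime.fromisoformat(time_text),
        entity_id=doc["data"]["entity-id"],
    )
```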

This event is published to the BTP CI iFlow, which acts as the orchestrator. For C4C, the iFlow is an endpoint (an external consumer), configured as below.

!! Replace the hostname and endpoint URL according to your requirements (whatever you configured in the iFlow)

With these settings, the publishing (source part) is finished.

BTP, Cloud Integration (formerly CPI) iFlow

To simplify the scenario, a basic version of the BTP CI iFlow is shown (many steps for logging, security, and configuration/scripting for specific requirements have been removed from the iFlow), as the purpose of this blog is to present the overall architecture and the event broker part: Advanced Event Mesh.

Our iFlow “IFLOW_EVENTRECEIVER_TO_CONSUMERS” orchestrates the scenario and receives the current events and, as an extension, possible future events from current or new source systems.

The main integration process simply does the following:

- Receives the event information via the HTTP adapter

- Sets some headers/properties to be used later (depending on the complexity of the scenario)

- Calls the CAP service to receive the data: the full enriched object data and the topics to publish to (the notification event payload only contains the changed data, but we want to publish more detailed information than that)

- Iterates over the topics (there can be several in this scenario) and publishes to each of them (0..n topics)

- Uses a local integration process to publish to Advanced Event Mesh via the AMQP adapter for each topic received

- Handles exceptions and notifies the related parties via another, generic process
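The steps above can be sketched as plain Python to show the control flow, in particular the 0..n topic iteration. This is only an illustration of the logic, not how an iFlow is built: `call_cap`, `publish` and `notify_error` are hypothetical stand-ins for the CAP HTTP call, the AMQP receiver adapter and the exception subprocess.

```python
from typing import Callable, Dict, List


def orchestrate(
    event: Dict,
    call_cap: Callable[[Dict], Dict],
    publish: Callable[[str, Dict], None],
    notify_error: Callable[[Exception], None],
) -> List[str]:
    """Enrich the event via CAP, then publish the entity once per returned topic.

    Returns the topics that were published to (possibly none).
    """
    published: List[str] = []
    try:
        response = call_cap(event)                 # {"Topics": [...], "Entity": {...}}
        for topic in response.get("Topics", []):   # 0..n topics
            # Plays the role of setting pCurrentTopicToBePublished per iteration.
            publish(topic, response["Entity"])
            published.append(topic)
    except Exception as exc:                       # generic exception handling
        notify_error(exc)
        raise                                      # keep the run marked as failed
    return published
```

Returning an empty list when CAP sends no topics mirrors the "even zero topics" case: the event is received and enriched, but nothing is published.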

The HTTP endpoint to receive the event data

HTTP Endpoint (start of iFlow)

The main and the sub-process

Main Integration Process

Sub-process sending the message to AEM

Advanced Event Mesh Connection Details (AMQP Adapter)

The subprocess "Process Topics one by one" handles publishing to Advanced Event Mesh, where pCurrentTopicToBePublished is the property we set in each iteration as the topic name.

The message payload is published to Advanced Event Mesh under this topic. (We receive both from the CAP service API described below.)

Bear in mind that there can be a single topic, or even none if we decide not to publish the information. It's all up to you; in this case I prefer 0..n topics and iterate over them. In the last part, we'll discuss the fun part: how topics can be used to route messages in Advanced Event Mesh in a very flexible manner.

AMQP Adapter Details

AMQP Adapter Connection and Processing Details

CAP SERVICE

In this scenario, the CAP service is responsible for:

- formulating the data to be published to the target applications

- determining which topics to publish this data to

We won't look at the details of the CAP service, but the logic is simply:

- The CAP service API receives the event data payload (JSON)

- The CAP service queries the C4C system to enrich the data (via OData services)

- As per the logic, it returns one or more topics to publish to, plus the enriched object, to the caller (the CI iFlow)
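A minimal sketch of this logic, under stated assumptions: `fetch_account` is a hypothetical placeholder for the OData call to C4C, the topic levels are assumed to come from the `SalesOrg`, `Country` and `Division` fields of the enriched entity (consistent with the sample response and the topic structure used later), and `EventTriggeredOn` is simply copied from `event-time`, which the real service may handle differently. Returning no topic when a level is missing or contains "/" is also an assumption; a "/" inside a value would silently add a topic level.

```python
from typing import Callable, Dict, List

TOPIC_VERSION = "v1"  # assumed version level, as in the sample topics


def build_topics(entity: Dict[str, str]) -> List[str]:
    """One topic per account: c4c/account/{version}/{salesorg}/{country}/{division}."""
    levels = [entity.get("SalesOrg"), entity.get("Country"), entity.get("Division")]
    # Skip publishing if a level is empty or would create extra levels.
    if not all(levels) or any("/" in level for level in levels):
        return []
    return ["/".join(["c4c", "account", TOPIC_VERSION, *levels])]


def handle_event(event: Dict, fetch_account: Callable[[str], Dict[str, str]]) -> Dict:
    """Hypothetical CAP handler: enrich the notification and choose the topics."""
    entity = fetch_account(event["data"]["entity-id"])  # stand-in for the C4C OData read
    return {
        "EventTriggeredOn": event["event-time"],
        "Topics": build_topics(entity),
        "Entity": entity,
    }
```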

Below is a sample request and response for the CAP Service:

Request

```json
{
  "specversion": "1.0",
  "type": "/sap.c4c/Account.Root.Updated",
  "source": "/XAF/sap.c4c/000000000123456788",
  "id": "bb19819d-66xx-1eed-bdc5-0c04a378e286",
  "event-type": "Account.Root.Updated",
  "event-type-version": "v1",
  "event-id": "bb19819d-66xx-1eed-bdc5-0c04a378e286",
  "event-time": "1981-04-13T03:30:30Z",
  "data": {
    "root-entity-id": "001981D0E1M2E3T4CA1EEC8AF58A7D84",
    "entity-id": "001981D0E1M2E3T4CA1EEC8AF58A7D84"
  }
}
```

Response

```json
{
  "EventTriggeredOn": "1981-04-13T03:30:00Z",
  "Topics": [
    "c4c/account/v1/ECOM1/TR/DIV1"
  ],
  "Entity": {
    "AccountId": "20000001",
    "Role": "CRM000",
    "ERPAccountID": "358424",
    "AccountName": "BB Test Account",
    "AccountStatus": "2",
    "SalesOrg": "ECOM1",
    "DeliveryPostalCode": "34000",
    "DeliveryCity": "Istanbul",
    "Country": "TR",
    "DistributionChannel": "01",
    "Division": "DIV1",
    "SalesRepCode": "1000132",
    "TaxId": "TAX00001",
    "CompanyID": "ABCD1234"
  }
}
```

Now it comes to the fun part: Advanced Event Mesh, the event broker configuration and the topic structure.

ADVANCED EVENT MESH

Advanced Event Mesh is an enterprise-grade event broker, in many cases compared with other event brokers such as Kafka and RabbitMQ.

Its name causes confusion with SAP's other solution, Event Mesh, as if Advanced Event Mesh were the same solution with more capabilities. In fact, only the last part of that sentence is correct. Both EM and AEM are event brokers that provide messaging and eventing services using queues, topics and subscriptions. But when it comes to capabilities like “meshing” broker services, easily combining async and sync scenarios, and filtering/routing with topics in a very effective way (which we use in this blog and scenario), Advanced Event Mesh behaves very differently and shines with what it additionally offers as an enterprise-level event broker (and more).

Some of these capabilities have already been demonstrated in events, blogs and webinars. Along with the features presented today, I will try to demonstrate them in upcoming blog posts.

The fun part starts just after Cloud Integration (the iFlow) publishes the message to the smart topic(s) described below.

Assuming you have already provisioned your Advanced Event Mesh broker service within your BTP tenant, you will see user interfaces like the one below when you open it for the first time (subject to change in new versions, but the idea is the same).

Let's return to our scenario, where C4C publishes “notifications” about Accounts data to BTP.

In the scenario we have 3 different downstream applications expecting new/changed/deleted Account data.

THE CONSUMER APPLICATIONS

APP_1

- E-commerce application

- Interested in the accounts belonging to the e-commerce sales organizations (ECOM1, ECOM2)

APP_2

- Regional store application / Turkiye region

- Interested in the accounts for country Turkiye (TR)

APP_3

- Global application

- Interested in all account data, regardless of country or sales organization

THE TOPIC STRUCTURE - DESIGN

Topic / Topic Subscriptions

In event-driven architecture (EDA), topics are a way to categorize the data conveyed in event messages. Events are published to one or more topics, and endpoints (destinations) can subscribe to one or more topics to receive events from publishers. In practice, a topic is nothing but additional information (a free-format string) attached to a message, like an attribute or header. However, when designed well, topics are very powerful: you can route messages (events) to multiple different consumers in a very flexible manner.

We use topics via topic subscriptions. A topic subscription attracts messages: it tells the world which messages you, as a consumer endpoint, are interested in.

Advanced Event Mesh supports wildcards and more in topic subscriptions, which gives you a lot of flexibility via the wildcard "*" and the special ">" character. Our scenario is a small demonstration of this usage. (AEM offers more; that might be a "topic" for another article about EDA/AEM.)

- "*" as a level matches any value at that level (a level is everything between two "/" separators).

- ">" as the last level matches one or more levels after it. It is like XYZ/*/*/* forever.
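To make the two wildcard rules precise, here is a small matcher that applies them level by level. It is a simplified model written for this article, not the broker's implementation; AEM supports further subscription features that are not modelled here.

```python
def topic_matches(subscription: str, topic: str) -> bool:
    """Match a topic against a subscription using only the two rules above.

    "*" as a whole level matches exactly one level;
    ">" as the last level matches one or more remaining levels.
    """
    sub_levels = subscription.split("/")
    topic_levels = topic.split("/")
    for i, sub in enumerate(sub_levels):
        if sub == ">" and i == len(sub_levels) - 1:
            return len(topic_levels) > i        # at least one level must remain
        if i >= len(topic_levels):
            return False                        # topic is shorter than the subscription
        if sub != "*" and sub != topic_levels[i]:
            return False                        # literal level differs
    return len(topic_levels) == len(sub_levels)  # no unmatched trailing levels
```

For example, `c4c/account/v1/>` matches `c4c/account/v1/ECOM1/TR/DIV1` but not `c4c/account/v1` itself, because ">" needs at least one level after it.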

Usage will become clearer with our example below.

Below is a sample topic structure that I used (the placeholder attributes in {{XYZ}} are replaced by the real values):

Topic Structure

c4c/account/{{version}}/{{salesorganization}}/{{country}}/{{division}}

Let's assume that 4 events are triggered from C4C. Based on the data in each event, our smart CAP service fills in the topics as follows:

- The first event is for a change to an account from sales organization ECOM1, country Turkey (TR), division DIV1

- The second is for an account from sales organization Stores1 (STR1), country Turkey (TR), division DIV1

- The third is for an account from sales organization ECommerce (ECOM2), country United Kingdom (UK), division DIV2

- The fourth is for an account from sales organization Stores (STR2), country Germany (DE), division DIV2

So the topics formulated by the CAP service will be:

- Message-1 Topic : c4c/account/v1/ECOM1/TR/DIV1

- Message-2 Topic : c4c/account/v1/STR1/TR/DIV1

- Message-3 Topic : c4c/account/v1/ECOM2/UK/DIV2

- Message-4 Topic : c4c/account/v1/STR2/DE/DIV2

Now this is where the magic happens.

We have three consumers, each expecting only the messages it is interested in.

Based on the topics, Advanced Event Mesh routes these messages to zero or more subscribers.

Advanced Event Mesh uses wildcards and special symbols to make this filtering possible:

- "*" as a level matches everything at that level

- ">" after the last level matches everything after it

How does this happen?

- All these consumer applications listen to their own queues (there are other options, but this is the widely used one)

- APP_1 is a consumer of QUEUE_1. Subscriptions (accounts for the e-commerce sales organizations ECOM1 and ECOM2):

c4c/account/v1/ECOM1/>

c4c/account/v1/ECOM2/>

- APP_2 is a consumer of QUEUE_2. Subscription (accounts for country Turkiye (TR), regardless of other information):

c4c/account/v1/*/TR/>

or similarly

c4c/account/v1/*/TR/*

- APP_3 is a consumer of QUEUE_3. Subscription (all accounts, regardless of sales organization, country or division):

c4c/account/v1/*/*/*

or simply

c4c/account/v1/>

Below are our queues in the beginning:

3 new Queues are created

And the topic subscriptions for these queues:

Queues are subscribed to the topics very easily

With this setup, each queue receives the messages matching its subscriptions:

- APP_1 -> QUEUE_1 receives Message 1 and Message 3

- APP_2 -> QUEUE_2 receives Message 1 and Message 2

- APP_3 (generic) -> QUEUE_3 receives all messages: Message 1, Message 2, Message 3 and Message 4
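The routing outcome above can be checked with a small simulation: each queue's subscriptions are matched against the four sample topics. The compact `topic_matches` helper models only the "*" and trailing ">" rules described earlier, the queue and message names mirror this scenario, and counting a message once per queue is a simplification of this sketch; it is not how the broker evaluates subscriptions internally.

```python
from typing import Dict, List


def topic_matches(subscription: str, topic: str) -> bool:
    # "*" matches exactly one level; a trailing ">" matches one or more levels.
    sub, top = subscription.split("/"), topic.split("/")
    if sub[-1] == ">":
        return len(top) >= len(sub) and all(s in ("*", t) for s, t in zip(sub[:-1], top))
    return len(top) == len(sub) and all(s in ("*", t) for s, t in zip(sub, top))


SUBSCRIPTIONS: Dict[str, List[str]] = {
    "QUEUE_1": ["c4c/account/v1/ECOM1/>", "c4c/account/v1/ECOM2/>"],
    "QUEUE_2": ["c4c/account/v1/*/TR/>"],
    "QUEUE_3": ["c4c/account/v1/>"],
}

MESSAGES: Dict[str, str] = {
    "Message 1": "c4c/account/v1/ECOM1/TR/DIV1",
    "Message 2": "c4c/account/v1/STR1/TR/DIV1",
    "Message 3": "c4c/account/v1/ECOM2/UK/DIV2",
    "Message 4": "c4c/account/v1/STR2/DE/DIV2",
}


def route(subscriptions: Dict[str, List[str]], messages: Dict[str, str]) -> Dict[str, List[str]]:
    """List, per queue, the messages matching at least one of its subscriptions."""
    return {
        queue: [name for name, topic in messages.items()
                if any(topic_matches(s, topic) for s in subs)]
        for queue, subs in subscriptions.items()
    }


for queue, received in route(SUBSCRIPTIONS, MESSAGES).items():
    print(queue, received)
```

In this model, extending APP_2 to France means appending `c4c/account/v1/*/FR/>` to the `QUEUE_2` list, and onboarding a new consumer means adding a queue entry; a `QUEUE_4` with `c4c/account/v1/STR1/>` would receive only Message 2 from the same four messages.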

Once the design is in place, future extensions become much easier, such as onboarding additional source and target systems and/or handling new requirements from current subscribers.

Let's say a new requirement asks APP_2 to also cover the accounts for France. In this case, the only thing you need to do is add the subscription below to APP_2's queue; that's it.

c4c/account/v1/*/FR/>

Once accounts for FR are changed, the events are published to APP_2 as well, alongside APP_3, which already receives accounts from all countries.

Final status of the queues after new subscription and events publishing


Onboarding a new consumer:

Let's say a fourth application (APP4) would like to receive account-related information for a particular sales organization (stores with code STR1). This is done in two easy steps: assign this app a queue, and set the subscription for this queue as

c4c/account/v1/STR1/>

and voilà! Start enjoying how easily the related data is routed to the application.

What we have done

Although the scenario was simplified to demonstrate the intended points, we have:

- Enabled the C4C system as an event source, publishing data to loosely coupled consumers in a controlled manner.

- Used Advanced Event Mesh and BTP to notify multiple consumers about the changes they are interested in.

- Designed a flexible architecture that lets future subscribers (and possibly new sources/producers) easily join the scenario.

Follow-up Plans

Now it's easier to consider other combinations of sync/async, combinations of API based and event driven scenarios depending on the target and source systems' capabilities.

Advanced Event Mesh and BTP have many more capabilities to add on top. In the next couple of articles and blogs, my intention is to demonstrate scenarios with some "advanced" features of Advanced Event Mesh and show how integrations can be modernized with Event-Driven Architecture.

Please do not hesitate to contact me in case of questions / comments / recommended use-cases.

8 Comments

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.



