07-20-2022 11:02 AM

Disclaimer: the section "SAP Data Intelligence pipeline" was updated on September 29, 2022.

Welcome to the third episode of the series: Integrating SAP Signavio Process Intelligence and SAP Data Intelligence.

In case you missed the two previous blog posts:

After the enhancements that were released in the product in June 2022, with the general availability of Ingestion API (in the Appendix you can find the link to the documentation), we can now overcome that preliminary approach and actually move to a direct connectivity between the products.

In this third post, I’ll therefore describe a concrete example that shows how you can use an SAP Data Intelligence pipeline to feed data directly into SAP Signavio Process Intelligence, via Ingestion API, bypassing the need for a staging area.

Ingestion API allows you to create an external data pipeline and ingest data into SAP Signavio Process Intelligence via API with an authentication token. This facilitates the integration with SAP Data Intelligence Cloud, where a data pipeline can be created with an operator at the end pushing the data into SAP Signavio Process Intelligence.

The example implemented in this blog shows how to read data from a customer survey from a Qualtrics system and how to then feed it into SAP Signavio Process Intelligence.

What is interesting is that such approach can be applied to any of the numerous sources supported by SAP Data Intelligence Cloud leveraging on its data processing and transformation capabilities, but it can also be applied to any kind of SAP and non-SAP data pipeline, external to SAP Signavio Process Intelligence.

Let’s first introduce some context.

Some of you already know about journey to process analytics, an innovative process management practice that connects experience and business operations data, aiming at understanding, improving and transforming your customer, supplier and employee experience. If you haven’t heard of it yet, I would suggest you to read this 5 minute blog post by aida.centelles.

Let’s now imagine that we want to analyze an Incident-to-Resolution process. From a traditional process mining perspective, the approach is quite straightforward: we configure a connection to ServiceNow, extract the data, create an event log, and apply process mining techniques on it.

But what if we could collect customers feedback, at the end of their journey, in a Qualtrics survey?

This experience data could be combined to the operational data to bring the customer experience perspective into play, enriching the data model and leading to a broad variety of new and valuable insights.

However, currently there is no native connector to Qualtrics in SAP Signavio Process Intelligence. Hence, we can create a data pipeline in SAP Data Intelligence to extract the survey data from Qualtrics and push it to SAP Signavio Process Intelligence.

Let’s see how we can quickly set this up.

First of all, let’s create a new Ingestion API data source in SAP Signavio Process Intelligence.

The data source provides two parameters:

Second, let’s connect to Qualtrics to retrieve the information we need.

Login into your Qualtrics system and go to Account Settings and then to Qualtrics IDs

Here you can retrieve the following parameters

Last, let’s create a data pipeline in SAP Data Intelligence Cloud.

In this GitHub repository you can find a ready-to-use SAP Data Intelligence graph, with a connector to Qualtrics and a connector to SAP Signavio Process Intelligence.

You can download and archive the content of the 3 folders (that you can find in the repository) into 3 zip files, so that they can be imported as solutions into your SAP Data Intelligence tenant. Do not archive the folders, but for each folder select the content and “add to archive”.

In order to import solutions in SAP Data Intelligence, you can go to System Management, go to Files, click on the '+' symbol, select "Import Solution" and choose your zip file.

In SAP Data Intelligence, you can create an OPENAPI connection to Qualtrics

In SAP Data Intelligence, you can create an OPENAPI connection to SAP Signavio

You can configure the graph.

You can now save and run the pipeline.

Once its status is “completed” you can go to the SAP Signavio Process Intelligence Ingestion API integration and check the logs.

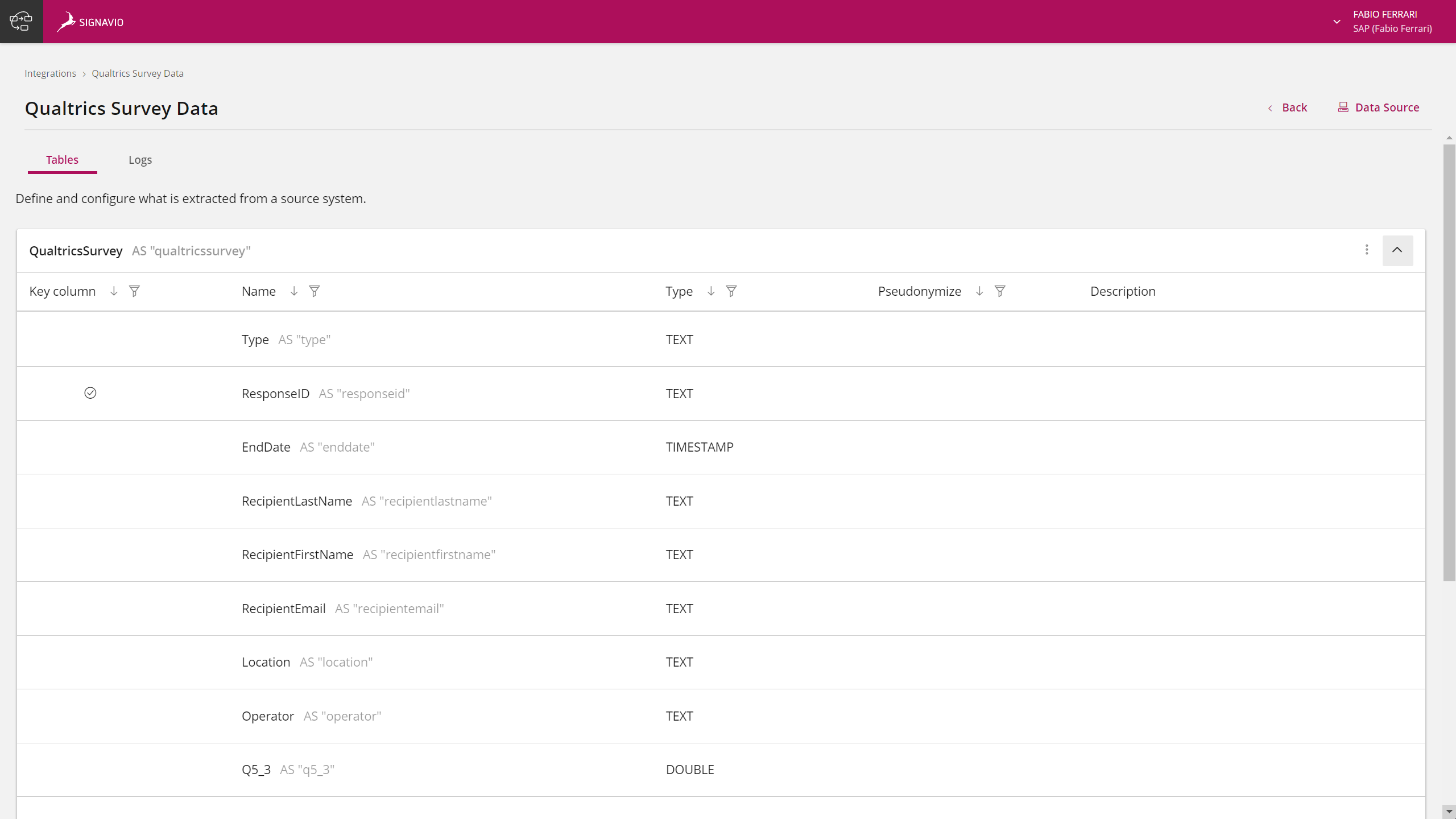

A new execution log will appear, with “Running” status. After a few seconds the status will become “Done” and you can check in the Tables tab that a new table has indeed been uploaded, with the name provided in the second python operator of the DI pipeline.

The data is now ready to be used for process mining!

As we stated above, the whole idea started with the business requirement to add Qualtrics data to the Incident to Resolution process, to enrich operational data with the customer experience perspective.

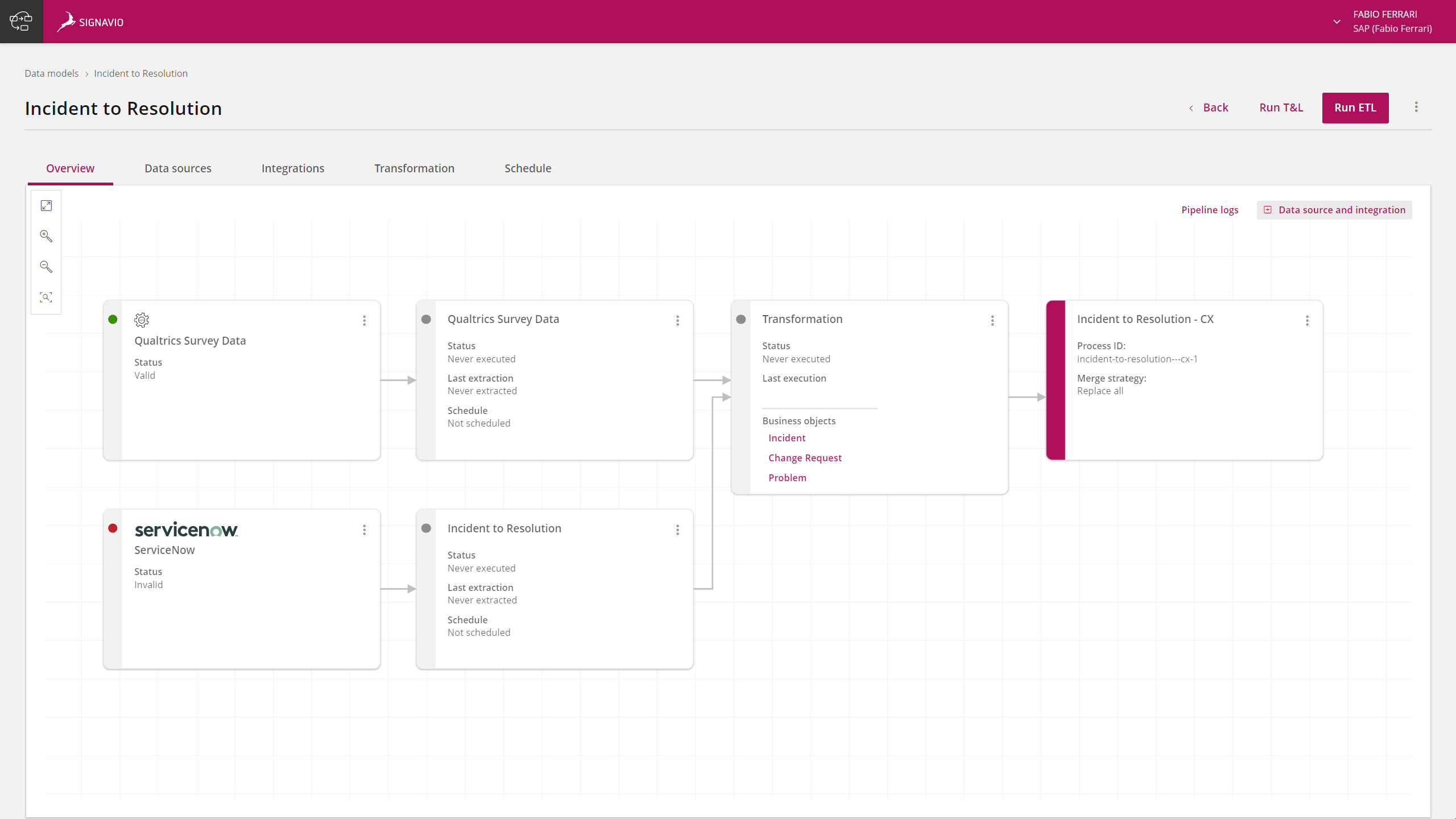

To achieve that, you can create a new data model selecting ServiceNow as source system.

Select the available Incident-to-Resolution transformation template, as an accelerator to transform raw data into an event log with a series of preconfigured SQL scripts.

Select New Integration and connect to an existing ServiceNow data source.

With the new Process Data Management (ETL 2.0) interface which was released in June 2022 you can now see the whole data pipeline, spanning connection, integration, and transformation. You can add the target Process Intelligence process.

Click on “+ Data source and integration” and select your Ingestion API data source. It will be added to the data pipeline.

Now you can start changing the existing SQL scripts to combine operational data with experience data coming from Qualtrics. For testing purposes, you can edit the Transformation block by adding a new “Qualtrics” business object.

At this point you can add a new event collector with a simple SQL script to preview the survey data that has been extracted from Qualtrics and pushed to the Ingestion API integration through the SAP Data Intelligence data pipeline.

Qualtrics survey data is now ready to be combined with ServiceNow data to create new valuable insights!

As a summary, in this blog post I have covered:

Follow the SAP Signavio Community and the SAP Data Intelligence Community for similar content, peer-to-peer networking and knowledge sharing.

If you’re interested to know more about Ingestion API, here are some additional resources that you can check:

In this GitHub repository you can find the data pipeline and the two python operators to be downloaded and imported into your SAP Data Intelligence tenant.

Welcome to the third episode of the series: Integrating SAP Signavio Process Intelligence and SAP Data Intelligence.

In case you missed the two previous blog posts:

- In Integrating SAP Signavio Process Intelligence and SAP Data Intelligence Cloud: process mining stream..., silvio.arcangeli explains how leveraging an enterprise-grade data integration and data orchestration solution (SAP Data Intelligence Cloud) in conjunction with a best-in-class process mining engine (SAP Signavio Process Intelligence) yields very powerful synergies.

- In Integrating SAP Signavio Process Intelligence and SAP Data Intelligence Cloud: a concrete example an..., Silvio showed a concrete example of a preliminary approach to combining the two products, with a step-by-step guide to setting up an SAP Data Intelligence pipeline that fed data into SAP Signavio Process Intelligence through a staging area implemented in an Amazon S3 bucket.

With the product enhancements released in June 2022, the Ingestion API became generally available (you can find the link to the documentation in the Appendix). We can now move beyond that preliminary approach to direct connectivity between the two products.

In this third post, I’ll therefore describe a concrete example that shows how you can use an SAP Data Intelligence pipeline to feed data directly into SAP Signavio Process Intelligence, via Ingestion API, bypassing the need for a staging area.

The Ingestion API allows you to create an external data pipeline and ingest data into SAP Signavio Process Intelligence via API, using an authentication token. This simplifies the integration with SAP Data Intelligence Cloud, where you can create a data pipeline whose final operator pushes the data into SAP Signavio Process Intelligence.

The example in this blog post shows how to read customer survey data from a Qualtrics system and feed it into SAP Signavio Process Intelligence.

What is interesting is that this approach works for any of the numerous sources supported by SAP Data Intelligence Cloud, building on its data processing and transformation capabilities. It can also be applied to any other SAP or non-SAP data pipeline that is external to SAP Signavio Process Intelligence.

Configuring an SAP Data Intelligence Cloud pipeline to move data from Qualtrics to SAP Signavio Process Intelligence via Ingestion API

Let’s first introduce some context.

Some of you already know about journey to process analytics, an innovative process management practice that connects experience data and business operations data, with the aim of understanding, improving and transforming your customer, supplier and employee experience. If you haven’t heard of it yet, I suggest reading this 5-minute blog post by aida.centelles.

Let’s now imagine that we want to analyze an Incident-to-Resolution process. From a traditional process mining perspective, the approach is quite straightforward: we configure a connection to ServiceNow, extract the data, create an event log, and apply process mining techniques on it.

But what if we could collect customers' feedback, at the end of their journey, in a Qualtrics survey?

This experience data could be combined with the operational data to bring the customer experience perspective into play, enriching the data model and leading to a broad variety of new and valuable insights.

However, currently there is no native connector to Qualtrics in SAP Signavio Process Intelligence. Hence, we can create a data pipeline in SAP Data Intelligence to extract the survey data from Qualtrics and push it to SAP Signavio Process Intelligence.

Let’s see how we can quickly set this up.

Ingestion API

First of all, let’s create a new Ingestion API data source in SAP Signavio Process Intelligence.

You can create either a data source or an integration. Either way, the other one is then created automatically with the same title and the same type (Ingestion API), and the two are linked to each other.

The data source provides two parameters:

- The API endpoint is the target URL to make a request to ingest external data into SAP Signavio Process Intelligence

- The Token is the authentication token required for a successful request pushing data into this specific pair of data source and integration
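To make the role of these two parameters more concrete, here is a minimal Python sketch that prepares (without sending) an HTTP request from them. It assumes that the token is sent as a Bearer token in the `Authorization` header. The function name, the placeholder endpoint and the body format are illustrative and not taken from the product, so please check the Ingestion API documentation for the exact request format.

```python
import urllib.request


def build_ingestion_request(endpoint: str, token: str, body: bytes,
                            content_type: str) -> urllib.request.Request:
    """Prepare, but do not send, a POST request to an Ingestion API endpoint."""
    return urllib.request.Request(
        url=endpoint,  # the "API endpoint" shown in the data source
        data=body,
        method="POST",
        headers={
            # Assumption: the data source token is passed as a Bearer token.
            "Authorization": f"Bearer {token}",
            "Content-Type": content_type,
        },
    )


# Hypothetical values, for illustration only.
request = build_ingestion_request(
    endpoint="https://ingestion.example.invalid/data",
    token="my-ingestion-token",
    body=b"id,score\n1,5\n",
    content_type="text/csv",
)
print(request.get_method(), request.full_url)
```

Keeping the endpoint and the token as plain parameters mirrors how the data source exposes them, so the same function can serve any pair of data source and integration.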

Qualtrics

Second, let’s connect to Qualtrics to retrieve the information we need.

Log in to your Qualtrics system and go to Account Settings, then to Qualtrics IDs.

Here you can retrieve the following parameters:

- Datacenter ID

- API authentication parameters

- Token

- User ID

- Survey ID
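As a rough sketch of how these parameters fit together, assuming the public Qualtrics v3 REST API (where the data center ID forms part of the host name and the token travels in the `X-API-TOKEN` header), a request that starts a CSV export of survey responses could be prepared as follows. The function name and the sample values are hypothetical, and nothing is sent over the network.

```python
import json
import urllib.request


def build_export_request(datacenter_id: str, api_token: str,
                         survey_id: str) -> urllib.request.Request:
    """Prepare, but do not send, a request that starts a response export."""
    url = (f"https://{datacenter_id}.qualtrics.com"
           f"/API/v3/surveys/{survey_id}/export-responses")
    body = json.dumps({"format": "csv"}).encode("utf-8")
    return urllib.request.Request(
        url=url,
        data=body,
        method="POST",
        headers={
            "X-API-TOKEN": api_token,  # token from Account Settings, Qualtrics IDs
            "Content-Type": "application/json",
        },
    )


# Hypothetical IDs, for illustration only.
request = build_export_request("fra1", "my-qualtrics-token", "SV_0000000000")
print(request.full_url)
```

In the Qualtrics API, this call only starts the export. The file itself is fetched with follow-up requests once the export has finished.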

SAP Data Intelligence pipeline

Last, let’s create a data pipeline in SAP Data Intelligence Cloud.

In this GitHub repository you can find a ready-to-use SAP Data Intelligence graph, with a connector to Qualtrics and a connector to SAP Signavio Process Intelligence.

Download the 3 folders that you can find in the repository and archive the content of each one into its own zip file, so that the 3 zip files can be imported as solutions into your SAP Data Intelligence tenant. Do not archive the folders themselves: for each folder, select its content and “add to archive”.
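If you prefer to script this step, the following Python sketch zips the content of a folder so that the files sit at the root of the archive rather than inside a top-level folder. The function name and the folder names in the usage example are hypothetical, and the zip file must be written outside the folder being archived.

```python
import zipfile
from pathlib import Path


def archive_folder_contents(folder: Path, zip_path: Path) -> list[str]:
    """Zip the content of `folder` with paths relative to the folder itself."""
    names = []
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in sorted(folder.rglob("*")):
            if path.is_file():
                # Relative name: the folder itself is not part of the archive.
                arcname = path.relative_to(folder).as_posix()
                archive.write(path, arcname)
                names.append(arcname)
    return names


if __name__ == "__main__":
    # Hypothetical local folder names; replace with the downloaded folders.
    for name in ["folder_1", "folder_2", "folder_3"]:
        source = Path(name)
        if source.is_dir():
            print(archive_folder_contents(source, Path(f"{name}.zip")))
```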

To import a solution into SAP Data Intelligence, open System Management, go to Files, click on the '+' symbol, select "Import Solution" and choose your zip file.

In SAP Data Intelligence, create an OPENAPI connection to Qualtrics:

- Host: qualtrics.com

- Authentication Type: Basic

- Username: the Qualtrics data center ID (can be found under your Qualtrics User, Account Settings, Qualtrics IDs)

- Password: the Qualtrics token (can be found under your Qualtrics User, Account Settings, Qualtrics IDs)

In SAP Data Intelligence, create an OPENAPI connection to SAP Signavio:

- Host: spi-etl-ingestion.eu-prod-cloud-os-eu.suite-saas-prod.signav.io (note: the API endpoint URL of the Ingestion API will change soon)

- Authentication Type: Basic

- Username: not relevant; you can enter "signavio"

- Password: the Ingestion API token (can be found in the Ingestion API data source)

In SAP Data Intelligence, you can open the Modeler and add the imported graph ("Qualtrics to SAP Signavio").

Then configure the graph:

- In the Qualtrics operator, add (1) the connection, and (2) the Qualtrics Survey ID

- In the SAP Signavio operator, add (1) the connection, and (2) the name of the target table to be pushed to SAP Signavio Process Intelligence

You can now save and run the pipeline.

Once its status is “completed”, you can go to the SAP Signavio Process Intelligence Ingestion API integration and check the logs.

A new execution log will appear with the status “Running”. After a few seconds the status will change to “Done”, and you can check in the Tables tab that a new table has indeed been uploaded, with the name provided in the second Python operator of the SAP Data Intelligence pipeline.

The data is now ready to be used for process mining!

Additional step: Add Experience Data to your Data Model!

As stated above, the whole idea started with the business requirement to add Qualtrics data to the Incident-to-Resolution process, to enrich operational data with the customer experience perspective.

To achieve that, you can create a new data model with ServiceNow as the source system.

Select the available Incident-to-Resolution transformation template, as an accelerator to transform raw data into an event log with a series of preconfigured SQL scripts.

Select New Integration and connect to an existing ServiceNow data source.

With the new Process Data Management (ETL 2.0) interface, which was released in June 2022, you can now see the whole data pipeline, spanning connection, integration, and transformation. You can also add the target Process Intelligence process.

Click on “+ Data source and integration” and select your Ingestion API data source. It will be added to the data pipeline.

Now you can start changing the existing SQL scripts to combine operational data with experience data coming from Qualtrics. For testing purposes, you can edit the Transformation block by adding a new “Qualtrics” business object.

At this point you can add a new event collector with a simple SQL script to preview the survey data that has been extracted from Qualtrics and pushed to the Ingestion API integration through the SAP Data Intelligence data pipeline.
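To illustrate the kind of combination such a script performs, here is a small self-contained sketch that uses Python's built-in `sqlite3` module instead of the SQL editor in SAP Signavio Process Intelligence. The table names, column names and sample rows are hypothetical; the real ones depend on your ServiceNow template and on your survey. The SQL dialect of the product may also differ from SQLite.

```python
import sqlite3


def preview_joined_rows() -> list:
    """Join hypothetical incident rows with hypothetical survey responses."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE incident (number TEXT PRIMARY KEY, state TEXT);
        CREATE TABLE qualtrics_survey (
            response_id TEXT PRIMARY KEY,
            incident_number TEXT,
            satisfaction INTEGER
        );
        INSERT INTO incident VALUES ('INC001', 'Closed'), ('INC002', 'Closed');
        INSERT INTO qualtrics_survey VALUES ('R_1', 'INC001', 4);
    """)
    # LEFT JOIN keeps incidents for which no survey response exists.
    rows = conn.execute("""
        SELECT i.number, i.state, s.satisfaction
        FROM incident AS i
        LEFT JOIN qualtrics_survey AS s ON s.incident_number = i.number
        ORDER BY i.number
    """).fetchall()
    conn.close()
    return rows


print(preview_joined_rows())
```

A left join is used here so that incidents without feedback are not silently dropped from the event log.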

Qualtrics survey data is now ready to be combined with ServiceNow data to create new valuable insights!

Further steps

As a summary, in this blog post I have covered:

- How to create and use an Ingestion API data source to push external data into SAP Signavio Process Intelligence

- Which information you need to gather from your Qualtrics system in order to extract data from a survey, and how you can retrieve it

- How to connect SAP Data Intelligence Cloud to Qualtrics and then create a data pipeline with two operators that, respectively:

- Connect to Qualtrics and extract survey data

- Push the data to SAP Signavio Process Intelligence via Ingestion API

Follow the SAP Signavio Community and the SAP Data Intelligence Community for similar content, peer-to-peer networking and knowledge sharing.

Appendix

To learn more about the Ingestion API, you can check these additional resources:

- Documentation on the Ingestion API connector:

- How to upload data using the Ingestion API:

- How to set up data ingestion:

- How to manage the ingestion API access token:

- How to use Ingestion API with code examples

In this GitHub repository you can find the data pipeline and the two Python operators to be downloaded and imported into your SAP Data Intelligence tenant.
