- Lessons learned implementing an Event-Driven Cloud...

raeijpe


04-04-2022

12:10 PM

My company was challenged when we were asked to support a client's integration strategy to connect S/4HANA public cloud with their production plant equipment. The client planned to replace their Microsoft ERP application with S/4HANA Cloud, with minimal impact on the existing connected systems.


S/4HANA supports the selling, planning, purchasing, and invoicing processes, as well as the master data for products and customers. Other applications cover the bill of material based on a recipe for (semi-)finished products, the control of the production process, quality checks, and the required transport documents. We needed to integrate these different systems to support the production process, which resulted in many integration flows that exchange data.

With S/4HANA on-premises and 'classic' ERP systems, we mostly fall back on the traditional way of integrating, which includes modifying the backend ERP. In S/4HANA Cloud, however, interfering with existing processes is limited to the extension and integration points provided by SAP. Another challenge was the client's wish to identify any delay that the integration with their ERP system causes in the production process, which was an issue in their 'old' running process.

The challenge

We reframed our challenge as finding an architecture that integrates all systems, cloud and on-premises, so that they work as one integrated application in near real time. We called it the Event-Driven Cloud Integration Architecture.

We knew that SAP S/4HANA supports events. By choosing SAP Event Mesh, SAP Cloud Integration, SAP ALM, and SAP HANA Cloud on SAP BTP, we had all the pieces we needed to implement our event-driven architecture for integrated systems.

Technology alone was not enough for a successful implementation. We also needed an implementation strategy and guidelines, which wasn't as easy as it sounds. The SAP API hub provides information about the S/4HANA events but does not explain how to integrate them with SAP Cloud Integration. The Discover section of SAP Cloud Integration didn't provide any guidelines or examples connected to S/4HANA events either. Nor did we find an end-to-end implementation guide from SAP for an Event-Driven Cloud Integration Architecture.

During our preparation for the implementation phase, we found out why, and the answer is simple. S/4HANA events are raised at such a high level that you don't know in detail what has happened. The reasons for an event and the possible ways to handle it are so diverse that they differ for every industry. In most cases, an S/4HANA event should be followed by an API call to get the details, and only that detailed information can determine the relevance for the receiving system. Even when an event is raised, it can have different meanings for S/4HANA and for the other connected systems. In other words, a change of a record in one system can result in the creation of a record in another system, or the change can be ignored when it is not relevant to the receiving system.

Let's explain this with an example from our project. S/4HANA raises a create product event when we create a material in the SAP system. But for one of our interfaces, we are only interested in finished products. To find out whether the raised product event belongs to a finished product, we first need to call an SAP API for the product details and use this information to filter out the events that are unrelated to finished products. And when we change materials in S/4HANA, we have to check not only whether it is a finished product but also whether the changed fields are relevant for our interface. When none of the fields relevant to our interface have changed, the raised S/4HANA product event is irrelevant and can be ignored. So we found out that an event in S/4HANA does not mean the same thing to the connected systems.
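The relevance check described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the logic of our actual application: the material type `FERT` for finished products, the field names in `RELEVANT_FIELDS`, and the idea of comparing against a previously stored snapshot of the product are all assumptions made for the example.

```python
from typing import Mapping, Optional

# Assumption: finished products carry the material type "FERT".
FINISHED_PRODUCT_TYPE = "FERT"

# Hypothetical list of fields that matter to one receiving interface.
RELEVANT_FIELDS = ("BaseUnit", "GrossWeight", "ProductGroup")


def is_relevant(
    event_type: str,
    product: Mapping[str, object],
    previous: Optional[Mapping[str, object]],
) -> bool:
    """Decide whether a raised product event matters to the interface.

    `product` holds the details fetched from the product API after the event,
    `previous` the last snapshot we stored for this product (None if unknown).
    """
    # Events for anything other than finished products are ignored.
    if product.get("ProductType") != FINISHED_PRODUCT_TYPE:
        return False
    # A create event, or a product we have never seen, is always relevant.
    if event_type == "created" or previous is None:
        return True
    # A change event is relevant only if an interface field actually changed.
    return any(product.get(f) != previous.get(f) for f in RELEVANT_FIELDS)
```

In this sketch, a change to a field outside `RELEVANT_FIELDS` returns `False`, so the event is dropped before any receiving system is called.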

Common Data Events

To overcome this problem, we decided to introduce our Common Data Events (CDE) concept. In this concept, we define a CDE as an event together with only the data that is relevant to our organization. In this CDE concept, we also split our integration into two phases:

- the enrich CDE phase

- the dispatch CDE phase

In the first phase, we transform an application event into an application-independent common data event, enrich it with the applicable data based on a common data model (CDM) specific to our organization, and raise it. In the second phase, we react to this CDE and process the event.

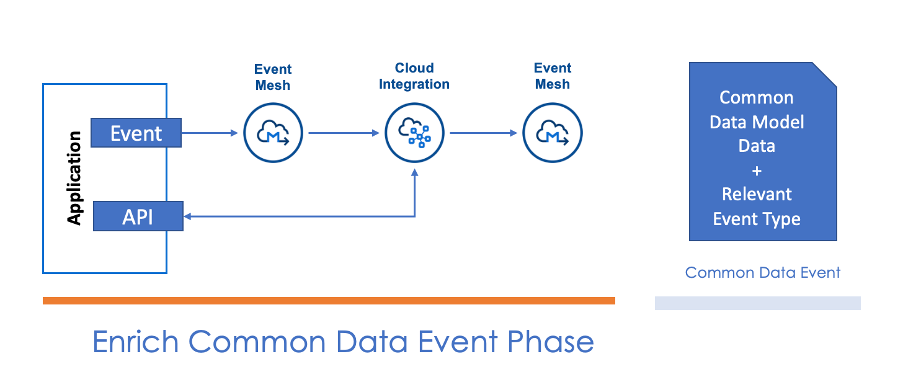
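To make the idea of a CDE more concrete, here is a minimal Python sketch of what such an event could look like. The class `CommonDataEvent`, its fields, the CDM field names (`productId`, `type`, `baseUnit`), and the dotted event type string are assumptions chosen for illustration; they are not the model we used in the project.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, Mapping


@dataclass(frozen=True)
class CommonDataEvent:
    """Hypothetical shape of a CDE: what happened, to what, plus CDM data."""

    entity: str                 # business entity in the common data model
    action: str                 # e.g. "created" or "changed"
    key: str                    # business key of the entity
    data: Dict[str, Any] = field(default_factory=dict)

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


def to_cde(app_event: Mapping[str, Any], details: Mapping[str, Any]) -> CommonDataEvent:
    """Turn an application event plus fetched details into a CDE.

    Assumption: the event type is a dotted string whose last segments are
    <Entity>.<Action>.<version>, for example "...Product.Created.v1".
    """
    parts = app_event["type"].split(".")
    entity, action = parts[-3], parts[-2].lower()
    # Copy only the fields our (hypothetical) CDM defines for a product.
    cdm_data = {
        "productId": details["Product"],
        "type": details["ProductType"],
        "baseUnit": details["BaseUnit"],
    }
    return CommonDataEvent(entity=entity, action=action, key=details["Product"], data=cdm_data)
```

The point of the sketch is that the receiver only ever sees the CDM field names, never the structure of the source application.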

Enrich CDE phase

In our project, we designed Common Data Events for all our entities by finding all integration scenarios that used the same S/4HANA events and identifying the data they needed.

We ensure data consistency by collecting all necessary raised S/4HANA events in an SAP Event Mesh queue. A webhook on this queue starts a Cloud Integration flow, which enriches the event with detailed data by calling one or multiple APIs. The flow also filters events on their relevance. Only enriched and relevant events are raised again as Common Data Events on the SAP Event Mesh for further processing.

We developed our own SAP CAP application to support this process. It checks the relevance and meaning of S/4HANA events, which limits both the processing needed in the SAP integration flows and the irrelevant traffic between SAP Cloud Integration and external systems. This app was the missing glue for our event-driven integration solution.
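The enrich phase boils down to a consume, enrich, filter, republish loop. The following Python sketch shows only that control flow; it is not a Cloud Integration flow, and the function `enrich_events` as well as the injected callables `fetch_details`, `is_relevant`, and `publish` are hypothetical stand-ins for the API call, the relevance check, and the Event Mesh topic. The event shape (`type` and `data["Product"]`) is also an assumption.

```python
from typing import Any, Callable, Dict, Iterable, Optional

Event = Dict[str, Any]


def enrich_events(
    raw_events: Iterable[Event],
    fetch_details: Callable[[str], Optional[Dict[str, Any]]],
    is_relevant: Callable[[Event, Dict[str, Any]], bool],
    publish: Callable[[Event], None],
) -> int:
    """Enrich raw application events and republish the relevant ones.

    Returns the number of Common Data Events that were published.
    """
    published = 0
    for event in raw_events:
        key = event["data"]["Product"]
        # Step 1: the raw event only carries a key, so fetch the details.
        details = fetch_details(key)
        # Step 2: drop events whose object is gone or not relevant.
        if details is None or not is_relevant(event, details):
            continue
        # Step 3: raise the enriched event again as a Common Data Event.
        action = event["type"].split(".")[-2].lower()
        publish({"entity": "Product", "action": action, "key": key, "data": details})
        published += 1
    return published
```

Because the dependencies are passed in, the same loop can be exercised with an in-memory dictionary and a list instead of real APIs and queues.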

Event-Driven Integration Architecture

Now that we have implemented this Enrich Common Data Event phase, we have become flexible, because all relevant data belonging to our event action type is available in an Event Mesh queue. Next, we can dispatch Common Data Events from the Event Mesh queue to multiple receiving applications, using push or pull, in both notification and integration scenarios.

In notification scenarios, applications handle the Common Data Event by themselves. These are primarily custom-built applications, such as SAP CAP applications, or other messaging systems, such as Azure Event Grid or Amazon Simple Queue Service, which take care of the follow-up processes.

During our project, we focused on integration scenarios that fulfill a data exchange process between applications. Based on the scenario, we need to translate the CDE into a predefined action, primarily an API call or the creation of a flat file. We map the CDM data to the structure of the receiving system and map the content to the expected values. Again, we use our SAP CAP application to determine the needed action in the receiving system. This helps us limit the number of calls (license costs) and the modifications of the receiving systems (development and testing costs).
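The dispatch step has two parts: mapping structure and values, and deciding which action the receiver needs. The Python sketch below illustrates both under assumptions: the mapping tables `FIELD_MAP` and `VALUE_MAP`, the receiver field names, and the `exists_in_receiver` flag are hypothetical and stand in for whatever the receiving system actually requires.

```python
from typing import Any, Dict, Mapping

# Hypothetical structure mapping: CDM field name -> receiver field name.
FIELD_MAP = {"productId": "ItemNo", "baseUnit": "Unit"}

# Hypothetical value mapping per receiver field: CDM value -> receiver value.
VALUE_MAP = {"Unit": {"KG": "kg", "PC": "pcs"}}


def map_to_receiver(cdm_data: Mapping[str, Any]) -> Dict[str, Any]:
    """Map CDM data to the receiver's structure and expected values."""
    result: Dict[str, Any] = {}
    for cdm_field, target_field in FIELD_MAP.items():
        if cdm_field not in cdm_data:
            continue
        value = cdm_data[cdm_field]
        value_table = VALUE_MAP.get(target_field)
        # Translate only string values that have an entry; pass others through.
        if value_table is not None and isinstance(value, str):
            value = value_table.get(value, value)
        result[target_field] = value
    return result


def choose_action(cde_action: str, exists_in_receiver: bool) -> str:
    """Decide the receiver-side action; a source change can be a receiver create."""
    if cde_action == "deleted":
        return "delete" if exists_in_receiver else "ignore"
    return "update" if exists_in_receiver else "create"
```

For example, a `changed` CDE for a product the receiver has never seen yields `create`, which matches the observation that the same event can mean different things in different systems.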

Conclusion

Based on our experiences with our client, we can conclude that SAP S/4HANA and SAP BTP Integration Suite are mature enough to run an Event-Driven Cloud Integration Architecture. But it also brings challenges for our client. Every action in a related S/4HANA transaction results in a small message, which should be handled in real time and in the proper context. Together with our client, we had to rethink how we do the implementation and how they can support integration processes in the future, because this is entirely different from a traditional integration.

But it brings much more value to our clients. They can now choose best-of-breed software and custom-developed applications, which run like one integrated application without boundaries. Our client can now run and scale up their production process with higher quality, in real time, and without the limitations of the applications used. It also brings them many advantages in terms of cost, risk, implementation time, and support:

- The CDE concept limits the number of calls to backend systems because the backend is called only once, when the event data is enriched.

- It improves security because receiver applications never call S/4HANA APIs directly, and only relevant CDEs with limited data are available to the receiver systems.

- S/4HANA events and APIs need to be configured only once and not for every integration flow

- The CDE concept is agnostic and also fits non-S/4HANA events, making it easy to attach new applications and extend the integrated solution.

- With the help of our custom-built application, the events depend on the relevant data and not on the predefined high-level event type of S/4HANA. This simplifies the design of integration flows (development and support costs) and limits the number of calls to the receiving system (license costs).

- The introduction of the two phases in our CDE concept decouples the event raising part (S/4HANA integration) from the event handling part (the integration flow connecting the receiver system). This makes adding new and changing existing integration flows easy without interfering with other flows or the event-raising part.

We would like to hear from you about our Common Data Events concept and the chosen Event-Driven Cloud Integration Architecture from SAP. As you can see, we learned a lot of lessons. There is much more to tell and explain, but I hope this gives you a first insight into the value of an Event-Driven Cloud Integration Architecture.
