- Day 5 | Skybuffer Community Chatbot | How to Creat...

Siarhei

03-19-2021

10:53 AM

Business Challenge

Companies often start with a simple “Questions and Answers” chatbot. As they keep improving the chatbot’s AI skills, they run into a challenge: the “Questions and Answers” skill needs a cascade structure that captures the user’s input and, taking it into account, provides a clarifying answer. Let us assume this has to work for a multilingual chatbot that, to save costs, is properly trained in English only but is still expected to give high-quality (not automatically translated) replies in other languages by simply taking them from the chatbot glossary.

Solution

Skybuffer’s offering provides “Questions and Answers” (QnA) mechanisms for creating and configuring multilingual skills, with an additional get-skills structure that captures user data or collects more information in order to provide the best answer. All you need to do is get acquainted with the previous posts about our open-source AI content developed on the SAP Conversational AI platform and learn how to use a template skills group to enrich your chatbot with a new advanced QnA skill quickly and efficiently.

By following the steps in this guide, you will be able to take a template skills group from our open-source SAP Conversational AI content and create an advanced QnA chatbot based on it.

Note: we are using the Skybuffer open-source community version of the chatbot to develop an advanced QnA skill on top of it. You can always get access to this bot from our Day 1 post or by navigating directly to:

Organization: https://cai.tools.sap/skybuffer-community

Chatbot: https://cai.tools.sap/skybuffer-community/skybuffer-foundation-content

We will start with the Function skills group. First of all, we would like to point out that we use the Skybuffer-invented “object-oriented conversational AI” (OO-CAI) implementation approach. This OO-CAI approach was used to develop the community version of the Conversational AI content.

Consider Skills Group as a Class Entity

We use skill groups to encapsulate the set of skills that together execute one business step (function). It may be either a complex QnA skill or a skill that requests data from the backend system (via webhook or API integration). So the skill group acts like a class in object-oriented programming.

Consider Skills in the Skills Group as Methods of Class

Once we have an analogue of the class, we need an analogue of the interface, so we decided that each skill group can have a maximum of 4 skills. It can have fewer, but not more than 4, because each skill in the group plays a specific role in the consistent encapsulation of the business step. The skill group can consist of:

Trigger skill – this skill is used as a “main door” to execute a business step, so that is an entry point that can be called by the intent, entity or memory parameters values. We always use -trigger postfix for those skills.

Input skill – this skill is used to capture a user’s input. We separate user’s input skill because we can process it differently: either we allow jumping out of the input skill to another skill group (for example, when we ask about the user’s name), or we capture any user’s input and ignore intents/entities that are triggered by utterance (for example, when we need to capture the comment for the leave request). We always use -input postfix for those skills.

Function skill – this skill is used to execute an external function, so we use it to either call the webhook to the backend system, or API of the cloud service. We always use -function postfix for those skills.

Fallback skill – we also call this skill a “business fallback”; it is a “back door” by nature. We use it to try to bring the user back to the skill group (business step) when the user, by chance or on purpose, leaves it. For example, the user jumped from one skill group to another and completed that business step, so we return them to the skill they requested before. In this case, as soon as SAP Conversational AI routes the user to the general fallback skill (the grey one), we execute NLP once again from the general fallback skill and use the chance to return to the skills group via the business fallback skill. We always use -fallback postfix for those skills.
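To make the convention above easier to picture, here is a minimal Python sketch that checks a skill group against it. This is only an illustration of the naming rules, not part of SAP Conversational AI or of the Skybuffer content; the function names and the `my-qna-*` skill names are our own hypothetical examples.

```python
from typing import List, Optional

# The four roles a skill group may contain, identified by name postfix.
ROLE_POSTFIXES = ("-trigger", "-input", "-function", "-fallback")


def role_of(skill_name: str) -> Optional[str]:
    """Return the role postfix of a skill name, or None if it has none."""
    for postfix in ROLE_POSTFIXES:
        if skill_name.endswith(postfix):
            return postfix
    return None


def validate_group(skill_names: List[str]) -> List[str]:
    """Return a list of convention violations for one skill group."""
    problems = []
    seen = set()
    for name in skill_names:
        role = role_of(name)
        if role is None:
            problems.append(f"{name}: missing role postfix")
        elif role in seen:
            problems.append(f"{name}: duplicate {role} skill")
        else:
            seen.add(role)
    if len(skill_names) > len(ROLE_POSTFIXES):
        problems.append("group has more than 4 skills")
    return problems


# Hypothetical group that follows the convention: no problems reported.
group = ["my-qna-trigger", "my-qna-input", "my-qna-function", "my-qna-fallback"]
print(validate_group(group))
```

A group may contain fewer than four skills, so the check only reports missing postfixes, duplicated roles and groups that are too large.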

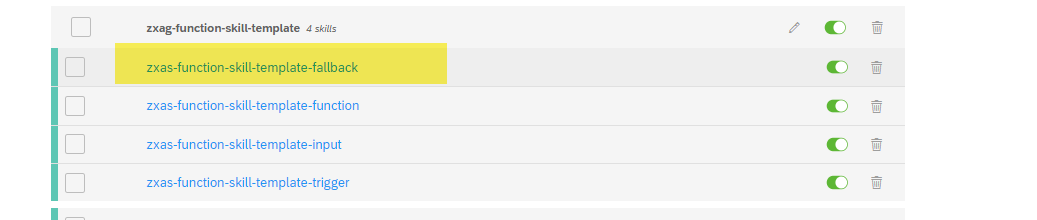

Step 1: Find zxas-function-skill-template-fallback business fallback skill of the Function skills group in your forked version of Skybuffer Foundation Content chatbot:

Step 2: Fork this skill into your bot, rename it and add the skill to the group. How to do this was shown in the Day 3 blog post.

Step 3: Create an Intent for your QnA skill.

Step 4: Start adapting the skills in the Function skills group. Go to the Build tab and select the -trigger skill (explained above) from the newly created skills group. Go to the Trigger section of the skill and replace the template intent with the new skill intent you created in the previous step.

Step 5: For Function skills groups, the trigger section has an additional block with the rt_scenario_active memory parameter. This runtime memory parameter is used to activate a new scenario in the context of the dialogue. A key concept of the Skybuffer open-source AI content is that each skill group can be steered via a memory parameter, so you can dynamically disable a skill in a chatbot channel where you do not want it to be executable.

Note: Please remember that the memory parameter rt_scenario_active must be unset at the logical end of the scenario.

Replace the technical name of the template scenario that is mapped to rt_scenario_active memory parameter with the technical name of your own scenario (feel free to invent it):

Note: We use camel case for the value of the rt_scenario_active memory parameter to make it easier to read.

Step 6: Now your trigger condition of the -trigger skill is ready and should look as follows:

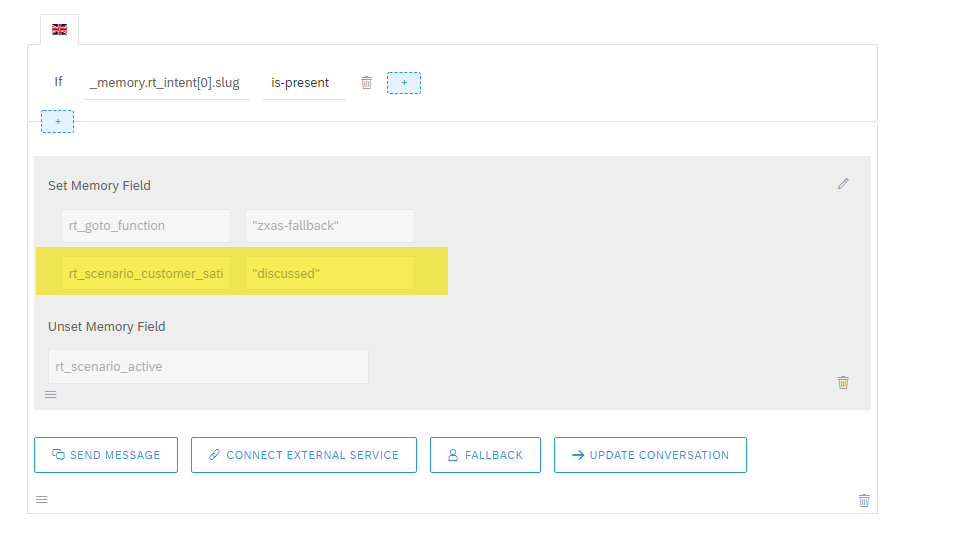

Step 7: Go to the Actions section. Switch to the edit mode in the first logical block. Replace the value of the memory parameter rt_return_to_function with the name of your -trigger skill:

Step 8: Replace the name of the memory parameter rt_scenario_<scenario name> with the name of your scenario (highlighted in yellow in the picture below).

Note: Our concept places no restriction on the name of this memory parameter. However, to simplify debugging, we always try to keep the name in rt_scenario_<scenario name> identical to the value saved in the memory parameter rt_scenario_active.

According to the Skybuffer development concept for the open-source AI content, the memory parameter rt_scenario_<scenario name> is activated at the beginning of the skillset and deactivated at the end of the skillset.

Note (important information about the central fallback skill): In our Skybuffer AI development methodology, we stick to two possible values for the memory parameter rt_scenario_<scenario name>: “active” and “discussed”. rt_scenario_<scenario name> is used as a trigger condition in the -trigger and -fallback (business fallback) skills of the skill group to go back to the main skill from the central fallback skill (the grey-coloured fallback). Once the chatbot lands in the central fallback skill, we call the SAP Conversational AI dialogue API to re-trigger NLP and try to return to the previously active scenario that has not been fully processed or cancelled by the user. This “return to the skill” action is therefore controlled by the memory parameter rt_scenario_<scenario name> having the value “active”.
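The lifecycle of these two memory parameters can be sketched in a few lines of Python. This is a simplified model that treats the conversation memory as a plain dictionary; the helper names and the scenario name `supportQuality` are hypothetical, and the real behaviour is configured in the skill builder rather than coded like this.

```python
def start_scenario(memory: dict, scenario: str) -> None:
    """Mark a scenario as the running one at the beginning of the skillset."""
    memory["rt_scenario_active"] = scenario
    memory[f"rt_scenario_{scenario}"] = "active"


def finish_scenario(memory: dict, scenario: str) -> None:
    """Mark a scenario as done and unset rt_scenario_active at its logical end."""
    memory[f"rt_scenario_{scenario}"] = "discussed"
    memory.pop("rt_scenario_active", None)


def should_return_to_scenario(memory: dict, scenario: str) -> bool:
    """Condition a business fallback could check after NLP is re-triggered."""
    return memory.get(f"rt_scenario_{scenario}") == "active"


memory = {}
start_scenario(memory, "supportQuality")    # hypothetical scenario name
print(should_return_to_scenario(memory, "supportQuality"))
finish_scenario(memory, "supportQuality")
print(should_return_to_scenario(memory, "supportQuality"))
```

While the scenario is still “active”, the business fallback is allowed to bring the user back; once it is “discussed”, the check fails and the central fallback proceeds as usual.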

Step 9: Press the Save button.

Note: rt_return_to_function memory parameter is used in Skybuffer Hybrid Chats solution to configure categorization (please, refer to Day 2 blog post)

Step 10: Now let us configure the next action blocks. We need to replace the text of [Your question in English] in two logical blocks of the skill.

Step 10.1 (English block) In the text section you need to switch to the Edit mode, input the text of the reply and save your entries.

Step 10.2 (Non-English block) Here we need to modify rt_source memory parameter value.

Note: to translate more than one phrase at a time, we use special conditions and translate arrays of phrases. To translate the array, activate the memory parameter rt_list_captions before calling /translate webhook.

Array in rt_source memory parameter should be in square brackets [], and each phrase should be put in quotes and separated with commas.
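As a small illustration of the array format, the Python sketch below builds such a string from a list of phrases. The phrases and the helper name are hypothetical, and we assume here that JSON-style quoting is acceptable for the value of rt_source; please check the result against your own /translate setup.

```python
import json
from typing import List


def build_rt_source(phrases: List[str]) -> str:
    """Serialize phrases as a bracketed, quoted, comma-separated array."""
    # ensure_ascii=False keeps non-ASCII characters readable in the result.
    return json.dumps(phrases, ensure_ascii=False)


# Hypothetical phrases to translate in one call.
print(build_rt_source(["How can I help you?", "Was this helpful?"]))
# prints ["How can I help you?", "Was this helpful?"]
```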

Note: Customize your bot replies translation using the Hybrid Chats - Chatbot Vocabulary application (find more details in Day 2 blog post)

Step 11: To display the translated array, we use a specific memory parameter, rt_message_captions. This is an array, so to show an element you need to use the structure {{memory.rt_message_captions.[n]}}, where n is the index of the translated phrase.

Note: please keep in mind that array numbering starts at 0.
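To show how the zero-based index maps to phrases, here is a small Python imitation of the placeholder substitution. It is not the template engine of the platform, only a local sketch; the translated captions are made-up sample values.

```python
import re
from typing import List

# Matches placeholders such as {{memory.rt_message_captions.[0]}}
PLACEHOLDER = re.compile(r"\{\{memory\.rt_message_captions\.\[(\d+)\]\}\}")


def render(template: str, captions: List[str]) -> str:
    """Replace each placeholder with the caption at its zero-based index."""
    def substitute(match):
        return captions[int(match.group(1))]
    return PLACEHOLDER.sub(substitute, template)


captions = ["Wie kann ich helfen?", "War das hilfreich?"]  # sample values
message = "{{memory.rt_message_captions.[0]}} {{memory.rt_message_captions.[1]}}"
print(render(message, captions))
# prints Wie kann ich helfen? War das hilfreich?
```

The first phrase put into rt_source is therefore shown with index 0, the second with index 1, and so on.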

Step 12: Let us create a skill (the second-level skills group) that captures the user’s answer and stores it in a memory variable. This gives us the embedded QnA layer of our advanced QnA skill.

Return to the Build tab. Find zxas-get-template-trigger skill in your forked version of Skybuffer Foundation Content chatbot:

Note: we use so-called “get skills”, which we usually do not call directly, to model reusable actions in the chatbot business steps, for example “get name”, “get email”, “get comment”, etc.

Fork this skill into your bot, rename it and add the skill to the group. This way you follow the object-oriented conversational AI (OO-CAI) implementation approach and keep all skills encapsulated in the skill group, which simplifies development and keeps your chatbot skills better organized.

Step 13: Start adapting the get skill you have just forked. Go to the Build tab and select the Triggers section of the new skill. Replace the template rt_scenario_active value with the scenario value that will be used in this skill. We have already explained the technical purpose of the memory parameter rt_scenario_active and what we usually set as its value, so we will not repeat it here. Please keep using camel case in the memory parameter value to simplify debugging.

Step 14: Go to the Actions section. This is where we capture the user’s input. Technically, we can capture all data types, such as numbers, dates and times, or locations, using entities; we can also capture the whole user input or set parameters according to the recognized sentiment. In our case we will use sentiment, because our question has emotional answers.

So, in the conditions section of our action, we check that rt_sentiment is in the set of values negative, vnegative (the user does not want our support), and we also check that the target memory parameter is absent.

As a result of the sentiment analysis we set, for example, the memory parameter rt_assistance_level to “low”. We can later use it in the main layer of the advanced QnA skill to provide an answer according to the user’s input captured in the get-skill.

Note: please remember to unset memory parameter rt_scenario_active.

Step 15: Add a block with the opposite condition: the sentiment is not in the set of values negative, vnegative.

Note: the memory parameter rt_return_to_function is set on the main (root) skills layer of the advanced QnA skills and is used for dynamic redirection to the business scenario skill after the user’s input in the get-skill is captured.
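The two condition blocks from Steps 14 and 15 can be summarized with the following Python sketch. The memory is modelled as a dictionary and the function name is hypothetical. The source only names the value “low” for the negative case, so the value "high" used for the opposite block is purely our assumption for illustration.

```python
NEGATIVE_SENTIMENTS = {"negative", "vnegative"}


def capture_assistance_level(memory: dict) -> dict:
    """Set rt_assistance_level from rt_sentiment if it is not set yet."""
    if "rt_assistance_level" in memory:
        return memory  # target parameter already present, nothing to capture
    if memory.get("rt_sentiment") in NEGATIVE_SENTIMENTS:
        memory["rt_assistance_level"] = "low"
    else:
        # Assumption: the blog post does not state the value for this block.
        memory["rt_assistance_level"] = "high"
    # The get-skill has finished, so rt_scenario_active is unset.
    memory.pop("rt_scenario_active", None)
    return memory


memory = {"rt_sentiment": "vnegative", "rt_scenario_active": "getAssistanceLevel"}
print(capture_assistance_level(memory))
```

In the real skill, both blocks are followed by the redirection that uses rt_return_to_function, as described in the note above.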

Step 16: We have configured the get skill (the embedded layer of the advanced QnA skill), and now we can return to the Function skills group (the root layer of our advanced QnA skill).

Go to the Build tab and select the -trigger function skill (yes, the one with the -trigger postfix at the end of the skill name).

Step 17: Go to the previously configured actions and replace the template parameters with the details of the newly created get skill.

Note: let us explain the meaning of those memory parameters according to our AI skills development concept:

rt_scenario_active - runtime memory parameter used to activate a new scenario in the dialogue. We use the same value of this memory parameter in all the skills of the skills group, so we can tell which AI scenario (skills group) is currently active. This is useful, for example, for mapping an AI skill to the backend API that is supposed to return the value for the skills group. Please remember that rt_scenario_active should be unset at the logical end of the scenario.

rt_skill - memory parameter that records the current skill and is checked in the trigger section of the -function skill (yes, the one that has -function as a postfix of the skill name) of the skills group. This is very useful when we deal with cascades of AI skill groups.

rt_goto_function - memory parameter that records the target skill used for dynamic redirection to the get-skill, the central fallback skill, or another business skill outside the current skill group. The main purpose of dynamic redirection between skills from different skills groups is to break the link between the groups, so that forking the skills group you want does not also fork other skills groups through their relationships.

Step 18: Repeat the same actions for the non-English action block of the skill.

Note: You can add as many embedded QnA layers (skills groups of get skills) as your advanced QnA skill requires, so you can simply create new skills groups of get skills to collect specific user inputs. We also recommend using our standard open-source get skills to collect general user data such as name, phone number, email, etc. You can locate the foundation get skills we provide in the open-source version of our AI content by skills group name, for example.

Step 19: As an example for this blog post, let us ask the user for additional information in case they are not satisfied with our support.

Add a condition block where rt_assistance_level is low and redirect to the get comment skill provided as part of the Skybuffer open-source AI content. This shows the value of the content and how quickly it can be used to capture the user’s input.

Step 20: Add the same block for non-English actions (replies). Please note that you should use the /translate webhook to get access to Hybrid Chats - Vocabulary (Fiori application), where you can store phrases translated into the language in which you want the chatbot to communicate with your users.

Note: you can always get access to Hybrid Chats demo tenant from our Day 1 post.

Step 21: Once we are in the -input skill (the one that has -input as a postfix in the skill’s name) and have captured the user’s input, we need to take care of the redirection to the -function skill.

(1) Check that the parameters needed for Function execution are present.

(2) Set:

rt_scenario_active as “<current business scenario id>”

rt_skill as “<current skill ID>” for check in -trigger of -function skill

(3) Redirect to the -function skill
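The three points above can be sketched in Python as follows. Again, the memory is modelled as a dictionary, and the function name, scenario id and skill names are hypothetical examples; in the chatbot these actions are configured in the Actions section of the skill.

```python
from typing import List, Optional


def prepare_function_redirect(
    memory: dict,
    required: List[str],
    scenario_id: str,
    current_skill: str,
    function_skill: str,
) -> Optional[str]:
    """Return the -function skill to redirect to, or None if input is missing."""
    # (1) Check that the parameters needed for Function execution are present.
    if any(name not in memory for name in required):
        return None
    # (2) Record the active scenario and the current skill for the trigger check.
    memory["rt_scenario_active"] = scenario_id
    memory["rt_skill"] = current_skill
    # (3) Redirect target.
    return function_skill


memory = {"rt_assistance_level": "low"}
target = prepare_function_redirect(
    memory, ["rt_assistance_level"], "supportQuality", "my-qna-input", "my-qna-function"
)
print(target, memory)
```

If a required parameter is still missing, the sketch returns None and leaves the memory untouched, which corresponds to staying in the -input skill.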

Step 22: Modify the -input skill. The purpose of the -input skill is to receive redirections from both outside and inside the skill group whenever some user input is expected. Open your -input skill, go to the Triggers section and correct the condition for the memory parameter rt_skill (the expected previous skill value) and the condition for the memory parameter rt_scenario_active (the name of the active scenario for the get-skill).

Step 23: Go to the Actions section and navigate to the last message block. This block is used to allow passing by the -input skill and triggering another scenario with the intent recognized from the user input utterance by the NLP engine:

Step 24: Set the current value of the memory parameter rt_scenario_<scenario_name> to “discussed”

Step 26: Navigate to the Actions section and perform the following actions, which are marked in the same order in the picture below:

(1) Check the memory parameter which we got in the get-skill.

(2) Replace the template answer

(3) Set the current scenario as discussed

(4) Unset the memory parameter

Before the activities execution:

After the activities execution:

Step 27: Configure the non-English block of the skill.

Step 28: Now repeat steps 26-27 for other values of the assistance levels memory parameter rt_assistance_level.

Step 29: Modify the business -fallback skill. Open your new -fallback skill, go to the Trigger section and replace the value of the memory parameter _memory.rt_intent[0].slug with the name of your new intent without the @ sign and hit <ENTER>:

Your fallback skill adjustment is now complete.

Step 30: Test and make sure that your new skill is ready to provide replies in any language! Please do not forget to adjust the Hybrid Chats – Vocabulary list of chatbot replies, so that you avoid automatic translation of the replies.

Conclusion

You have now completed the Day 5 guidelines and know how to build a support chatbot that can collect user data and additional information from users to provide more person-oriented, better-quality support. It takes only about 15-20 minutes to add -get and -function skills groups to the chatbot.

Generally speaking, after you go through Day 1, Day 2, Day 3, Day 4 and Day 5 and follow the guidelines, your chatbot will be able to:

Looks like it is ready to Go Live and support your Clients, Employees or Business Partners, what would you say?

P.S. You can also find the entire list of our blog posts under the links below:

Day 1 | Skybuffer Enterprise-Level Conversational AI Content Made Public for SAP Community Developme...

Day 2 | Skybuffer Community Chatbot | How to Customize Your Chatbot “get-help” skill

Day 3 | Skybuffer Community Chatbot | How to Create Multilingual Question-and-Answers Scenario Fast ...

Day 4 | Skybuffer Community Chatbot | How to Bring Your Own Bot to Hybrid Chats

Day 5 | Skybuffer Community Chatbot | How to Create Multilingual “Question and Answer” Complex Scena...

It is quite a frequent case when companies start with a simple “Questions and Answers” chatbot development. But moving ahead and continuously improving AI skills of the chatbot, they are faced with a challenge that “Questions and Answers” skill should have a cascade structure to capture the user’s input and, having considered it, to provide a clarifying answer. Let us assume it should work for a multi-lingual chatbot that is, for the cost-saving purpose, trained properly in English only, and is supposed to provide high-quality (not automatically translated) replies in other languages as well by simply taking replies from the chatbot glossary.

Solution

Skybuffer’s offering provides “Questions and Answers” (QnA) mechanisms of creation and configuration of your multilingual skills with additional get-skills structure to capture users’ data or collect more information in order to provide the best answer. So, what you need to do is just get acquainted with the previous posts about our open-source AI content developed on SAP Conversational AI platform and learn how to, using template skills’ group, enrich your chatbot with a new advanced QnA skill in a fast and efficient manner.

Following next steps in this guide, you will be able to take a template skill’s group from our open-source SAP Conversational AI content and create advanced level QnA chat bot based on it.

Note: we are using Skybuffer open-source community version of the chatbot to develop an advanced QnA skill on top of it. You can always get access to this bot from our Day 1 post or directly navigating to:

Organization: https://cai.tools.sap/skybuffer-community

Chatbot: https://cai.tools.sap/skybuffer-community/skybuffer-foundation-content

We will start with the Function skills group. First of all, we'd like to point out that we are using Skybuffer-invented “object-oriented conversational AI” implementation approach (OO-CAI). This OO-CAI approached has been used to develop the community version of the Conversational AI content.

Consider Skills Group as a Class Entity

We use skill groups to encapsulate the set of skills that are supposed to execute one business step (function). It may be either complex QnA skill or a skill that requests some data from the backend system (via webhook or API integration). So, the skill group acts as a class entity in the object-oriented programming.

Consider Skills in the Skills Group as Methods of Class

Once we have an analogue of the class, we need an analogue of the interface, so we invented that each skill group can have maximum 4 skills. It can have fewer, but not more than 4, because each skill in the group is supposed to play a specific role for the consistent encapsulation of the business step. The skill group can consist of:

Trigger skill – this skill is used as a “main door” to execute a business step, so that is an entry point that can be called by the intent, entity or memory parameters values. We always use -trigger postfix for those skills.

Input skill – this skill is used to capture a user’s input. We separate user’s input skill because we can process it differently: either we allow jumping out of the input skill to another skill group (for example, when we ask about the user’s name), or we capture any user’s input and ignore intents/entities that are triggered by utterance (for example, when we need to capture the comment for the leave request). We always use -input postfix for those skills.

Function skill – this skill is used to execute an external function, so we use it to either call the webhook to the backend system, or API of the cloud service. We always use -function postfix for those skills.

Fallback skill – this skill we also call a “business fallback” that is a “back door” by its nature. We use it to try to return the user back to the skill group (business step) in case the user – be it by chance or on purpose – leaves the business step (skill group). For example, user jumped from one skill group to another and executed that business step completely, so we return them back to the skill they requested before. In this case, as soon as the user is routed by SAP Conversational AI to the general fallback skill (the grey one), we execute NLP once again from the general fallback skill and use the chance to return to the skills group via business fallback skill. We always use -fallback postfix for those skills.

Step 1: Find zxas-function-skill-template-fallback business fallback skill of the Function skills group in your forked version of Skybuffer Foundation Content chatbot:

Step 2: Fork this skill into your bot, rename it and add the skill to the group. How to do it was already shown in Day 3 blog post.

Step 3: Create an Intent for your QnA skill.

Step 4: Start adapting the skills in the Function skills group. Go to the Build tab and select the -trigger skill (explained above) from the newly created skills group. Go to the Trigger section of the skill and replace the template intent with your brand-new skill intent you’ve created on the previous step.

Step 5: For the Function skills groups we have an additional block in the trigger section with rt_scenario_active memory parameter, this runtime memory parameter is used to activate a new scenario in the context of the dialogue. That is the key concept of Skybuffer open-source AI content that each skill group can be steered via memory parameter, so it is possible to dynamically disable a skill in a certain chatbot channel where you would not like the skill to be executable.

Note: Please remember that the memory parameter rt_scenario_active must be unset at the logical end of the scenario.

Replace the technical name of the template scenario that is mapped to rt_scenario_active memory parameter with the technical name of your own scenario (feel free to invent it):

Note: We use camel style for rt_scenario_active memory parameter value to make it easier to read.

Step 6: Now your trigger condition of the -trigger skill is ready and should look as follows:

Step 7: Go to the Actions section. Switch to the edit mode in the first logical block. Replace the value of the memory parameter rt_return_to_function with the name of your -trigger skill:

Step 8: Replace the name of the memory parameter rt_scenario_<scenario name> with the name of your scenario (highlighted in yellow in the picture below).

Note: There is no restriction in our concept for the name of this memory parameter, however, in order to simplify debugging, we always try to keep the same name of the memory parameter rt_scenario_<scenario name> and the value we saved in the memory parameter rt_scenario_active

According to Skybuffer development concept for the open-source AI content, the memory parameter of rt_scenario_<scenario name> is activated at the beginning of the skillset and deactivated at the end of the skillset.

Note (major information about the central fallback skill): In our Skybuffer AI development methodology, we stick to two possible values for the memory parameter rt_scenario_<scenario name> that are “active” and “discussed”. rt_scenario_<scenario name> is used in the trigger section as a trigger condition in the -trigger and -fallback (business fallback) skills of the skill group to go back to the main skill from the central fallback skill (grey coloured fallback). Once the chatbot appears in the central fallback skill, we call SAP Conversational AI dialogue API to re-trigger NLP and try to return back to the previous active scenario that has not been processed completely, or that has not been cancelled by the user. In such a way we control such kind of “return to the skill” action by the value of the memory parameter rt_scenario_<scenario name> that is set to “active”.

Step 9: Press the Save button.

Note: rt_return_to_function memory parameter is used in Skybuffer Hybrid Chats solution to configure categorization (please, refer to Day 2 blog post)

Step 10: Now let us configure the next action blocks. We need to replace the text of [Your question in English] in two logical blocks of the skill.

Step 10.1 (English block) In the text section you need to switch to the Edit mode, input the text of the reply and save your entries.

Step 10.2 (Non-English block) Here we need to modify rt_source memory parameter value.

Note: to translate more than one phrase at a time, we use special conditions and translate arrays of phrases. To translate the array, activate the memory parameter rt_list_captions before calling /translate webhook.

Array in rt_source memory parameter should be in square brackets [], and each phrase should be put in quotes and separated with commas.

Note: Customize your bot replies translation using the Hybrid Chats - Chatbot Vocabulary application (find more details in Day 2 blog post)

Step 11: To display the translated array, we use a specific memory parameter rt_message_captions. This is an array, so to show it you need to use the structure {{memory.rt_message_captions.[n]}}, where n – sequence number of the translated phrase.

Note: please consider that numbering in the array starts with 0.

Step 12: Let us create a skill (the second level skills group) which will capture user’s answer and collect it in the memory variable, so that it would be possible to move to the embedded QnA layer of our advanced QnA skill.

Return to the Build tab. Find zxas-get-template-trigger skill in your forked version of Skybuffer Foundation Content chatbot:

Note: we use so-called “get skills”, that we usually do not call directly, to model re-usable actions in the chatbot business steps, for example, “get name”, “get email”, “get comment” and etc.

Fork this skill into your bot, rename it and add the skill to the group. In such a way you will follow object-oriented conversational AI (OO-CAI) implementation approach and keep all skills encapsulated into the skill group to simplify development and organize your chatbot skills better.

Step 13: Start adaptation of just forked get skill. Go to the Build tab and select Triggers section of the new skill. Replace the template rt_scenario_active with your specific scenario parameter which will be used in this skill. As we have already explained the technical purpose of the memory parameter rt_scenario_active and what we usually set as its value, we will not spend time here again, however, please keep using camel structures in the memory parameter value to simplify the debugging.

Step 14: Go to the Actions section. Here is the place to capture the user’s input. We can technically capture all the data types, like numbers, date and time, locations using entities, also we can capture whole user input or set parameters according to the recognized sentiments. In our case we will use sentiments because our question has emotional answers.

So, in the conditions section for our action, we will check rt_sentiment in negative, vnegative values set (user does not want our support), and also we will check that target memory parameter is absent.

As a result of the sentiment analysis, for example, we will set memory parameter rt_assistance_level to “low” that we might probably use in the main layer of the advanced QnA skill to provide an answer according to the user’s input that we captured in the get-skill.

Note: please remember to unset memory parameter rt_scenario_active.

Step 15: Add the block with the opposite condition sentiment that is not in negative, vnegative values set.

Note: the memory parameter rt_return_to_function is set on the main (root) skills layer of the advanced QnA skills and is used for dynamic redirection to the business scenario skill after the user’s input in the get-skill is captured.

Step 16: we configured the get skill (embedded layer of the advanced QnA skill) and now we can return to the Function skills group (the root layer of our advanced QnA skill).

Go to the Build tab and select the -trigger function skill (yes, the one with the -trigger postfix at the end of the skill name).

Step 17: go to the previously configured actions and replace the template parameters with the newly created get skill details.

Note: let us explain the meaning of those memory parameters according to our AI skills development concept:

rt_scenario_active - runtime memory parameter used to activate a new scenario in the dialogue. We use the same value of this memory parameter in all the skills of the skills group, so we can understand which AI scenario (skills group) is currently active. That is important, for example, for mapping an AI skill to the backend API that is supposed to return the value for the skills group. Please remember that rt_scenario_active should be unset at the logical end of the scenario.

rt_skill - memory parameter that records the current skill and is checked in the trigger section of the -function skill (yes, the one that has -function as a postfix of the skill name) of the skills group. That is very useful when we deal with cascades of AI skills groups.

rt_goto_function - memory parameter for recording the target skill. It is used for dynamic redirection to the get-skill, the central fallback skill, or another business skill outside the current skills group. The main purpose of dynamic redirection between skills from different skills groups is to break the link between the skills groups, so that forking one skills group does not also fork other skills groups through unwanted relationships.

Step 18: Repeat the same activities for the non-English action block of the skill.

Note: You can add as many embedded QnA layers (get-skill skills groups) as your advanced QnA skill requires: simply create new skills groups of get skills to collect specific user input. We also recommend using our standard open-source AI content of get skills to collect general user data such as name, phone number, email, etc. You can locate the foundation get skills that we provide in the open-source version of our AI content by skills group name, for example.
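To make the idea of dynamic redirection more concrete, here is a minimal Python sketch. It is not platform syntax: the memory is modeled as a dictionary, and the function name next_skill as well as all scenario and skill names in the example are hypothetical. The point is that the target skill is read from memory at runtime instead of being hard-wired, so no static link between skills groups is needed.

```python
def next_skill(memory: dict, default_skill: str) -> str:
    """Resolve the redirection target from memory at runtime."""
    target = memory.get("rt_goto_function")
    # Fall back to a default skill when no target was recorded (assumed behavior).
    return target if target else default_skill


# Hypothetical memory snapshot in the middle of a scenario.
memory = {
    "rt_scenario_active": "ask-support",       # which skills group is active
    "rt_skill": "ask-support-trigger",         # the skill we are coming from
    "rt_goto_function": "get-comment-input",   # where to redirect next
}

print(next_skill(memory, "central-fallback"))
```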

Step 19: As an example for this blog post on advanced QnA skill creation, let us ask the user for additional information in case they are not satisfied with our support.

Add a condition block where rt_assistance_level is “low” and redirect to the get comment skill provided as part of the Skybuffer open-source AI content. This shows the value of the content and how quickly it can be used to capture the user’s input.

Step 20: Add the same block for non-English actions (replies). Please note that you should use the /translate webhook to get access to Hybrid Chats - Vocabulary (Fiori application), where you can store phrases translated into the language in which you want the chatbot to communicate with your users.
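The idea of taking a reply from a vocabulary instead of translating it automatically can be sketched as a simple lookup. This Python fragment does not call the /translate webhook and does not reflect its real request or response format. The function name pick_reply, the phrase key, and the sample German phrase are hypothetical, and falling back to the English reply when no entry exists is an assumption.

```python
def pick_reply(phrase_key: str, language: str,
               vocabulary: dict, english_replies: dict) -> str:
    """Prefer a stored translation; otherwise fall back to the English reply."""
    if language == "en":
        return english_replies[phrase_key]
    # Vocabulary entries are keyed by (phrase key, language code) in this sketch.
    return vocabulary.get((phrase_key, language), english_replies[phrase_key])


# Hypothetical sample data.
english_replies = {"ask_comment": "Could you tell us what went wrong?"}
vocabulary = {("ask_comment", "de"): "Könnten Sie uns sagen, was schiefgelaufen ist?"}

print(pick_reply("ask_comment", "de", vocabulary, english_replies))
print(pick_reply("ask_comment", "fr", vocabulary, english_replies))
```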

Note: you can always get access to Hybrid Chats demo tenant from our Day 1 post.

Step 21: Once we are in the -input skill (one that has -input as a postfix in the skill’s name) to capture the user’s input, we need to think about the redirection to the -function skill.

(1) Check that the parameters needed for Function execution are present.

(2) Set:

rt_scenario_active as “<current business scenario id>”

rt_skill as “<current skill ID>” for check in -trigger of -function skill

(3) Redirect to the -function skill
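The three activities of Step 21 can be summarized in a short Python sketch. It is an illustration only, not SAP Conversational AI syntax: the memory is a dictionary, and the function name redirect_to_function and all IDs in the example are hypothetical. Staying in the -input skill when a required parameter is missing is an assumption.

```python
from typing import Optional, Tuple


def redirect_to_function(memory: dict, required: list, scenario_id: str,
                         skill_id: str, function_skill: str) -> Tuple[dict, Optional[str]]:
    """Return the updated memory and the skill to redirect to (None = no redirect)."""
    # (1) Check that the parameters needed for Function execution are present.
    missing = [name for name in required if name not in memory]
    if missing:
        return dict(memory), None

    updated = dict(memory)
    # (2) Record the active scenario and the current skill for the
    #     trigger check of the -function skill.
    updated["rt_scenario_active"] = scenario_id
    updated["rt_skill"] = skill_id

    # (3) Redirect to the -function skill.
    return updated, function_skill


memory, target = redirect_to_function(
    {"rt_assistance_level": "low"},
    required=["rt_assistance_level"],
    scenario_id="ask-support",        # hypothetical scenario ID
    skill_id="ask-support-input",     # hypothetical skill ID
    function_skill="ask-support-function",
)
print(target, memory)
```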

Step 22: Modify the -input skill. The -input skill is the skill to redirect to, from both outside and inside the skills group, when some user input is expected. Access your -input skill, open the Triggers section and correct the conditions for the memory parameters rt_skill (expected previous skill value) and rt_scenario_active (name of the active scenario for the get-skill).

Step 23: Go to the Actions section and navigate to the last message block. This block is used to allow passing by the -input skill and triggering another scenario with the intent recognized from the user input utterance by the NLP engine:

Step 24: Set the current value of the memory parameter rt_scenario_<scenario_name> to “discussed”.

Step 25: Now we modify the -function skill. Access your -function skill, open the Triggers section and correct the values of the memory parameters rt_skill (expected previous skill value) and rt_scenario_active (name of the active scenario for the get-skill).

Step 26: Navigate to the Actions section and perform the following activities, which are marked in the same sequence in the picture below:

(1) Check the memory parameter which we got in the get-skill.

(2) Replace the template answer

(3) Set the current scenario as discussed

(4) Unset the memory parameter

Before the activities execution:

After the activities execution:

Step 27: Configure the non-English block of the skill.

Step 28: Now repeat steps 26-27 for the other values of the assistance level memory parameter rt_assistance_level.
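Taken together, Steps 26 to 28 describe one reply branch per value of rt_assistance_level. The Python sketch below mirrors that logic under stated assumptions: it is not platform syntax, the function name run_function_skill, the scenario name, and the reply texts are hypothetical, and the parameter that gets unset is assumed to be the one captured in the get-skill.

```python
from typing import Optional, Tuple


def run_function_skill(memory: dict, scenario_name: str,
                       replies: dict) -> Tuple[dict, Optional[str]]:
    """Return the updated memory and the reply to send (None = no branch matched)."""
    # (1) Check the memory parameter which we got in the get-skill.
    level = memory.get("rt_assistance_level")
    if level not in replies:
        return dict(memory), None

    updated = dict(memory)
    # (2) Pick the answer that replaces the template answer for this level.
    reply = replies[level]
    # (3) Set the current scenario as discussed.
    updated[f"rt_scenario_{scenario_name}"] = "discussed"
    # (4) Unset the memory parameter (assumed: the one checked in activity 1).
    del updated["rt_assistance_level"]
    return updated, reply


# Hypothetical replies, one per assistance level (Step 28).
replies = {
    "low": "Sorry to hear that. Could you leave us a comment?",
    "high": "Great, here is how we can help you.",
}
print(run_function_skill({"rt_assistance_level": "low"}, "ask_support", replies))
```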

Step 29: Modify the business -fallback skill. Access your new -fallback skill, go to the Trigger section, replace the value of the memory parameter _memory.rt_intent[0].slug with the name of your new intent without the @ sign and press <ENTER>:

Your fallback skill adjustment is now complete.

Step 30: Test and make sure that your new skill is ready to provide replies in any language! Please do not forget to adjust the Hybrid Chats – Vocabulary list of the chatbot replies, so that you avoid automatic translation of the replies.
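The trigger condition of the -fallback skill compares the slug of the first recorded intent with the name of your intent. A minimal Python sketch of that comparison follows. The function name fallback_triggered and the intent name in the example are hypothetical, and the memory is modeled as a dictionary in which rt_intent is a list of objects with a slug field, as the expression _memory.rt_intent[0].slug suggests.

```python
def fallback_triggered(memory: dict, intent_name: str) -> bool:
    """Check whether the first recorded intent matches the expected intent."""
    intents = memory.get("rt_intent") or []
    # The trigger stores the intent name without the leading @ sign.
    expected = intent_name.lstrip("@")
    return bool(intents) and intents[0].get("slug") == expected


memory = {"rt_intent": [{"slug": "ask-support"}]}   # hypothetical intent slug
print(fallback_triggered(memory, "@ask-support"))
```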

Conclusion

Now you have completed the Day 5 guidelines and you know how to build a support chatbot that can collect user data and additional information from users to provide more person-oriented and better-quality support. It takes only about 15-20 minutes to add -get and -function skills groups to the chatbot.

Generally speaking, after you go through Day 1, Day 2, Day 3, Day 4 and Day 5 and follow the guidelines, your chatbot will be able to:

- Capture verified users’ contact details and generate new leads for you

- Speak about the provided services

- Seamlessly integrate an operator

- Categorize conversations

- Provide replies in various languages without any additional training

- Provide customized replies that are not translated automatically

- Capture support requests in case operators are offline or Hybrid Chats are connected to SAP Conversational AI chatbot in the operator-free mode

- Save all conversations so that you could always review them

- Provide information according to QnA knowledge base that is added as a set of QnA skills

- Collect user data and provide more personal information for your clients.

It looks like it is ready to go live and support your clients, employees or business partners. What would you say?

P.S. You can also find the entire list of our blog posts under the links below:

Day 1 | Skybuffer Enterprise-Level Conversational AI Content Made Public for SAP Community Developme...

Day 2 | Skybuffer Community Chatbot | How to Customize Your Chatbot “get-help” skill

Day 3 | Skybuffer Community Chatbot | How to Create Multilingual Question-and-Answers Scenario Fast ...

Day 4 | Skybuffer Community Chatbot | How to Bring Your Own Bot to Hybrid Chats

Day 5 | Skybuffer Community Chatbot | How to Create Multilingual “Question and Answer” Complex Scena...

Day 6 | Success Story | SAP Innovation Award | Cognitively Automated Customer Care

