SAP Data Intelligence – What’s New in DI:2013


03-08-2021 11:05 AM

SAP Data Intelligence, cloud edition DI:2013 is now available.

Within this blog post, you will find updates on the latest enhancements in DI:2013. We want to share and describe the new functions and features of SAP Data Intelligence for the Q1 2021 release.

If you would like to review what was made available in the previous release, please have a look at this blog post.

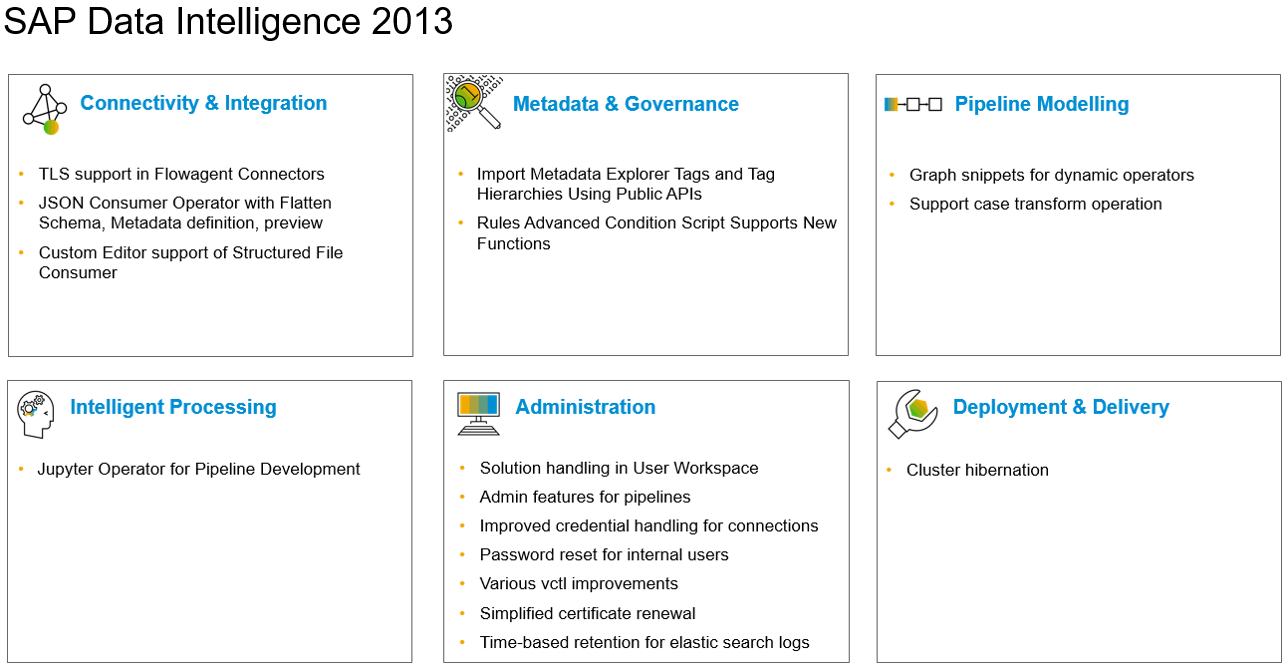


Overview

This section gives you a quick preview of the main developments in each topic area. The details are described in the following sections for each individual topic area.

Connectivity & Integration

This topic area focuses mainly on the connection and integration capabilities that are used across the product, for example in the Metadata Explorer or at operator level in the Pipeline Modeler.

TLS support in Flowagent Connectors

Oracle connections can now be configured with TLS to ensure privacy and data security.

JSON Consumer Operator with Flatten Schema, Metadata definition, preview

The Flowagent-based Structured File Consumer supports the consumption of valid JSON files with a JSON or JSONL extension.
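To illustrate what consuming a JSONL file with a flattened schema means in general, here is a minimal Python sketch. It is not the operator's implementation: the helper names `flatten` and `read_jsonl`, the dotted column naming, and the sample records are assumptions made for this example only.

```python
import json


def flatten(record, parent_key="", sep="."):
    """Flatten nested dicts into one level, joining keys with `sep`.

    Lists and scalar values are kept as they are.
    """
    flat = {}
    for key, value in record.items():
        column = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, column, sep))
        else:
            flat[column] = value
    return flat


def read_jsonl(text):
    """Parse JSON Lines text: one JSON object per non-empty line."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


# Hypothetical sample data, two records with different nesting depth.
sample = "\n".join([
    '{"id": 1, "customer": {"name": "Ada", "address": {"city": "Berlin"}}}',
    '{"id": 2, "customer": {"name": "Max"}}',
])

rows = [flatten(record) for record in read_jsonl(sample)]
for row in rows:
    print(row)
```

Records with different nesting produce different column sets, which is one reason a metadata definition and a data preview are useful before the data is consumed downstream.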

Custom Editor support of Structured File Consumer

Configure the Structured File Consumer operator using the new form-based editor. The custom editor lets you define the projection of columns, apply filters, and preview data.

Metadata & Governance

In this topic area you will find all features that deal with discovering metadata, working with it, and data preparation. Some information about newly supported systems is repeated here, so that readers who look at only one topic area do not miss it; this area may also add further details specific to metadata and governance.

Import Metadata Explorer Tags and Tag Hierarchies Using Public APIs

APIs have been added to Metadata Explorer that allow you to import tags and tag hierarchies from external metadata catalogs and data governance systems. This enables the enrichment of metadata with auto classifications, correlations, and suggestions stemming from outside systems.

Rules Advanced Condition Script Supports New Functions

The Metadata Rules advanced condition script editor now allows the functions is_unique and is_data_dependent to be used in rule condition scripts. Information Steward rules that contain these functions can be imported to Metadata Explorer and added to rulebooks for execution.
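As a rough illustration of the kind of checks these two functions stand for, the following Python sketch flags rows that violate a uniqueness condition and rows where one column does not consistently determine another. This is a conceptual sketch only: it is not the rule script syntax, the helper names and signatures are hypothetical, and the exact semantics of is_unique and is_data_dependent in the product may differ from what is assumed here.

```python
from collections import Counter, defaultdict


def is_unique_flags(rows, column):
    """Return one flag per row: True if the row's value in `column` occurs once."""
    counts = Counter(row[column] for row in rows)
    return [counts[row[column]] == 1 for row in rows]


def is_data_dependent_flags(rows, primary, dependent):
    """Return one flag per row: True if the row's `primary` value always
    appears with the same `dependent` value across the data set."""
    seen = defaultdict(set)
    for row in rows:
        seen[row[primary]].add(row[dependent])
    return [len(seen[row[primary]]) == 1 for row in rows]


# Hypothetical sample data.
records = [
    {"id": 1, "zip": "10115", "city": "Berlin"},
    {"id": 2, "zip": "10115", "city": "Berlin"},
    {"id": 2, "zip": "80331", "city": "Munich"},
    {"id": 3, "zip": "80331", "city": "Hamburg"},
]

print(is_unique_flags(records, "id"))
print(is_data_dependent_flags(records, "zip", "city"))
```

Both checks need to see the whole data set before any single row can be judged, which distinguishes them from conditions that are evaluated row by row.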

Pipeline Modelling

This topic area covers new operators and enhancements of existing operators, as well as improvements and new functionality in the Pipeline Modeler and the development of pipelines.

Graph snippets for dynamic operators

Operators such as the ABAP readers have dynamic attributes that could not be handled by snippets until now. With the new version, these attributes are supported, so you can build domain-specific snippets.

Support case transform operation

The “Data Transform” operator now allows you to model cases in the ETL process.
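In ETL tools, a case operation typically routes each input row to one of several outputs depending on which condition it satisfies. The Python sketch below shows that general idea under stated assumptions: the function `route_by_case`, the first-match-wins behavior, and the default output are choices made for this example and are not taken from the Data Transform operator itself.

```python
def route_by_case(rows, cases, default="default"):
    """Route each row to the output of the first case whose predicate matches.

    `cases` is an ordered list of (output_name, predicate) pairs.
    Rows that match no case go to the `default` output.
    """
    outputs = {name: [] for name, _ in cases}
    outputs[default] = []
    for row in rows:
        for name, predicate in cases:
            if predicate(row):
                outputs[name].append(row)
                break
        else:
            # No predicate matched this row.
            outputs[default].append(row)
    return outputs


# Hypothetical sample data and case definitions.
orders = [
    {"id": 1, "region": "EMEA"},
    {"id": 2, "region": "APJ"},
    {"id": 3, "region": "LATAM"},
]
cases = [
    ("emea", lambda row: row["region"] == "EMEA"),
    ("apj", lambda row: row["region"] == "APJ"),
]

routed = route_by_case(orders, cases)
for name, matched in routed.items():
    print(name, [row["id"] for row in matched])
```

Whether a row may reach more than one output, and whether a default output exists, are design choices of the specific tool; check the operator documentation for its actual behavior.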

Intelligent Processing

This topic area includes all improvements, updates, and the way forward for Machine Learning in SAP Data Intelligence.

Jupyter Operator for Pipeline Development:

- launches a Jupyter notebook application where you can create new notebooks / open existing notebooks from within the respective location.

- allows execution in either an “interactive” mode or a “productive” mode.

  - Interactive mode: Jupyter notebook UI is accessible and identified cells can be manually executed to interact with the current data flow

  - Productive mode: Jupyter Operator behaves like a Python 3 operator and no user interaction is required to run identified cells

Administration

This topic area includes all services that are provided by the system, such as administration, user management, and system management.

Admin features for pipelines

Tenant administrators can now monitor the pipelines of all users.

Solution handling in User Workspace

When you import solutions to the User Workspace, the metadata is persisted and can be reused when exporting to the file system or the solution repository.

Password reset for internal users

Administrators can enforce a password change on first login or force existing users to change the password.

Improved credential handling for connections

Credentials such as username are partly masked (depending on connection type). Administrators are able to see the full credentials (except passwords and secrets) to simplify troubleshooting.

Simplified certificate renewal

The certificates of a cluster can be renewed using kubectl and the shipped “dhinstaller” script. The tool is intended to be called by automation scripts that maintain the cluster.

Time-based retention for Elasticsearch logs

Logs in Elasticsearch can now be configured to be retained for a certain time, in addition to being retained up to a certain size.
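The way a time limit and a size limit can work together is sketched below in Python. This is only a conceptual model of combined retention: the function name, the tuple layout, and the oldest-first deletion order are assumptions for illustration, and it does not reflect how SAP Data Intelligence or Elasticsearch is actually configured.

```python
from datetime import datetime, timedelta


def select_logs_to_delete(log_sets, now, max_age, max_total_bytes):
    """Return the names of log sets to delete, oldest first.

    `log_sets` is a list of (name, created_at, size_bytes) tuples.
    A log set is deleted if it is older than `max_age`, or if the
    remaining log sets together still exceed `max_total_bytes`.
    """
    to_delete = []
    kept = []
    for entry in sorted(log_sets, key=lambda item: item[1]):
        name, created_at, _ = entry
        if now - created_at > max_age:
            to_delete.append(name)  # time-based retention
        else:
            kept.append(entry)

    total = sum(size for _, _, size in kept)
    while kept and total > max_total_bytes:
        name, _, size = kept.pop(0)  # size-based retention, oldest first
        total -= size
        to_delete.append(name)
    return to_delete


# Hypothetical sample data.
now = datetime(2021, 3, 8)
log_sets = [
    ("logs-a", datetime(2021, 2, 1), 50),
    ("logs-b", datetime(2021, 3, 1), 60),
    ("logs-c", datetime(2021, 3, 7), 60),
]

print(select_logs_to_delete(log_sets, now, timedelta(days=14), 100))
```

In this model the time limit is applied first and the size limit then trims whatever remains, so either limit alone can trigger deletion.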

Various vctl improvements

Users can output results as JSON or YAML and show an age column for scheduled workloads, among other improvements.

Deployment & Delivery

This focus area describes all functions and features that deal with the setup process, installation, or deployment.

Please note that the feature below will be available as of March 11th, 2021, after the SAP Cloud Platform team has enabled some changes.

Cluster hibernation

- Customers now have the option to schedule or manually start/stop Data Intelligence Cloud clusters via SAP Cloud Platform or cf command line.

- During hibernation:

  - Cluster size is reduced to zero nodes, effectively reducing operational costs to near-zero

  - Customers only pay for persistency (metadata, modeler objects, DI_DATA_LAKE, etc.)

- Front-end will be inaccessible and time out during hibernation.

These are the new functions, features, and enhancements in the SAP Data Intelligence, cloud edition DI:2013 release.

We hope you like them and, by reading the above descriptions, have already identified some areas you would like to try out.

If you are interested, please refer to What's New in Data Intelligence - Central Blog Post.

For more updates, follow the tag SAP Data Intelligence.

We recommend visiting our SAP Data Intelligence community topic page to check helpful resources, links and what other community members post. If you have a question, feel free to check out the Q&A area and ask a question here.

Thank you & Best Regards,

Eduardo and the SAP Data Intelligence PM team
