- SAP Automation Pilot 101 - Subscribing and First S...

former_member35

10-14-2020

7:55 AM

What are you going to learn?

In the series "SAP Cloud Platform Automation Pilot 101," we will explore this brand new service. We start with this general article and then dive deeper into the service details. We will learn how to automate repetitive DevOps work on top of SAP Cloud Platform with little effort. We will explore the automation content that Automation Pilot provides out of the box, and learn how to extend this content to automate DevOps processes ranging from simple to elaborate. We will also walk through scenarios such as scheduling a system restart, updating a HANA database on Cloud Platform, automatic alert remediation, and many more.

In this particular blog post, we are going to subscribe to the service and take our first steps within it.

What is SAP Cloud Platform Automation Pilot all about?

At the beginning of my career in the cloud business, I noticed a pattern: whenever my team and I worked on a brand new cloud solution, we always started with the delivery. How are we going to deploy this? How are we going to make those deployments continuous? What infrastructure are we going to use? And so on. Then, once the solution was up and running, we discovered that many things could go wrong that we had not thought about. Those problems might be repetitive or not, and handling them requires a broad set of skills. This was the part we always missed.

This is how we came up with Automation Pilot. We figured out that a DevOps engineer needs deep knowledge of the building blocks of their application, its dependencies, the underlying infrastructure, and the best practices and processes of their organization. As the Platform evolves, this knowledge has to keep up as well. Can a part of these challenges be automated? Yes. Is it easy? No.

So this is what Automation Pilot is here for - to allow you to easily automate those processes without requiring in-depth knowledge of coding, infrastructure, or the continually evolving Platform.

An Example

To explain it better, I am going to use an example:

A typical cloud solution consists of some or all of the following: a couple of microservices, a couple of databases, and dependencies on many additional services provided by SAP or a hyperscaler. Once this solution is deployed into a production environment, we can distinguish a few categories of events that might break it:

- Unexpected downtime of a microservice, database, dependent service, or the network.

- Unexpected re-configurations of our solution - for example, applying an urgent security hotfix.

- Planned downtimes - for example, running a regular database update.

All of those require work from DevOps engineers on at least one of the building blocks of their solution. All three can be either done manually or automated.

Automating those with Automation Pilot does not require any coding or in-depth platform skills from your side.

What scenarios can I use Automation Pilot for?

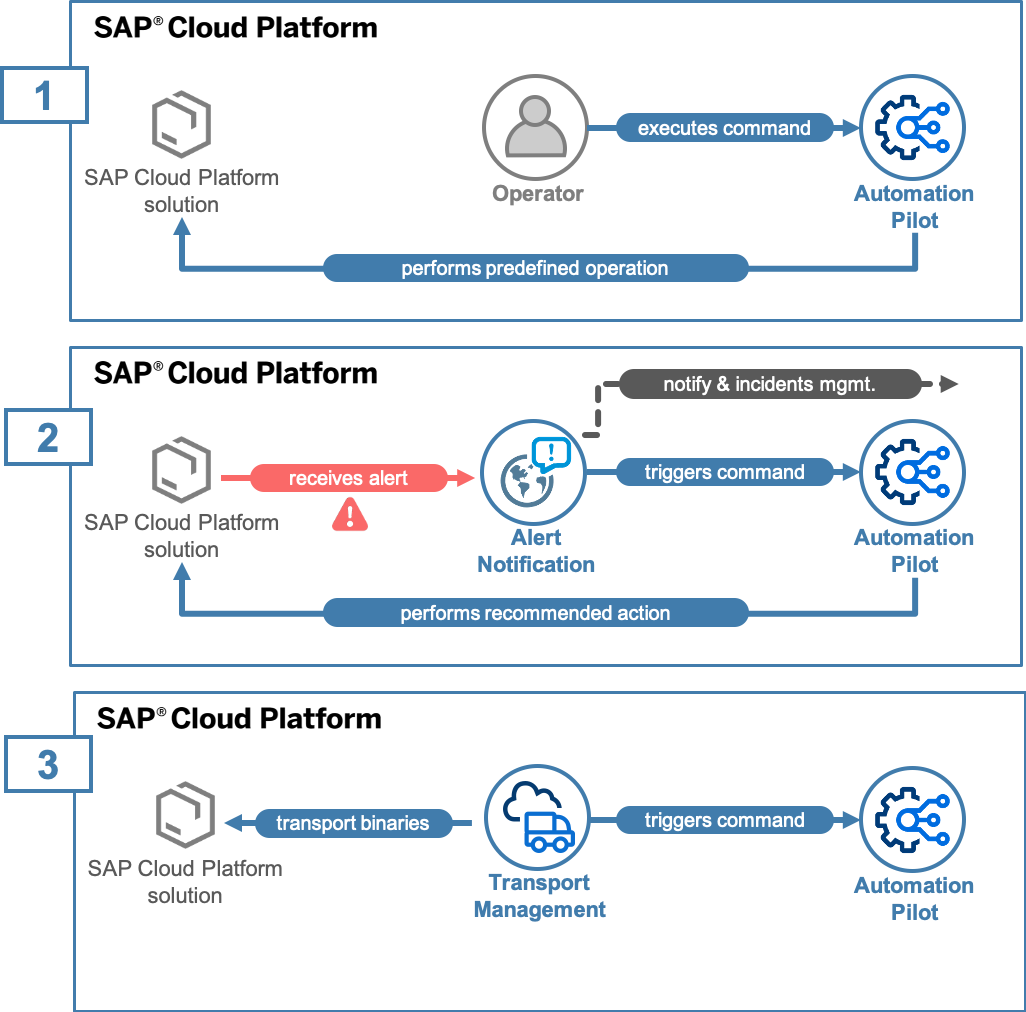

You can use Automation Pilot for the three high-level scenarios described below.

- Automating repetitive DevOps tasks that occur during the maintenance of your deployment.

- Automatic alert remediation through integration with SAP Cloud Platform Alert Notification or another alerting service (like AWS SNS). You can read more about this scenario here.

- Integration with existing SAP Cloud Platform services like Transport Management - from which you can invoke Automation Pilot. Note that we are currently working on integrations with further SAP-provided services, and their number will grow over time.

How does Automation Pilot work, and where does it live?

Availability

It is essential to know that Automation Pilot is platform and infrastructure agnostic. This means it can manage your workloads whether they run on Neo or Cloud Foundry, and regardless of their region. Automation Pilot (AutoPi) is currently available in the EU10 and US30 regions, and we are considering providing it in more regions. It is a standard CPEA service; click here to understand how to enable it.

Main building blocks of the service

Commands

Commands are the primary entity you work with. You can think of a Command as a mini-program:

- It requires an input - the Input is the data that the Command needs in order to run. For example - username, region, password, etc.

- The Command executes and produces an output - for example, response code from a REST API call. As Automation Pilot allows

- Commands can be chained to one another and create even more complex scenarios. Automation Pilot can orchestrate those complex scenarios for you. Every step of the Command can use the output of the previous steps.
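The input, output, and chaining behavior described above can be pictured with a small conceptual model. This is only an illustrative sketch in plain Python, not Automation Pilot's actual API: the `Command` class, the `run_chain` function, and the two example steps are all hypothetical names made up for this illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Data = Dict[str, Any]


@dataclass
class Command:
    """Hypothetical stand-in for a command: a name plus a function
    that maps input data to output data."""
    name: str
    run: Callable[[Data], Data]


def run_chain(steps: List[Command], initial_input: Data) -> Data:
    """Run the steps in order. Each step sees the initial input and the
    outputs of all previous steps, stored under the step's name."""
    context: Data = dict(initial_input)
    for step in steps:
        context[step.name] = step.run(context)
    return context


def check_health(ctx: Data) -> Data:
    # A real step would call a REST API; here the response is hard-coded.
    return {"status_code": 503}


def decide_restart(ctx: Data) -> Data:
    # Uses the output of the previous step.
    return {"restart": ctx["check_health"]["status_code"] >= 500}


result = run_chain(
    [Command("check_health", check_health),
     Command("decide_restart", decide_restart)],
    {"region": "eu10", "app": "my-app"},
)
print(result["decide_restart"])
```

The point of the model is that a later step never needs to know how an earlier step obtained its result; it only reads that step's output from the shared context.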

Automation Pilot offers different granularity of commands:

- Low-level - a Script Execution command which supports Node.js, Python, Perl, and Bash. It can take as Input your existing automation scripts, execute them, and produce an output.

- Mid-level - Http Request command, which allows you to execute and orchestrate HTTP calls to different systems.

- High-level - a set of pre-defined commands that represent complex DevOps processes, like updating a HANA database, restarting an application, getting information from Dynatrace, and so on.

All of those can be chained into more complex scenarios regardless of their granularity. Automation Pilot provides the necessary orchestration mechanisms, like scheduling, automatic retries, stopping for human interaction, and many more. We are going to look at those in detail in another post.

Commands also come with metadata, like tags and versioning, which lets you distinguish them easily.
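To make one of these orchestration mechanisms concrete, here is a minimal sketch of what an automatic retry does, written as plain Python. It is not how Automation Pilot implements or configures retries; `run_with_retries`, `flaky_restart`, and their parameters are hypothetical names used only for illustration.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def run_with_retries(action: Callable[[], T],
                     max_attempts: int = 3,
                     delay_seconds: float = 0.0) -> T:
    """Call `action` until it succeeds or `max_attempts` is reached."""
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            return action()
        except Exception as error:
            last_error = error
            if attempt < max_attempts:
                time.sleep(delay_seconds)  # wait before the next attempt
    raise RuntimeError(f"failed after {max_attempts} attempts") from last_error


# A simulated step that fails twice before it succeeds.
calls = {"count": 0}


def flaky_restart() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("service not reachable yet")
    return "restarted"


outcome = run_with_retries(flaky_restart, max_attempts=3)
print(outcome, calls["count"])
```

Having the orchestrator own this loop means the individual steps stay simple: they either succeed or raise, and the retry policy is decided in one place.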

Inputs

As we have discussed, every Command needs Input to run. This is the context of your workloads that the command needs - for example, the region where your deployment resides or the password required for your HANA update. Frequently, the same inputs are needed by many of the commands you are using. So that you do not have to fill them in manually every time or pass them in from somewhere else, we offer the notion of an Input. Inputs are key-value pairs organized into a dedicated section of the UI. They are integrated with the commands: whenever you define a Command, you can specify which inputs it needs to run. Note that inputs can store sensitive information like passwords in a secure manner.
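The idea of shared key-value inputs can be sketched as follows. This is a conceptual model in plain Python under stated assumptions, not the service's data model: `InputValue`, `resolve_inputs`, and the example keys are hypothetical, and masking the value when printing is only one simple way to illustrate treating a value as sensitive.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True, repr=False)
class InputValue:
    """Hypothetical input entry: a value plus a 'sensitive' flag."""
    value: str
    sensitive: bool = False

    def __repr__(self) -> str:
        # Never show sensitive values when the entry is printed or logged.
        shown = "****" if self.sensitive else self.value
        return f"InputValue({shown})"


def resolve_inputs(required_keys: List[str],
                   shared_inputs: Dict[str, InputValue]) -> Dict[str, str]:
    """Pick the values a command declared it needs from the shared inputs."""
    missing = [key for key in required_keys if key not in shared_inputs]
    if missing:
        raise KeyError(f"missing inputs: {missing}")
    return {key: shared_inputs[key].value for key in required_keys}


# Defined once, reused by every command that needs them.
shared = {
    "region": InputValue("eu10"),
    "user": InputValue("ops-user"),
    "password": InputValue("s3cret", sensitive=True),
}

restart_inputs = resolve_inputs(["region", "user"], shared)
update_inputs = resolve_inputs(["region", "password"], shared)
print(shared["password"], restart_inputs)
```

Both example commands read `region` from the same place, so changing it once updates every command that declares it.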

Catalogs

All Commands are organized into Catalogs. A Catalog groups commands semantically, so that you know which commands are used for a given scenario. For example - the Application Lifecycle Management Catalog contains commands like - Restart Application for CF and Neo, start/stop node, and many more. Catalogs can be of two types:

- Provided - within these catalogs, we provide out-of-the-box content for different DevOps processes on top of SAP CP. We maintain this content and make sure you always get the latest version. You cannot edit those commands, but you can use them as they are and chain them into your own Command. Every Command has a version, and whenever something relevant changes within the Platform, the Command is updated. This relieves you of the burden of continually tracking changes in the Platform.

- Owned - those are the catalogs developed by you. Once you subscribe to Automation Pilot, you will get multiple examples within them that can help you quickly get started with command development. Note that in those catalogs, you can use commands from both Owned and Provided Catalogs to build your own scenarios. We are going to dedicate a separate blog post to content authoring.
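The difference between the two catalog types can be summarized in a short conceptual sketch. It is plain Python written for illustration, not the service's API: the `Catalog` class, its `save_command` method, and the catalog and command names are hypothetical, and bumping an integer version on every save is an assumption made to keep the example small.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Catalog:
    """Hypothetical catalog: a named group of versioned commands."""
    name: str
    provided: bool  # True: maintained by the service, read-only for you
    commands: Dict[str, int] = field(default_factory=dict)  # name -> version

    def save_command(self, command_name: str) -> int:
        """Create or update a command; only allowed in owned catalogs."""
        if self.provided:
            raise PermissionError(f"catalog '{self.name}' is read-only")
        version = self.commands.get(command_name, 0) + 1
        self.commands[command_name] = version
        return version


provided = Catalog("lifecycle-provided", provided=True,
                   commands={"RestartApplication": 1})
owned = Catalog("my-team", provided=False)

# An owned command can be created and revised freely.
owned.save_command("NightlyRestart")
latest = owned.save_command("NightlyRestart")

# A provided command can be used, but not edited.
try:
    provided.save_command("RestartApplication")
    edit_rejected = False
except PermissionError:
    edit_rejected = True

print(latest, edit_rejected)
```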

What's next?

- Subscribe and use SAP Cloud Platform Automation Pilot

- Send us your feedback

- Stay tuned for more blog posts, which will look in-depth at the service.

- SAP Managed Tags:

- DevOps,

- SAP Automation Pilot,

- SAP Business Technology Platform
