
How to determine and perform SAP HANA partitioning


rajarajeswari_k


09-11-2020

1:51 PM

HANA PARTITIONING CONCEPTS, SQLs, TECHNICAL BACKGROUND

Here I wish to discuss about HANA partitioning concepts , how to determine the number of partitions,how to determine the optimal column,how does HANA handles the partition command ,Types of basic partitions and so on.

Why do we need to partition tables in HANA ?

As HANA can not hold more than 2 billion records per column store table or per partition , any table which has crossed more than 1.5 billion rows are valid candidates of partitioning . It also helps in parallel procession of queries , if they are distributed across nodes in case of HANA scale out scenario producing results faster .

CASE STUDY: Here I am going to change an existing table which is partitioned on all the key fields to partition on 1 optimum key column: ie, The partition of a table EDIDS is going to be changed from HASH 21 MANDT,DOCNUM,LOGDAT,LOGTIM,COUNTR to HASH 7 DOCNU . The main reason is because , many SQLs and joins that are running against this table are running with where clause on DOCNUM .

Steps:

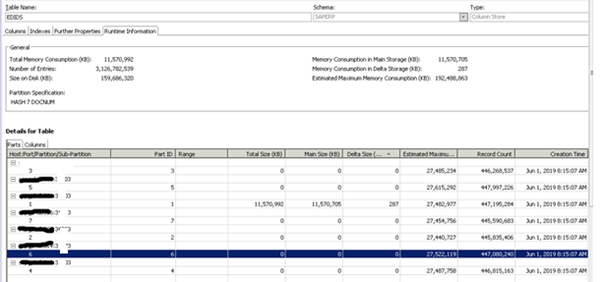

Log into studio and display the table and make a note of details.

Find the table and display: Observe the things highlighted below .Column Key: Primary key fields of any table.

Check the runtime properties.:

Things to observer here .

Number of entries =Row count(3.1 billion)

Memory consumption in main and in Delta is also indicated there.

So how does HANA handles this partitioning?

When the alter command specified in previous gets executed below things happen in backend in HANA DB.

We can check for the status of the threads from performance tab and filter it out based on the master node and into the server on which this table is sitting.

Look for the SQL that we had executed:Choose the master node and the slave node to which we had moved the partitions to.

Click ok.

Sort by application user in performance tab. Though I had logged in as SYSTEM for re-partition, HANA will also know the WTS via which I am connecting to this DB.

In the background

1.My SQL gets prepared and delta merge for that table will first executed.

Here is my delta merge job that got triggered and the other jobs that will follow the same.

Below delta merge job has got completed 59/63 ..All the remaining jobs has 30 steps and it will run in parallel post delta merge completes.

so,Why is the max progress for _SYS_SPLIT_EDIDS shows as 30 ? It is actually the total number of columns in that table (including internal columns)

I have used M_CS_ALL_COLUMNS rather than M_CS_COLUMNS because the former also holds hana internal columns against the lateral

Lets check the threads now.

After 6 hours, my re-partition got completed .Now I distribute the 7 partitions between the 7 HANA nodes. Moving back the partitions:

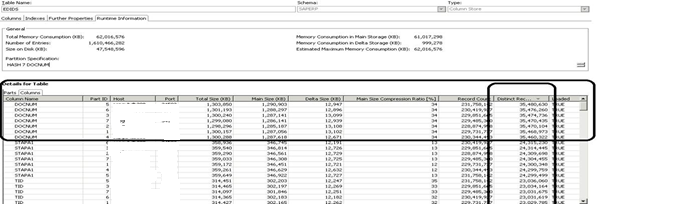

Post re-partition and table distribution to different nodes.

Here if we see the total 3.1 billion is spread across 21 partitions with around 148 million records count per partition.

To partition command to work, we need to first move all the partitions from different node to single node.

Below I choose a to leave partitions 1,2,3 to the same server and move the other partitions from other servers to this server.

Sample SQL:

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 4 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 5 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 6 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 7 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 8 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 9 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 10 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 11 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 12 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 13 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 14 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 15 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 16 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 17 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 18 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 19 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 20 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 21 TO '<server_name>:3<nn>03' PHYSICAL;

o/p:

Post this we have all the partition in one node in a scale out environment.

Now I proceed with partitioning command.

ALTER TABLE SAPERP.EDIDS PARTITION BY HASH (DOCNUM) PARTITIONS 7;

DETERMINING THE OPTIMUM COLUMN and NUMBER FOR PARTITION

So why would I consider DOCNUM as optimal column to be partitioned and why the number of partitions was choosen as 7?

In order to choose a column for partitioning we need to analyze the table usage in depth .

Analyzing and understanding the SQLs on the table:

1.Check with the functional team. Ask them which column does they query frequently and which column is always part of where clause . If they are not very clear on the same we can help them with plan cache data.

Eg: If a table keep getting queried based on a where clause on year, and in general the table has data since 2015, a Range partition on YEAR column will create a clear demarcation between hot and cold data .

Sample SQL for range partition:

ALTER TABLE SAPERP.BSEG PARTITION BY RANGE (BUKRS) (PARTITION 0 <= VALUES < 1000, PARTITION 1000 <= VALUES < 1101, PARTITION 2000 <= VALUES < 4000,PARTITION 4000 <= VALUES < 4031, PARTITION 4033 <= VALUES < 4055, PARTITION 4058 <= VALUES < 4257, PARTITION VALUE = 4900, PARTITION OTHERS);

2.Check M_SQL_PLAN_CACHE .

With the help of below query we can get a list of queries that are to identify the where clause

select statement_string, execution_count, total_execution_time from m_sql_plan_cache where statement_string like '%<TABLE_NAME>%' order by total_execution_time desc;

Download the SQL HANA_SQL_Statistics_JoinStatistics_1.00.120+ from OSS 1969700 and modify the SQL like below.

Goto modification section.

Modify the modification section as per your requirement.

From above we can see that most of the joins are happening on DOCNUM and hence a HASH on this column will make the query runtime faster .

4. There can be some cases where we will not have enough information even from here or it will be empty . In that case we will have to enable the sql trace for the specific table .

5.If there is no specific range values that are frequently queried and if we have a case like most of the columns are used most of the times a HASH algorithm will be a best fit.

It is similar to round robin partition but , data will be distributed according to the hash algorithm on their one or 2 designated primary key columns.

-A Hash algorithm can only happen on a PRIMARY key field.

-It is always optimal not to choose more than 2 primary key field for HASH

-Within primary key , check for which row has maximum distinct records . That specific column can be chosen for re-partition .

To determine which primary key column we can choose for re-partition, perform below.

a.Load the table fully into memory

c.Find the primary key column which has maximum distinct record.

Hence a HASH on DOCNUM for EDIDS table is best choice.

ALTER TABLE SAPERP.EDIDS" PARTITION BY HASH (DOCNUM) PARTITIONS 7

NOTE:When ever a table is queried and if that table or partition is not present in memory HANA automatically loads it into the memory either partially or fully. If that table is partitioned ,which ever row that is getting queried, then that specific partition which has the required data gets into memory. Even if you only need 1 row from a partition of a table which has 1 billion records, that entire partition will get loaded either partially or fully . In HANA we can never load 1 row alone into memory from a table.

So,How to determine the number of partitions in scale out scenario?

The optimal number of partition should be decided optimally such that the load gets distributed across different nodes in HANA in case of scale-out scenario.For a table which is not yet partitioned and which is nearing more than 1.5 billion.

It is recommended to always go for n-1 number of partition where n is the total number of nodes in HANA including Master node. Why -1 is because we should not have any partitions sitting on master node as it will always be better to leave the master node alone to perform transaction processing alone.

Eg:

HANA – 1 master node,3 slaves,1 standby => Then the number of partitions is 3 . (Always don’t take into count the standby node as it does not have any data volumes assigned to it)

HANA – 9 nodes =1 Master,7 slaves,1 standby =>Partition number should be 7 and it should be distributed across the worked nodes via the move command specified

GENERAL RULE OF THUMB IN PARTITION

-We can have any number of partitions as you specify, but it is generally not advisable to have any tables with more than 100 partitions

-It is recommended to have atleast 100 million records per partitions and each partition memory utilization at any point of time should not be more than 50GB.

-Any non-partitioned table which has more than 2.14 billion records will make the system crash and hence it is best to put a partition when it is at 1.5 billion

-For tables which are already partitioned and we have to re-partition as either the current partition is not optimal or if the record count per partition is more than 1.5 billion we need to increase the number of partitions

-Always choose the number of partition as a multiple or dividend of current partition . Eg:If a table is partitioned with 7 partition , you can increase the partition to 7*2=14 or so on. This is only useful to make the partition job run parallelly.

-HANA will crash if the number of rows per partition is more than 2.14 billion

TYPES OF BASIC PARTITIONS AND THEORY INVOLVED

Thanks for reading!! Please leave a suggestion !!

Here I want to discuss HANA partitioning concepts: how to determine the number of partitions, how to determine the optimal partitioning column, how HANA handles the partition command, the basic partition types, and so on.

Why do we need to partition tables in HANA?

Because HANA cannot hold more than about 2 billion records (2^31, roughly 2.14 billion) in a non-partitioned column-store table or in a single partition, any table that has grown beyond 1.5 billion rows is a valid candidate for partitioning. Partitioning also enables parallel processing of queries: in a HANA scale-out scenario, partitions distributed across nodes can be processed in parallel, producing results faster.
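As a starting point, a query along these lines can list non-partitioned column-store tables that have already passed the 1.5 billion row threshold. This is a sketch that is not from the original post; it assumes that M_CS_TABLES reports non-partitioned tables with PART_ID 0, and row counts of tables that are not currently loaded may be incomplete.

```sql
-- Sketch: non-partitioned column-store tables (PART_ID = 0) above the
-- 1.5 billion row threshold used in this post, largest first.
SELECT schema_name,
       table_name,
       record_count,
       ROUND(memory_size_in_total / 1073741824.0, 2) AS total_gb  -- bytes to GB
  FROM m_cs_tables
 WHERE part_id = 0
   AND record_count > 1500000000
 ORDER BY record_count DESC;
```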

CASE STUDY: Here I change an existing table that is partitioned on all of its key fields so that it is partitioned on one optimal key column. The partitioning of table EDIDS changes from HASH 21 on MANDT, DOCNUM, LOGDAT, LOGTIM, COUNTR to HASH 7 on DOCNUM. The main reason is that many SQL statements and joins running against this table filter or join on DOCNUM.

Steps:

Log in to SAP HANA Studio, display the table, and make a note of its details.

Find the table and display it. Observe the key details; the Column Key flag marks the primary key fields of the table.
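To list the primary key columns without opening Studio, a query on the CONSTRAINTS system view should work. This is my own sketch; it assumes the view's IS_PRIMARY_KEY flag, and for EDIDS it is expected to list the five key columns named in the case study.

```sql
-- Sketch: primary key columns of SAPERP.EDIDS in key order.
SELECT column_name,
       position
  FROM constraints
 WHERE schema_name    = 'SAPERP'
   AND table_name     = 'EDIDS'
   AND is_primary_key = 'TRUE'
 ORDER BY position;
```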

Check the runtime properties:

Things to observe here:

- Number of entries = row count (3.1 billion)

- Memory consumption in main and in delta is also shown there.

- Partition specification: 21 partitions distributed across 7 slave nodes, 3 partitions per node.
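The same runtime properties can also be read with SQL. A sketch (not from the original post) against M_CS_TABLES, returning one row per partition with its host, row count, memory in main and delta, and load state; the memory columns are in bytes and are converted to GB here.

```sql
-- Sketch: runtime properties of SAPERP.EDIDS, one row per partition.
SELECT host,
       port,
       part_id,
       record_count,
       ROUND(memory_size_in_main  / 1073741824.0, 2) AS main_gb,
       ROUND(memory_size_in_delta / 1073741824.0, 2) AS delta_gb,
       loaded                                         -- fully, partially or not loaded
  FROM m_cs_tables
 WHERE schema_name = 'SAPERP'
   AND table_name  = 'EDIDS'
 ORDER BY part_id;
```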

So how does HANA handle this partitioning?

When the ALTER TABLE ... PARTITION BY command (shown further below) is executed, the following happens in the HANA database backend.

We can check the status of the threads in the Performance tab, filtering on the master node and on the server where the table is located.

To find the SQL statement we executed, choose the master node and the slave node to which we had moved the partitions.

Click OK.

Sort by application user in the Performance tab. Although I logged in as SYSTEM for the re-partition, HANA also knows the WTS through which I am connecting to this database.
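If you prefer SQL over the Performance tab, a query like the following (my own sketch) lists threads on all hosts whose THREAD_DETAIL mentions EDIDS. Which threads carry the table name in THREAD_DETAIL depends on the thread type, so treat an empty result with caution.

```sql
-- Sketch: threads on all hosts that are working on EDIDS.
SELECT host,
       port,
       thread_id,
       thread_type,
       thread_method,
       thread_state,
       duration,                -- milliseconds
       user_name,               -- database user, e.g. SYSTEM
       application_user_name,   -- user reported by the client application
       thread_detail
  FROM m_service_threads
 WHERE thread_detail LIKE '%EDIDS%'
 ORDER BY host, duration DESC;
```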

In the background:

1. My SQL statement is prepared, and the delta merge for the table is executed first.

This triggers my delta merge job, followed by the other jobs.

At this point the delta merge job has completed 59 of 63 steps. Each of the remaining jobs has 30 steps, and they run in parallel once the delta merge completes.
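The progress of the delta merge and split jobs can also be queried. This sketch assumes the M_JOB_PROGRESS monitoring view with the columns shown; check the column list for your HANA revision before relying on it.

```sql
-- Sketch: progress of the merge/split jobs on EDIDS
-- (temporary split objects are named like _SYS_SPLIT_EDIDS).
SELECT host,
       port,
       job_name,
       object_name,
       current_progress,
       max_progress,
       progress_detail,
       start_time
  FROM m_job_progress
 WHERE object_name LIKE '%EDIDS%'
 ORDER BY host, start_time;
```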

So why does the max progress for _SYS_SPLIT_EDIDS show as 30? It is the total number of columns in the table, including internal columns.

I used M_CS_ALL_COLUMNS rather than M_CS_COLUMNS because the former also includes HANA-internal columns, while the latter does not.
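A sketch of that comparison (my own query): the difference between the two counts is the number of internal columns. COUNT(DISTINCT ...) is used because a partitioned table has one row per column and partition.

```sql
-- Sketch: user-visible columns versus all columns (including internal ones).
SELECT 'M_CS_COLUMNS' AS source_view,
       COUNT(DISTINCT column_name) AS column_count
  FROM m_cs_columns
 WHERE schema_name = 'SAPERP'
   AND table_name  = 'EDIDS'
UNION ALL
SELECT 'M_CS_ALL_COLUMNS',
       COUNT(DISTINCT column_name)
  FROM m_cs_all_columns
 WHERE schema_name = 'SAPERP'
   AND table_name  = 'EDIDS';
```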

Let's check the threads now.

After 6 hours, the re-partition completed. Now I distribute the 7 partitions across the 7 HANA worker nodes by moving the partitions back.
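Distributing the 7 new partitions can use the same MOVE PARTITION syntax as the consolidation step in this post. In this sketch the hosts <worker_host_2> to <worker_host_7> are hypothetical placeholders, and partition 1 is assumed to stay on the node where all partitions were consolidated.

```sql
-- Sketch: one partition per worker node. Replace <worker_host_n> and 3<nn>03
-- with the indexserver host:port of each worker node (hypothetical placeholders).
ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 2 TO '<worker_host_2>:3<nn>03' PHYSICAL;
ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 3 TO '<worker_host_3>:3<nn>03' PHYSICAL;
ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 4 TO '<worker_host_4>:3<nn>03' PHYSICAL;
ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 5 TO '<worker_host_5>:3<nn>03' PHYSICAL;
ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 6 TO '<worker_host_6>:3<nn>03' PHYSICAL;
ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 7 TO '<worker_host_7>:3<nn>03' PHYSICAL;
```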

Post re-partition, the table is distributed to the different nodes.

Originally, the total of 3.1 billion rows was spread across 21 partitions, with around 148 million records per partition (3.1 billion / 21 ≈ 148 million).

For the partition command to work, we first need to move all the partitions from the different nodes to a single node.

Below, I choose to leave partitions 1, 2 and 3 on their current server and move the other partitions from the other servers to this server.

Sample SQL:

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 4 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 5 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 6 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 7 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 8 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 9 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 10 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 11 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 12 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 13 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 14 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 15 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 16 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 17 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 18 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 19 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 20 TO '<server_name>:3<nn>03' PHYSICAL;

ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION 21 TO '<server_name>:3<nn>03' PHYSICAL;
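Instead of typing one statement per partition, the MOVE statements can be generated from M_CS_TABLES. This is a sketch of my own; '<server_name>' and 3<nn>03 are the same placeholders as above and must be replaced before running it.

```sql
-- Sketch: generate a MOVE PARTITION statement for every partition of EDIDS
-- that is not yet on the consolidation server.
SELECT 'ALTER TABLE "SAPERP"."EDIDS" MOVE PARTITION ' || TO_VARCHAR(part_id)
       || ' TO ''<server_name>:3<nn>03'' PHYSICAL;' AS move_statement
  FROM m_cs_tables
 WHERE schema_name = 'SAPERP'
   AND table_name  = 'EDIDS'
   AND host <> '<server_name>'
 ORDER BY part_id;
```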


After this, all the partitions are on one node of the scale-out environment.

Now I proceed with the partitioning command:
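To confirm that the consolidation worked, a per-host summary such as the following sketch should return a single row before the partitioning command is started; after the final distribution it should show one partition per worker node.

```sql
-- Sketch: partitions and rows of EDIDS per host:port.
SELECT host,
       port,
       COUNT(*)          AS partitions,
       SUM(record_count) AS total_rows
  FROM m_cs_tables
 WHERE schema_name = 'SAPERP'
   AND table_name  = 'EDIDS'
 GROUP BY host, port
 ORDER BY host, port;
```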

ALTER TABLE SAPERP.EDIDS PARTITION BY HASH (DOCNUM) PARTITIONS 7;
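To check the result, the partition specification can be read from the TABLES system view. This sketch assumes the PARTITION_SPEC column is available in your revision; afterwards the value is expected to reflect HASH 7 on DOCNUM.

```sql
-- Sketch: current partition specification of SAPERP.EDIDS.
SELECT schema_name,
       table_name,
       partition_spec
  FROM tables
 WHERE schema_name = 'SAPERP'
   AND table_name  = 'EDIDS';
```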

DETERMINING THE OPTIMUM COLUMN AND NUMBER OF PARTITIONS

So why did I consider DOCNUM the optimal partitioning column, and why was the number of partitions chosen as 7?

To choose a partitioning column, we need to analyze the table usage in depth.

Analyzing and understanding the SQLs on the table:

1. Check with the functional team. Ask them which columns they query frequently and which column is always part of the WHERE clause. If they are not sure, we can help them with plan cache data.

Example: if a table is repeatedly queried with a WHERE clause on the year, and the table holds data since 2015, a RANGE partition on the YEAR column creates a clear demarcation between hot and cold data.

Sample SQL for range partition:

ALTER TABLE SAPERP.BSEG PARTITION BY RANGE (BUKRS) (PARTITION 0 <= VALUES < 1000, PARTITION 1000 <= VALUES < 1101, PARTITION 2000 <= VALUES < 4000,PARTITION 4000 <= VALUES < 4031, PARTITION 4033 <= VALUES < 4055, PARTITION 4058 <= VALUES < 4257, PARTITION VALUE = 4900, PARTITION OTHERS);
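For the year example above, a range partition on the fiscal year might look like this. It is a hypothetical illustration, not taken from the post: it assumes GJAHR (a key field of BSEG) as the year column, and the year boundaries are placeholders to adapt to your data.

```sql
-- Hypothetical illustration: older fiscal years form cold partitions,
-- recent years stay in separate hot partitions.
ALTER TABLE SAPERP.BSEG PARTITION BY RANGE (GJAHR)
  (PARTITION '2015' <= VALUES < '2018',
   PARTITION '2018' <= VALUES < '2020',
   PARTITION '2020' <= VALUES < '2022',
   PARTITION OTHERS);               -- every other year, including future ones
```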

2. Check M_SQL_PLAN_CACHE.

With the query below, we can list the statements that run against the table and identify their WHERE clauses:

select statement_string, execution_count, total_execution_time from m_sql_plan_cache where statement_string like '%<TABLE_NAME>%' order by total_execution_time desc;
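To see which key columns these statements actually use, a rough heuristic is to count the cached statements on EDIDS that mention each column. This is my own sketch: a text match cannot distinguish a WHERE or JOIN column from a SELECT-list column, so it only points to where deeper analysis is worthwhile.

```sql
-- Sketch: cached statements on EDIDS mentioning each key column (MANDT omitted,
-- as it appears in almost every statement).
SELECT 'DOCNUM' AS key_column, COUNT(*) AS statements, SUM(execution_count) AS executions
  FROM m_sql_plan_cache
 WHERE statement_string LIKE '%EDIDS%' AND statement_string LIKE '%DOCNUM%'
UNION ALL
SELECT 'LOGDAT', COUNT(*), SUM(execution_count)
  FROM m_sql_plan_cache
 WHERE statement_string LIKE '%EDIDS%' AND statement_string LIKE '%LOGDAT%'
UNION ALL
SELECT 'LOGTIM', COUNT(*), SUM(execution_count)
  FROM m_sql_plan_cache
 WHERE statement_string LIKE '%EDIDS%' AND statement_string LIKE '%LOGTIM%'
UNION ALL
SELECT 'COUNTR', COUNT(*), SUM(execution_count)
  FROM m_sql_plan_cache
 WHERE statement_string LIKE '%EDIDS%' AND statement_string LIKE '%COUNTR%'
 ORDER BY executions DESC;
```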

3. Check which columns are joined to this table, using join statistics.

Download the SQL script HANA_SQL_Statistics_JoinStatistics_1.00.120+ from OSS note 1969700 and modify it as follows.

Go to the modification section and adapt it to your requirements.

In my case, the join statistics showed that most of the joins are on DOCNUM, so a HASH partition on this column should make the queries run faster.

4. In some cases, even these sources do not provide enough information or return no data. In that case, we have to enable the SQL trace for the specific table.
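A minimal sketch for switching the SQL trace on and off via SQL, assuming the [sqltrace] section of indexserver.ini. Restricting the trace to the specific table is done with the trace's table filter, which I have not spelled out here; its exact setting depends on your tool and revision.

```sql
-- Sketch: switch the SQL trace on (configure the table filter for
-- SAPERP.EDIDS in your trace configuration before the workload runs).
ALTER SYSTEM ALTER CONFIGURATION ('indexserver.ini', 'SYSTEM')
  SET ('sqltrace', 'trace') = 'on'
  WITH RECONFIGURE;

-- ... let a representative workload run ...

-- Switch it off again; the trace keeps growing as long as it is active.
ALTER SYSTEM ALTER CONFIGURATION ('indexserver.ini', 'SYSTEM')
  SET ('sqltrace', 'trace') = 'off'
  WITH RECONFIGURE;
```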

5. If no specific value ranges are queried frequently, and most of the columns are used most of the time, HASH partitioning is the best fit.

It is similar to round-robin partitioning, but the data is distributed according to a hash of one or two designated primary key columns.

- HASH partitioning can only be defined on primary key fields.

- It is best not to choose more than 2 primary key fields for HASH.

- Within the primary key, check which column has the most distinct values. That column can be chosen for the re-partition.

To determine which primary key column to choose for the re-partition, do the following:

a. Load the table fully into memory.

b. Open the table in Studio and find the primary key of the table.

c. Find the primary key column with the most distinct values.
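Steps a and c can be sketched in SQL as follows (my own queries, not from the original post). DISTINCT_COUNT in M_CS_COLUMNS is reported per partition, so summing it over the old 21 hash partitions gives an upper bound rather than an exact count: with HASH on all five key columns, the same DOCNUM can occur in several partitions.

```sql
-- Step a: load all columns of EDIDS into memory (slow for 3.1 billion rows).
LOAD "SAPERP"."EDIDS" ALL;

-- Step c: distinct values per key column, summed over all partitions
-- (an upper bound, see above).
SELECT column_name,
       SUM(distinct_count) AS distinct_values_upper_bound
  FROM m_cs_columns
 WHERE schema_name = 'SAPERP'
   AND table_name  = 'EDIDS'
   AND column_name IN ('MANDT', 'DOCNUM', 'LOGDAT', 'LOGTIM', 'COUNTR')
 GROUP BY column_name
 ORDER BY distinct_values_upper_bound DESC;

-- Exact but expensive alternative for a single column:
-- SELECT COUNT(DISTINCT docnum) FROM "SAPERP"."EDIDS";
```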

Hence, a HASH partition on DOCNUM is the best choice for the EDIDS table:

ALTER TABLE "SAPERP"."EDIDS" PARTITION BY HASH (DOCNUM) PARTITIONS 7;

NOTE: Whenever a table or partition that is not in memory is queried, HANA automatically loads it into memory, either partially or fully. If the table is partitioned, only the partition that holds the requested rows is loaded. Even if you need only 1 row from a partition of a table with 1 billion records, that partition is loaded, either partially or fully. HANA never loads a single row into memory on its own.

So, how do we determine the number of partitions in a scale-out scenario?

In a scale-out scenario, the number of partitions should be chosen so that the load is distributed across the HANA nodes. This applies, for example, to a table that is not yet partitioned and is approaching 1.5 billion rows.

It is recommended to use n-1 partitions, where n is the total number of HANA nodes including the master node (but excluding standby nodes). The reason for the -1 is that no partitions should sit on the master node: it is better to leave the master node free for transaction processing.

Examples:

- HANA with 1 master, 3 slaves and 1 standby: the number of partitions is 3. (Never count the standby node, because it has no data volume assigned to it.)

- HANA with 9 nodes (1 master, 7 slaves, 1 standby): the number of partitions should be 7, distributed across the worker nodes with the MOVE PARTITION command shown earlier.
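To count the nodes for the n-1 rule, a sketch against M_SERVICES can help; it assumes the COORDINATOR_TYPE column distinguishes master, worker (slave) and standby index servers. For the 9-node example, 1 master + 7 workers + 1 standby gives 7 partitions.

```sql
-- Sketch: index servers per role; the partition count is the number of workers.
SELECT coordinator_type,
       COUNT(*) AS indexservers
  FROM m_services
 WHERE service_name = 'indexserver'
 GROUP BY coordinator_type;

-- Host:port of each index server, usable as MOVE PARTITION targets.
SELECT host,
       port,
       coordinator_type
  FROM m_services
 WHERE service_name = 'indexserver'
 ORDER BY host;
```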

GENERAL RULES OF THUMB FOR PARTITIONING

- You can define as many partitions as you like, but it is generally not advisable for a table to have more than 100 partitions.

- It is recommended to have at least 100 million records per partition, and the memory utilization of each partition should never exceed 50 GB.

- A non-partitioned table cannot hold more than about 2.14 billion records; inserts beyond this limit fail with an error, so it is best to partition a table when it reaches 1.5 billion.

- For tables that are already partitioned, re-partition when the current partitioning is not optimal; if the record count per partition exceeds 1.5 billion, increase the number of partitions.

- Always choose the new number of partitions as a multiple or divisor of the current number. For example, if a table has 7 partitions, you can increase this to 7 × 2 = 14, and so on. This only helps the partitioning job run in parallel.

- The same limit of about 2.14 billion rows also applies to each individual partition.
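A sketch for checking existing tables against these rules of thumb (my own queries; the thresholds are the ones from this post, with 50 GB written as 53687091200 bytes).

```sql
-- Sketch: partitions (PART_ID > 0) above 1.5 billion rows or 50 GB of memory.
SELECT schema_name,
       table_name,
       part_id,
       record_count,
       ROUND(memory_size_in_total / 1073741824.0, 2) AS total_gb
  FROM m_cs_tables
 WHERE part_id > 0
   AND (record_count > 1500000000
        OR memory_size_in_total > 53687091200)
 ORDER BY record_count DESC;

-- Sketch: tables with more than 100 partitions.
SELECT schema_name,
       table_name,
       COUNT(*) AS partitions
  FROM m_cs_tables
 GROUP BY schema_name, table_name
HAVING COUNT(*) > 100
 ORDER BY partitions DESC;
```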

TYPES OF BASIC PARTITIONS AND THEORY INVOLVED

- The partitioning feature of the SAP HANA database splits column-store tables horizontally into disjunctive sub-tables or partitions. In this way, large tables can be broken down into smaller, more manageable parts. Partitioning is typically used in multiple-host systems.

- HANA cannot hold more than about 2.14 billion records in a non-partitioned table. Partitioning overcomes this limitation and also improves query performance in a scale-out scenario.

- There are several reasons for splitting tables into multiple partitions:

- To improve performance on delta merge operations

- If the WHERE clause of a query matches the partitioning criteria, then it may be possible to exclude some partitions from the execution of the query or even to execute it on a single partition only

- To avoid running into the limit of about 2 billion rows per table or partition

- For load balancing in scale-out environments

- SAP HANA provides administration tools for monitoring table distribution and redistributing tables. Table placement rules can assign certain tables always to the master or to slave index servers, or specify the number of rows that should trigger a repartitioning of the table. Table placement rules are created based on a classification of tables into table group types, subtypes and groups, which is part of the table metadata.

NOTE:

1. If the table has primary key fields, we can use only HASH or RANGE partitioning.

2. ROUNDROBIN can only be used when the table has no primary key fields.

3. RANGE partitioning cannot be based on non-primary-key fields.

4. Like RANGE partitioning, HASH partitioning can only be done on primary key fields.

5. HASH partitioning should use the primary key field with the maximum number of distinct values, as already discussed; this can be determined after loading the table into memory.

6. We can find the most frequently executed SQL statements on any table in M_SQL_PLAN_CACHE, as shown, which can also help determine the best partitioning type based on the WHERE clauses:

select statement_string, execution_count, total_execution_time from m_sql_plan_cache where statement_string like '%<TABLE_NAME>%' order by total_execution_time desc;

HANA Partitioning - Partitioning Performance Tips


- Consider the following possible optimization approaches:

- Make sure that existing partitioning optimally supports the most important SQL statements

- Define partitioning on as few columns as possible

- Use as few partitions as possible

- Try to avoid changes of records that require a remote uniqueness check or a partition move

- For HASH partitioning, include at least one of the key columns

- Starting with SAP HANA 1.00.122, OLTP accesses to partitioned tables are executed single-threaded, so the runtime can be longer than with parallelized execution (particularly when a large number of records is processed). You can set indexserver.ini -> [joins] -> single_thread_execution_for_partitioned_tables to 'false' to allow parallelized processing as well (e.g. for COUNT DISTINCT performance on a partitioned table). See SAP Note 2620310 for more information.

- The partitioning process can be parallelized with the split_threads parameter (SAP Note 2222250): a value of 80 is a good starting point for a production system, and you can consider increasing it up to 128 depending on system behavior.

- To avoid transaction timeouts during long-running partitioning SQL statements, increase the timeout parameters (see SAP Note 1909707):

indexserver.ini -> [transaction] -> idle_cursor_lifetime
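The parameters above can be set with ALTER SYSTEM ALTER CONFIGURATION. This is a sketch: the section name [partitioning] for split_threads is my assumption and should be checked in SAP Note 2222250, and '<value>' for idle_cursor_lifetime is a placeholder to be taken from SAP Note 1909707.

```sql
-- 1) Allow parallel execution of OLTP accesses to partitioned tables (SAP Note 2620310).
ALTER SYSTEM ALTER CONFIGURATION ('indexserver.ini', 'SYSTEM')
  SET ('joins', 'single_thread_execution_for_partitioned_tables') = 'false'
  WITH RECONFIGURE;

-- 2) Threads used for splitting during (re-)partitioning; section name assumed.
ALTER SYSTEM ALTER CONFIGURATION ('indexserver.ini', 'SYSTEM')
  SET ('partitioning', 'split_threads') = '80'
  WITH RECONFIGURE;

-- 3) Keep idle cursors alive during long-running partitioning statements;
--    '<value>' is a placeholder (see SAP Note 1909707).
ALTER SYSTEM ALTER CONFIGURATION ('indexserver.ini', 'SYSTEM')
  SET ('transaction', 'idle_cursor_lifetime') = '<value>'
  WITH RECONFIGURE;
```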

SQL COMMANDS FOR PARTITIONING

1. RANGE:

Sample SQL:

ALTER TABLE SAPERP.BSEG PARTITION BY RANGE (BUKRS) (PARTITION 0 <= VALUES < 1000, PARTITION 1000 <= VALUES < 1101, PARTITION 2000 <= VALUES < 4000, PARTITION 4000 <= VALUES < 4031, PARTITION 4033 <= VALUES < 4055, PARTITION 4058 <= VALUES < 4257, PARTITION VALUE = 4900, PARTITION OTHERS);

We have to be very careful with the ranges we choose, because range partitioning does not ensure an equal distribution of data. For example, the query above defines the following ranges:

PARTITION 1 = BUKRS->0 to 999

PARTITION 2 = BUKRS->1000 to 1100

PARTITION 3 = BUKRS->2000 to 3999

PARTITION 4 = BUKRS->4000 to 4030

PARTITION 5 = BUKRS->4033 to 4054

PARTITION 6 = BUKRS->4058 to 4256

PARTITION 7 = BUKRS = 4900

PARTITION 8 = BUKRS-> Any other value

We dedicated one full partition to BUKRS = 4900 because that value alone has around 400 million records. The ranges were chosen so that each partition holds around 300 to 400 million records. Any other BUKRS value goes into PARTITION 8 (OTHERS). However, we need to be very careful with the ranges: new inserts for company codes outside the defined ranges all go into partition 8, so we must make sure its row count does not grow too large.

Used wisely, range partitioning gives a good separation between hot and cold data.
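Before choosing the ranges, it helps to see how the rows are distributed across company codes. A simple sketch of my own; a full GROUP BY on a table of this size is expensive, so it is best run outside peak hours.

```sql
-- Sketch: rows per company code, used to size the BUKRS ranges.
SELECT bukrs,
       COUNT(*) AS row_count
  FROM saperp.bseg
 GROUP BY bukrs
 ORDER BY bukrs;
```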

2. ROUNDROBIN:

SQL:

ALTER TABLE SAPERP."/BIC/FZGFSMT02" PARTITION BY ROUNDROBIN PARTITIONS 7;

For round-robin partitioning, a primary requirement is that the table has no primary key fields. A round-robin partitioning with 7 partitions then inserts data into all partitions in a cyclic manner:

Row 1 will go to partition 1

Row 2 will go to partition 2

Row 3 will go to partition 3

Row 4 will go to partition 4

Row 5 will go to partition 5

Row 6 will go to partition 6

Row 7 will go to partition 7

Row 8 will go to partition 1

Row 9 will go to partition 2

... and so on.
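The cyclic assignment above can be written as a formula. This is an idealized model of the description in this post, not HANA's documented internal algorithm: with N partitions, row r (counting from 1) goes to partition

$$
p(r) = \big((r - 1) \bmod N\big) + 1
$$

With N = 7, rows 1 to 7 go to partitions 1 to 7, row 8 goes to partition 1 and row 9 to partition 2, so each partition receives about 1/N of the rows.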

MULTI-LEVEL PARTITIONING:

What is multi-level partitioning? Multi-level partitioning can be used to overcome the limitation of single-level hash partitioning and range partitioning, that is, the limitation of only being able to use key columns as partitioning columns. Multi-level partitioning makes it possible to partition by a column that is not part of the primary key.

There are 4 types of multi-level partitioning:

1. HASH-RANGE (most common)

2. ROUNDROBIN-RANGE

3. HASH-HASH

4. RANGE-RANGE

SAMPLE COMMANDS:

1. HASH-RANGE partition: ALTER TABLE SAPERP.CDPOS_RANGE1 PARTITION BY HASH (a, b) PARTITIONS 4, RANGE (c) (PARTITION 1 <= VALUES < 10, PARTITION 10 <= VALUES < 20)

2. ROUNDROBIN-RANGE partition: ALTER TABLE SAPERP.CDPOS_RANGE1 PARTITION BY ROUNDROBIN PARTITIONS 4, RANGE (c) (PARTITION 1 <= VALUES < 10, PARTITION 10 <= VALUES < 20) or

ALTER TABLE SAPBE3."/BIC/F****RC002" WITH PARAMETERS ('PARTITION_SPEC' = 'ROUNDROBIN 5; RANGE KEY_Z***002P 0,1,2,*');

3. HASH-HASH: ALTER TABLE SAPERP.CDPOS_RANGE1 PARTITION BY HASH (a, b) PARTITIONS 4, HASH (c) PARTITIONS 7

4. RANGE-RANGE: ALTER TABLE SAPERP.CDPOS_RANGE1 PARTITION BY RANGE (a) (PARTITION 1 <= VALUES < 5, PARTITION 5 <= VALUES < 20), RANGE (c) (PARTITION 1 <= VALUES < 5, PARTITION 5 <= VALUES < 20)

A. HASH-RANGE:

You can use this approach to implement time-based partitioning, for example to leverage a date column and build partitions per month or year. The performance of the delta merge operation depends on the size of the main index of a table. If data is inserted into a table over time and the table also contains temporal information, for example a date, multi-level partitioning may be an ideal candidate. If the partitions containing old data are rarely modified, there is no need for a delta merge on them: the delta merge is only required on the new partitions where new data is inserted. With time-based partitioning, the run time of the delta merge operation therefore remains relatively constant over time as new partitions are created and used. As mentioned above, the second level of partitioning relaxes the key column restriction (for hash-range, hash-hash and range-range).
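A hypothetical time-based HASH-RANGE layout for EDIDS could look like this. It is an illustration only, not the partitioning used in this post; it assumes LOGDAT is stored in the SAP date format YYYYMMDD, and the year boundaries are placeholders. 7 hash partitions times 4 ranges give 28 partitions, and new year ranges have to be added over time so that current data does not pile up in OTHERS.

```sql
-- Hypothetical illustration: hash on DOCNUM at the first level,
-- yearly ranges on LOGDAT at the second level.
ALTER TABLE SAPERP.EDIDS PARTITION BY
  HASH (DOCNUM) PARTITIONS 7,
  RANGE (LOGDAT)
    (PARTITION '20180101' <= VALUES < '20190101',
     PARTITION '20190101' <= VALUES < '20200101',
     PARTITION '20200101' <= VALUES < '20210101',
     PARTITION OTHERS);
```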

B. ROUNDROBIN-RANGE: Round-robin-range multi-level partitioning is the same as hash-range multi-level partitioning, but with round-robin partitioning at the first level.

C. HASH-HASH: Hash-hash multi-level partitioning uses hash partitioning at both levels. Its advantage is that the hash partitioning at the second level may be defined on a non-key column.

D. RANGE-RANGE: Range-range multi-level partitioning uses range partitioning at both levels. Its advantage is that the range partitioning at the second level may be defined on a non-key column.

OTHER USEFUL OSS NOTES: In addition to the above, SAP itself provides partitioning recommendations for many tables:

- 2289491 - Best Practices for Partitioning of Finance Tables

- 2418299 - SAP HANA: Partitioning Best Practices / Examples for SAP Tables

- 1695778 - Partitioning of BW tables in the SAP HANA database

Thanks for reading! Please leave a suggestion!


1 -

Business application stu

1 -

Business Application Studio

1 -

Business Architecture

1 -

Business Communication Services

1 -

Business Continuity

1 -

Business Data Fabric

3 -

Business Partner

12 -

Business Partner Master Data

10 -

Business Technology Platform

2 -

Business Trends

1 -

CA

1 -

calculation view

1 -

CAP

3 -

Capgemini

1 -

CAPM

1 -

Catalyst for Efficiency: Revolutionizing SAP Integration Suite with Artificial Intelligence (AI) and

1 -

CCMS

2 -

CDQ

12 -

CDS

2 -

Cental Finance

1 -

Certificates

1 -

CFL

1 -

Change Management

1 -

chatbot

1 -

chatgpt

3 -

CL_SALV_TABLE

2 -

Class Runner

1 -

Classrunner

1 -

Cloud ALM Monitoring

1 -

Cloud ALM Operations

1 -

cloud connector

1 -

Cloud Extensibility

1 -

Cloud Foundry

4 -

Cloud Integration

6 -

Cloud Platform Integration

2 -

cloudalm

1 -

communication

1 -

Compensation Information Management

1 -

Compensation Management

1 -

Compliance

1 -

Compound Employee API

1 -

Configuration

1 -

Connectors

1 -

Consolidation Extension for SAP Analytics Cloud

2 -

Control Indicators.

1 -

Controller-Service-Repository pattern

1 -

Conversion

1 -

Cosine similarity

1 -

cryptocurrency

1 -

CSI

1 -

ctms

1 -

Custom chatbot

3 -

Custom Destination Service

1 -

custom fields

1 -

Customer Experience

1 -

Customer Journey

1 -

Customizing

1 -

cyber security

3 -

Data

1 -

Data & Analytics

1 -

Data Aging

1 -

Data Analytics

2 -

Data and Analytics (DA)

1 -

Data Archiving

1 -

Data Back-up

1 -

Data Governance

5 -

Data Integration

2 -

Data Quality

12 -

Data Quality Management

12 -

Data Synchronization

1 -

data transfer

1 -

Data Unleashed

1 -

Data Value

8 -

database tables

1 -

Datasphere

2 -

datenbanksicherung

1 -

dba cockpit

1 -

dbacockpit

1 -

Debugging

2 -

Delimiting Pay Components

1 -

Delta Integrations

1 -

Destination

3 -

Destination Service

1 -

Developer extensibility

1 -

Developing with SAP Integration Suite

1 -

Devops

1 -

digital transformation

1 -

Documentation

1 -

Dot Product

1 -

DQM

1 -

dump database

1 -

dump transaction

1 -

e-Invoice

1 -

E4H Conversion

1 -

Eclipse ADT ABAP Development Tools

2 -

edoc

1 -

edocument

1 -

ELA

1 -

Embedded Consolidation

1 -

Embedding

1 -

Embeddings

1 -

Employee Central

1 -

Employee Central Payroll

1 -

Employee Central Time Off

1 -

Employee Information

1 -

Employee Rehires

1 -

Enable Now

1 -

Enable now manager

1 -

endpoint

1 -

Enhancement Request

1 -

Enterprise Architecture

1 -

ETL Business Analytics with SAP Signavio

1 -

Euclidean distance

1 -

Event Dates

1 -

Event Driven Architecture

1 -

Event Mesh

2 -

Event Reason

1 -

EventBasedIntegration

1 -

EWM

1 -

EWM Outbound configuration

1 -

EWM-TM-Integration

1 -

Existing Event Changes

1 -

Expand

1 -

Expert

2 -

Expert Insights

1 -

Fiori

14 -

Fiori Elements

2 -

Fiori SAPUI5

12 -

Flask

1 -

Full Stack

8 -

Funds Management

1 -

General

1 -

Generative AI

1 -

Getting Started

1 -

GitHub

8 -

Grants Management

1 -

groovy

1 -

GTP

1 -

HANA

6 -

HANA Cloud

2 -

Hana Cloud Database Integration

2 -

HANA DB

2 -

HANA XS Advanced

1 -

Historical Events

1 -

home labs

1 -

HowTo

1 -

HR Data Management

1 -

html5

8 -

HTML5 Application

1 -

Identity cards validation

1 -

idm

1 -

Implementation

1 -

input parameter

1 -

instant payments

1 -

Integration

3 -

Integration Advisor

1 -

Integration Architecture

1 -

Integration Center

1 -

Integration Suite

1 -

intelligent enterprise

1 -

iot

1 -

Java

1 -

job

1 -

Job Information Changes

1 -

Job-Related Events

1 -

Job_Event_Information

1 -

joule

4 -

Journal Entries

1 -

Just Ask

1 -

Kerberos for ABAP

8 -

Kerberos for JAVA

8 -

KNN

1 -

Launch Wizard

1 -

learning content

2 -

Life at SAP

1 -

lightning

1 -

Linear Regression SAP HANA Cloud

1 -

local tax regulations

1 -

LP

1 -

Machine Learning

2 -

Marketing

1 -

Master Data

3 -

Master Data Management

14 -

Maxdb

2 -

MDG

1 -

MDGM

1 -

MDM

1 -

Message box.

1 -

Messages on RF Device

1 -

Microservices Architecture

1 -

Microsoft Universal Print

1 -

Middleware Solutions

1 -

Migration

5 -

ML Model Development

1 -

Modeling in SAP HANA Cloud

8 -

Monitoring

3 -

MTA

1 -

Multi-Record Scenarios

1 -

Multiple Event Triggers

1 -

Neo

1 -

New Event Creation

1 -

New Feature

1 -

Newcomer

1 -

NodeJS

2 -

ODATA

2 -

OData APIs

1 -

odatav2

1 -

ODATAV4

1 -

ODBC

1 -

ODBC Connection

1 -

Onpremise

1 -

open source

2 -

OpenAI API

1 -

Oracle

1 -

PaPM

1 -

PaPM Dynamic Data Copy through Writer function

1 -

PaPM Remote Call

1 -

PAS-C01

1 -

Pay Component Management

1 -

PGP

1 -

Pickle

1 -

PLANNING ARCHITECTURE

1 -

Popup in Sap analytical cloud

1 -

PostgrSQL

1 -

POSTMAN

1 -

Process Automation

2 -

Product Updates

4 -

PSM

1 -

Public Cloud

1 -

Python

4 -

Qlik

1 -

Qualtrics

1 -

RAP

3 -

RAP BO

2 -

Record Deletion

1 -

Recovery

1 -

recurring payments

1 -

redeply

1 -

Release

1 -

Remote Consumption Model

1 -

Replication Flows

1 -

research

1 -

Resilience

1 -

REST

1 -

REST API

1 -

Retagging Required

1 -

Risk

1 -

Rolling Kernel Switch

1 -

route

1 -

rules

1 -

S4 HANA

1 -

S4 HANA Cloud

1 -

S4 HANA On-Premise

1 -

S4HANA

3 -

S4HANA_OP_2023

2 -

SAC

10 -

SAC PLANNING

9 -

SAP

4 -

SAP ABAP

1 -

SAP Advanced Event Mesh

1 -

SAP AI Core

8 -

SAP AI Launchpad

8 -

SAP Analytic Cloud Compass

1 -

Sap Analytical Cloud

1 -

SAP Analytics Cloud

4 -

SAP Analytics Cloud for Consolidation

3 -

SAP Analytics Cloud Story

1 -

SAP analytics clouds

1 -

SAP BAS

1 -

SAP Basis

6 -

SAP BODS

1 -

SAP BODS certification.

1 -

SAP BTP

21 -

SAP BTP Build Work Zone

2 -

SAP BTP Cloud Foundry

6 -

SAP BTP Costing

1 -

SAP BTP CTMS

1 -

SAP BTP Innovation

1 -

SAP BTP Migration Tool

1 -

SAP BTP SDK IOS

1 -

SAP Build

11 -

SAP Build App

1 -

SAP Build apps

1 -

SAP Build CodeJam

1 -

SAP Build Process Automation

3 -

SAP Build work zone

10 -

SAP Business Objects Platform

1 -

SAP Business Technology

2 -

SAP Business Technology Platform (XP)

1 -

sap bw

1 -

SAP CAP

2 -

SAP CDC

1 -

SAP CDP

1 -

SAP CDS VIEW

1 -

SAP Certification

1 -

SAP Cloud ALM

4 -

SAP Cloud Application Programming Model

1 -

SAP Cloud Integration for Data Services

1 -

SAP cloud platform

8 -

SAP Companion

1 -

SAP CPI

3 -

SAP CPI (Cloud Platform Integration)

2 -

SAP CPI Discover tab

1 -

sap credential store

1 -

SAP Customer Data Cloud

1 -

SAP Customer Data Platform

1 -

SAP Data Intelligence

1 -

SAP Data Migration in Retail Industry

1 -

SAP Data Services

1 -

SAP DATABASE

1 -

SAP Dataspher to Non SAP BI tools

1 -

SAP Datasphere

10 -

SAP DRC

1 -

SAP EWM

1 -

SAP Fiori

2 -

SAP Fiori App Embedding

1 -

Sap Fiori Extension Project Using BAS

1 -

SAP GRC

1 -

SAP HANA

1 -

SAP HCM (Human Capital Management)

1 -

SAP HR Solutions

1 -

SAP IDM

1 -

SAP Integration Suite

9 -

SAP Integrations

4 -

SAP iRPA

2 -

SAP Learning Class

1 -

SAP Learning Hub

1 -

SAP Odata

2 -

SAP on Azure

1 -

SAP PartnerEdge

1 -

sap partners

1 -

SAP Password Reset

1 -

SAP PO Migration

1 -

SAP Prepackaged Content

1 -

SAP Process Automation

2 -

SAP Process Integration

2 -

SAP Process Orchestration

1 -

SAP S4HANA

2 -

SAP S4HANA Cloud

1 -

SAP S4HANA Cloud for Finance

1 -

SAP S4HANA Cloud private edition

1 -

SAP Sandbox

1 -

SAP STMS

1 -

SAP successfactors

3 -

SAP SuccessFactors HXM Core

1 -

SAP Time

1 -

SAP TM

2 -

SAP Trading Partner Management

1 -

SAP UI5

1 -

SAP Upgrade

1 -

SAP Utilities

1 -

SAP-GUI

8 -

SAP_COM_0276

1 -

SAPBTP

1 -

SAPCPI

1 -

SAPEWM

1 -

sapmentors

1 -

saponaws

2 -

SAPS4HANA

1 -

SAPUI5

4 -

schedule

1 -

Secure Login Client Setup

8 -

security

9 -

Selenium Testing

1 -

SEN

1 -

SEN Manager

1 -

service

1 -

SET_CELL_TYPE

1 -

SET_CELL_TYPE_COLUMN

1 -

SFTP scenario

2 -

Simplex

1 -

Single Sign On

8 -

Singlesource

1 -

SKLearn

1 -

soap

1 -

Software Development

1 -

SOLMAN

1 -

solman 7.2

2 -

Solution Manager

3 -

sp_dumpdb

1 -

sp_dumptrans

1 -

SQL

1 -

sql script

1 -

SSL

8 -

SSO

8 -

Substring function

1 -

SuccessFactors

1 -

SuccessFactors Platform

1 -

SuccessFactors Time Tracking

1 -

Sybase

1 -

system copy method

1 -

System owner

1 -

Table splitting

1 -

Tax Integration

1 -

Technical article

1 -

Technical articles

1 -

Technology Updates

1 -

Technology Updates

1 -

Technology_Updates

1 -

terraform

1 -

Threats

1 -

Time Collectors

1 -

Time Off

2 -

Time Sheet

1 -

Time Sheet SAP SuccessFactors Time Tracking

1 -

Tips and tricks

2 -

toggle button

1 -

Tools

1 -

Trainings & Certifications

1 -

Transport in SAP BODS

1 -

Transport Management

1 -

TypeScript

2 -

ui designer

1 -

unbind

1 -

Unified Customer Profile

1 -

UPB

1 -

Use of Parameters for Data Copy in PaPM

1 -

User Unlock

1 -

VA02

1 -

Validations

1 -

Vector Database

2 -

Vector Engine

1 -

Visual Studio Code

1 -

VSCode

1 -

Web SDK

1 -

work zone

1 -

workload

1 -

xsa

1 -

XSA Refresh

1

- « Previous

- Next »

Related Content

- Partitioning a table in HANA using Range Partitioning using multiple values from Partitioning column in Technology Q&A

- explore the business continuity recovery sap solutions on AWS DRS in Technology Blogs by Members

- Single Sign On to SAP Cloud Integration (CPI runtime) from an external Identity Provider in Technology Blogs by SAP

- SAP Datasphere - Space, Data Integration, and Data Modeling Best Practices in Technology Blogs by SAP

- Migrating BP Counterparty role (TR0151) in Technology Blogs by Members

Top kudoed authors

| User | Count |

|---|---|

| 12 | |

| 12 | |

| 7 | |

| 5 | |

| 5 | |

| 4 | |

| 4 | |

| 3 | |

| 3 | |

| 3 |