- SAP LT Replication Server for CFin - Third-Party S...

Enterprise Resource Planning Blogs by SAP

former_member27

06-11-2020

3:32 PM

This blog is intended for project members responsible for implementing Central Finance with a non-SAP system. It explains the steps to follow and the special considerations for transferring data into the SLT staging tables.

Introduction:

Various tools are available to load data into the simplified SLT staging area. The staging area is used to connect source systems whose data models differ from that of the Central Finance system. A new mass transfer ID is needed in SLT for the source system. General journal entries (including source references, profit center, and trading partner) for accounting documents (e.g. invoices, credit memos) can be transferred from the source system to Central Finance.

After the data is replicated, the Application Interface Framework (AIF) is triggered to control data processing within the Central Finance system. FI document processing is monitored in AIF, which displays the error messages for documents that could not be posted in the Central Finance system. These documents can be corrected and reprocessed from within AIF.

1-Pre-Requisites:

- Install the current content version: download the content file and upload it to the SAP Landscape Transformation Replication Server system using program DMC_UPLOAD_OBJECT.

- The upload program has two parameters. For the first parameter, specify the path to the list file (*_LIST.TXT). For the second parameter, specify the path to the data file (*_DATA_xxx.txt).

- Create the tables for the staging area. The following database tables need to be generated:

| Table | Description |
|---|---|
| /1LT/CF_E_HEADER | Document header table |
| /1LT/CF_E_ACCT | Accounting items |
| /1LT/CF_E_DEBIT | Debtor items |
| /1LT/CF_E_CREDIT | Creditor items |
| /1LT/CF_E_WHTAX | Withholding tax items |
| /1LT/CF_E_PRDTAX | Product tax items |
| /1LT/CF_E_EXTENT | Customer extension on header level |
| /1LT/CF_E_EXT_IT | Customer extension on item level |

You can start a data load once the data has been uploaded into the staging area. You can also start the replication process for the staging area, in which case data is replicated to Central Finance as soon as it is inserted. Because header tables are linked to dependent tables, make sure that all records for the dependent tables are inserted before the corresponding header records, since replication might start as soon as header records are inserted.
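The insert-order rule above can be sketched as follows. This is only an illustration in Python, using an in-memory SQLite database as a stand-in for the staging area. The table names come from the list above, while the two columns (`UNIQUE_ID`, `PAYLOAD`), the helper names, and the choice to treat every non-header table as a dependent table are assumptions made for the example.

```python
import sqlite3

# Table names are taken from the staging-area list; the header table goes last.
HEADER_TABLE = "/1LT/CF_E_HEADER"
DEPENDENT_TABLES = [
    "/1LT/CF_E_ACCT",
    "/1LT/CF_E_DEBIT",
    "/1LT/CF_E_CREDIT",
    "/1LT/CF_E_WHTAX",
    "/1LT/CF_E_PRDTAX",
    "/1LT/CF_E_EXTENT",
    "/1LT/CF_E_EXT_IT",
]


def make_demo_db():
    """Create an in-memory stand-in for the staging area (hypothetical columns)."""
    conn = sqlite3.connect(":memory:")
    for table in DEPENDENT_TABLES + [HEADER_TABLE]:
        conn.execute(f'CREATE TABLE "{table}" (UNIQUE_ID TEXT, PAYLOAD TEXT)')
    return conn


def load_document_set(conn, rows_by_table):
    """Insert dependent rows first and header rows last; return the order used."""
    unknown = set(rows_by_table) - set(DEPENDENT_TABLES) - {HEADER_TABLE}
    if unknown:
        raise ValueError(f"unknown staging tables: {sorted(unknown)}")
    order = [t for t in DEPENDENT_TABLES if t in rows_by_table]
    if HEADER_TABLE in rows_by_table:
        order.append(HEADER_TABLE)
    with conn:  # commits on success, rolls back if any insert fails
        for table in order:
            conn.executemany(
                f'INSERT INTO "{table}" (UNIQUE_ID, PAYLOAD) VALUES (?, ?)',
                rows_by_table[table],
            )
    return order
```

Whatever tool performs the load, the point is the same: the header record is written only after all of its dependent records are in place.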

2-Options to update Data in SLT Staging Table

- ABAP Program for SLT Staging Area

- SAP HANA smart data integration

- BAPI – Inbound Interface

- Magnitude

ABAP Program for SLT Staging Area

Requirements: Customer transactions from the non-SAP instance need to be replicated to the S/4HANA Central Finance system via SLT. To transfer the accounting documents, an ABAP program needs to be developed that writes the information to the SLT staging area, from where the data is passed on to the Central Finance AIF interface via standard SLT triggers.

High level approach:

Step 1: Input data files (.csv) containing the details of the accounting documents are uploaded to the SLT application server instance via SFTP. The data fields in the files follow the standard SLT table structures for non-SAP data transmission.

Step 2: The ABAP program reads the input files from the application layer on a scheduled basis, parses them according to the SLT structures, maps the values to the right fields, and writes them to the SLT staging tables listed under Pre-Requisites.

Step 3: Once a file has been processed successfully, it is marked so that the same file is not picked up in the next run.

Step 4: Once the records are populated in the SLT staging tables (dependent tables first, header table last), the standard SAP SLT database triggers pick up the data based on the active SLT replication configuration and replicate it to the Central Finance AIF interface for processing.
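Steps 2 and 3 can be sketched as a small file-pickup loop. The blog describes an ABAP program; the Python below only illustrates the control flow. The function name `process_inbound`, the `handle_rows` callback, and the idea of marking a file as processed by moving it to an archive directory are assumptions for the example (the blog leaves the marking mechanism open).

```python
import csv
import shutil
from pathlib import Path


def process_inbound(inbound_dir, archive_dir, handle_rows):
    """Parse each CSV file in inbound_dir and archive it once handled.

    handle_rows(file_name, rows) is a caller-supplied function that maps the
    parsed rows and writes them to the staging tables. If it raises, the file
    is left in place so that a later run can pick it up again.
    """
    inbound = Path(inbound_dir)
    archive = Path(archive_dir)
    archive.mkdir(parents=True, exist_ok=True)
    processed, failed = [], []
    for path in sorted(inbound.glob("*.csv")):
        try:
            with path.open(newline="", encoding="utf-8") as handle:
                rows = list(csv.DictReader(handle))
            handle_rows(path.name, rows)
        except Exception as error:  # keep going with the remaining files
            failed.append((path.name, str(error)))
            continue
        # Moving the file is what prevents a second pickup in the next run.
        shutil.move(str(path), str(archive / path.name))
        processed.append(path.name)
    return processed, failed
```

A scheduled job would call this once per run, passing the function that performs the field mapping and the staging-table inserts.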

Scope –

- Read the file data from the SLT application server, parse it according to the SLT structures, apply the field mapping, and write it to the SLT staging area.

- The ABAP program includes duplicate and data redundancy checks.

- Certain fields need to be converted to SAP DDIC types; this conversion is built into the ABAP program.

- No data harmonization or complex mapping is handled in the ABAP program. The mapping is assumed to be provided in the input file.

- Authorization logic needs to be validated during the ABAP program build.

- The ABAP program contains logic to move each processed file to another folder (or uses another approach) so that the same file is not picked up again.

- Basic/unit testing of the ABAP program from the SAP side, using the data samples provided.

- Update function modules (FMs) are used to ensure data consistency during data updates.

- Failed records are identified during file processing.
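The duplicate check and the determination of failed records from the scope list could look like the sketch below. It is written in Python for illustration only. The function name, the rejection reasons, and the choice of key fields are assumptions; `UNIQUE_ID` is the only field name taken from this blog.

```python
def split_records(rows, key_fields, seen_keys=()):
    """Separate parsed CSV rows into accepted rows and rejected rows.

    A row is rejected when one of its key fields is empty or when its key has
    already been seen, either earlier in the same file or in seen_keys (for
    example, keys that are already present in the staging table).
    """
    seen = set(seen_keys)
    accepted, rejected = [], []
    # Data rows start on line 2 because line 1 holds the column names.
    for line_no, row in enumerate(rows, start=2):
        key = tuple((row.get(field) or "").strip() for field in key_fields)
        if "" in key:
            rejected.append((line_no, "missing key field", row))
        elif key in seen:
            rejected.append((line_no, "duplicate key", row))
        else:
            seen.add(key)
            accepted.append(row)
    return accepted, rejected
```

The rejected list carries the line number and a reason, which gives the person monitoring the load enough information to correct the input file and resubmit it.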

Assumptions-

- A separate .csv file is created for each SAP SLT table structure and uploaded to the application layer file directory.

- UNIQUE_ID and the other key fields are provided in the input file; the ABAP program does not need to generate them.

- The input file is available on the SLT application server (AL11). The file transfer from SFTP to the SLT application server is not in scope for the program.
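Because each table structure arrives in its own file and the keys are supplied by the source, a cross-file consistency check before loading can be useful. The Python sketch below assumes that dependent rows reference their header through the `UNIQUE_ID` field, which this blog mentions but does not describe in detail; the function name is hypothetical.

```python
def find_orphans(header_rows, dependent_rows_by_table, id_field="UNIQUE_ID"):
    """Return, per dependent table, the IDs that have no matching header row."""
    header_ids = {row[id_field] for row in header_rows}
    orphans = {}
    for table, rows in dependent_rows_by_table.items():
        missing = sorted({row[id_field] for row in rows} - header_ids)
        if missing:
            orphans[table] = missing
    return orphans
```

An empty result means every dependent row has a header in the same delivery. A non-empty result points to files that were delivered incompletely or out of step.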

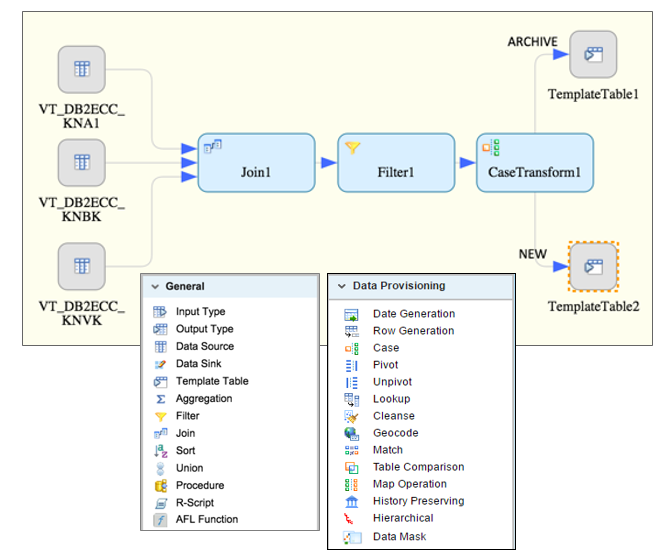

SAP HANA smart data integration

- SDI flows are easy to design and can handle complex data flows

- Fully graphical user interface

- Drag-and-drop design paradigm

- Same approach for simple and complex flows, and for single or multiple flows

Available Transformations

Basic SQL: Aggregation, Filter, Join, Sort and Union

Advanced SQL: Case, Lookup, Pivot and Unpivot

Data Life Cycle: Date Generation, History Preserving, Map Operation, Row Generation, Table Compare and Data Mask

Data Quality: Cleanse, Match and Geocode

Code Execution: AFL, Procedure and R Script

Data Load Process flow:

BAPI – Inbound Interface

Approach: Develop a custom program that reads files stored on the application server and posts accounting documents in S/4HANA via BAPI/IDoc.

Pros

- The file format and the sequence of the data can be flexible.

- Header and line items can be kept in the same file, linked by a unique ID.

Cons

- No standard content (SLT) can be used to post accounting documents in S/4HANA.

- Extensive testing is required for the custom development.

- The standard error monitoring and reprocessing capabilities of AIF cannot be leveraged.

- A custom error handling strategy has to be developed.

- Cannot utilize SAP Customer Support System, referencing the E2E failure point for the Internal

- Any system upgrade may require adjustments to the custom code.

- Mapping of key data such as company code and profit center has to be custom built (Master Data Governance is not available for this).
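The single-file layout noted under Pros, with header and line items sharing a unique ID, could be parsed roughly as follows before each document is handed to the posting call. This is a Python sketch for illustration. The `RECORD_TYPE` column and its `H` marker are assumptions about the file layout, not something this blog specifies.

```python
import csv
import io


def group_documents(csv_text, id_field="UNIQUE_ID", type_field="RECORD_TYPE"):
    """Group rows of a combined file into one header plus items per unique ID."""
    documents = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        doc = documents.setdefault(row[id_field], {"header": None, "items": []})
        if row[type_field] == "H":  # hypothetical marker for header rows
            doc["header"] = row
        else:
            doc["items"].append(row)
    return documents
```

Each entry of the result corresponds to one accounting document. A custom program would still have to add its own validation, mapping, and error handling around the posting step, which is the main cost listed under Cons.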

Magnitude:

SAP Central Finance Transaction Replication by Magnitude is a key accelerator for Central Finance implementation projects, enabling integration with non-SAP ERP source systems via predefined, purpose-built capabilities.

SAP Central Finance Transaction Replication by Magnitude accelerates and maximizes the value of your SAP Central Finance investment:

- Connects and normalizes detailed transactions from non-SAP sources in real-time

- Provides drilldown to non-SAP operational detail

- Enables write-back to source for shared service processes

- Tracks status and changes to data through the replication and posting processes

Important Notes for Non-SAP System

2610660 - SAP LT Replication Server for Central Finance - Third-Party System Integration

2589428 - Third Party Interface: Posting documents with more than 900 line items

2551200 - Central Finance Third Party Interface: Additional Filed PRDTAX_CODE in Structure CF_E_DEBIT and CF_E_CREDIT

Conclusion:

This blog is useful for customers and consultants who are planning to implement Central Finance with a non-SAP system. It covers the considerations for loading data into the SLT staging area and the options that are available for doing so.

- SAP Managed Tags:

- SAP S/4HANA Finance,

- FIN (Finance)
