- SAP Data Intelligence as Optimization Platform

former_member67


05-11-2020

8:03 AM

This article briefly discusses what optimization entails and how widely it is used, by everyday users and enterprises alike.

Optimization techniques (or prescriptive analytics) are used to determine optimal solution (best decision) to a problem, formulated in form of (linear/non-linear) mathematical “Objective function” given the set of “Decision Variables” bound by a list of (linear/non-linear) mathematical “Constraints”. Modern day optimization problems can have several million decision variables and are typically solved using sophisticated “Solvers” such as IBM CPLEX, Gurobi or FICO Xpress. Most machine learning algorithms solve an optimization problem internally using methods like “Gradient Descent”, thus optimization has become an important part of analytics-based solutions in Enterprises.

Optimization Techniques can fall under following categories:

1) Linear Programming

2) Integer Programming (or Mixed Integer Programming)

3) Goal Programming

4) Non-Linear Programming

5) Meta-Heuristics Programming

In rest of the article, I will show how SAP Data Intelligence provides end-to-end orchestration platform to host, compile, solve and execute an optimization problem that could fall in categories listed above.

As a sample scenario, I am considering a Diet Optimization problem. The objective is to get the cost optimized balanced diet, based on list of ingredients and cost of each ingredient. We will see Step-by-step on technically implementing this Optimization problem which falls under Mixed Integer Programming (MIP) Problem in SAP Data Intelligence.

So let’s get started: -

SAP DI ML Scenario Manager can be used to create a single Dashboard where all artifacts related to an Optimization problem can be stored and managed through their end of life. Artifacts like data-sets: Datasets (or) Model Formulation file (in LP format), Jupyter Notebook for experimentation, Pipelines for optimization solvers producing solver output.

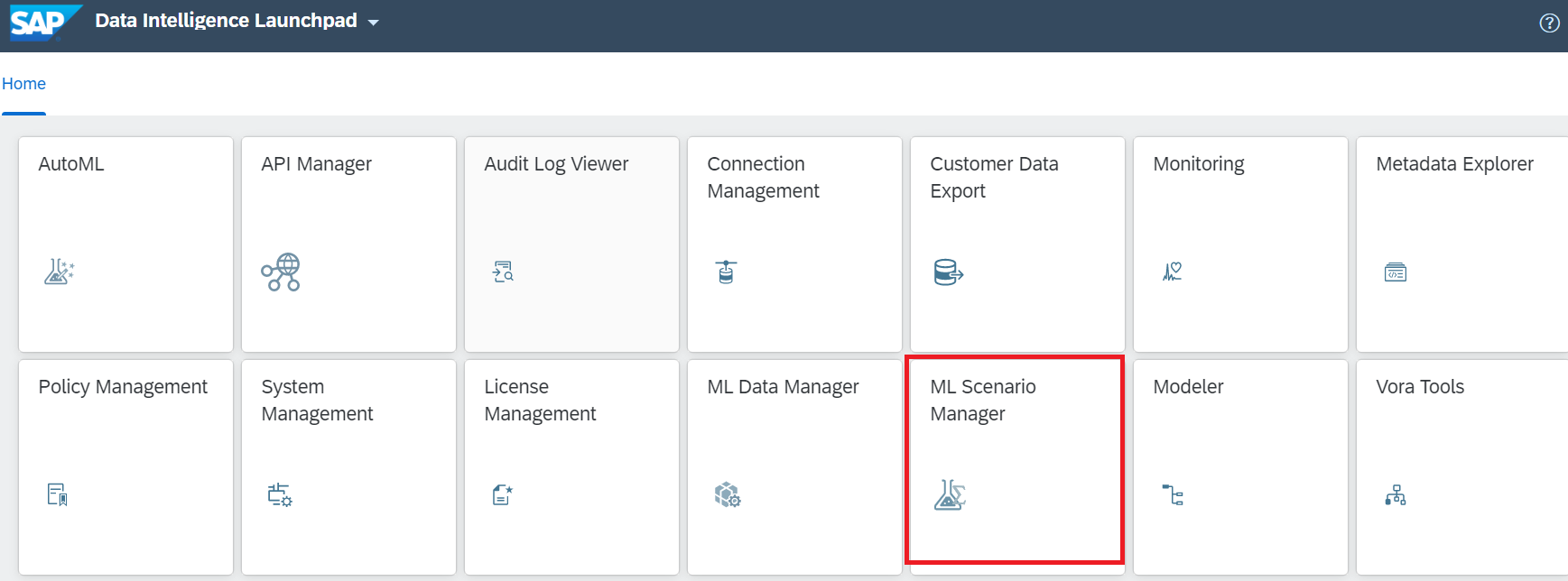

Go to SAP DI Launchpad and click on ML Scenario Manager as highlighted in screen shot below:

Click on ‘+’ button, give scenario a name like ‘Optimization_CPLEX’ and hit Create button:

Registering the Data-set: We can start of Registering the ‘Diet_Optimization.csv’ Dataset to the scenario. Click on ‘+’ button under ‘Datasets’ tab:

Setting-Up Jupyter Notebook: We can create a new Jupyter Notebook via ML Scenario Manager and use it for writing experimental python optimization script.

To create Jupyter notebook instance, Go to Jupyter Notebook tab and click ‘+’ button, enter name for the new notebook and hit ‘Create’.

We first start with installing required CPLEX and Do-CPLEX Python Optimization libraries:

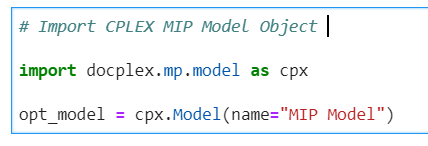

Initialize the Mixed-Integer-Programming (MIP) cplex model for solving:

Objective is to create Mathematical formulations with 'Decision variables' bounded with 'Linear Constraints' and define “Minimization” Cost Objective function. Details of MIP Optimization mathematical formulations can be viewed in LP file attached in artifacts folder at the end of the article.

Below image shows the declaration of objective function as “Minimization” function and runs CPLEX Solver to “solve” the formulations.

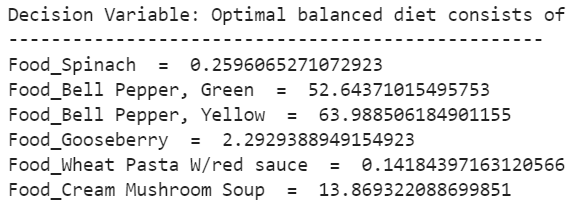

O/p of above Solver’s exercise can be viewed as below:

Optimal Objective Function value:

Optimal Decision Variables Value:

Next section of the blog addresses specific asks from customers on hosting Optimization solution on SAP DI:

1. Can SAP DI host 3rd party Optimization Solvers like IBM CPLEX and avail its run-time seamlessly?

2. Solve optimization Model specified in file formats like (.lp / .mps ) using CPLEX run-time in SAP DI.

3. Can SAP DI support execution of Optimization Solutions developed (in-house) in multiple programming languages?

4. Multi-users concurrency for Optimization Applications in SAP DI and achieve user parallelization? (not discussed this article)

5. Scalability to run multiple (easy/medium/hard) application concurrently in SAP DI and performance monitoring using Kibana/Grafana DI services? (not discussed in this article)

Lets proceed to see how SAP DI addresses above scenarios:

1. Addressing 1st ask,

SAP DI presents BYOL - Bring Your Own Language Runtime:

SAP DI provides robust and scalable BYOL platform which allows to install Solvers like IBM CPLEX using docker containerization capability. (Similarly, BYOL can help enable other solvers like Gurobi / FiCo Express / Mosel also on SAP DI platform.)

Steps to install IBM CPLEX Solver in SAP DI are as follows:

IBM CPLEX Community Edition Linux Binary looks as below:

Bring required dependencies (‘COSCE129LIN64.bin’ and ‘silent.properties’ file in this case) in SAP DI by attaching them to VREP repository under ‘dockerfiles’ folder. Looks as below in SAP DI Modeler:

Second Step is to create required docker file with relevant tags in SAP DI. For installation of licensed version one of the ways is to incorporate s/w license in Docker file (as applicable and highlighted).

Tags File: ‘byolcplex’ is the custom tag for the docker file.

Above process leverages BYOL capability to host 3rd party software like IBM CPLEX and enable their run-time. We will see next how the CPLEX run-time can be used directly in SAP DI henceforth.

2. Addressing 2nd ask,

Using BYOL capabilities, an optimization problem modeled in '.LP' file format can be solved directly in SAP DI by invoking CPLEX run time.

Steps as below:

Depending on 3rd party solver in consideration, optimization model can be coded in different file formats (like MPS, SAV, ANN, CSV, BAS, FLT, MST, NET, ORD, PRM, SQL, VMC, XML). We can pass the same in SAP DI command line as shown above.

3. Addressing 3rd ask,

Can SAP DI execute statically compiled optimization binaries (originally coded in C++ or Python)

For Customers who are largely Windows machine users and have their optimization code base on windows platform, it’s required for them to recompile their raw optimization code in Linux environment to execute in SAP DI. It was also observed that preferred programming languages for coding optimization problems are C++ and Python. Hence one of the pre-steps is to get the re-compiled C++ & Python Windows based code and generate static Linux binaries.

Steps as follows:

Sample Docker file in SAP DI:

I have consolidate all docker commands in a single docker file which can be found below:

Summary:

Above article shows how SAP DI can present a single platform to solve different Enterprise Optimization problems and can host different 3rd party solvers within platform. In subsequent blog, I will be covering the scalability, multi-user concurrency and resource consumption analysis in context of same optimization problem using Kibana and Grafana services that SAP DI offers.

Optimization techniques (also called prescriptive analytics) determine the optimal solution, that is, the best decision, for a problem formulated as a (linear or non-linear) mathematical “objective function” over a set of “decision variables” that are bound by a list of (linear or non-linear) mathematical “constraints”. Modern optimization problems can have several million decision variables and are typically solved with sophisticated “solvers” such as IBM CPLEX, Gurobi or FICO Xpress. Many machine learning algorithms also solve an optimization problem internally, using methods such as gradient descent, so optimization has become an important part of analytics-based solutions in enterprises.

Optimization techniques fall into the following categories:

1) Linear Programming

2) Integer Programming (or Mixed Integer Programming)

3) Goal Programming

4) Non-Linear Programming

5) Meta-Heuristics Programming

In the rest of the article, I show how SAP Data Intelligence (SAP DI) provides an end-to-end orchestration platform to host, compile, solve and execute an optimization problem from any of the categories listed above.

As a sample scenario, I consider a diet optimization problem. The objective is to find the cheapest balanced diet, given a list of ingredients and the cost of each ingredient. We will go step by step through the technical implementation of this problem, which falls under Mixed Integer Programming (MIP), in SAP Data Intelligence.
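In symbols, the diet problem can be sketched as follows. The notation is introduced here only for illustration and is not taken from the attached formulation file: $x_f$ is the quantity of food item $f$, $c_f$ its cost, $a_{nf}$ the amount of nutrient $n$ in one unit of $f$, and $m_n$ and $M_n$ the minimum and maximum intake of nutrient $n$.

$$
\begin{aligned}
\min_{x} \quad & \sum_{f} c_f \, x_f \\
\text{s.t.} \quad & m_n \le \sum_{f} a_{nf} \, x_f \le M_n \quad \text{for every nutrient } n, \\
& x_f \ge 0 \quad \text{for every food item } f.
\end{aligned}
$$

In a mixed-integer variant, some of the $x_f$ are additionally restricted to integer values.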

So let’s get started.

The SAP DI ML Scenario Manager can be used to create a single dashboard where all artifacts related to an optimization problem are stored and managed throughout their lifecycle. Such artifacts include datasets or model formulation files (in LP format), Jupyter notebooks for experimentation, and pipelines that run optimization solvers and produce solver output.

Go to the SAP DI Launchpad and click on ML Scenario Manager.

Click the ‘+’ button, give the scenario a name such as ‘Optimization_CPLEX’ and click the Create button.

Registering the dataset: We start by registering the ‘diet_optimization.csv’ dataset with the scenario. Click the ‘+’ button under the ‘Datasets’ tab.

Select the ‘diet_optimization.csv’ dataset, which is already loaded in the SAP DI Data Manager environment, by clicking the highlighted button, enter a ‘NAME’ and click ‘Register’.

Setting up the Jupyter notebook: We can create a new Jupyter notebook via the ML Scenario Manager and use it to write an experimental Python optimization script.

To create a Jupyter notebook instance, go to the Jupyter Notebook tab, click the ‘+’ button, enter a name for the new notebook and click ‘Create’.

Next, control moves to the Jupyter Lab environment, where the system prompts you to select a kernel. Select the ‘Python 3’ kernel and click ‘Select’.

We first install the required CPLEX and DOcplex Python optimization libraries.
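In a notebook cell this usually comes down to a single pip call. The following is a minimal sketch, assuming the PyPI package names `cplex` and `docplex` (the same names that appear in the Docker file in this article). The helper name `pip_install_command` and the `RUN_INSTALL` switch are illustrative only.

```python
import subprocess
import sys


def pip_install_command(packages):
    """Build a pip command that targets the interpreter running this kernel."""
    return [sys.executable, "-m", "pip", "install", *packages]


# Illustrative switch: set to True inside the notebook to actually install
RUN_INSTALL = False

command = pip_install_command(["cplex", "docplex"])
print(" ".join(command))

if RUN_INSTALL:
    subprocess.check_call(command)
```

Calling pip through `sys.executable` installs the packages into the same Python environment that the selected kernel uses.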

Initialize the Mixed Integer Programming (MIP) CPLEX model for solving:

```python
import docplex.mp.model as cpx
import pandas as pd

# Load the first 64 rows of the dataset; the serving size column is not needed
df = pd.read_csv("diet_optimization.csv", nrows=64)
df.drop("Serving Size", axis=1, inplace=True)

# Define the CPLEX Mixed Integer Programming model
opt_model = cpx.Model(name="MIP Model")

food_items = list(df['Foods'])
print("List of food items considered, are\n" + "-" * 100)
for f in food_items:
    print(f, end=', ')
```

The objective is to create the mathematical formulation, with 'decision variables' bounded by 'linear constraints', and to define a “minimization” cost objective function. Details of the MIP formulation can be viewed in the LP file attached in the artifacts folder at the end of the article.

```python
# Build one row per constraint parameter, with one column per food item.
# DataFrame.append was removed in pandas 2.0, so the frame is built from a list of dicts.
new_col_names = [x for x in df.columns if x != 'Foods']
output = pd.DataFrame([dict(zip(food_items, df[col])) for col in new_col_names])

# Add the decision variables to the model (one continuous variable per food item)
food_vars = opt_model.continuous_var_dict(food_items, name="Food")

# Establish constraints
output['Constraint_params'] = new_col_names

# Minimum and maximum bounds per row; row 0 feeds the cost objective and is not constrained
output['Minimum'] = [0, 1500, 30, 20, 800, 130, 125, 60, 1000, 400, 700, 10]
output['Maximum'] = [0, 2500, 240, 70, 2000, 450, 250, 100, 10000, 5000, 1500, 40]

# Add a minimum and a maximum constraint for each of rows 1 to 11
for i in range(1, 12):
    row_total = opt_model.sum([output.loc[i, f] * food_vars[f] for f in food_items])
    row_name = output['Constraint_params'].values[i]
    opt_model.add_constraint(row_total >= output['Minimum'].values[i], row_name + 'Minimum')
    opt_model.add_constraint(row_total <= output['Maximum'].values[i], row_name + 'Maximum')

# Add the objective cost function (row 0 holds the cost coefficients)
objective = opt_model.sum([output.loc[0, i] * food_vars[i] for i in food_items])
```

The following code declares the objective function as a “minimization” function and runs the CPLEX solver to “solve” the formulation.

```python
# Declare the objective as a minimization
opt_model.minimize(objective)

# Run the CPLEX solver
opt_model.solve()
```

The solver run produces two results: the optimal objective function value (the minimum cost) and the optimal values of the decision variables (the quantity of each food item).
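To read both results back from a solved model, a sketch along the following lines may help. It assumes the `objective_value` attribute of a docplex model and the `solution_value` attribute of each docplex variable. The helper name `summarize_solution`, the tolerance and the stand-in objects with made-up numbers are illustrative, and exist only so that the snippet runs without a CPLEX installation.

```python
from types import SimpleNamespace


def summarize_solution(model, variables, tolerance=1e-6):
    """Return the objective value and the decision variables that are non-zero."""
    chosen = {
        name: var.solution_value
        for name, var in variables.items()
        if abs(var.solution_value) > tolerance
    }
    return model.objective_value, chosen


# Stand-ins that mimic a solved model (made-up values, not real solver output).
# With docplex, pass opt_model and food_vars instead.
demo_model = SimpleNamespace(objective_value=4.5)
demo_vars = {
    "Food_A": SimpleNamespace(solution_value=2.0),
    "Food_B": SimpleNamespace(solution_value=0.0),
    "Food_C": SimpleNamespace(solution_value=0.5),
}

cost, servings = summarize_solution(demo_model, demo_vars)
print("Optimal objective function value:", cost)
for name, amount in servings.items():
    print(name, "=", amount)
```

Filtering out zero-valued variables keeps the report short, since most food items are typically not part of the optimal diet.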

The next section of the blog addresses specific asks from customers about hosting optimization solutions on SAP DI:

1. Can SAP DI host 3rd-party optimization solvers such as IBM CPLEX and use their run-time seamlessly?

2. Can SAP DI solve an optimization model specified in file formats such as .lp or .mps using the CPLEX run-time?

3. Can SAP DI support the execution of optimization solutions developed in-house in multiple programming languages?

4. Can SAP DI support multi-user concurrency for optimization applications and achieve user parallelization? (not discussed in this article)

5. Can SAP DI scale to run multiple (easy/medium/hard) applications concurrently, with performance monitoring through the Kibana/Grafana DI services? (not discussed in this article)

Let’s see how SAP DI addresses these scenarios:

1. Addressing the 1st ask

SAP DI presents BYOL - Bring Your Own Language Runtime:

SAP DI provides a robust and scalable BYOL platform that allows solvers such as IBM CPLEX to be installed using its Docker containerization capability. (Similarly, BYOL can help enable other solvers such as Gurobi or FICO Xpress / Mosel on the SAP DI platform.)

The steps to install the IBM CPLEX solver in SAP DI are as follows:

- For this exercise, the community edition of IBM CPLEX (compatible with Linux environments) was downloaded from the IBM CPLEX site. The steps for installing the licensed CPLEX version remain the same, with a few additions to the Docker file (noted in the relevant section).

The IBM CPLEX Community Edition Linux binary used here is ‘COSCE129LIN64.bin’.

- We intend to run a ‘silent installation’ of the CPLEX software on SAP DI. Hence a file such as ‘silent.properties’ is created and maintained with the required configuration.

- The next step is to create a Docker file in SAP DI, which can then be tagged to the relevant operator while developing the execution pipeline. It is a 2-step process:

The first step is to bring the required dependencies (‘COSCE129LIN64.bin’ and ‘silent.properties’ in this case) into SAP DI by attaching them to the VREP repository under the ‘dockerfiles’ folder.

The second step is to create the required Docker file with the relevant tags in SAP DI. To install the licensed version, one option is to incorporate the software license in the Docker file (as applicable).

Tags file: ‘byolcplex’ is the custom tag for the Docker file.
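The two small support files named in the steps above can be sketched as follows. This is an illustration under assumptions, not the exact files used in this exercise: the property keys follow the usual InstallAnywhere response-file convention (`INSTALLER_UI`, `LICENSE_ACCEPTED`, `USER_INSTALL_DIR`), the install directory is a placeholder, and the tags file is assumed to be a JSON object that maps each tag name to a (possibly empty) version string. The helper names are hypothetical.

```python
import json


def render_silent_properties(install_dir):
    """Render an InstallAnywhere-style response file for an unattended install."""
    lines = [
        "INSTALLER_UI=silent",
        "LICENSE_ACCEPTED=TRUE",
        f"USER_INSTALL_DIR={install_dir}",
    ]
    return "\n".join(lines) + "\n"


def render_tags(tags):
    """Render the tags file: tag name -> version ('' when no version applies)."""
    return json.dumps({tag: "" for tag in tags}, indent=4)


# Placeholder install directory; adjust it to the layout of the target image
silent_properties = render_silent_properties("/home/vflow/opt/ilog")
tags_json = render_tags(["byolcplex"])

print(silent_properties)
print(tags_json)
```

Check the generated keys against the installer documentation for the CPLEX version in use before relying on them.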

The above process leverages the BYOL capability to host 3rd-party software such as IBM CPLEX and enable its run-time. Next we will see how the CPLEX run-time can be used directly in SAP DI.

2. Addressing the 2nd ask

Using the BYOL capabilities, an optimization problem modeled in the '.LP' file format can be solved directly in SAP DI by invoking the CPLEX run-time.
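For readers unfamiliar with the LP format, the following sketch renders a tiny diet problem as LP text. It assumes the basic CPLEX LP sections `Minimize`, `Subject To` and `End`, non-negative coefficients, and names without spaces. The function name `to_lp` and the toy data are made up for illustration and are not the model attached to this article.

```python
def to_lp(costs, nutrients, bounds):
    """Render a diet problem as LP-format text.

    costs:     {food: cost per unit}
    nutrients: {nutrient: {food: amount per unit}}
    bounds:    {nutrient: (minimum, maximum)}
    """
    def linear(coefficients):
        # Assumes non-negative coefficients, so terms can be joined with '+'
        return " + ".join(f"{value} {name}" for name, value in coefficients.items())

    lines = ["Minimize", f" cost: {linear(costs)}", "Subject To"]
    for nutrient, amounts in nutrients.items():
        minimum, maximum = bounds[nutrient]
        lines.append(f" {nutrient}_min: {linear(amounts)} >= {minimum}")
        lines.append(f" {nutrient}_max: {linear(amounts)} <= {maximum}")
    lines.append("End")
    return "\n".join(lines) + "\n"


# Made-up toy data, only to show the shape of the file
lp_text = to_lp(
    costs={"oats": 0.5, "milk": 0.8},
    nutrients={
        "calories": {"oats": 110, "milk": 160},
        "protein": {"oats": 4, "milk": 8},
    },
    bounds={"calories": (1500, 2500), "protein": (60, 100)},
)
print(lp_text)
```

No `Bounds` section is written because variables in an LP file default to a lower bound of zero, which matches the non-negative quantities of the diet problem.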

Steps as below:

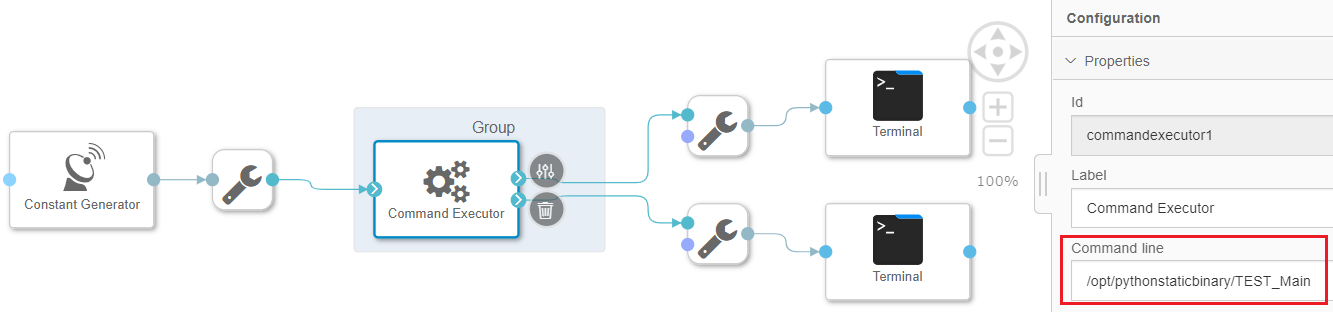

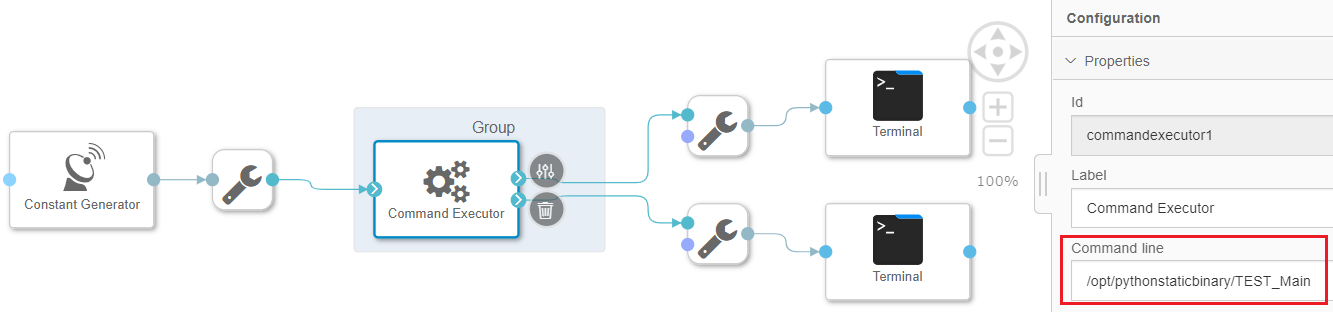

- Create a simple pipeline using the out-of-the-box Command Executor operator. The CPLEX run-time environment is triggered from the command line of the Command Executor operator, which invokes the CPLEX run-time and passes the required file argument ‘cplexscript’.

- The Command Executor is grouped and tagged with the Docker file created in Step 1, using the custom tag ‘byolcplex’.

- ‘cplexscript’ is the custom optimization script (created and attached through the System Management file system, VREP). It invokes the LP file that contains the optimization model.

Depending on the 3rd-party solver in consideration, the optimization model can be coded in different file formats (such as MPS, SAV, ANN, CSV, BAS, FLT, MST, NET, ORD, PRM, SQL, VMC, XML). These can be passed on the SAP DI command line in the same way.

- Save and run the pipeline.

- Once the pipeline is running, the optimization output can be viewed in the output Terminal.
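The steps above can be sketched as follows: what a script such as ‘cplexscript’ and the matching Command Executor command line could contain. The sketch assumes the CPLEX interactive optimizer commands `read`, `optimize`, `display solution variables -` and `quit`, and its `-f` option for reading commands from a file. The LP file name `diet.lp` and the helper names are hypothetical; the original script is not reproduced in this article.

```python
import shlex


def cplex_script(model_file):
    """Return interactive-optimizer commands that load, solve and report a model."""
    commands = [
        f"read {model_file}",            # load the LP model
        "optimize",                      # run the solver
        "display solution variables -",  # print all variable values
        "quit",
    ]
    return "\n".join(commands) + "\n"


def cplex_command(script_file):
    """Return the command line that feeds the script to the CPLEX executable."""
    return shlex.join(["cplex", "-f", script_file])


script_text = cplex_script("diet.lp")
command_line = cplex_command("cplexscript")

print(script_text)
print(command_line)
```

Keeping the solver commands in a script file means the pipeline configuration stays unchanged when the model file or the reporting commands change.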

3. Addressing the 3rd ask

Can SAP DI execute statically compiled optimization binaries (originally coded in C++ or Python)?

Customers who mostly work on Windows machines and keep their optimization code base on the Windows platform need to recompile their raw optimization code in a Linux environment before it can run in SAP DI. We also observed that the preferred programming languages for coding optimization problems are C++ and Python. Hence one of the preliminary steps is to recompile the Windows-based C++ and Python code and generate static Linux binaries.

The steps are as follows:

- Recompile the raw C++ / Python code in the customer’s local Linux environment (static compilation) and package the result in ‘.tar.gz’ format.

- Bring the required dependencies (compiled binaries and dataset) into SAP DI and create the relevant Docker file for execution.

A sample Docker file for SAP DI is part of the consolidated Docker file at the end of this article.

- A simple pipeline is created using the “Command Executor” operator. The Command Executor is grouped and tagged with the Docker image built above. Its command line invokes the statically compiled binary.

- Save and run the pipeline.

- Once the pipeline is running, the optimization output can be viewed in the output Terminal.
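Packaging the compiled binary as a ‘.tar.gz’ archive, as in the first step above, can be done with any tar tool. The following is a minimal sketch using Python's standard `tarfile` module. The file name `TEST_Main` matches the one used in the Docker file in this article; the helper name `package_binary` and the placeholder file content are illustrative and only there so the snippet runs on its own.

```python
import tarfile
import tempfile
from pathlib import Path


def package_binary(binary_path, archive_path):
    """Pack a single compiled binary into a gzip-compressed tar archive."""
    with tarfile.open(str(archive_path), "w:gz") as archive:
        # arcname keeps only the file name, so the archive unpacks flat
        archive.add(str(binary_path), arcname=Path(binary_path).name)
    return archive_path


with tempfile.TemporaryDirectory() as workdir:
    # Placeholder standing in for the statically compiled binary
    binary = Path(workdir) / "TEST_Main"
    binary.write_bytes(b"placeholder for the statically compiled binary")

    archive_file = package_binary(binary, Path(workdir) / "TEST_Main.tar.gz")

    # Read the archive back to confirm what it contains
    with tarfile.open(str(archive_file)) as check:
        members = check.getnames()

print(members)
```

A flat archive is convenient here because the Docker `ADD` instruction unpacks a local tar archive directly into the target directory.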

I have consolidated all Docker commands in a single Docker file, which can be found below:

```dockerfile
FROM opensuse/leap:15.1

# Install make, Java, vim, tar, gzip, python3, pip, gcc and libgthread
RUN zypper --non-interactive update && \
    zypper --non-interactive install --no-recommends --force-resolution \
        make \
        java-1_8_0-openjdk \
        vim \
        tar \
        gzip \
        ncompress \
        python3 \
        python3-pip \
        gcc=7 \
        gcc-c++=7 \
        libgthread-2_0-0=2.54.3

# Python libraries (the 'sklearn' name on PyPI is deprecated; the package is 'scikit-learn')
RUN python3 -m pip install \
        numpy \
        pandas \
        tornado==5.0.2 \
        scikit-learn \
        docplex \
        cplex \
        xlrd \
        ortools

WORKDIR /home/vflow
ENV HOME=/home/vflow
ENV WORK=$HOME/work

# CPLEX installation: copy the installer and the silent-install configuration
RUN mkdir -p $HOME/opt/ilog
COPY silent.properties $HOME/opt/ilog/silent.properties
COPY COSCE129LIN64.bin $HOME/opt/ilog/COSCE129LIN64.bin

# Grant the required permissions and run the silent installation
RUN chmod 777 $HOME/opt/ilog/COSCE129LIN64.bin $HOME/opt/ilog/silent.properties
RUN $HOME/opt/ilog/COSCE129LIN64.bin -f $HOME/opt/ilog/silent.properties

# Directory for the statically compiled binary inside the DI Docker image
ENV BINARYDIR=$HOME/opt/pythonstaticbinary
RUN mkdir -p $BINARYDIR && chmod 777 $BINARYDIR/

# TEST_Main.tar.gz can be any statically compiled C++ or Python binary
ADD TEST_Main.tar.gz $BINARYDIR/
COPY diet_optimization.csv $BINARYDIR/
RUN chmod 777 $BINARYDIR/TEST_Main $BINARYDIR/diet_optimization.csv

# Add the license file to the bin directory
# ADD xpauth.xpr $HOME/opt/ilog/bin

RUN groupadd -g 1972 vflow && useradd -g 1972 -u 1972 -m vflow
USER 1972:1972
```

Summary:

This article shows how SAP DI can serve as a single platform for solving different enterprise optimization problems and can host different 3rd-party solvers within the platform. In a subsequent blog, I will cover scalability, multi-user concurrency and resource consumption analysis for the same optimization problem, using the Kibana and Grafana services that SAP DI offers.
