- Continuous Cloud Integration – Practical usage of ...

uwevoigt123

12-24-2019

8:42 AM

Edit on Jan 31,2020: Source is available here: https://github.com/uvoigt/scpi-ci-tools


Introduction

When we think of a development cycle of a cloud application, this has certain common ingredients:

- We store development artifacts within a code repository like Git.

- We have an integrated continuous build and delivery pipeline which targets multiple deployment stages.

- We can use a CLI for the runtime platform which gives us the chance to use common shell-based tooling for some monitoring tasks.

SAP Cloud Platform Integration still does not fully support this scenario, but its public OData APIs fill the gap, at least partly.

This blog first explains how we defined our development and staging workflow and then shows how I used these APIs by putting them all together in a Bash-based CLI.

Usage and workflow

We have three stages (three subaccounts) on the Cloud Platform. This is useful for restricting access to the required roles and for stage-specific scaling.

Development of integration flows

This takes place in the development environment. It is the only stage in which integration flows must be present in the design workspace, because we have to use the integration flow editor. Each developer may have their own package to keep their work visibly separate from that of others.

Start of the development

When development of an integration flow starts, the flow is either created from scratch or copied from another package in the ‘Discover’ section.

When copied, the first action is to execute

scpi design download -p <artifact-id>

which downloads the integration flow, creates a Git repository locally and remotely via the Bitbucket API, and finally pushes the newly created repository to the remote.

Because the repository already contains a bitbucket-pipelines.yml, the integration flow is deployed to the development environment runtime within a couple of seconds.

Modify an integration flow or fix a bug

The integration flow might have been removed from the design workspace (it does not have to stay there, because it is stored in the Git repository). If that is the case, clone the remote repository or run

git checkout develop

git pull

and then execute

scpi design create <artifact-id>

Apply the modifications to the integration flow. When done, execute

scpi design download -p <artifact-id>

to push the changes to the remote repository. The developer might already have deployed the integration flow in the meantime for testing purposes. Regardless, when the push occurs, the pipeline deploys it to the development environment.

Bring an integration flow to the test stage

If this is the first time the integration flow is pushed to the test stage, a branch ‘test’ has to be created and prepared. To do so, clone the repository or run

git checkout develop

git pull

Then

git checkout -b test

Now the file src/main/resources/parameters.prop has to be modified so that all stage-specific variables, such as endpoints to the Commerce Cloud, match those of the test stage.

That file contains all externalized parameters that are defined using the integration flow editor.

Then

git push --set-upstream origin test

Now the pipeline deploys this integration flow to the test stage for the first time.

For subsequent merges into the test branch, execute

git merge develop

instead of creating a new branch.

If parameters.prop has been modified in development, the merge always requires manual conflict resolution. The developer should be careful when doing this!
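A small check can make that manual merge less error-prone. The following is a minimal sketch, not part of the scpi CLI: the helper names prop_keys and missing_keys are hypothetical, and it assumes that parameters.prop consists of simple key=value lines, with # comments and blank lines ignored. It prints the keys that exist in one file but are missing from another.

```shell
#!/usr/bin/env bash
# Hypothetical helpers, not part of the scpi CLI.

# List the keys of a properties file.
# Assumption: simple "key=value" lines; comment and blank lines are skipped.
prop_keys() {
  grep -v -e '^[[:space:]]*#' -e '^[[:space:]]*$' "$1" | cut -d= -f1 | sort -u
}

# Print the keys that exist in the first file but not in the second.
missing_keys() {
  local tmp
  tmp=$(mktemp) || return 1
  prop_keys "$2" > "$tmp"
  # comm -23 keeps only the lines unique to the first (sorted) input
  prop_keys "$1" | comm -23 - "$tmp"
  rm -f "$tmp"
}
```

For example, after writing the develop version of the file to a temporary location with git show develop:src/main/resources/parameters.prop, calling missing_keys with that file and the version on the test branch would list parameters that were added in development and still need a test-stage value.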

Bring an integration flow to the production stage

This is almost the same as for the test stage, except that we name the branch ‘release-<version>’. We can also use supporting features of the Git hosting environment, such as branch protection or manual intervention when executing pipelines.
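The branch convention described in this workflow (develop, test, release-<version>) can be summarized as a mapping from branch name to stage. The function below is only an illustrative sketch: the name stage_for_branch is hypothetical, and the actual pipeline may instead rely on the deployment environments of the Git hosting platform.

```shell
#!/usr/bin/env bash
# Hypothetical helper: map a branch name to the stage it is deployed to,
# following the branch naming convention of the workflow described above.
stage_for_branch() {
  case "$1" in
    develop)   echo "DEVELOPMENT" ;;
    test)      echo "TEST" ;;
    release-*) echo "PRODUCTION" ;;
    *)         return 1 ;;   # any other branch is not deployed
  esac
}
```

In a Bitbucket pipeline step, the current branch is available in the BITBUCKET_BRANCH variable, so a call such as stage_for_branch "$BITBUCKET_BRANCH" could select the target stage.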

Parts of the CLI and their API counterparts

The main entry point is called scpi; it dispatches to the scripts that implement the individual functions. There is also an scpi-completion.sh, which lets the user press Tab twice to get sub-command or argument suggestions in the shell.

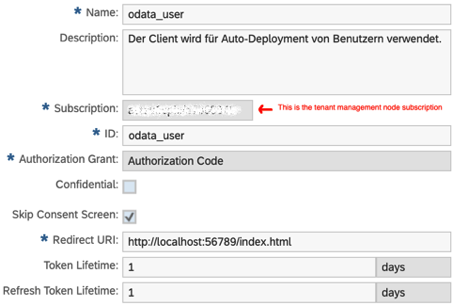

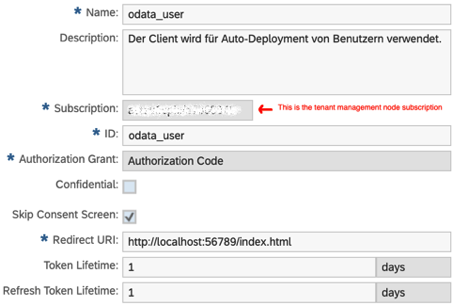

The following arguments are necessary to enable authentication via OAuth2. Once given, they are saved for subsequent invocations within $HOME/.scpi/config

OAuth authentication

The scripts support two kinds of OAuth flow: one that can be used by human users from their shells, and a second one that is meant for the continuous integration pipeline.

OAuth authentication of a human user

The flow uses a redirect URI that is called after the user has authenticated at the Cloud Platform. It is served by a very simple web server written in C, which the authentication part of the scripts launches and stops again once the authorization code has been grabbed from the log file.
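Grabbing the authorization code from a log file can be done with standard tools. The sketch below is not the author's implementation: the function name extract_auth_code is hypothetical, and it assumes that the web server logs the request line of the redirect, for example GET /callback?code=abc123&state=xyz HTTP/1.1.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the value of the "code" query parameter
# from the first log line that contains one.
# Assumption: the log contains the raw request line of the OAuth redirect.
extract_auth_code() {
  sed -n 's/.*[?&]code=\([^&[:space:]]*\).*/\1/p' "$1" | head -n 1
}
```

The extracted code would then be exchanged for an access token at the token endpoint of the OAuth service.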

OAuth authentication within the CI

This scenario requires a secret and a password, which are configured within the pipeline execution environment. In my case this is Bitbucket.

Below is how it looks in Bitbucket. I use a custom Docker registry to pull a thin image with the CLI built in, and the variable DEPLOY_CONFIG, which contains a base64-encoded file with all the necessary secrets and passwords for the stages.

This is a sample content of the deploy config file:

#!/bin/sh

# the variable prefix must match the deployment environment

export DEVELOPMENT_ACCOUNT_ID='devAccountId'

export DEVELOPMENT_OAUTH_PREFIX='devOauthPrefix'

export DEVELOPMENT_CLIENT_CREDS=' odata_ci:password'

export TEST_ACCOUNT_ID='testAccountId'

export TEST_OAUTH_PREFIX='testOauthPrefix'

export TEST_CLIENT_CREDS=' odata_ci:password'

export PRODUCTION_ACCOUNT_ID='prodAccountId'

export PRODUCTION_OAUTH_PREFIX='prodOauthPrefix'

export PRODUCTION_CLIENT_CREDS=' odata_ci:password'
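One way a pipeline step could consume such a file is sketched below, assuming that DEPLOY_CONFIG holds the base64-encoded script and that the stage name (for example TEST) is known to the step. The function names load_deploy_config and stage_var are hypothetical and not taken from the actual CLI.

```shell
#!/usr/bin/env bash
# Hypothetical helpers, not part of the scpi CLI.

# Decode the base64-encoded script held in DEPLOY_CONFIG and source it,
# so that its exported variables become available to the current shell.
load_deploy_config() {
  local file
  file=$(mktemp) || return 1
  if ! printf '%s' "$DEPLOY_CONFIG" | base64 --decode > "$file"; then
    rm -f "$file"
    return 1
  fi
  # shellcheck disable=SC1090
  . "$file"
  rm -f "$file"   # do not leave secrets on disk
}

# Resolve a stage-specific variable by prefix,
# e.g. "stage_var TEST ACCOUNT_ID" prints the value of $TEST_ACCOUNT_ID.
stage_var() {
  local name="${1}_${2}"
  printf '%s' "${!name}"   # Bash indirect expansion
}
```

With the sample file above, stage_var PRODUCTION OAUTH_PREFIX would print prodOauthPrefix once load_deploy_config has run.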

scpi design packages

This lists the packages of the design workspace.

Unfortunately, there is no public OData API to get the design time artifacts. Therefore, I used the same API which is used by the Cloud Platform Integration web application. Since OAuth authentication is impossible here, we have to type user name and password.

While listing the packages, the command also stores their IDs in $HOME/.scpi/packages so that the Bash completion script can use them. (If those APIs supported OAuth2, this would not be necessary.)

scpi design artifacts

This lists the design time artifacts in all packages. A package ID can be specified to list only the artifacts of that package.

While listing the artifacts, the command also stores their IDs in $HOME/.scpi/artifacts so that the Bash completion script can use them.
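To illustrate how such a cache file can feed Bash completion, here is a minimal sketch. It is not the content of scpi-completion.sh: the function name _scpi_complete_from_file is hypothetical, and the file is assumed to contain one ID per line without whitespace.

```shell
#!/usr/bin/env bash
# Hypothetical completion helper: offer the IDs cached in a file as candidates.
# Assumption: the file holds one ID per line, without whitespace.
_scpi_complete_from_file() {
  local file="$1"
  local cur="${COMP_WORDS[COMP_CWORD]}"   # the word currently being completed
  COMPREPLY=()
  [ -r "$file" ] || return 0
  # compgen -W filters the word list by the prefix typed so far
  COMPREPLY=( $(compgen -W "$(cat "$file")" -- "$cur") )
}
```

A completion function registered with complete -F could call this helper with $HOME/.scpi/artifacts or $HOME/.scpi/packages, depending on the argument position.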

scpi design download

With this command, a design time artifact can be downloaded. The corresponding API is GET /IntegrationDesigntimeArtifacts(Id='{Id}',Version='{Version}')/$value

The downloaded zip is extracted and, by default, copied to the directory $GIT_BASE_DIR/iflow_<artifact_id>. The zip program asks you to confirm overwriting existing files, which we normally do, because this is a Git repository that gives us all the tooling to compare with previous commits.
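The download request can be sketched with curl as follows. This is an illustration under assumptions, not the code of the CLI: the function names are hypothetical, the base URL of the OData API (typically the tenant host followed by /api/v1) and the OAuth access token have to be supplied by the caller.

```shell
#!/usr/bin/env bash
# Hypothetical helpers, not part of the scpi CLI.

# Build the OData resource path for the content of a design time artifact.
designtime_artifact_path() {
  local id="$1" version="$2"
  printf "/IntegrationDesigntimeArtifacts(Id='%s',Version='%s')/\$value" "$id" "$version"
}

# Download the artifact as a zip file.
# Arguments: base URL of the OData API, access token, artifact ID, version, output file.
download_artifact() {
  local base_url="$1" token="$2" id="$3" version="$4" out="$5"
  curl --fail --silent --show-error \
       --header "Authorization: Bearer $token" \
       --output "$out" \
       "${base_url}$(designtime_artifact_path "$id" "$version")"
}
```

After the download, something like unzip "$out" -d "$GIT_BASE_DIR/iflow_$id" would extract the archive and prompt before overwriting existing files.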

scpi design create

This can be used to create an artifact within the design workspace (the API used is POST /IntegrationDesigntimeArtifacts).

Options

<Artifact ID> – when pressing tab for completion, all local artifacts (directories starting with iflow_ within the configured workspace root) are presented

<Package ID> – when pressing tab for completion, the packages saved in $HOME/.scpi/packages are presented

[Artifact Name] – if not specified, this is the same as the artifact ID

[Folder] – the directory to read the artifact from. By default, this is $GIT_BASE_DIR/iflow_<artifact_id>

This command makes it possible to keep only those integration flows in the design workspace that you are currently working on.
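The defaults of the optional arguments map naturally onto Bash parameter expansion. The following sketch only illustrates that idea: the function resolve_create_args is hypothetical and simply prints the four effective values, one per line.

```shell
#!/usr/bin/env bash
# Hypothetical helper mirroring the documented defaults of "scpi design create".
# Prints artifact ID, package ID, artifact name and folder, one per line.
resolve_create_args() {
  local artifact_id="${1:?artifact ID required}"
  local package_id="${2:?package ID required}"
  local artifact_name="${3:-$artifact_id}"                      # defaults to the artifact ID
  local folder="${4:-${GIT_BASE_DIR}/iflow_${artifact_id}}"     # defaults to the local repository
  printf '%s\n' "$artifact_id" "$package_id" "$artifact_name" "$folder"
}
```

With GIT_BASE_DIR set to /work, calling resolve_create_args MyFlow MyPackage yields the name MyFlow and the folder /work/iflow_MyFlow.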

scpi design delete

This lets you delete an artifact from the design workspace. It corresponds to the API DELETE /IntegrationDesigntimeArtifacts(Id='{Id}',Version='{Version}')

scpi design deploy

Only there for completeness. This corresponds to the API POST /IntegrationDesigntimeArtifact.DeployIntegrationDesigntimeArtifact

scpi runtime artifacts

This lists all deployed artifacts corresponding to the API GET /IntegrationRuntimeArtifacts

scpi runtime deploy

Triggers the deployment of the artifact uploaded. This corresponds to the API POST /IntegrationRuntimeArtifacts

This enables us to deploy artifacts without having them within the design workspace.

Conclusion

This was an overview of how the OData APIs can be used to create a developer workflow that is closer to what one might be used to from other cloud environments.

The CLI includes more functions, such as displaying message processing logs or invoking endpoints (which involves another OAuth client, because this needs other permissions), but covering them would exceed the scope of this article.

- SAP Managed Tags:

- SAP Integration Suite,

- Cloud Integration
