Exposing On Premise Data to SAP Cloud Foundry

pranav_kandpal


09-23-2019

4:56 PM

Introduction:

In this blog, I will cover how to expose on-premise data to SAP Cloud Foundry with a Python-based application built on the Flask library, initially without authentication.

My overall objective is to expose the data to Data Hub, refine it there, and then store it or transfer it to the target system, which I will cover in a series of blogs.

Brief Background: Why is this needed, and why Python?

While working on a topic in SAP Data Intelligence running on an SAP Cloud Foundry instance, I needed to expose the table content of an ABAP stack to the pipelines in Data Intelligence.

Since the SAP systems run in an on-premise environment, exposing their data outside that environment (primarily as an OData service) is not easy, though of course not impossible, so I decided to shed some light on this topic.

As for why Python: my overall objective is to create an application on Cloud Foundry with bare-minimum basic authorization, which can then be called within Data Hub using the Python3 operator. From there, the data can be written to a CSV file in storage using the Pandas library.

Solution:

One possible way to expose tables of an on-premise system is to follow the technical steps below:

- On-premise system: create a CDS view that exposes the table data, with the OData service enabled

- Enable SAP Gateway services in the back-end system to expose the CDS view as an OData service

- In the Cloud Connector, configure the following:

  - Create a connection to the Cloud Foundry instance

  - Map the actual back-end server to a virtual host

- Deploy an app in the SAP Cloud Foundry environment:

  - Create an app that uses the Destination, Connectivity, and XSUAA services of Cloud Foundry to pull data from the on-premise system and expose it on an HTTPS host with basic authentication.

Available solutions, but why this one?

The approach at the link here solves our problem statement with minimal effort and coding.

In that approach, end-to-end connectivity is mostly handled by a Node.js app router together with an authentication service. You may be wondering: if the same result can be achieved with a simple app router and practically no code, why should we look at a separate approach?

As mentioned earlier, my target is to consume this data within Data Hub as an HTTP service, which is not possible via the app router due to technical limitations.

With the Node.js app router, the work is done so smoothly that we never see the intricacies involved internally. The built-in XSUAA service is the single source of truth for authentication, and the internal routing performed by the app router cannot easily be replicated in a Data Hub pipeline. The app router only shows us the final link of the app; internally, it first authenticates using an OAuth scheme, keeps the JWT for subsequent requests, provides JWTs to authenticate the services, calls the on-premise system to retrieve the data, and finally shows that data in the deployed app. The main trick here is the OAuth scheme, which I wasn't able to replicate in the Data Hub pipeline.

So, as a workaround, I used this approach to feed the on-premise data to Data Hub via a Python-based app.

How are the Cloud Connector, Cloud Foundry, and the on-premise ABAP system linked?

To be honest, this is the part that took me a while to decipher. I am no expert in any of these technologies, and linking them together was a bit hard, so let me sum it up briefly.

The three main services in Cloud Foundry that do the trick are:

- Authorization and Trust Management (xsuaa)

- Connectivity

- Destination

SAP Cloud Connector, as the name states, is a connector. Think of it as a gateway with two doors: one opens onto the world of on-premise data, and the other opens onto SAP Cloud Platform. It is a secure tunnel that allows only a one-way connection, ensuring a safe and secure transfer of data. The Cloud Connector maps the internal system to a virtual address, which hides the actual server details when a response is returned to applications running on the cloud platform.

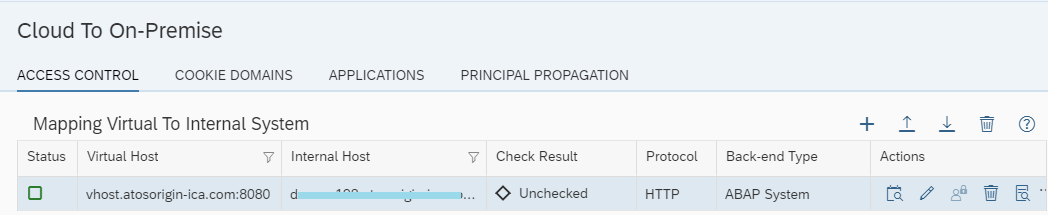

The image above shows the mapping of the actual internal host of the on-premise system to a virtual host in SAP Cloud Connector. The virtual host now serves as the reference host name for calls to the on-premise OData service through the Cloud Connector.

So instead of calling the OData service as:

http://<internal host>:<internal port>/sap/opu/odata/sap/<OData service>

the call to the OData service through the Cloud Connector becomes:

http://<virtual host>:<virtual port>/sap/opu/odata/sap/<OData service>

Taking the example above, the URL would look something like this:

http://vhost.atosorigin-ica.com:8080/sap/opu/odata/sap/<My OData service>
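To make the mapping concrete, here is a small Python illustration of what "virtual host stands in for internal host" means. It is a conceptual sketch only, not how SAP Cloud Connector is implemented; the internal host sapserver.internal.example:8000, the service name ZMY_SRV, and the function resolve_internal_url are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit

# Hypothetical Cloud Connector mapping: (virtual host, virtual port) -> (internal host, internal port).
# The internal values are placeholders, not a real system.
MAPPING = {
    ("vhost.atosorigin-ica.com", 8080): ("sapserver.internal.example", 8000),
}


def resolve_internal_url(virtual_url: str, mapping: dict) -> str:
    """Translate a URL addressed to a virtual host into the internal URL it stands for."""
    parts = urlsplit(virtual_url)
    key = (parts.hostname, parts.port)
    if key not in mapping:
        # No mapping means there is no route to the back end.
        raise KeyError(f"no virtual host mapping for {key}")
    host, port = mapping[key]
    return urlunsplit((parts.scheme, f"{host}:{port}", parts.path, parts.query, parts.fragment))


print(resolve_internal_url(
    "http://vhost.atosorigin-ica.com:8080/sap/opu/odata/sap/ZMY_SRV/", MAPPING))
# http://sapserver.internal.example:8000/sap/opu/odata/sap/ZMY_SRV/
```

The cloud side only ever sees the virtual URL; the translation to the real host happens inside the on-premise network.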

Below is an example of a Node.js application deployed on Cloud Foundry that uses the Cloud Connector to connect to the ABAP system (link here for the app router).

Notice that the virtual mapping works: the actual on-premise host and port are masked, and the metadata of the service in the response points to the virtual address instead of the actual server.

Now, just for fun, I removed the connection between my Cloud Connector and my Cloud Foundry trial account and ran the same app again:

And voilà, the app shows no data whatsoever.

The Cloud Connector is the link between this virtual OData URL and the app running in the cloud. Put together, the flow is: the XSUAA service authenticates the caller and issues a JWT, the virtual OData URL is retrieved from the Destination service, and the Connectivity service sends the actual GET request for that URL through a proxy host and proxy port to the Cloud Connector, which returns the data.

I know that was a bit much, so let's step back to the three Cloud Foundry services that do the trick and look at each in detail.

Connectivity Service:

In my opinion, this is one of the most important services when connecting on-premise and cloud systems.

If you have used the connectivity service before or gone through the link here, you know how to create a service instance for it. Once the instance is created, open it and create a service key by clicking the Create Service Key button, as shown in the image below.

The service key contains crucial information about the connectivity service; the image below highlights the important keys to consider:

The connectivity service first has to get a JWT from the XSUAA service to authenticate itself. Once validated, it uses the token to request the virtual OData address maintained in the Destination service via the Cloud Connector.

The request made by the connectivity service is not a direct one. Instead, the GET request that fetches data from the source system is sent through a proxy host and proxy port, whose details are maintained in the service key (see the image above).

This part will become clearer when we look at the cURL commands later on.
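For reference, a minimal sketch of turning the connectivity service key into a proxy address. The field names onpremise_proxy_host and onpremise_proxy_port are assumed from the service key shown above (newer keys may carry onpremise_proxy_http_port instead); check them against your own key. The helper name connectivity_proxy and the sample values are hypothetical.

```python
def connectivity_proxy(credentials: dict) -> str:
    """Return the on-premise proxy as 'http://host:port' from connectivity service key credentials.

    Assumption: the field names below match your service key; older keys carry
    'onpremise_proxy_port', newer ones may carry 'onpremise_proxy_http_port'.
    """
    host = credentials["onpremise_proxy_host"]
    port = credentials.get("onpremise_proxy_http_port") or credentials.get("onpremise_proxy_port")
    if not port:
        raise KeyError("no on-premise proxy port in the connectivity credentials")
    return f"http://{host}:{int(port)}"


# Placeholder values, not real endpoints.
key = {"onpremise_proxy_host": "proxy.example", "onpremise_proxy_port": "20003"}
print(connectivity_proxy(key))  # http://proxy.example:20003
```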

Destination Service:

The Destination service is probably the easiest of the three. It stores information about the destination that the connectivity service needs to query: in short, the virtual host and port (as maintained in the Cloud Connector) along with the authentication parameters. A simple destination would look something like this:
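In text form, the properties of such an on-premise destination can be sketched as a Python dict, together with a small sanity check. The property names (Name, Type, URL, Authentication, ProxyType, User, Password) are the usual BTP destination properties; the values are placeholders, and check_on_premise_destination is a hypothetical helper, not part of any SAP API.

```python
# Hypothetical destination; User/Password are placeholders for the SAP back-end user.
EXAMPLE_DESTINATION = {
    "Name": "abapBackend1",
    "Type": "HTTP",
    "URL": "http://vhost.atosorigin-ica.com:8080",  # virtual host from the Cloud Connector
    "Authentication": "BasicAuthentication",
    "ProxyType": "OnPremise",  # route the call through the Cloud Connector
    "User": "<SAP user>",
    "Password": "<SAP password>",
}

REQUIRED = ("Name", "Type", "URL", "Authentication", "ProxyType")


def check_on_premise_destination(dest: dict) -> list:
    """Return a list of problems; an empty list means the destination fits this setup."""
    problems = [f"missing {key}" for key in REQUIRED if not dest.get(key)]
    if dest.get("ProxyType") != "OnPremise":
        problems.append("ProxyType should be OnPremise to go through the Cloud Connector")
    if dest.get("Authentication") == "BasicAuthentication" and not (
        dest.get("User") and dest.get("Password")
    ):
        problems.append("BasicAuthentication needs User and Password")
    return problems


print(check_on_premise_destination(EXAMPLE_DESTINATION))  # []
```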

The service key for the destination service also holds some interesting information: the client ID, the client secret, and the URL for authentication.

Authorization and Trust Management

The last piece of our puzzle, but another very important service: xsuaa.

The main use of the xsuaa service is to establish trust with identity providers for authentication, which it mainly does by issuing JWTs (JSON Web Tokens). Any service can authenticate itself against the xsuaa service by sending its client ID and client secret to the xsuaa token URL; this information can be found in the service key of the particular instance. The resulting JWTs are then used for further calls, either to fetch the destination details or to call SAP Cloud Connector through the proxy server to fetch the data.

The image below shows a typical service key for an xsuaa service instance. The most interesting key here is the URL: the authentication URL to which a POST request is sent to obtain the JWT.

How this is done will become clearer in the cURL section and, finally, in the Python code, so if this still doesn't make much sense, just relax 😉.

In simple words:

- The Destination service holds the server information of the on-premise system from which the data will be pulled.

- The Connectivity service uses this information from the Destination service to determine where the query has to go, and then calls the on-premise system through a proxy host and port to retrieve the data.

- For authentication, the xsuaa service provides JWTs, each of which is valid only for a limited time.
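Since each JWT has a limited lifespan, it helps to see where that lifespan lives: the standard exp claim (RFC 7519) in the token payload, as seconds since the epoch. A minimal stdlib sketch of reading it follows; the function names are hypothetical, and it deliberately does not verify the signature, so use it only to decide when to fetch a new token, never to trust a token.

```python
import base64
import json
import time


def jwt_claims(token: str) -> dict:
    """Decode the payload (middle segment) of a JWT. This does NOT verify the signature."""
    payload = token.split(".")[1]
    payload += "=" * (-len(payload) % 4)  # JWTs drop base64url padding; restore it
    return json.loads(base64.urlsafe_b64decode(payload))


def seconds_until_expiry(token: str, now=None) -> float:
    """Seconds left before the token's 'exp' claim (negative once expired)."""
    now = time.time() if now is None else now
    return jwt_claims(token)["exp"] - now
```

A caller could, for example, request a fresh token whenever seconds_until_expiry(token) drops below a minute.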

cURL:

Until now it has been mostly 'why' and 'what'; let's do the 'how' now!

Let's start with the Destination service.

Retrieve destination details:

Step 1 - Understand the API of the Destination service:

The API documentation for the Destination service can be found at the link here.

To retrieve a particular destination from your Cloud Foundry subaccount, first take the URI from the destination service key (discussed before); it looks something like this:

https://destination-configuration.cfapps.eu10.hana.ondemand.com

According to the API documentation, the request to get a destination is GET /destinations/{name}.

The actual request URL would be: <URI of the destination service from the service key>/destination-configuration/v1/destinations/{name of the destination}

For example, with my destination named abapBackend1, the GET request URL looks something like this:

https://destination-configuration.cfapps.eu10.hana.ondemand.com/destination-configuration/v1/destina...

Querying this URL (here from Postman) results in an error, as shown below.

The error clearly states that the authorization bearer is missing, because the call must be authenticated with a JWT. As mentioned already, this JWT is provided by the xsuaa service, so let's move on to getting it.

Step 2 - Retrieve a JWT for the Destination service:

To get a JWT from the xsuaa service, a request has to be sent to the URL given in the service key of your XSUAA instance.

The request URL for the token is:

<URL>/oauth/token

In my example, it looks like this (note that this is a POST request):

https://s0018786787trial.authentication.eu10.hana.ondemand.com/oauth/token

Along with the request URL, some other crucial information has to be sent, primarily the basic authentication details and a few other parameters.

In our case, the JWT is requested for the Destination service, so the username and password are the client ID and client secret from the destination service key. Navigate to your destination service instance and open its service key: your username is the value of the client ID, and your password is the client secret.

Below is the complete cURL statement for this request:

curl -X POST \
  '<URL from the service key of xsuaa service>/oauth/token' \
  -H 'Authorization: Basic <base64 encoding of clientid:clientsecret from the destination service key>' \
  -H 'Content-Type: application/x-www-form-urlencoded' \
  -d 'client_id=<client id from the key of your destination service>&grant_type=client_credentials'

Replace the placeholders with your instance-specific details and import the cURL command into Postman to trigger the POST request, which returns an access_token in the response:
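The same token request can be sketched in Python. This version uses only the standard library (urllib) rather than the requests library used in the app, so the request can be built and inspected without sending it; build_token_request and fetch_token are hypothetical names. It mirrors the cURL command: Basic authentication with client ID and secret, and a form-encoded client_credentials body.

```python
import base64
import json
import urllib.parse
import urllib.request


def build_token_request(xsuaa_url: str, client_id: str, client_secret: str) -> urllib.request.Request:
    """Build the client-credentials POST from the cURL command (not sent yet)."""
    basic = base64.b64encode(f"{client_id}:{client_secret}".encode()).decode()
    body = urllib.parse.urlencode(
        {"client_id": client_id, "grant_type": "client_credentials"}).encode()
    return urllib.request.Request(
        xsuaa_url.rstrip("/") + "/oauth/token",
        data=body,
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
        method="POST",
    )


def fetch_token(xsuaa_url: str, client_id: str, client_secret: str, timeout: float = 30) -> str:
    """Send the request and return the access_token field of the JSON response."""
    request = build_token_request(xsuaa_url, client_id, client_secret)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)["access_token"]
```

Passing the destination service key's client ID and secret yields the destination token; passing the connectivity ones yields the connectivity token used later.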

Step 3 - Call the Destination service API to retrieve the details:

As discussed in Step 1, we now call the destination API, this time with an Authorization header that carries the access_token as the bearer JWT:

curl -X GET \
  '<URI from the destination service key>/destination-configuration/v1/destinations/abapBackend1' \
  -H 'Authorization: Bearer <token received in Step 2>'

Executing this cURL statement returns the details of our destination:
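A minimal Python sketch of the same lookup, again with the standard library only. The helper names are hypothetical, and parse_destination assumes the destination properties sit under a destinationConfiguration object in the response, as described in the Destination service API documentation; verify this against the response you actually receive.

```python
import json
import urllib.request


def build_destination_request(destination_uri: str, name: str, token: str) -> urllib.request.Request:
    """GET <uri>/destination-configuration/v1/destinations/<name> with the JWT as bearer token."""
    url = f"{destination_uri.rstrip('/')}/destination-configuration/v1/destinations/{name}"
    return urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})


def parse_destination(body: bytes) -> dict:
    """Pick the fields this setup needs out of the response.

    Assumption: the properties are under 'destinationConfiguration'.
    """
    config = json.loads(body)["destinationConfiguration"]
    return {key: config.get(key) for key in ("URL", "User", "Password", "ProxyType")}
```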

Using the Connectivity service:

The next important topic is the Connectivity service. Using the username, password, and destination URL, the Connectivity service can request the data from the on-premise system via SAP Cloud Connector. But first, it needs a JWT for authentication.

Step 1: Retrieve the JWT for the Connectivity service

The process is the same as in the previous section, except that the credentials come from the connectivity service key:

curl -X POST \
  '<URL from the service key of xsuaa service>/oauth/token' \
  -H 'Authorization: Basic <base64 encoding of clientid:clientsecret from the connectivity service key>' \
  -H 'Content-Type: application/x-www-form-urlencoded' \
  -d 'client_id=<client id from the key of your connectivity service>&grant_type=client_credentials'

Sending this POST request retrieves a JWT that authorizes the Connectivity service; the response in Postman looks something like this:

Step 2: Call the backend OData service

This step involves a bit of coding, so let's cover it directly with Python. The important technical aspects to consider are:

- The call to the SAP back end uses basic authentication: the OData service is called with the user name and password of an SAP user who has the roles needed to view the data of the OData service.

- The Connectivity service does not make a direct GET request to the OData URL. The request goes through proxy host:proxy port, both of which can be found in the connectivity service key.

- To authenticate the call to the proxy server, a Proxy-Authorization header is passed, using the JWT obtained in Step 1 as the bearer token.
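The three points above can be sketched with the standard library: one header carries the SAP user (Basic), the other carries the connectivity JWT (Proxy-Authorization: Bearer), and an opener with a ProxyHandler routes the plain-http virtual URL through the proxy. This is a sketch under those assumptions, not the author's read_data.py; the function names are hypothetical.

```python
import base64
import urllib.request


def build_proxied_odata_request(odata_url, sap_user, sap_password, connectivity_token):
    """GET request for the virtual OData URL, carrying both credentials described above."""
    basic = base64.b64encode(f"{sap_user}:{sap_password}".encode()).decode()
    return urllib.request.Request(
        odata_url,
        headers={
            "Authorization": f"Basic {basic}",                       # SAP back-end user
            "Proxy-Authorization": f"Bearer {connectivity_token}",  # JWT from Step 1
        },
    )


def build_proxy_opener(proxy_url):
    """Opener that sends plain-http requests (the virtual host) through the connectivity proxy."""
    return urllib.request.build_opener(urllib.request.ProxyHandler({"http": proxy_url}))


# Usage (needs the real proxy, so not executed here):
# opener = build_proxy_opener("http://<proxy host>:<proxy port>")
# data = opener.open(build_proxied_odata_request(url, user, pwd, token), timeout=60).read()
```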

App to retrieve data from the on-premise system:

Now comes the most important part of this whole blog: our code!

You can follow the steps below or download the whole implementation directly from here.

Step 1 - Create a folder for all the files of your code, e.g. C:\CF App

Step 2 - Create a file runtime.txt with the following content:

python-3.6.8

This tells the Cloud Foundry Python buildpack which runtime to use. You can also specify any other Python version supported by the buildpack, 3.6.9 for example.

Step 3 - Create a file requirements.txt with the following content:

Flask

requests

cfenv

Since our app will be deployed in a Cloud Foundry instance, we need to explicitly list the libraries it needs beyond the standard library available in the runtime. These libraries are installed when the app is deployed to the CF instance.

Flask - used to display our final result as a dict

requests - used to make GET/POST requests

cfenv - used to read the environment variables associated with the app
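Under the hood, the bound services reach the app through the VCAP_SERVICES environment variable that Cloud Foundry sets: a JSON object keyed by service offering (for example xsuaa or connectivity), each holding a list of instances with a name and credentials. cfenv wraps this; a stdlib-only sketch of the same lookup, with the hypothetical helper service_credentials, looks like this:

```python
import json
import os


def service_credentials(label, environ=None, name=None):
    """Return the credentials of a bound service from VCAP_SERVICES.

    label is the service offering (e.g. 'xsuaa', 'connectivity'); name optionally
    selects a specific instance when several are bound.
    """
    environ = os.environ if environ is None else environ
    services = json.loads(environ.get("VCAP_SERVICES", "{}"))
    for instance in services.get(label, []):
        if name is None or instance.get("name") == name:
            return instance["credentials"]
    raise LookupError(f"no bound '{label}' service instance")
```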

Step 4 - Create a file Procfile with the following content:

web: python read_data.py

This tells Cloud Foundry to run read_data.py after deployment. Note that this file has not been created yet; we will do that in Step 6.

Step 5 - Create the file manifest.yml with the following content:

---
applications:
- memory: 128MB
  disk_quota: 256MB
  random-route: true
  services:
  - <Name of your XSUAA service instance>
  - <Name of your connectivity instance>

Adjust the memory allocations as you wish, or use the values above.

In the services section, we explicitly bind the service instances to the app so that their details are available as environment variables and can be used later in the code. Don't forget to replace the service instance names above with your own.

Step 6 - Create the file read_data.py

Since this is a bigger code snippet, please retrieve it from here.

I have commented each step, so it should be self-explanatory.
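Since the full read_data.py lives behind the link, here is a condensed, hypothetical sketch of its core flow as described in this blog: take the xsuaa URL and the connectivity credentials from the parsed VCAP_SERVICES, fetch a connectivity JWT, then call the virtual OData URL through the proxy. It uses urllib instead of requests and takes a replaceable send function so it can be exercised without a network; the proxy key name onpremise_proxy_port is an assumption, and odata_url is assumed to request JSON (for example with $format=json).

```python
import base64
import json
import urllib.parse
import urllib.request


def _basic(user, secret):
    """Value for an HTTP Basic Authorization header."""
    return "Basic " + base64.b64encode(f"{user}:{secret}".encode()).decode()


def _get_token(send, xsuaa_url, client_id, client_secret):
    """Client-credentials token request, as in the cURL section."""
    req = urllib.request.Request(
        xsuaa_url.rstrip("/") + "/oauth/token",
        data=urllib.parse.urlencode(
            {"client_id": client_id, "grant_type": "client_credentials"}).encode(),
        headers={"Authorization": _basic(client_id, client_secret),
                 "Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )
    return json.loads(send(req))["access_token"]


def read_on_premise(vcap, odata_url, sap_user, sap_password, send=None):
    """Fetch the OData payload through the connectivity proxy and return it as a dict.

    vcap is the parsed VCAP_SERVICES; send(req) -> bytes performs the HTTP call.
    """
    xsuaa = vcap["xsuaa"][0]["credentials"]
    conn = vcap["connectivity"][0]["credentials"]
    if send is None:
        proxy = f"http://{conn['onpremise_proxy_host']}:{conn['onpremise_proxy_port']}"
        # Only plain-http traffic (the virtual host) uses the proxy; the https token call goes direct.
        opener = urllib.request.build_opener(urllib.request.ProxyHandler({"http": proxy}))

        def send(req):
            with opener.open(req, timeout=60) as resp:
                return resp.read()

    token = _get_token(send, xsuaa["url"], conn["clientid"], conn["clientsecret"])
    req = urllib.request.Request(odata_url, headers={
        "Authorization": _basic(sap_user, sap_password),
        "Proxy-Authorization": f"Bearer {token}",
    })
    return json.loads(send(req))
```

In a Flask app, a route can simply return this dict; Cloud Foundry passes the port to listen on in the PORT environment variable.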

Step 7 - Deploy the app

Open CMD and navigate to the folder where your files are stored, e.g. cd "C:\CF App"

Important info:

- If you don't have the Cloud Foundry CLI installed, install it before proceeding, since the next steps use CF commands.

- Ensure your SAP Cloud Connector is connected to your CF instance; otherwise the app receives no response and fails during deployment.

Log in to the SAP CF instance with the following command:

cf login

Then enter your username and password. Note that the password is not visible while you type, so go with the flow 🙂.

If you have several subaccounts, select the one you want to use for deployment.

Once you are logged in to the CF instance, run cf push <your application name> to deploy the app.

Once deployment is complete, you will get a success message that looks like this:

After deployment, navigate to the applications inside your space; the app with the name you deployed should be visible. When you click its URL, the response is displayed as an HTML page, something like this:

If you look at the metadata, it points to the virtual host, and the app pulls exactly the same data as the on-premise OData service, but from outside the on-premise environment.

With this approach, we can happily expose the data outside the SAP world.

However, this approach still has a few blocking points:

- The data is exposed to the open internet. If you are dealing with sensitive client data, no one would appreciate this, and a more secure app is needed.

- Destination and username/password details are still partly hard-coded.

- The data is exposed only as a basic response; we need to make it more meaningful and readable.
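As a first step toward a more readable response, note that SAP Gateway OData V2 services return JSON (when requested with $format=json) wrapped as {"d": {"results": [...]}}, with a __metadata object in every entry. A small stdlib sketch that strips that wrapper and writes the rows as CSV, close to the CSV target mentioned at the start, could look like this; the function names are hypothetical, and nested navigation properties are not flattened.

```python
import csv
import io


def odata_v2_rows(payload: dict) -> list:
    """Entity rows from an OData V2 JSON payload, without the '__metadata' entries."""
    data = payload["d"]
    # Collections come as {"d": {"results": [...]}}; a single entity as {"d": {...}}.
    entries = data["results"] if "results" in data else [data]
    return [{k: v for k, v in entry.items() if k != "__metadata"} for entry in entries]


def rows_to_csv(rows: list) -> str:
    """Serialize rows to CSV text, using the first row's keys as the header."""
    if not rows:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```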

In my next article, I will cover these topics and provide an improved solution. The final target, exposing this data to Data Hub, is also not covered yet, so I will try to sum everything up in another blog.

Hopefully this blog is of some use to someone, somewhere, someday 🙂!

- SAP Managed Tags:

- SAP Data Intelligence,

- OData,

- SAP BTP, Cloud Foundry runtime and environment
