SAP PO Async Message Processing Analysis


stephen_xue


09-20-2019

5:23 AM

In a single-stack (Java-only) SAP PO system, at least the following options control the number of message processing threads:

Let’s take the following story line to elaborate the functionalities of all above options.

Company A has a SAP PO system in single java stack, which has a bellowing scenario.

PO retrieves order messages from a JMS queue Q1 by using JMS sender channel and pushes the order messages to system Target01’s API via a REST Receiver channel.

The PO server has one cluster node and all configurations are default.

It works fine.

One day, a requirement has come that a new similar scenario needs to be built as below.

Whereas the velocity that messages dropped off into the JMS queue Q2, is a bit fast from the upstream systems. Problem has been found that

Even though the maximum 5 threads of JMS Send queue (JMS_http://sap.com/xi/XI/SystemSend) have been used, there are still some backlogs in the Q2.

Take into account that the system SAP PO installed has enough number of CPUs and enough memory, to solve the problem above, the number of cluster nodes has been increased from 1 to 2(SAP KBA # 1734360).

Since the maximum number of threads picking up messages from JMS queue has been doubled, we have enough workers picking up the messages from queue and system is working properly now.

Company A’s business keeps growing. There are more and more order messages dropped into the Q1 and Q2. The time spent to receive an Ack. (normally it is a HTTP status) from Target01 perspective is a bit longer than JMS sender channel retrieving messages from Q1 and Q2. Little by little it results in a large backlog inside the dispatcher queue.

The symptom is that lots of the messages are in the ‘To Be Delivered’ status in the message monitor and it takes long time to put through a message.

On the other side, Target01 says that even though it takes long time to receive one message, whereas the system has lots of capacity and can accept multiple incoming calls in parallel.

Therefore we can increase the value of messaging.connectionDefinition

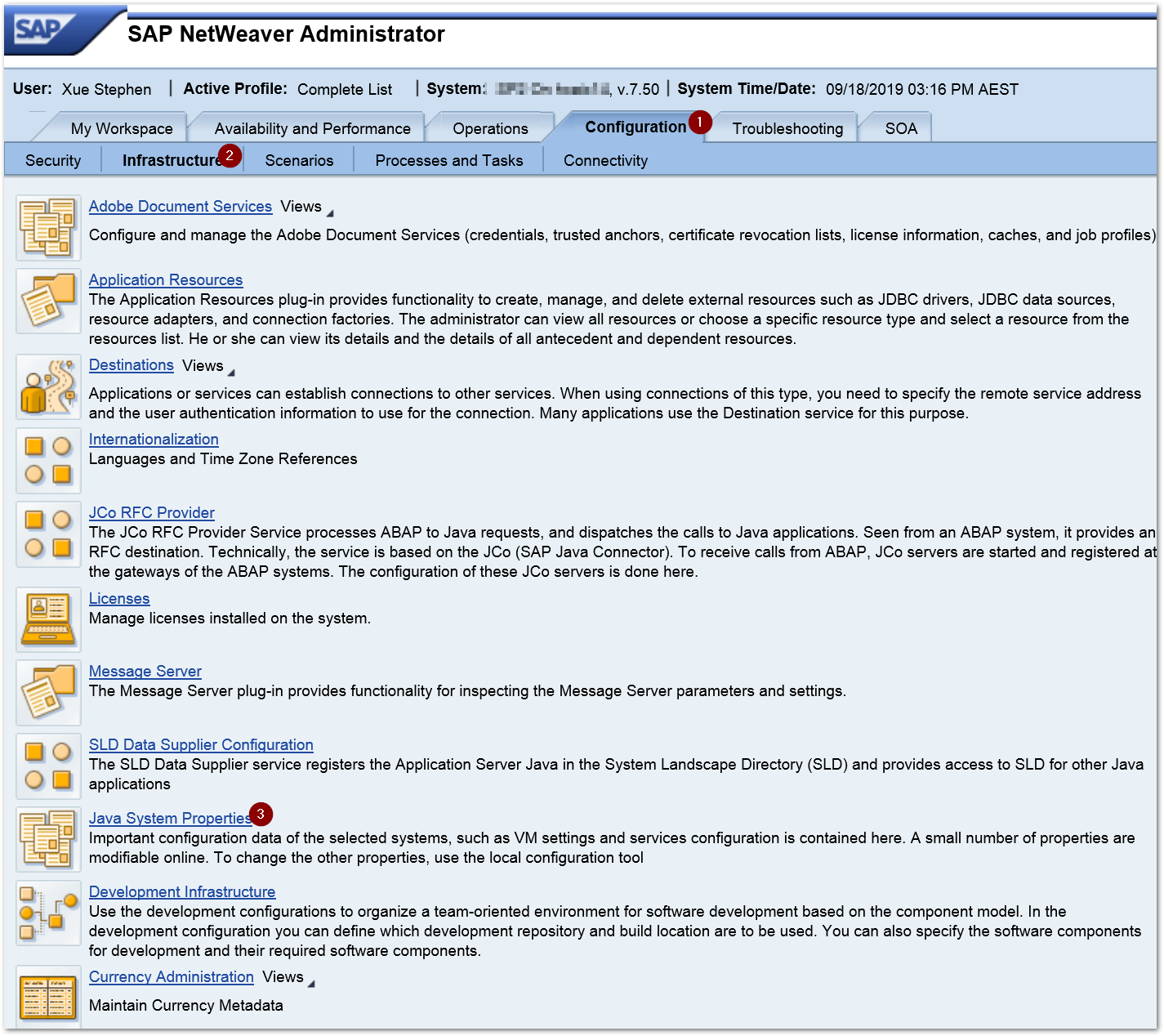

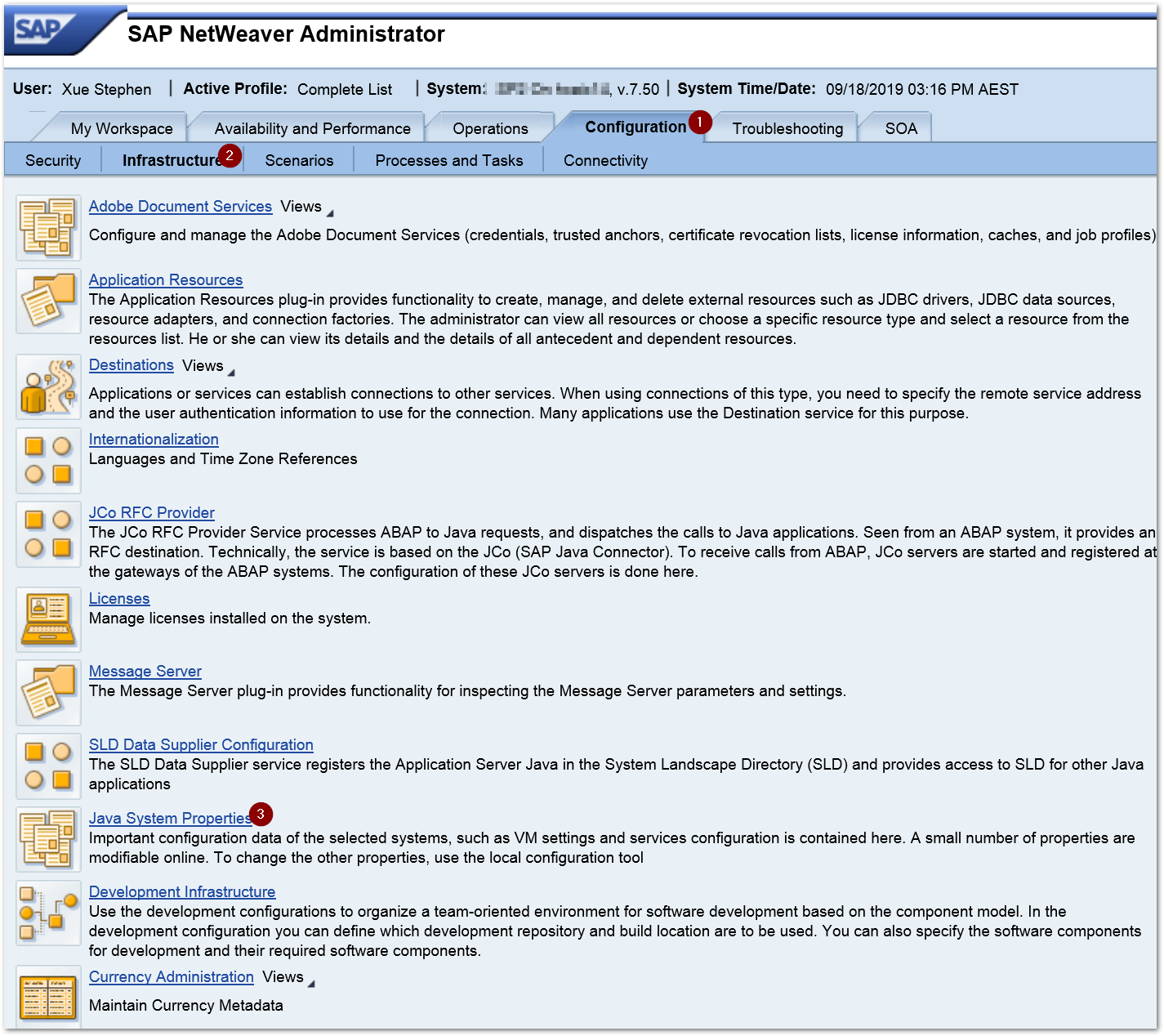

NWA--> Configuration-->Infrastructure-->Java Service Properties

Tab Services-->XPI Service: AF Core-->messaging.connectionDefinition

In this property, we can configure the number of Adapter specific queues. The default number is 5 per cluster node. Now we increase it to 20. Add the following string to the property and restart the J2EE service.

(name=JMS_http://sap.com/xi/XI/System, messageListener=localejbs/AFWListener, exceptionListener=localejbs/AFWListener, pollInterval=60000, pollAttempts=60, Send.maxConsumers=20, Recv.maxConsumers=5, Call.maxConsumers=5, Rqst.maxConsumers=5)

Since we have two cluster nodes now, altogether we have maximum 40 threads for all scenarios using JMS sender channels now.

After the configuration change, there are more threads picking up messages in the dispatcher queue. Even though the message process time is a bit slow on the Target01 side, with more threads (JMS send queue), the backlogs in dispatcher queue have been broomed.

As the business development of Company A, a new scenario has been add as below.

A new queue has been added to receive new master data (from upstream systems) and SAP PO synchronises the data to Target02. Since the size of a single master data is extremely huge, it took very long time to finish one call, say 1 minute something per one call.

Even though there are 3 scenarios in the system, soon we can find that all of 40 threads have been used up by scenario number 3. For scenario number 1 and 2, the Target01 is much faster than Target02, whereas there is no vacant threads (queue) assigned to process their messages. It still takes long time for these two scenarios to finish one process. Most of their messages are in status ‘To Be Delivered’ again.

NWA-->Configuration-->Infrastructure-->Java Service Properties

Tab Services-->XPI Service: Messaging System-->

To let the mechanism work, set property perInterface to true from the default value false. Then set value of property maxReceivers to 10. It means once configured, the maximum number of threads (adapter specific queue) per interface per cluster node is 10.

Remember to restart the J2EE service.

After restarting the service, we can see scenario number 3 has occupied 10 * 2 = 20 threads at most. Since the whole PO system has another 20 threads for scenario 1 and 2, these two scenarios will not have too many backlogs.

By using this configuration, the performance of one scenario will not impact the whole system.

A new scenario has been built up in Company A as below

Since Target03 is a server running a PC, it cannot accept too many connections in parallel. During the performance test, PO find lots of HTTP 500 issue while pushes the messages into Target03. The technical help of Target03 indicates that the maximum connections for Target03 is 4.

As per the configuration done for maxReceivers is 10, PO can generate maximum 20 connection to Target03 which is much larger than its capacity and it leads the HTTP 500 connection issue.

Configuration and Monitor Home-->Configuration and Administration-->Adapter Engine-->PI Receiver Parallelism

Note: This option will only work if the previous step has been configured. When access the view, you will see message as below

It means you can go on configure it.

If it is like this

It means you need to specify a maximum number of threads per interface over the system wide at first, then you can go on configure here to restrict number of connections for a specific interface.

After clicking the create button, input the Party/Component/Interface/Namespace information to the table below.

Remember, we have 2 system cluster nodes, the count of interface should be 2 here so that the maximum number connections will not excess 4.

The configuration will be working right after clicking the save button. There is no need to restart J2EE service.

Company A has a new business. Message is supposed to be retrieved from a new JMS queue and pushed through as soon as possible for a better user experience. Whereas when scenario 1/2/3 have large amount of messages to be sent, say data synchronization process, messages of this new scenario will be delayed for a long time in the queue. In order to solve the problem, we need to let these messages cut line.

Configuration and Monitor HomeàConfiguration and AdministrationàAdapter EngineàMessage Prioritization

Configure a rule like below

Without this configuration, the priority of a scenario is Normal, which will be assigned 20% of system resource.

With Priority High, system will assign 75% resource to the scenario by default;

With Priority Low, system will assign 5% resource to the scenario by default;

No J2EE restart is needed for this configuration.

Since more resource has been assigned to the scenario, a message of this scenario in the dispatcher queue will have larger chance to be picked by the Adapter Specific Queue, by which means the message has cut in the line.

Asynchronous Message Process Sequence inside SAP PO

I cannot find any official document on how asynchronous message has been processed step by step inside SAP PO. Here I am trying to elaborate it according to my feeling. If I am incorrect, you are very welcome to point it out. Thanks

Step 1. When a message has been pushed to an endpoint exposed by SAP PO or polled by a channel like FILE/JDBC/JMS, it arrives in the adapter engine at first then be put into the ‘Adapter Specific Send Queue’. The name of queue is <Adapter Type>_http://sap.com/xi/XI/SystemSend

For examples

Note: if the message is coming from BPM, the queue is JPR_http://sap.com/xi/XI/SystemSend. JPR stands for Java Proxy Runtime.

Step2. A thread in the adapter specific send queue will put the message into the dispatcher queue;

Step3. A thread in the adapter specific send queue picks up the message from the dispatcher queue based on factors like number of free threads, messaging.connectionDefinition, messaging.system.queueParallelism.perInterface and prioritization etc.

Step 4. The thread puts the message to receiver determination defined in the ICO to which message belongs. After that a receiver component stamp will be sticked to the message and the message will be placed again into the dispatcher queue. Once done, the thread will be released and it will try to pick up a new message from the dispatcher queue. The red rectangle shows the lifecycle of the thead.

Step 5. A thread in the adapter specific send queue picks up the message from the dispatcher queue based on factors like number of of free threads, messaging.system.queueParallelism.perInterface, ReceiverParallelism and prioritization etc.

Step 6. The thread pushes the message to the mapping program.

Step 7. The thread pushes the target message to the receiver channel. Once the receiver channel has got the HTTP status (200, 202, 503 etc.) back from the receiver system, the thread will be released and it will try to pick up a new message from dispatcher queue. The green rectangle shows the lifecycle of the thread.

Reference:

https://blogs.sap.com/2011/01/26/tuning-the-pi-messaging-system-queues/

- Number of cluster nodes

- messaging.connectionDefinition

- messaging.system.queueParallelism.perInterface

- ReceiverParallelism

- MessagePrioritization

The following storyline illustrates what each of these options does.

Company A runs a single-stack (Java-only) SAP PO system with the following scenario.

PO retrieves order messages from a JMS queue, Q1, through a JMS sender channel and pushes them to the API of system Target01 through a REST receiver channel.

The PO server has one cluster node and all configuration settings are at their defaults.

It works fine.

1. Number of cluster nodes

One day, a requirement arrives for a new, similar scenario that reads from a second JMS queue, Q2.

However, the upstream systems drop messages into Q2 rather quickly, and a problem shows up:

Even though all 5 threads (the maximum) of the JMS send queue (JMS_http://sap.com/xi/XI/SystemSend) are in use, there is still a backlog in Q2.

Because the host on which SAP PO is installed has enough CPUs and memory, the number of cluster nodes is increased from 1 to 2 (SAP KBA 1734360) to solve the problem.

Since the maximum number of threads picking up messages from the JMS queue has doubled, there are now enough workers to pick up the messages from the queue, and the system works properly.

2. messaging.connectionDefinition

Company A’s business keeps growing, and more and more order messages are dropped into Q1 and Q2. Target01 takes somewhat longer to return an acknowledgement (normally an HTTP status) than the JMS sender channel takes to retrieve messages from Q1 and Q2. Little by little, this builds up a large backlog in the dispatcher queue.

The symptom is that many messages are in status ‘To Be Delivered’ in the message monitor, and it takes a long time to put a message through.

On the other hand, the Target01 team says that although a single message takes a long time to process, the system has plenty of capacity and can accept multiple incoming calls in parallel.

Therefore we can increase the value of messaging.connectionDefinition.

Solution

NWA--> Configuration-->Infrastructure-->Java Service Properties

Tab Services-->XPI Service: AF Core-->messaging.connectionDefinition

In this property, we can configure the number of threads (consumers) of the adapter-specific queues. The default is 5 per cluster node; here we increase it to 20 for the JMS send queue. Add the following string to the property and restart the J2EE service.

(name=JMS_http://sap.com/xi/XI/System, messageListener=localejbs/AFWListener, exceptionListener=localejbs/AFWListener, pollInterval=60000, pollAttempts=60, Send.maxConsumers=20, Recv.maxConsumers=5, Call.maxConsumers=5, Rqst.maxConsumers=5)

Since we now have two cluster nodes, there are at most 2 * 20 = 40 threads in total for all scenarios that use JMS sender channels.

After the configuration change, more threads pick up messages from the dispatcher queue. Even though message processing is still a bit slow on the Target01 side, the additional threads in the JMS send queue clear the backlog in the dispatcher queue.
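The thread arithmetic above can be checked with a short sketch. It is only an illustration: the helper names `parse_entry` and `total_send_threads` are hypothetical and not part of any SAP tooling, and the parser assumes the simple comma-separated `key=value` form shown in the entry above.

```python
# The connectionDefinition entry from the text above, copied verbatim.
ENTRY = ("(name=JMS_http://sap.com/xi/XI/System, "
         "messageListener=localejbs/AFWListener, "
         "exceptionListener=localejbs/AFWListener, "
         "pollInterval=60000, pollAttempts=60, "
         "Send.maxConsumers=20, Recv.maxConsumers=5, "
         "Call.maxConsumers=5, Rqst.maxConsumers=5)")


def parse_entry(entry: str) -> dict:
    """Split one parenthesised entry into a key/value dictionary (hypothetical helper)."""
    body = entry.strip()
    if body.startswith("(") and body.endswith(")"):
        body = body[1:-1]
    pairs = (item.split("=", 1) for item in body.split(","))
    return {key.strip(): value.strip() for key, value in pairs}


def total_send_threads(entry: str, cluster_nodes: int) -> int:
    """Send.maxConsumers applies per cluster node, so multiply by the node count."""
    return int(parse_entry(entry)["Send.maxConsumers"]) * cluster_nodes


print(total_send_threads(ENTRY, cluster_nodes=2))  # 40
```

With one cluster node the same entry yields 20 send threads, which is why both the node count and the property value matter for the total.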

3. messaging.system.queueParallelism.perInterface

As Company A’s business develops, a new scenario is added.

A new queue has been added to receive new master data from upstream systems, and SAP PO synchronises the data to Target02. Because a single master data record is extremely large, one call takes a very long time, say a little over 1 minute.

Even though there are 3 scenarios in the system, we soon find that all 40 threads are used up by scenario 3. Target01 is much faster than Target02, but no free threads are left to process the messages of scenarios 1 and 2. These two scenarios still take a long time to finish one message, and most of their messages are in status ‘To Be Delivered’ again.

Solution

NWA-->Configuration-->Infrastructure-->Java Service Properties

Tab Services-->XPI Service: Messaging System-->

- messaging.system.queueParallelism.perInterface

- messaging.system.queueParallelism.maxReceivers

To enable the mechanism, change property perInterface from its default value false to true. Then set property maxReceivers to 10. Once configured, the maximum number of threads (in the adapter-specific queue) per interface per cluster node is 10.

Remember to restart the J2EE service.

After the restart, scenario 3 occupies at most 10 * 2 = 20 threads. Since the PO system still has another 20 threads for scenarios 1 and 2, these two scenarios will not build up large backlogs.

With this configuration, the performance of one scenario no longer impacts the whole system.
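As a rough illustration of the numbers in this section, the following sketch computes how many threads a single interface can hold and how many remain for the others. The function name `per_interface_limits` is hypothetical; it models only the arithmetic described here, not the actual scheduling inside the messaging system.

```python
def per_interface_limits(send_threads_per_node: int,
                         max_receivers: int,
                         cluster_nodes: int) -> tuple:
    """Return (threads one interface can hold, threads left for other interfaces).

    Assumes perInterface is set to true, so a single interface is capped
    at max_receivers threads on each cluster node.
    """
    per_node_cap = min(max_receivers, send_threads_per_node)
    interface_cap = per_node_cap * cluster_nodes
    total_threads = send_threads_per_node * cluster_nodes
    return interface_cap, total_threads - interface_cap


# Values from the story: 20 send threads per node, maxReceivers=10, 2 nodes.
print(per_interface_limits(20, 10, 2))  # (20, 20)
```

If maxReceivers were left at a value equal to or larger than the per-node thread count, the second number would be 0, which is the starvation situation described before the change.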

4. ReceiverParallelism

A new scenario has been built at Company A.

Since Target03 is a server running on a PC, it cannot accept many connections in parallel. During the performance test, PO gets many HTTP 500 errors while pushing messages to Target03. The Target03 technical support indicates that Target03 accepts at most 4 connections.

With maxReceivers set to 10, PO can open up to 20 connections to Target03 (10 per cluster node), which is far more than Target03 can handle and leads to the HTTP 500 errors.

Solution

Configuration and Monitor Home-->Configuration and Administration-->Adapter Engine-->PI Receiver Parallelism

Note: This option only works if the previous step (messaging.system.queueParallelism.perInterface) has been configured. When you open the view, a message tells you whether that is the case.

If the message confirms that parallelism per interface is active, you can go on to configure the view.

If it does not, you first need to specify the system-wide maximum number of threads per interface (the previous step); after that you can restrict the number of connections for a specific interface here.

After clicking the Create button, enter the Party/Component/Interface/Namespace information in the table.

Remember that the system has 2 cluster nodes, so the count for the interface should be 2 here; that way the maximum number of connections does not exceed 4.

The configuration takes effect as soon as you click the Save button. There is no need to restart the J2EE service.
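The per-node count can be derived from the target's connection limit as in the sketch below. The function names are hypothetical, and the sketch assumes, as the text does, that the configured count applies to each cluster node separately.

```python
def worst_case_connections(per_node_limit: int, cluster_nodes: int) -> int:
    """Upper bound on parallel connections when the limit applies per node."""
    return per_node_limit * cluster_nodes


def receiver_parallelism_count(target_max_connections: int,
                               cluster_nodes: int) -> int:
    """Largest per-node count that keeps the total within the target's limit."""
    if cluster_nodes < 1:
        raise ValueError("cluster_nodes must be at least 1")
    count = target_max_connections // cluster_nodes
    if count < 1:
        raise ValueError("the target accepts fewer connections than there are nodes")
    return count


print(worst_case_connections(10, 2))     # 20, the situation before the change
print(receiver_parallelism_count(4, 2))  # 2, the value entered in the view
```

Integer division rounds down on purpose: with 3 cluster nodes and a limit of 4, a count of 1 per node keeps the total at 3 rather than risking 6.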

5. MessagePrioritization

Company A has a new line of business. Messages are to be retrieved from a new JMS queue and pushed through as quickly as possible for a better user experience. However, when scenarios 1/2/3 have a large number of messages to send, for example during a data synchronization run, messages of the new scenario are delayed in the queue for a long time. To solve the problem, we need to let these messages jump the queue.

Solution

Configuration and Monitor Home-->Configuration and Administration-->Adapter Engine-->Message Prioritization

Configure a rule for the new scenario and set its priority to High.

Without this configuration, a scenario has priority Normal and is assigned 20% of the system resources by default.

With priority High, the system assigns 75% of the resources to the scenario by default.

With priority Low, the system assigns 5% of the resources to the scenario by default.

No J2EE restart is needed for this configuration.

Since more resources are assigned to the scenario, a message of this scenario in the dispatcher queue has a higher chance of being picked up by the adapter-specific queue; in effect, the message jumps the queue.
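To make the idea of a "higher chance of being picked up" concrete, here is a small weighted-selection sketch using the default 75/20/5 split. It is an assumption-laden illustration only: how SAP PO actually applies these percentages internally is not described in this post, and `pick_priority` is a hypothetical function.

```python
import random
from collections import Counter

# Default resource shares per priority, as stated in the text above.
WEIGHTS = {"High": 75, "Normal": 20, "Low": 5}


def pick_priority(waiting: dict, rng: random.Random):
    """Pick the priority class to serve next, weighted by its resource share.

    Only classes that currently have waiting messages take part, so an
    empty High class does not block Normal or Low messages.
    """
    candidates = [p for p in WEIGHTS if waiting.get(p, 0) > 0]
    if not candidates:
        return None
    weights = [WEIGHTS[p] for p in candidates]
    return rng.choices(candidates, weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed so repeated runs give the same counts
waiting = {"High": 1, "Normal": 1, "Low": 1}
counts = Counter(pick_priority(waiting, rng) for _ in range(10_000))
print(counts)
```

Over many draws, High messages are served far more often than Normal ones, and Low ones rarely, which matches the behaviour described above.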

Appendix

Asynchronous Message Process Sequence inside SAP PO

I cannot find any official documentation on how an asynchronous message is processed step by step inside SAP PO. Here I try to describe it based on my own understanding. If I am wrong, you are very welcome to point it out. Thanks.

Step 1. When a message is pushed to an endpoint exposed by SAP PO, or polled by a channel such as FILE/JDBC/JMS, it first arrives in the adapter engine and is then put into the ‘Adapter Specific Send Queue’. The queue name is <Adapter Type>_http://sap.com/xi/XI/SystemSend

For example:

- if the message is picked by a JMS channel, the queue name will be JMS_http://sap.com/xi/XI/SystemSend;

- if the message is pushed to a RESTful Endpoint of SAP PO, the queue name will be REST_http://sap.com/xi/XI/SystemSend;

Note: if the message is coming from BPM, the queue is JPR_http://sap.com/xi/XI/SystemSend. JPR stands for Java Proxy Runtime.
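The naming pattern can be written as a one-line helper. This is a sketch: `adapter_queue_name` is a hypothetical function, and only the `Send` names are stated in the text. The other suffixes (`Recv`, `Call`, `Rqst`) are inferred from the keys of the messaging.connectionDefinition entry and should be treated as an assumption.

```python
SYSTEM_NAMESPACE = "http://sap.com/xi/XI/System"

# Suffixes inferred from the connectionDefinition keys (Send/Recv/Call/Rqst).
QUEUE_KINDS = ("Send", "Recv", "Call", "Rqst")


def adapter_queue_name(adapter_type: str, queue_kind: str = "Send") -> str:
    """Build <Adapter Type>_http://sap.com/xi/XI/System<kind> for an adapter."""
    if queue_kind not in QUEUE_KINDS:
        raise ValueError(f"unknown queue kind: {queue_kind}")
    return f"{adapter_type}_{SYSTEM_NAMESPACE}{queue_kind}"


for adapter in ("JMS", "REST", "JPR"):
    print(adapter_queue_name(adapter))
```

The loop prints the three send queue names mentioned above for JMS, REST and BPM (JPR).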

Step 2. A thread in the adapter-specific send queue puts the message into the dispatcher queue.

Step 3. A thread in the adapter-specific send queue picks up the message from the dispatcher queue, based on factors such as the number of free threads, messaging.connectionDefinition, messaging.system.queueParallelism.perInterface and prioritization.

Step 4. The thread passes the message to the receiver determination defined in the ICO to which the message belongs. After that, a receiver component stamp is attached to the message and the message is placed into the dispatcher queue again. Once this is done, the thread is released and tries to pick up a new message from the dispatcher queue. The red rectangle shows the lifecycle of the thread.

Step 5. A thread in the adapter-specific send queue picks up the message from the dispatcher queue, based on factors such as the number of free threads, messaging.system.queueParallelism.perInterface, ReceiverParallelism and prioritization.

Step 6. The thread pushes the message to the mapping program.

Step 7. The thread pushes the target message to the receiver channel. Once the receiver channel has received the HTTP status (200, 202, 503, etc.) back from the receiver system, the thread is released and tries to pick up a new message from the dispatcher queue. The green rectangle shows the lifecycle of the thread.

Reference:

https://blogs.sap.com/2011/01/26/tuning-the-pi-messaging-system-queues/

- SAP Managed Tags:

- SAP Process Integration
