Technology Blogs by SAP


06-24-2019, 8:31 AM

This blog post is part of the series My Learning Journey for Hadoop. In this blog post I will focus on:

"A picture is worth a thousand words" - Keeping that in mind, I have tried to explain with less words and more images. Let me know know in comment if this is helpful or not 🙂

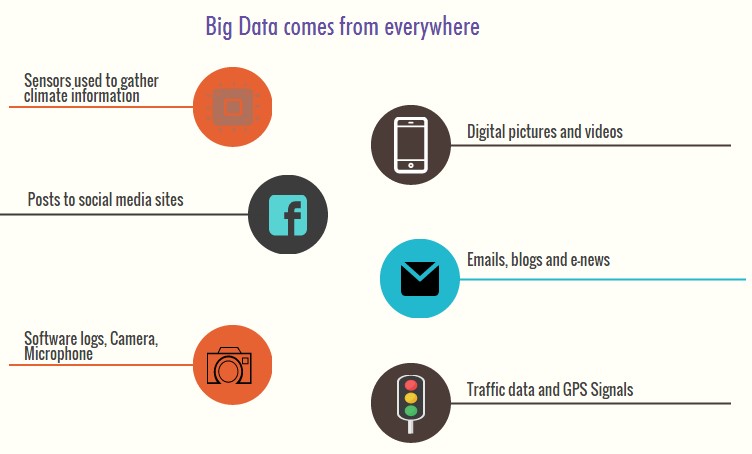

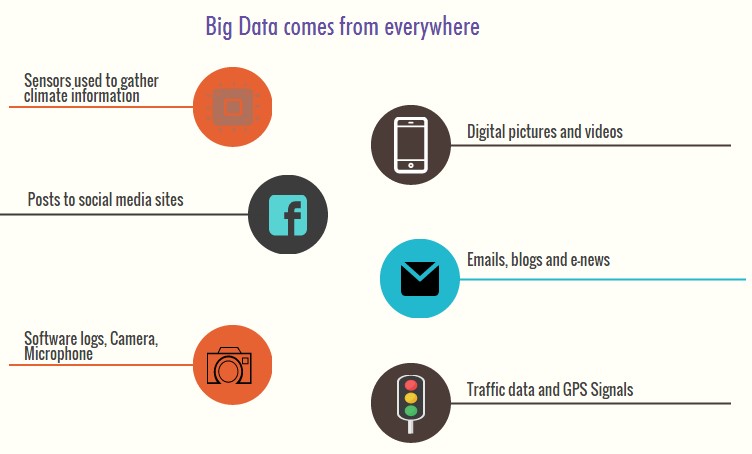

The data coming from everywhere for example

All these together constitute Big Data.

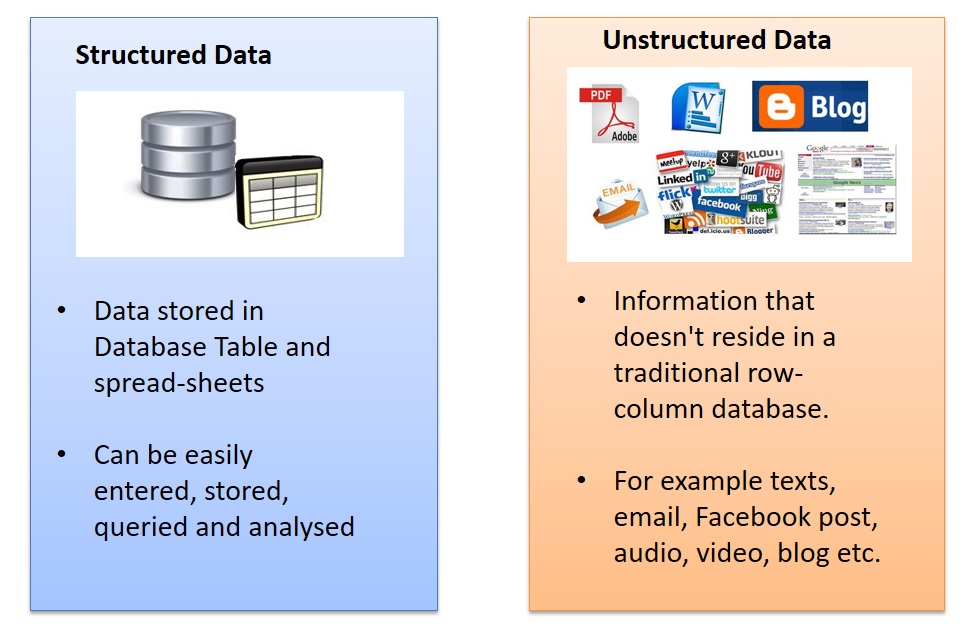

Large collection of structured and unstructured data that can be captured, stored, aggregated, analyzed and communicated to make better business decisions is called Big Data.

The size of available data is growing today exponentially. A text file is a few kilobytes, a sound file is a few megabytes while a full-length movie is a few gigabytes.

More sources of data are getting added on continuous basis. It is very common to have Terabytes and Petabytes of the storage system for enterprises. As the database grows the applications and architecture built to support the data needs to be changed quite often.

The data growth and social media explosion have changed how we look at the data. Initially, companies analyzed data using a batch process. One takes a chunk of data, submits a job to the server and waits for output. That process works when the incoming data rate is slower.

With the new sources of data such as social and mobile applications, the batch process breaks down. Today people reply on social media to update them with the latest happening. On social media sometimes a few seconds old messages (a tweet, status updates etc.) is not something interests users. They often discard old messages and pay attention to recent updates. The data movement is now almost real time and the update window has reduced to fractions of the seconds.

From excel tables and databases, data structure has changed to lose its structure and to add hundreds of formats. Pure text, photo, audio, video, web, GPS data, sensor data, relational data bases, documents, SMS, pdf, flash etc.

Now we no longer have control over the input data format. Structure can no longer be imposed like in the past to keep control over the analysis. As new applications are introduced new data formats come to life. The real world has data in many different formats and that is the challenge we need to overcome with the Big Data.

Now let us see why we need Hadoop for Big Data.

If relational databases can solve your problem, then you can use it but with the origin of Big Data, new challenges got introduced which traditional database system couldn’t solve fully.

Let us understand these challenges in more details.

Challenge 1:

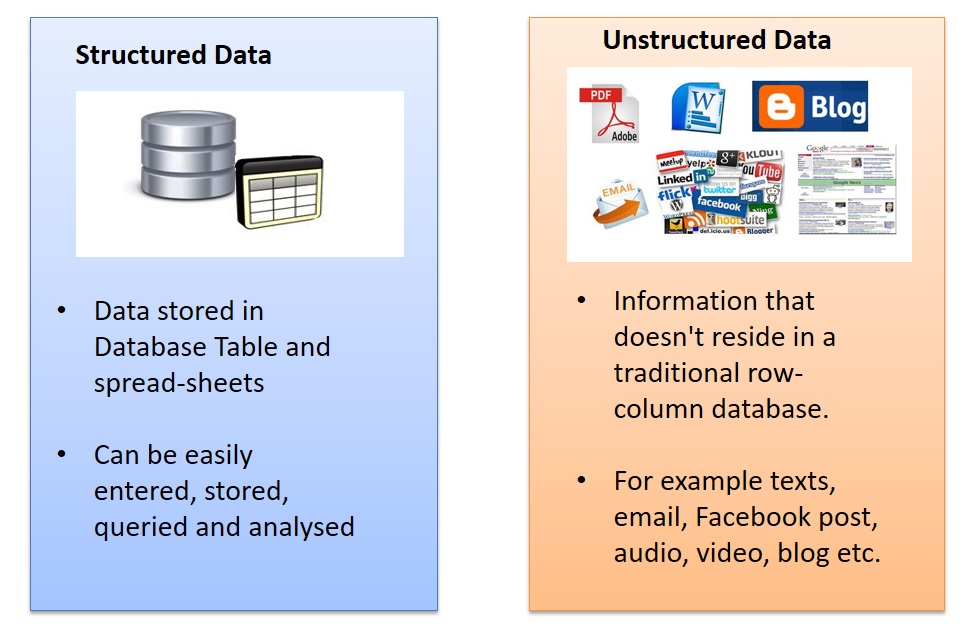

Big Data has got variety of data means along with structured data which relational databases can handle very well, Big Data also includes unstructured data (text, log, audio, streams, video stream, sensor, GPS data). The traditional databases require the database schema to be created in ADVANCE to define the data how it would look like which makes it harder to handle Big unstructured data.

Challenge 2:

Big Data is getting generated at very high speed. The traditional databases are not designed to handle database insert/update rates required to support the speed at which Big Data arrives or needs to be analyzed.

Challenge 3:

Big Data is data in Zettabytes, growing with exponential rate. If the data to be processed is in the degree of Terabytes and petabytes, it is more appropriate to process them in parallel independent tasks and collate the results to give the output. Traditional database approach can’t handle this.

To handle these challenges a new framework came into existence, Hadoop.

Hadoop is a frame work to handle vast volume of structured and unstructured data in a distributed manner.

It’s very important to know that Hadoop is not replacement of traditional database.

Unlike RDBMS where you can query in real-time, the Hadoop process takes time and doesn’t produce immediate results. Hadoop is a computing architecture, not a database.

Check the blog series My Learning Journey for Hadoop or directly jump to next article Introduction to Hadoop in simple words

- What is Big Data?

- Why Hadoop came into existence?

"A picture is worth a thousand words" - Keeping that in mind, I have tried to explain with fewer words and more images. Let me know in the comments whether this is helpful or not 🙂

What is Big Data?

Where does Big Data come from?

Data comes from everywhere, for example:

- In the last 10-15 minutes on Facebook, you would see millions of shared links, event invites, friend requests, uploaded photos and comments

- Terabytes of data generated through Twitter feeds in the last few hours

- Consumer product companies and retail organizations monitoring social media like Facebook and Twitter to get an unprecedented view into customer behaviour, preferences, and product perception

- GPS data from mobile devices

- Weblogs, email text and email attachments

- Sensors used to gather climate information

- Posts to social media sites

- Purchase transaction records, and much more

All these together constitute Big Data.

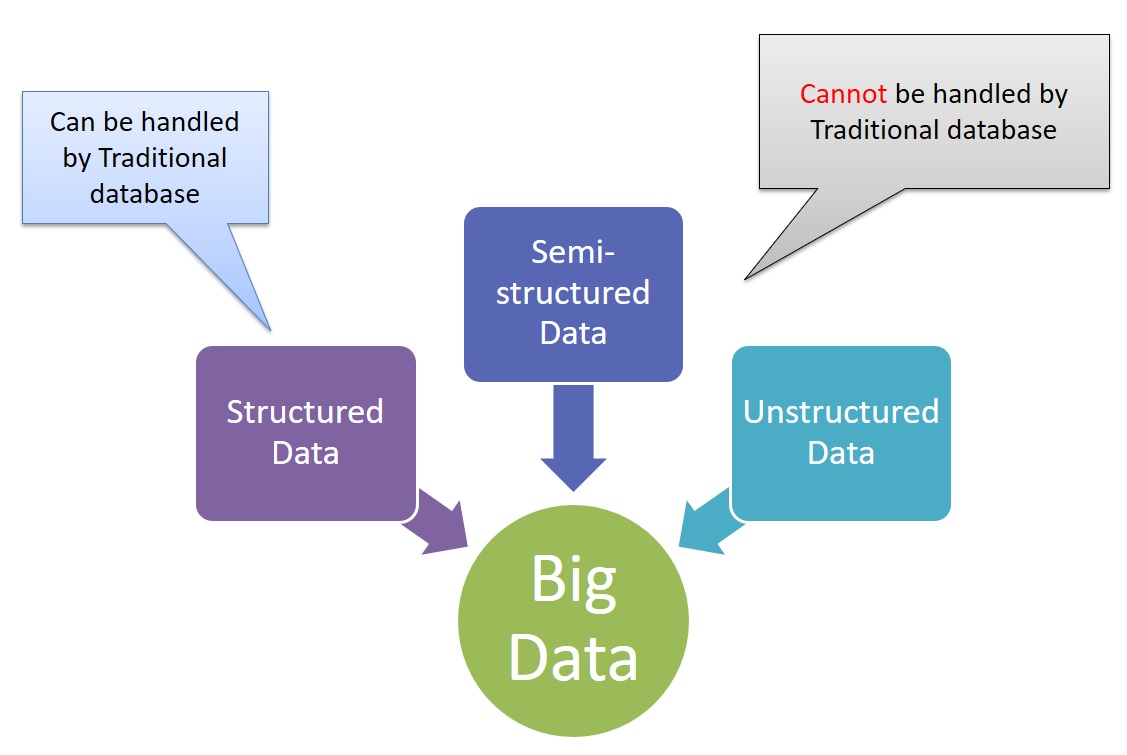

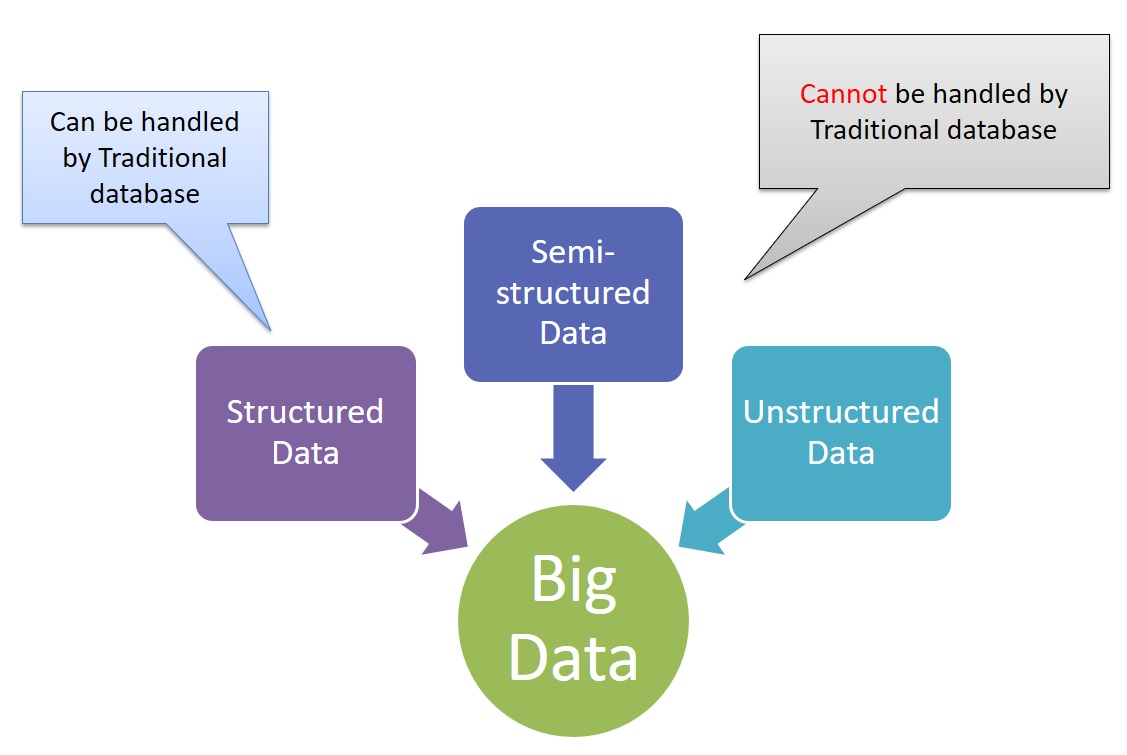

Big Data contains both Structured and Unstructured Data

A large collection of structured and unstructured data that can be captured, stored, aggregated, analyzed and communicated to make better business decisions is called Big Data.

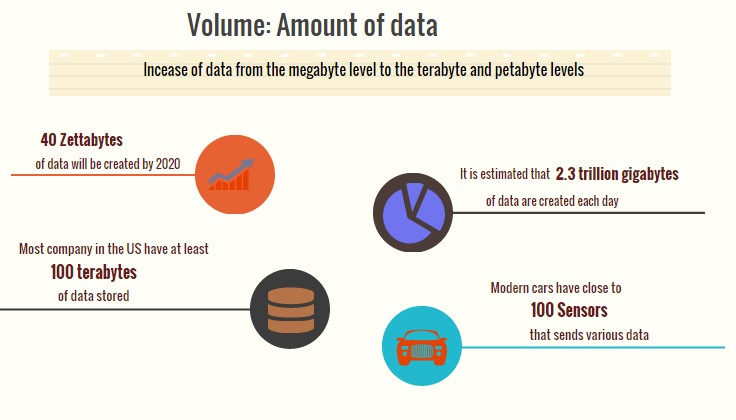

Big Data is Growing Fast

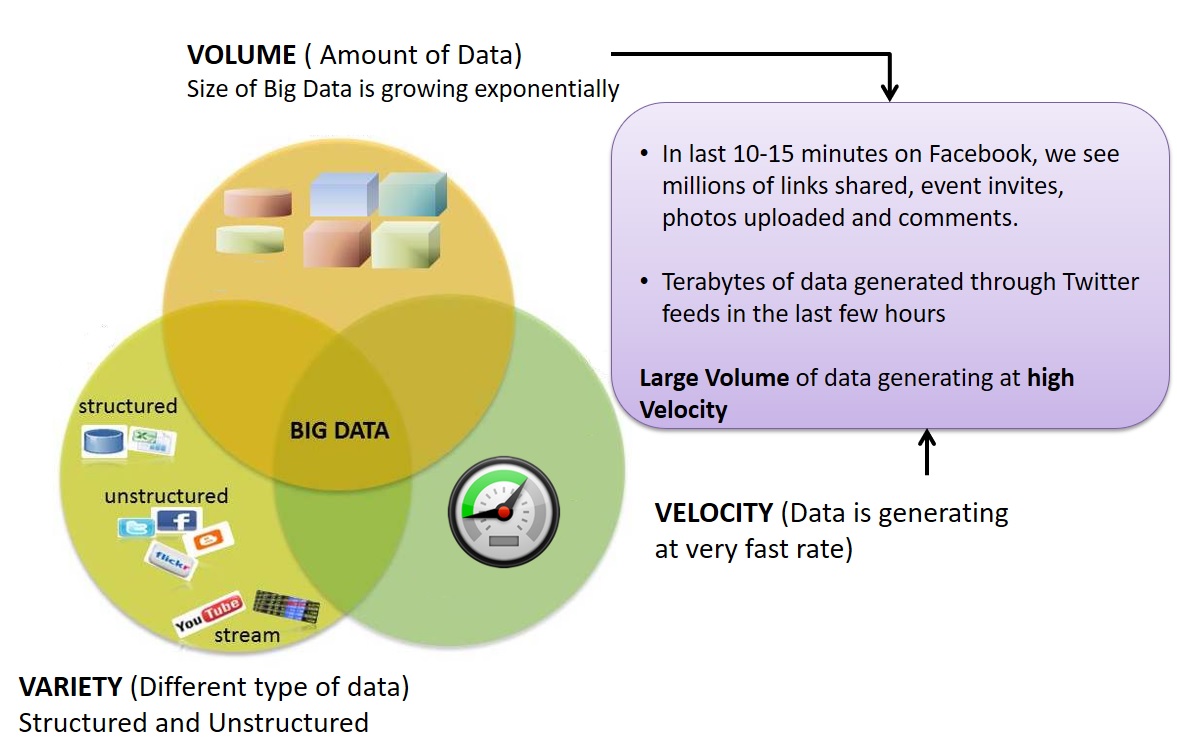

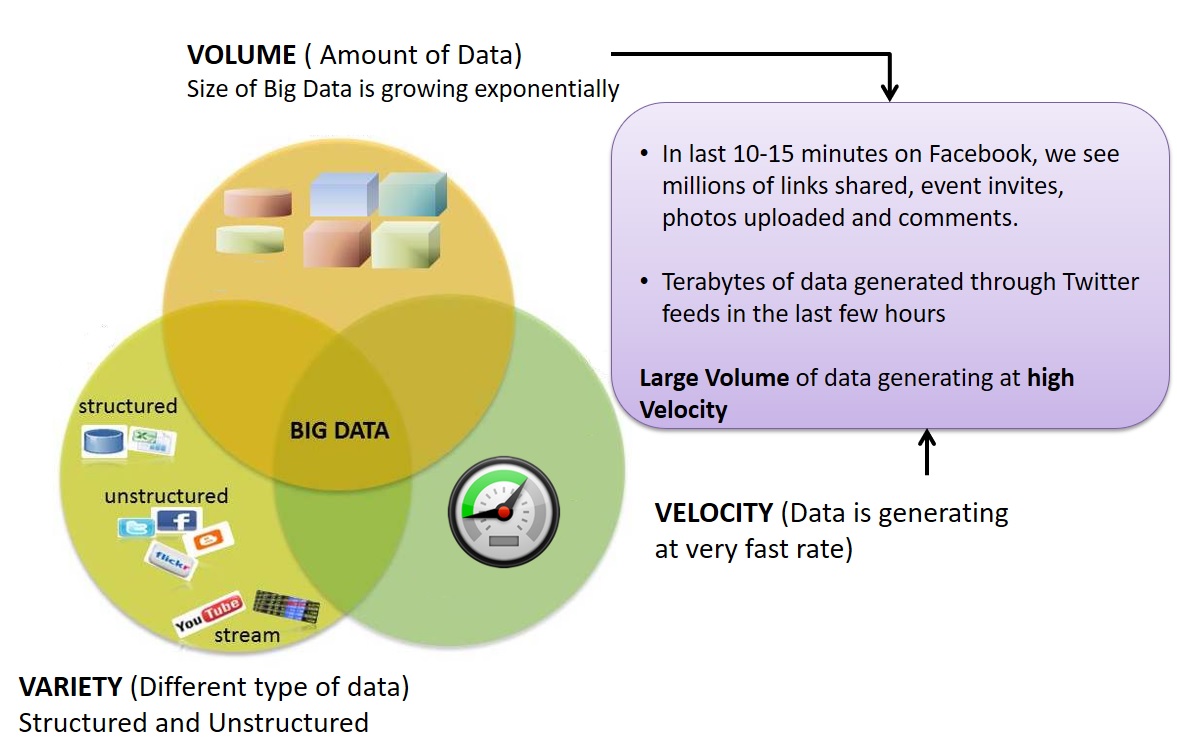

The 3 Vs (Volume, Variety and Velocity) that define Big Data

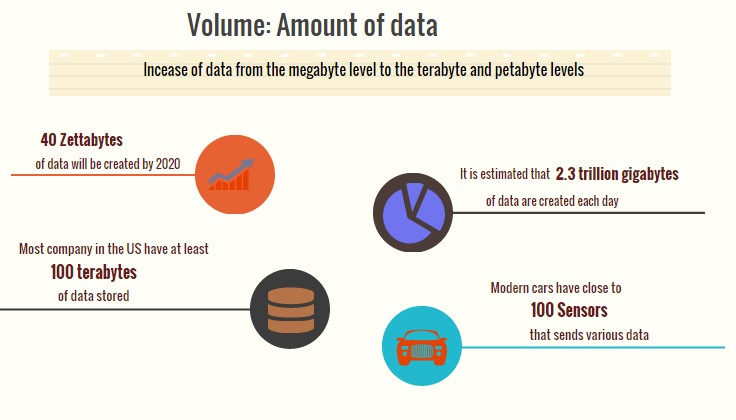

Volume refers to the amount of data

The amount of available data is growing exponentially. A text file is a few kilobytes, a sound file a few megabytes, and a full-length movie a few gigabytes.

New data sources are added continuously. It is very common for enterprises to have storage systems holding terabytes or petabytes. As the database grows, the applications and architecture built to support the data need to be changed quite often.
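To put these orders of magnitude side by side, here is a minimal Python sketch of a size formatter. It assumes decimal (SI) units, where each step is 1,000 times the previous one (binary units such as KiB and MiB use 1,024 instead), and the example sizes are illustrative values, not measurements from any real system.

```python
# Decimal (SI) storage units: each step is 1,000 times the previous one.
UNITS = ["B", "KB", "MB", "GB", "TB", "PB", "EB", "ZB"]


def human_size(num_bytes: float) -> str:
    """Pick the first unit in which the value drops below 1,000."""
    value = float(num_bytes)
    for unit in UNITS[:-1]:
        if value < 1000:
            return f"{value:.1f} {unit}"
        value /= 1000
    # Anything this large is reported in zettabytes.
    return f"{value:.1f} {UNITS[-1]}"


if __name__ == "__main__":
    # Illustrative sizes only (assumed), matching the orders of magnitude in the text.
    examples = {
        "text file": 4_000,
        "sound file": 5_000_000,
        "full-length movie": 2_000_000_000,
        "enterprise storage": 3 * 10**15,
        "one zettabyte": 10**21,
    }
    for name, size in examples.items():
        print(f"{name:>20}: {human_size(size)}")
```

Each row in the output is a factor of a thousand or more away from the one before it, which is why storage and processing designs that work at one scale tend to need rework at the next.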

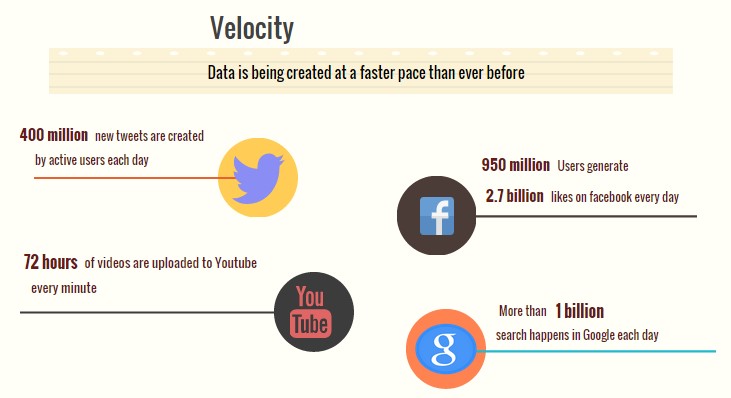

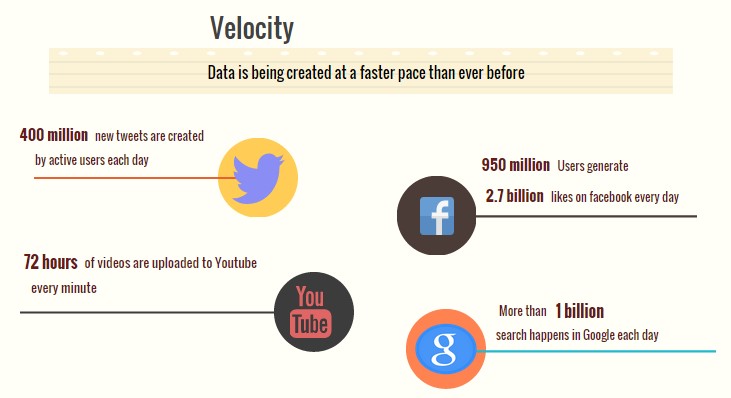

Velocity refers to the speed at which data is generated and processed

Data growth and the social media explosion have changed how we look at data. Initially, companies analyzed data using a batch process: you take a chunk of data, submit a job to the server and wait for the output. That process works when data arrives slowly.

With new data sources such as social and mobile applications, the batch process breaks down. Today people rely on social media to keep them up to date with the latest happenings. On social media, even a message that is a few seconds old (a tweet, a status update, etc.) may no longer interest users; they often discard old messages and pay attention to recent updates. Data movement is now almost real time, and the update window has shrunk to fractions of a second.
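The difference between the two processing styles can be sketched in a few lines of Python. This is a simplified illustration, not how any particular streaming engine works: the `(timestamp, text)` event shape and the `window_seconds` parameter are assumptions made for the example. The batch job produces one answer only after the whole chunk has been collected, while the streaming job updates its answer on every arriving event and forgets messages older than the window, much like users ignoring stale updates.

```python
from collections import deque
from typing import Iterable, Iterator

# Hypothetical event shape: (arrival time in seconds, message text).
Event = tuple[float, str]


def batch_job(events: list[Event]) -> int:
    """Batch style: runs once, after the whole chunk has been collected."""
    return len(events)


def streaming_job(events: Iterable[Event], window_seconds: float) -> Iterator[int]:
    """Streaming style: after each arriving event, report how many messages
    arrived within the last `window_seconds`, discarding older ones."""
    window: deque[Event] = deque()
    for ts, text in events:
        window.append((ts, text))
        # Evict messages that have fallen out of the time window.
        while window and window[0][0] <= ts - window_seconds:
            window.popleft()
        yield len(window)


if __name__ == "__main__":
    feed = [(0.0, "tweet A"), (0.5, "status B"), (1.2, "tweet C"), (3.0, "tweet D")]
    print("batch result:", batch_job(feed))
    print("streaming results:", list(streaming_job(feed, window_seconds=1.0)))
```

The streaming version never needs the full data set in memory; it only keeps the messages that are still recent enough to matter.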

Variety refers to the number of types of data

Data has moved from Excel tables and databases toward loosely structured or unstructured data in hundreds of formats: pure text, photos, audio, video, web pages, GPS data, sensor data, relational databases, documents, SMS, PDF, Flash, etc.

We no longer have control over the input data format, and structure can no longer be imposed as in the past to keep the analysis under control. As new applications are introduced, new data formats come to life. Real-world data comes in many different formats, and that is the challenge Big Data has to overcome.
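One common way to cope with formats you do not control is to keep a registry of parsers and to store unknown inputs raw instead of rejecting them. The sketch below assumes that each input arrives with one of three hypothetical format labels (`csv`, `json`, `text`); a real pipeline would detect or receive these labels in its own way. It also assumes that JSON inputs are objects.

```python
import csv
import io
import json
from typing import Callable

# Registry of known formats; each parser turns raw text into a dict.
# The format labels are hypothetical and only serve the illustration.
PARSERS: dict[str, Callable[[str], dict]] = {
    "csv": lambda raw: {"fields": next(csv.reader(io.StringIO(raw)))},
    "json": json.loads,  # assumes the input is a JSON object
    "text": lambda raw: {"text": raw, "words": len(raw.split())},
}


def ingest(fmt: str, raw: str) -> dict:
    """Parse one input; keep unknown formats raw instead of rejecting them."""
    parser = PARSERS.get(fmt)
    if parser is None:
        return {"format": fmt, "raw": raw}  # a parser can be registered later
    return {"format": fmt, **parser(raw)}


if __name__ == "__main__":
    inputs = [
        ("csv", "1001,Alice,42.50"),
        ("json", '{"user": "bob", "likes": 3}'),
        ("text", "loving the new phone"),
        ("gps", "48.85,2.35"),  # no parser yet: stored as-is
    ]
    for fmt, raw in inputs:
        print(ingest(fmt, raw))
```

The design choice here is that an unrecognized format is kept rather than dropped, so data that arrives before anyone has written a parser for it is not lost.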

Why is Hadoop Needed for Big Data?

Now let us see why we need Hadoop for Big Data.

Hadoop starts where distributed relational databases end.

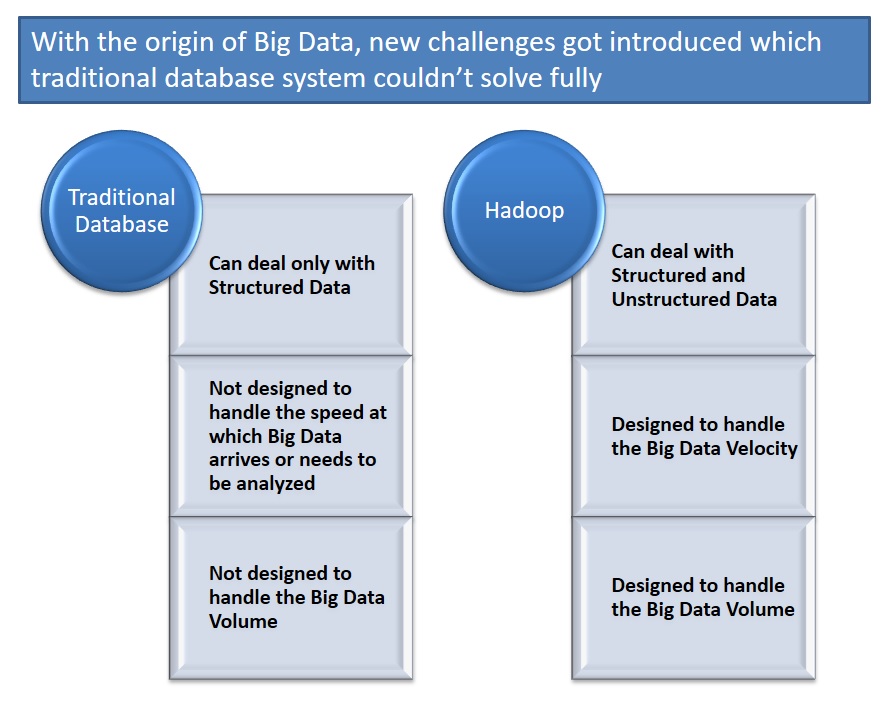

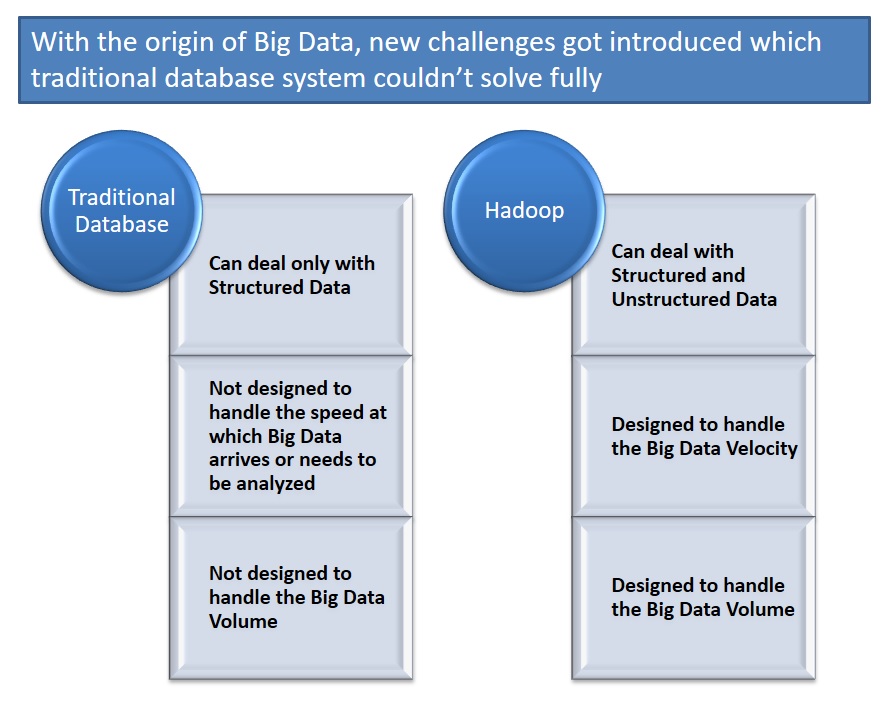

If relational databases can solve your problem, then use them. With the rise of Big Data, however, new challenges appeared that traditional database systems couldn’t fully solve.

Let us understand these challenges in more detail.

Challenges for traditional database management systems in handling Big Data

Challenge 1:

Big Data has variety: along with structured data, which relational databases handle very well, it also includes unstructured data (text, logs, audio, streams, video streams, sensor and GPS data). Traditional databases require the database schema to be created in ADVANCE to define what the data will look like, which makes it harder to handle big, unstructured data.
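The contrast between a schema created in advance and data whose structure is only decided later (often called schema-on-write versus schema-on-read) can be sketched with Python's built-in `sqlite3` module. This is an illustration with SQLite standing in for a traditional relational database, and the `orders` and `raw_events` tables and the sample records are hypothetical. The fixed table drops or rejects records that do not match its columns, while the raw table accepts every record and lets each query decide which fields it needs.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")

# Schema-on-write: the table layout is declared before any data arrives.
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer TEXT NOT NULL, amount REAL NOT NULL)"
)
# Schema-on-read: each record is stored as-is (here as JSON text).
conn.execute("CREATE TABLE raw_events (body TEXT NOT NULL)")


def write_fixed(record: dict) -> bool:
    """Insert into the predeclared table; return False if the record does not fit."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO orders (id, customer, amount) "
                "VALUES (:id, :customer, :amount)",
                record,  # keys not named in the statement are silently ignored
            )
        return True
    except sqlite3.Error:
        return False


def write_raw(record: dict) -> None:
    """Accept any record by storing its JSON text."""
    with conn:
        conn.execute("INSERT INTO raw_events (body) VALUES (?)", (json.dumps(record),))


def read_amounts() -> list[tuple[str, float]]:
    """Apply a 'schema' at query time: use only records that have the needed fields."""
    rows = []
    for (body,) in conn.execute("SELECT body FROM raw_events ORDER BY rowid"):
        rec = json.loads(body)
        if "customer" in rec and "amount" in rec:
            rows.append((rec["customer"], rec["amount"]))
    return rows


records = [
    {"id": 1, "customer": "Alice", "amount": 42.5},
    {"id": 2, "customer": "Bob", "amount": 10.0, "device": "mobile"},  # extra field
    {"id": 3, "tweet": "great service!"},  # does not fit the orders table at all
]
accepted = [write_fixed(r) for r in records]
for r in records:
    write_raw(r)

print("fixed schema accepted:", accepted)
print("read-time view:", read_amounts())
```

Note that the second record's `device` field is silently lost in `orders` but survives in `raw_events`, and the third record is rejected by the fixed table while still being available to any later query that knows what to do with it.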

Challenge 2:

Big Data is generated at very high speed. Traditional databases are not designed to handle the insert/update rates required to keep up with the speed at which Big Data arrives or needs to be analyzed.

Challenge 3:

The total volume of Big Data is measured in zettabytes and is growing at an exponential rate. When the data to be processed is on the order of terabytes or petabytes, it is more appropriate to split the processing into independent tasks that run in parallel and then collate their results into the output. The traditional database approach can’t handle this.
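The split, process in parallel, then collate idea can be sketched in Python with `concurrent.futures`. This is a single-machine illustration under stated assumptions, not Hadoop itself: the sensor readings are made-up values, the helper names are hypothetical, and threads stand in for the separate machines a cluster would use (for CPU-heavy work in CPython, `ProcessPoolExecutor` offers the same interface).

```python
from concurrent.futures import ThreadPoolExecutor
from typing import NamedTuple


class Partial(NamedTuple):
    """What one independent task reports back about its chunk."""
    count: int
    total: float
    maximum: float


def split(values: list[float], n_parts: int) -> list[list[float]]:
    """Cut the input into at most n_parts non-empty, independent chunks."""
    size = max(1, -(-len(values) // n_parts))  # ceiling division
    return [values[i:i + size] for i in range(0, len(values), size)]


def summarize(chunk: list[float]) -> Partial:
    """Independent task: needs only its own chunk, no data from other tasks."""
    return Partial(len(chunk), sum(chunk), max(chunk))


def parallel_summary(values: list[float], n_workers: int = 4) -> tuple[float, float]:
    """Run one task per chunk in parallel, then collate into (mean, maximum)."""
    if not values:
        raise ValueError("no readings to summarize")
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(summarize, split(values, n_workers)))
    # Collate: the global mean needs the raw counts and totals, because
    # averaging the per-chunk means would be wrong for unequal chunk sizes.
    count = sum(p.count for p in partials)
    total = sum(p.total for p in partials)
    return total / count, max(p.maximum for p in partials)


if __name__ == "__main__":
    readings = [12.0, 15.5, 9.0, 21.0, 18.5, 14.0, 11.0]  # made-up temperatures
    mean, peak = parallel_summary(readings, n_workers=3)
    print(f"mean={mean:.2f} max={peak}")
```

Each task returns a small partial result instead of its raw data, so only summaries need to travel to the collation step; Hadoop's MapReduce follows the same broad shape at cluster scale.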

To handle these challenges, a new framework came into existence: Hadoop.

Hadoop is a framework for handling vast volumes of structured and unstructured data in a distributed manner.
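As one concrete example of how work is expressed for Hadoop, its Hadoop Streaming utility lets the map and reduce steps be ordinary programs that read lines from standard input and write tab-separated key/value lines to standard output; Hadoop sorts the mapper output by key before it reaches the reducer. The word-count sketch below follows that convention, but the file name `wordcount.py` and the `map`/`reduce` command-line switch are assumptions for this example.

```python
import sys
from itertools import groupby
from typing import Iterable, Iterator


def mapper(lines: Iterable[str]) -> Iterator[str]:
    """Map step: emit one 'word<TAB>1' line per word in the input."""
    for line in lines:
        for word in line.lower().split():
            yield f"{word}\t1"


def reducer(sorted_lines: Iterable[str]) -> Iterator[str]:
    """Reduce step: sum the counts per word; input must be sorted by key."""
    pairs = (line.rstrip("\n").split("\t", 1) for line in sorted_lines)
    for word, group in groupby(pairs, key=lambda kv: kv[0]):
        yield f"{word}\t{sum(int(count) for _, count in group)}"


if __name__ == "__main__" and len(sys.argv) == 2:
    # Hypothetical invocation: "python wordcount.py map" or "python wordcount.py reduce".
    stage = {"map": mapper, "reduce": reducer}[sys.argv[1]]
    for out in stage(sys.stdin):
        print(out)
```

Locally, `sorted()` can stand in for the sort Hadoop performs between the two steps: `list(reducer(sorted(mapper(lines))))`. On a cluster, the two stages would instead be passed to the Hadoop Streaming jar through its `-mapper` and `-reducer` options; the jar's path depends on the installation.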

Hadoop is not a database

It’s very important to know that Hadoop is not a replacement for traditional databases.

Unlike an RDBMS, which you can query in real time, Hadoop processing takes time and doesn’t produce immediate results. Hadoop is a computing architecture, not a database.

What’s Next?

Check out the blog series My Learning Journey for Hadoop, or jump directly to the next article, Introduction to Hadoop in simple words.
