
- Your SAP on Azure – Part 17 – Connect SAP Vora wit...


BJarkowski


05-21-2019

9:45 AM

As soon as I finished my earlier post about integrating SAP Vora with Azure HDInsight, I started to wonder whether it would be possible to reuse the Spark Extension with Azure Databricks. It’s a new analytics platform based on Apache Spark that enables active collaboration between data scientists, technical teams and the business. It offers fully managed Spark clusters that can be created within seconds and automatically terminated when not in use. It can even scale up and down depending on the current utilization. Like all Azure services, Databricks natively connects to other cloud platform services such as Data Lake Storage or Azure Data Factory. A big differentiator compared to HDInsight is the tight integration with Azure AD to manage users and permissions, which increases the security of your landscape.

That’s enough of theory, but if you’d like to get more information why the Azure Databricks is the way to go, please visit official Microsoft Documentation .

What I would like to present today is how to build the Spark cluster using Azure Databricks, connect it to the SAP Vora engine and expose the table to SAP HANA. The scope is very similar to the post about HDInsight and I will even re-use parts of the code. If something is not clear enough, please check the previous episode as I explained there how to get all required connection information or how to create a service principal.

CREATE DATABRICKS CLUSTER

As always, the first thing we need to do is to define a resource in Azure Portal. When using the Azure Databricks you’re billed based on the used virtual machines and the processing capability per hour (DBU). At this step we just define the service – we will deploy the cluster later.

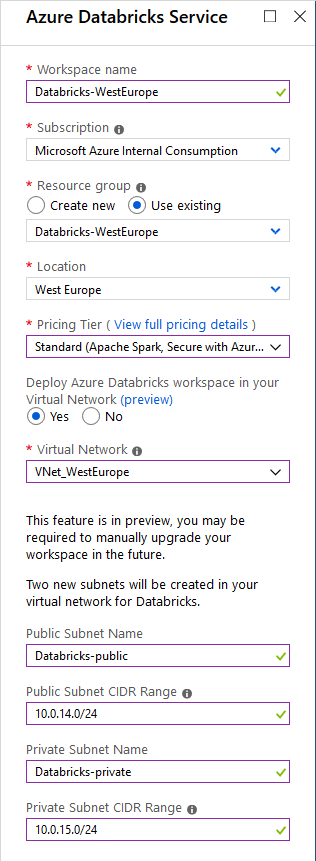

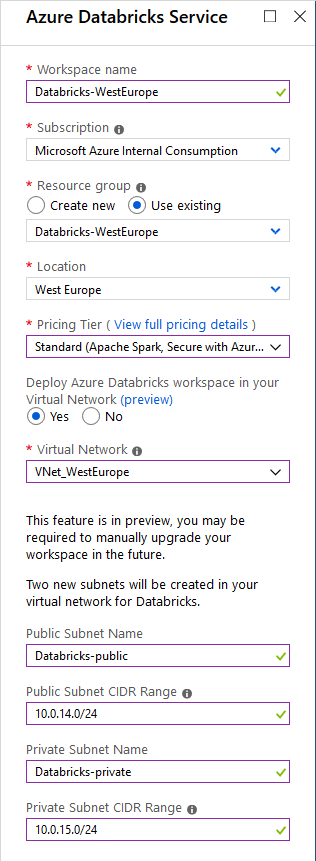

There are two pricing tiers available. I used the Standard one, but in case you need additional features like ODBC endpoints or indexing / caching support then choose the Premium tier. A detailed summary of what’s included in each tier can be found on Microsoft pricing details.

I want the Databricks cluster to be integrated with my existing VNet as it will simplify the connection to the SAP Vora on AKS. During the deployment, Azure will create two new subnets in selected VNet and assign the IP ranges according to our input. If you don’t want to put the cluster to an existing network, then the Azure can deploy a separate VNet just for the Databricks. Communication would still be possible but an additional effort is required to enable VNet peering or expose the SAP Vora service to the internet.

The service is created within seconds and you can access the Databricks blade. Click on the Launch Workspace to log in to the service.

Sometimes it can take a couple of minutes to access the workspace for the first time, so please be patient.

Now we can define a cluster. Choose Clusters in the menu and then click on the Create Cluster button. Here we can decide about basic settings like autoscaling limits or cluster termination after a certain amount of time (this way you don’t pay for the compute when the cluster is not used). When the cluster is stopped, all VMs are deleted but the configuration is retained by the Databricks service. Later on, if you start the cluster again all virtual machines will be redeployed using the same configuration.

I decided to go with the smallest virtual machine size and allowed the cluster termination after 60 minutes of inactivity.

The Create Cluster screen is also a place where you can enter Spark configuration for the SAP Vora connection. Expand the Advanced Options and enter the following settings:

If you’d like to connect through SSH to cluster nodes, you can also enter the public key in the SSH tab (I won’t use this feature in this blog).

It takes a couple of minutes to provision VMs. You can see them in the Azure Portal:

When the cluster is ready you can see it in the Databricks workspace:

To access SAP Vora we will require the Spark Extension – the same that was used in the previous blog. There are two ways of installing the library – you can do it directly on the cluster or set up automatic installation. I think the second approach is better if you plan to use the Vora connection continuously – whenever the Databricks cluster is provisioned the extension is installed without additional interaction.

Choose Workspace from the menu create a new library in the Shared section:

Select JAR as the library type and upload the spark-sap-datasources-spark2.jar file.

On the next screen mark the Install automatically checkbox and confirm by clicking Install. The service deploys the library to all cluster nodes.

When the installation completes, we can execute the first test to verify the connectivity between Databricks and the SAP Vora. Go back to the Workspace menu and create a new Notebook in the user area:

Enter and execute the following script:

When the cluster finished the processing, it returned information about the database and available schemas. It means the addon was installed successfully and we can establish the connection to the SAP Vora.

EXPOSE THE DATA TO SAP VORA AND HANA DATABASE

The Databricks offers its unique distributed filesystem called DBFS. All data stored in the cluster are persisted in the Azure Blob Storage, therefore, you won’t lose them even if you terminate the VMs. But you can also access the Azure Data Lake Storage from the Databricks by mounting a directory on the internal filesystem.

The Azure Data Lake Storage is using POSIX permissions, so we need to use the Service Principal to access the data. What I like in the Databricks configuration is that you can use Azure Key Vault service to store the service principal credentials instead of typing them directly to configuration. In my opinion, it’s a much safer approach and it also simplifies the setup, as there is only a single place of truth. Otherwise, if you'd like to change the assigned service principal you'd have to go through all scripts and manually replace the password.

Create a new Key Vault service and then open following webpage to create a Secret Scope inside the Databricks service:

https://<your_azure_databricks_url>#secrets/createScope

In my case it is:

https://westeurope.azuredatabricks.net#secrets/createScope

Type the desired Name that we will reference later in the script. You can find the DNS name and resource ID in the properties of the Key Vault. If you are using a Standard tier of the Databricks you need to select All Users in the Manage Permissions list, otherwise, you will encounter an issue during saving.

In the created Azure Key Vault service add a new entry under "Create a secret" and type the service principal key in the Value field.

The bellow script mounts the ADLS storage in the Databricks File System. Modify the values in brackets with your environment details.

Add new section with the script in the existing notebook and execute it:

We can check if that’s working correctly by listing the files inside the directory:

dbutils.fs.ls("/mnt/DemoDatasets")

The file is available so we can create SAP Vora table where we will upload the data:

Read the airports.dat file to a DataFrame. The inferSchema option automatically detects each field type and we won’t need any additional data conversion.

And finally, we can push the data from the DataFrame into SAP Vora table:

The only outstanding activity is to configure SAP Vora Remote Source in the HANA database…

…and display the content of virtual table:

My experience when working with Azure Databricks was excellent. I think the required configuration is much more straightforward compared to the HDInsight and I really enjoy the possibility to terminate the cluster when not active. As Databricks is the future way to work with Big Data I’m happy that existing SAP Vora extension can be used without any workarounds.

(source: Microsoft.com)

That’s enough theory, but if you’d like more information on why Azure Databricks is the way to go, please visit the official Microsoft documentation.

What I would like to present today is how to build the Spark cluster using Azure Databricks, connect it to the SAP Vora engine and expose the table to SAP HANA. The scope is very similar to the post about HDInsight and I will even re-use parts of the code. If something is not clear enough, please check the previous episode as I explained there how to get all required connection information or how to create a service principal.

CREATE DATABRICKS CLUSTER

As always, the first thing we need to do is to define a resource in Azure Portal. When using the Azure Databricks you’re billed based on the used virtual machines and the processing capability per hour (DBU). At this step we just define the service – we will deploy the cluster later.

There are two pricing tiers available. I used the Standard one, but if you need additional features like ODBC endpoints or indexing / caching support, choose the Premium tier. A detailed summary of what’s included in each tier can be found on the Microsoft pricing details page.

I want the Databricks cluster to be integrated with my existing VNet, as it simplifies the connection to SAP Vora on AKS. During the deployment, Azure creates two new subnets in the selected VNet and assigns the IP ranges according to our input. If you don’t want to place the cluster in an existing network, Azure can deploy a separate VNet just for Databricks. Communication would still be possible, but additional effort is required to enable VNet peering or to expose the SAP Vora service to the internet.

The service is created within seconds and you can access the Databricks blade. Click on the Launch Workspace to log in to the service.

Sometimes it can take a couple of minutes to access the workspace for the first time, so please be patient.

Now we can define a cluster. Choose Clusters in the menu and then click on the Create Cluster button. Here we can decide about basic settings like autoscaling limits or cluster termination after a certain amount of time (this way you don’t pay for the compute when the cluster is not used). When the cluster is stopped, all VMs are deleted but the configuration is retained by the Databricks service. Later on, if you start the cluster again all virtual machines will be redeployed using the same configuration.

I decided to go with the smallest virtual machine size and allowed the cluster termination after 60 minutes of inactivity.
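The same choices (autoscaling limits, auto-termination, Spark settings) can also be written down as a cluster definition instead of clicking through the UI. Below is a minimal sketch, assuming the field names of the Databricks Clusters REST API as I understand them (`cluster_name`, `spark_version`, `node_type_id`, `autoscale`, `autotermination_minutes`, `spark_conf`). The `ClusterSpec` class is a hypothetical helper, and the runtime version and VM size are placeholders, not values from this post.

```scala
// Hypothetical helper that renders a cluster definition as JSON text.
// Field names are an assumption based on the Databricks Clusters REST API.
final case class ClusterSpec(
    name: String,
    sparkVersion: String,        // placeholder: use a runtime offered in your workspace
    nodeType: String,            // placeholder: the VM size you selected
    minWorkers: Int,             // lower autoscaling limit
    maxWorkers: Int,             // upper autoscaling limit
    autoTerminationMinutes: Int, // idle time before the cluster is terminated
    sparkConf: Map[String, String]
) {
  require(minWorkers >= 0 && minWorkers <= maxWorkers,
    "autoscaling limits must satisfy 0 <= min <= max")

  // Quote a string for JSON, escaping backslashes and double quotes.
  private def quote(s: String): String =
    "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\""

  def toJson: String = {
    val conf = sparkConf.toSeq.sortBy(_._1)
      .map { case (k, v) => s"${quote(k)}: ${quote(v)}" }
      .mkString("{", ", ", "}")
    s"""{
       |  "cluster_name": ${quote(name)},
       |  "spark_version": ${quote(sparkVersion)},
       |  "node_type_id": ${quote(nodeType)},
       |  "autoscale": {"min_workers": $minWorkers, "max_workers": $maxWorkers},
       |  "autotermination_minutes": $autoTerminationMinutes,
       |  "spark_conf": $conf
       |}""".stripMargin
  }
}

// 60 minutes of inactivity, as chosen above; everything else is a placeholder.
val spec = ClusterSpec(
  name = "vora-demo",
  sparkVersion = "<runtime-version>",
  nodeType = "<vm-size>",
  minWorkers = 1,
  maxWorkers = 3,
  autoTerminationMinutes = 60,
  sparkConf = Map("spark.sap.vora.tls.insecure" -> "true")
)
println(spec.toJson)
```

Keeping the definition as text makes it easy to review the termination timeout and scaling limits before anyone starts paying for compute.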

The Create Cluster screen is also a place where you can enter Spark configuration for the SAP Vora connection. Expand the Advanced Options and enter the following settings:

spark.sap.vora.host = <vora-tx-coordinator hostname>

spark.sap.vora.port = <vora-tx-coordinator port>

spark.sap.vora.username = <SAP Data Hub username>

spark.sap.vora.password = <SAP Data Hub password>

spark.sap.vora.tenant = <SAP Data Hub tenant>

spark.sap.vora.tls.insecure = true

If you’d like to connect through SSH to cluster nodes, you can also enter the public key in the SSH tab (I won’t use this feature in this blog).
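A missing or empty Spark setting usually shows up only later as a failed connection, so it can help to check the SAP Vora settings listed above before running anything else. This is a minimal sketch in plain Scala; `requiredVoraKeys` and `missingVoraSettings` are hypothetical names of mine, and the list of keys is simply copied from the settings in this post.

```scala
// Connection settings from this post that must be present and non-empty.
val requiredVoraKeys: Seq[String] = Seq(
  "spark.sap.vora.host",
  "spark.sap.vora.port",
  "spark.sap.vora.username",
  "spark.sap.vora.password",
  "spark.sap.vora.tenant"
)

// Returns the required keys that are absent or blank, in the order listed above.
def missingVoraSettings(conf: Map[String, String]): Seq[String] =
  requiredVoraKeys.filterNot(key => conf.get(key).exists(_.trim.nonEmpty))

// Example with a partially filled configuration.
val sample = Map("spark.sap.vora.host" -> "vora-tx-coordinator", "spark.sap.vora.port" -> " ")
println(missingVoraSettings(sample).mkString(", "))
```

In a notebook attached to the cluster, I would expect `missingVoraSettings(spark.sparkContext.getConf.getAll.toMap)` to return an empty list once all settings are entered, assuming the cluster-level Spark configuration is visible through the Spark context.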

It takes a couple of minutes to provision VMs. You can see them in the Azure Portal:

When the cluster is ready you can see it in the Databricks workspace:

To access SAP Vora we will require the Spark Extension – the same that was used in the previous blog. There are two ways of installing the library – you can do it directly on the cluster or set up automatic installation. I think the second approach is better if you plan to use the Vora connection continuously – whenever the Databricks cluster is provisioned the extension is installed without additional interaction.

Choose Workspace from the menu and create a new library in the Shared section:

Select JAR as the library type and upload the spark-sap-datasources-spark2.jar file.

On the next screen mark the Install automatically checkbox and confirm by clicking Install. The service deploys the library to all cluster nodes.

When the installation completes, we can execute the first test to verify the connectivity between Databricks and the SAP Vora. Go back to the Workspace menu and create a new Notebook in the user area:

Enter and execute the following script:

import sap.spark.vora.PublicVoraClientUtils

import sap.spark.vora.config.VoraParameters

val client = PublicVoraClientUtils.createClient(spark)

client.query("select * from SYS.VORA_DATABASE").foreach(println)

client.query("select * from SYS.SCHEMAS").foreach(println)

When the cluster finished the processing, it returned information about the database and available schemas. It means the addon was installed successfully and we can establish the connection to the SAP Vora.
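If the connection fails, the notebook cell above stops with a stack trace. A small wrapper can turn each query into a labelled pass/fail line instead, which is easier to read when you test several things at once. This is a minimal sketch using only the Scala standard library; `runCheck` is a hypothetical helper, not part of the SAP Vora Spark Extension.

```scala
import scala.util.{Failure, Success, Try}

// Runs a block, prints a labelled result and reports whether it succeeded.
// Only non-fatal exceptions are caught (the behaviour of scala.util.Try).
def runCheck[A](label: String)(body: => A): Boolean =
  Try(body) match {
    case Success(_) =>
      println(s"[ok] $label")
      true
    case Failure(e) =>
      println(s"[failed] $label: ${e.getMessage}")
      false
  }

// Stand-alone demonstration with a succeeding and a failing block.
val okResult = runCheck("simple arithmetic")(1 + 1)
val failedResult = runCheck("forced failure")(sys.error("connection refused"))
```

In the notebook the same helper could wrap the calls shown above, for example `runCheck("list Vora schemas") { client.query("select * from SYS.SCHEMAS").foreach(println) }`.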

EXPOSE THE DATA TO SAP VORA AND HANA DATABASE

Databricks offers its own distributed filesystem called DBFS. All data stored in it is persisted in Azure Blob Storage, so you won’t lose it even if you terminate the VMs. You can also access Azure Data Lake Storage from Databricks by mounting a directory on the internal filesystem.

Azure Data Lake Storage uses POSIX permissions, so we need a Service Principal to access the data. What I like about the Databricks configuration is that you can use the Azure Key Vault service to store the service principal credentials instead of typing them directly into the configuration. In my opinion, it’s a much safer approach and it also simplifies the setup, as there is a single source of truth. Otherwise, if you'd like to change the assigned service principal, you'd have to go through all scripts and manually replace the password.

Create a new Key Vault service and then open the following webpage to create a Secret Scope inside the Databricks service:

https://<your_azure_databricks_url>#secrets/createScope

In my case it is:

https://westeurope.azuredatabricks.net#secrets/createScope

Type the desired Name that we will reference later in the script. You can find the DNS name and resource ID in the properties of the Key Vault. If you are using a Standard tier of the Databricks you need to select All Users in the Manage Permissions list, otherwise, you will encounter an issue during saving.

In the created Azure Key Vault service add a new entry under "Create a secret" and type the service principal key in the Value field.

The script below mounts the ADLS storage in the Databricks File System. Replace the values in angle brackets with your environment details.

val configs = Map(

"dfs.adls.oauth2.access.token.provider.type" -> "ClientCredential",

"dfs.adls.oauth2.client.id" -> "<your-service-client-id>",

"dfs.adls.oauth2.credential" -> dbutils.secrets.get(scope = "<scope-name>", key = "<key-name>"),

"dfs.adls.oauth2.refresh.url" -> "https://login.microsoftonline.com/<your-directory-id>/oauth2/token")

// Optionally, you can add <your-directory-name> to the source URI of your mount point.

dbutils.fs.mount(

source = "adl://<your-data-lake-store-account-name>.azuredatalakestore.net/<your-directory-name>",

mountPoint = "/mnt/<mount-name>",

extraConfigs = configs)

(source: docs.azuredatabricks.net)
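Running the mount script a second time fails if the mount point already exists, so it can be useful to decide first whether to mount, skip, or remount. Here is a minimal sketch of that decision in plain Scala. `Mount`, `MountAction` and `decideMount` are hypothetical names of mine, and the comparison is a simple string match that does not normalise trailing slashes.

```scala
// Minimal view of an existing mount: where it is mounted and what it points to.
final case class Mount(mountPoint: String, source: String)

sealed trait MountAction
case object DoMount extends MountAction   // nothing mounted at this path yet
case object Skip extends MountAction      // already mounted from the same source
case object Remount extends MountAction   // mounted, but from a different source

// Decides what to do for the requested mount point and source.
def decideMount(existing: Seq[Mount], mountPoint: String, source: String): MountAction =
  existing.find(_.mountPoint == mountPoint) match {
    case None                          => DoMount
    case Some(m) if m.source == source => Skip
    case Some(_)                       => Remount
  }

// Stand-alone demonstration with made-up values.
val current = Seq(Mount("/mnt/DemoDatasets", "adl://demo.azuredatalakestore.net/datasets"))
println(decideMount(current, "/mnt/DemoDatasets", "adl://demo.azuredatalakestore.net/datasets"))
println(decideMount(current, "/mnt/Other", "adl://demo.azuredatalakestore.net/other"))
```

In a notebook, the list of existing mounts could come from `dbutils.fs.mounts().map(m => Mount(m.mountPoint, m.source))`, and a `Remount` result would mean calling `dbutils.fs.unmount` before mounting again. I am assuming those `dbutils` calls and field names are available in your runtime.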

Add a new cell with the script to the existing notebook and execute it:

We can check if that’s working correctly by listing the files inside the directory:

dbutils.fs.ls("/mnt/DemoDatasets")

The file is available, so we can create the SAP Vora table into which we will upload the data:

import sap.spark.vora.PublicVoraClientUtils

import sap.spark.vora.config.VoraParameters

val client = PublicVoraClientUtils.createClient(spark)

client.execute("""CREATE TABLE "default"."airports"

("Airport_ID" INTEGER,

"Name" VARCHAR(70),

"City" VARCHAR(40),

"Country" VARCHAR(40),

"IATA" VARCHAR(3),

"ICAO" VARCHAR(4),

"Latitude" DOUBLE,

"Longitude" DOUBLE,

"Altitude" INTEGER,

"Timezone" VARCHAR(5),

"DST" VARCHAR(5),

"Tz" VARCHAR(40),

"Type" VARCHAR(10),

"Source" VARCHAR(15))

TYPE STREAMING STORE ON DISK""")
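The column list in the statement above is needed in more than one place: in the table definition and, potentially, when naming the DataFrame columns. Below is a minimal sketch that keeps the list in one Scala value and generates the DDL from it. `airportColumns`, `quoted` and `createTableDdl` are hypothetical helpers; the column names, types and the `TYPE STREAMING STORE ON DISK` clause are copied from the statement above.

```scala
// Column names and Vora types, copied from the CREATE TABLE statement above.
val airportColumns: Seq[(String, String)] = Seq(
  "Airport_ID" -> "INTEGER",
  "Name"       -> "VARCHAR(70)",
  "City"       -> "VARCHAR(40)",
  "Country"    -> "VARCHAR(40)",
  "IATA"       -> "VARCHAR(3)",
  "ICAO"       -> "VARCHAR(4)",
  "Latitude"   -> "DOUBLE",
  "Longitude"  -> "DOUBLE",
  "Altitude"   -> "INTEGER",
  "Timezone"   -> "VARCHAR(5)",
  "DST"        -> "VARCHAR(5)",
  "Tz"         -> "VARCHAR(40)",
  "Type"       -> "VARCHAR(10)",
  "Source"     -> "VARCHAR(15)"
)

// Wraps an identifier in double quotes, as in the original statement.
def quoted(id: String): String = "\"" + id + "\""

// Builds the CREATE TABLE statement for a streaming table stored on disk.
def createTableDdl(schema: String, table: String, columns: Seq[(String, String)]): String = {
  val cols = columns
    .map { case (name, tpe) => s"${quoted(name)} $tpe" }
    .mkString("(", ",\n", ")")
  Seq(
    s"CREATE TABLE ${quoted(schema)}.${quoted(table)}",
    cols,
    "TYPE STREAMING STORE ON DISK"
  ).mkString("\n")
}

val ddl = createTableDdl("default", "airports", airportColumns)
println(ddl)
```

The generated text could be passed to `client.execute(ddl)`. If the DataFrame columns need to carry the same names as the table, `df.toDF(airportColumns.map(_._1): _*)` would rename them in order; whether the extension matches columns by name or by position is something I have not verified.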

Read the airports.dat file to a DataFrame. The inferSchema option automatically detects each field type and we won’t need any additional data conversion.

val df = spark.read.option("inferSchema", true).csv("dbfs:/mnt/DemoDatasets/airports.dat")

df.show()

And finally, we can push the data from the DataFrame into the SAP Vora table:

import org.apache.spark.sql.SaveMode

df.write.

format("sap.spark.vora").

option("table", "airports").

option("namespace", "default").

mode(SaveMode.Append).

save()

The only outstanding activity is to configure SAP Vora Remote Source in the HANA database…

…and display the content of virtual table:

My experience working with Azure Databricks was excellent. I think the required configuration is much more straightforward than with HDInsight, and I really like being able to terminate the cluster when it is not active. As Databricks is the future way to work with Big Data, I’m happy that the existing SAP Vora extension can be used without any workarounds.

- SAP Managed Tags:

- SAP Data Intelligence,

- SAP HANA,

- SAP Vora,

- Big Data
