- SAP Community

- Products and Technology

- Technology

- Technology Blogs by SAP

- Your location history processed with SAP HANA - pa...

Technology Blogs by SAP

Learn how to extend and personalize SAP applications. Follow the SAP technology blog for insights into SAP BTP, ABAP, SAP Analytics Cloud, SAP HANA, and more.

Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

Developer Advocate

Options

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

02-19-2019

9:34 PM

It all started with this question from gregorw:

Well, in fact, I haven't. But it turned out to be a good exercise to use two of SAP HANA's multi-model processing capabilities: geospatial and JSON. As usually, I am using free SAP HANA, express edition (HXE) here.

I have a private Google account, so I requested Location History and My Places (places I rated on Google Maps) data:

Once received requested data I downloaded it to my HXE's

Fields I am interested in are:

We can parse data before loading it in the database. But loading it in the original format will be a good example of processing JSON with SQL in SAP HANA.

It is not a CSV file, but I pretended it is - with one record and with one field. That's why I picked delimiters that are not present in the file.

Next, I want to convert JSON into records of a

I used JSON_TABLE() function. Please note, that I am not using HANA's Document store for this exercise, but only relational engine and its SQL.

I can see data in the table now. My location data is available from 2013 till yesterday.

Let's see. Last Sunday, February 17th, I went on my Instagram hunt between 10:27 and 11:42 local time (

And the result in WKB format is:

This can be easily visualised using GeoJson.io website:

Location History stores the location roughly every 3 minutes, so the line is not smooth on the visualization, but reflects the path I did if comparing to the map generated on Endomondo data for the same walk:

In the second part of this blog post I do some spatial clustering on this data set.

‘Till next #GeospatialTuesday then,

-Vitaliy, aka @Sygyzmundovych

".@Sygyzmundovych have you ever tried to import the Google Location History from https://takeout.google.com/settings/takeout … into #HANA Geospacial?"

Well, in fact, I haven't. But it turned out to be a good exercise to use two of SAP HANA's multi-model processing capabilities: geospatial and JSON. As usually, I am using free SAP HANA, express edition (HXE) here.

Requesting and uploading data

I have a private Google account, so I requested Location History and My Places (places I rated on Google Maps) data:

- My Places are available as GeoJSON data, so it can be a topic for another post.

- Location History is available either as a plain JSON or KML geospatial format. I am going to request it in JSON format.

Once received requested data I downloaded it to my HXE's

/usr/sap/HXE/HDB90/work/ folder and uncompressed. Let's look at the data structure.hxeadm@hxehost:/usr/sap/HXE/HDB90/work/Takeout/Location History> head -20 Location\ History.json

{

"locations" : [ {

"timestampMs" : "1550519193999",

"latitudeE7" : 510754802,

"longitudeE7" : 169642113,

"accuracy" : 8,

"velocity" : 0,

"altitude" : 121,

"verticalAccuracy" : 3

}, {

"timestampMs" : "1550519070999",

"latitudeE7" : 510801796,

"longitudeE7" : 169665475,

"accuracy" : 8,

"velocity" : 9,

"heading" : 215,

"altitude" : 123,

"verticalAccuracy" : 3

}, {

...Fields I am interested in are:

timestampMsis a UTC timestamp in ms using UNIX Epoch time representation, i.e."1550519193999"isFebruary 18, 2019 7:46:33.999 PM GMT,latitudeE7is a latitude multiplied by 10,000,000 (or1e7) to represent it as an integer number, i.e."510754802"is 51,0754802 value in a GPS position,longitudeE7is a longitude multiplied by 10,000,000.

Loading data into SAP HANA

We can parse data before loading it in the database. But loading it in the original format will be a good example of processing JSON with SQL in SAP HANA.

CREATE SCHEMA "GOOGLE";

CREATE COLUMN TABLE "GOOGLE"."DUMP"(

"DATA" CLOB NOT NULL

);

IMPORT FROM CSV FILE '/usr/sap/HXE/HDB90/work/Takeout/Location History/Location History.json' INTO "GOOGLE"."DUMP"

WITH RECORD DELIMITED BY '\r'

FIELD DELIMITED BY '\t';It is not a CSV file, but I pretended it is - with one record and with one field. That's why I picked delimiters that are not present in the file.

Convert JSON to relational data using SQL

Next, I want to convert JSON into records of a

LOC_HISTORY table with a timestamp and a geographical position represented by ST_POINT data type.CREATE TABLE "GOOGLE"."LOC_HISTORY" AS

(SELECT

TO_SECONDDATE(ADD_SECONDS('1970-01-01 00:00:00', "TSMS" / 1000)) as "TIMESTAMP",

new ST_Point("LONE7"/1e7, "LATE7"/1e7) as "POINT"

FROM JSON_TABLE("GOOGLE"."DUMP"."DATA", '$.locations[*]'

COLUMNS

(

RN FOR ORDINALITY,

TSMS BIGINT PATH '$.timestampMs',

LATE7 BIGINT PATH '$.latitudeE7',

LONE7 BIGINT PATH '$.longitudeE7',

ALT INT PATH '$.altitude'

)

)

);I used JSON_TABLE() function. Please note, that I am not using HANA's Document store for this exercise, but only relational engine and its SQL.

I can see data in the table now. My location data is available from 2013 till yesterday.

Exercise 1: Reconstructing the walk

Let's see. Last Sunday, February 17th, I went on my Instagram hunt between 10:27 and 11:42 local time (

CET time zone), while my children were attending UniKids classes. I just need to rework a procedure from my earlier blog Calculating a path from IoT GPS data stored as ST_Point.CALL "GOOGLE"."PATH_FROM_POINTS"(

TS_START => LOCALTOUTC(To_TimeStamp('2019-02-17 10:27:00'), 'CET'),

TS_END => LOCALTOUTC(To_TimeStamp('2019-02-17 11:42:00'), 'CET'),

PATH => ?

);And the result in WKB format is:

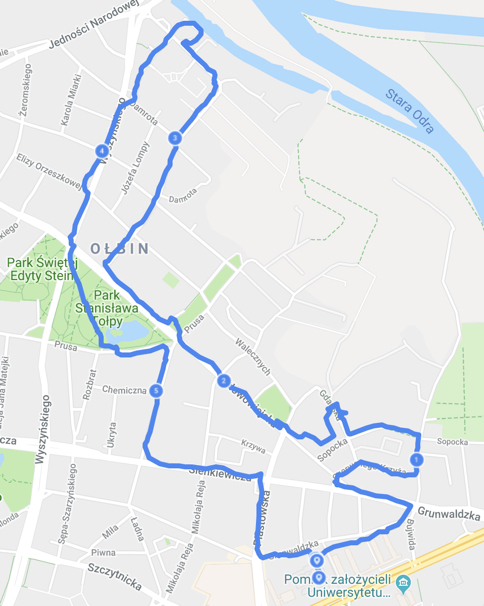

SRID=4326;LINESTRING (17.06334400177002 51.11322212219238,17.064226150512695 51.1140832901001,17.066997528076172 51.115102767944336,17.063793182373047 51.11575794219971,17.066638946533203 51.11593723297119,17.06586265563965 51.11723232269287,17.062926292419434 51.11690616607666,17.05934238433838 51.1182861328125,17.057364463806152 51.1193790435791,17.056079864501953 51.120232582092285,17.054750442504883 51.12098693847656,17.05545997619629 51.12276363372803,17.056791305541992 51.12459754943848,17.05857276916504 51.12675952911377,17.05574607849121 51.12709903717041,17.054173469543457 51.12527084350586,17.052987098693848 51.12366008758545,17.052385330200195 51.12179660797119,17.053675651550293 51.119704246520996,17.056140899658203 51.11831569671631,17.057037353515625 51.11619281768799,17.060548782348633 51.11597728729248,17.06048011779785 51.11384963989258,17.06330394744873 51.11301517486572)This can be easily visualised using GeoJson.io website:

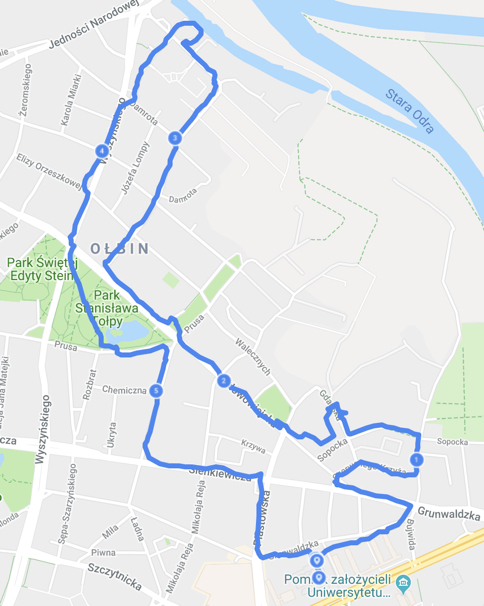

Location History stores the location roughly every 3 minutes, so the line is not smooth on the visualization, but reflects the path I did if comparing to the map generated on Endomondo data for the same walk:

In the second part of this blog post I do some spatial clustering on this data set.

‘Till next #GeospatialTuesday then,

-Vitaliy, aka @Sygyzmundovych

- SAP Managed Tags:

- SQL,

- SAP HANA,

- SAP HANA, express edition,

- SAP HANA multi-model processing,

- SAP HANA Spatial,

- Big Data

Labels:

4 Comments

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.

Labels in this area

-

ABAP CDS Views - CDC (Change Data Capture)

2 -

AI

1 -

Analyze Workload Data

1 -

BTP

1 -

Business and IT Integration

2 -

Business application stu

1 -

Business Technology Platform

1 -

Business Trends

1,661 -

Business Trends

88 -

CAP

1 -

cf

1 -

Cloud Foundry

1 -

Confluent

1 -

Customer COE Basics and Fundamentals

1 -

Customer COE Latest and Greatest

3 -

Customer Data Browser app

1 -

Data Analysis Tool

1 -

data migration

1 -

data transfer

1 -

Datasphere

2 -

Event Information

1,400 -

Event Information

65 -

Expert

1 -

Expert Insights

178 -

Expert Insights

280 -

General

1 -

Google cloud

1 -

Google Next'24

1 -

Kafka

1 -

Life at SAP

784 -

Life at SAP

11 -

Migrate your Data App

1 -

MTA

1 -

Network Performance Analysis

1 -

NodeJS

1 -

PDF

1 -

POC

1 -

Product Updates

4,577 -

Product Updates

330 -

Replication Flow

1 -

RisewithSAP

1 -

SAP BTP

1 -

SAP BTP Cloud Foundry

1 -

SAP Cloud ALM

1 -

SAP Cloud Application Programming Model

1 -

SAP Datasphere

2 -

SAP S4HANA Cloud

1 -

SAP S4HANA Migration Cockpit

1 -

Technology Updates

6,886 -

Technology Updates

408 -

Workload Fluctuations

1

Related Content

- SAP Document and Reporting Compliance - 'Colombia' - Contingency Process in Technology Blogs by SAP

- Comprehensive Guideline to SAP VMS in Technology Blogs by Members

- Trustable AI thanks to - SAP AI Core & SAP HANA Cloud & SAP S/4HANA & Enterprise Blockchain 🚀 in Technology Blogs by Members

- SAP BeLux: stretch the limits of Dox premium invoices in Technology Blogs by SAP

- Part 1:- SAP Basis OS support on SUSE Linux for beginners in Technology Blogs by Members

Top kudoed authors

| User | Count |

|---|---|

| 13 | |

| 10 | |

| 10 | |

| 9 | |

| 7 | |

| 6 | |

| 5 | |

| 5 | |

| 5 | |

| 4 |