
12-06-2018

9:44 AM

In my previous blog, Step by step to run your UI5 application on Kubernetes - part 1, I described in detail how to run an SAP UI5 application within Docker, and uploaded a Docker image containing my test UI5 application to Docker Hub.

In this blog, let's try something really fancy: running this Docker image within Kubernetes. We will cover:

- Two important concepts in Kubernetes: pod and deployment

- Some first hand experience about high availability and easy-to-scale feature provided by Kubernetes

- What does Rolling Update mean?

What is the benefit of running our application on Kubernetes? Based on my limited experience so far, my understanding is that we application developers can make our application highly available, easy to scale and fault-tolerant without spending time learning how Kubernetes works under the hood.

That is to say, it is still the responsibility of the IT expert, Kubernetes admin or infrastructure provider to install, set up, configure and operate Kubernetes. We application developers, as Kubernetes consumers, just need the simple command line tool kubectl to deploy our application to Kubernetes, and that's all.

Let's now dive into this completely new world!

I still use my test UI5 application "Jerry's Service Order list" for the demo.

The first problem to deal with is finding an available Kubernetes environment. If you are confident in your system administration knowledge and have enough hardware, you can set up a Kubernetes cluster on your own; otherwise you can look for a Kubernetes-as-a-Service solution, just as Jerry did.

In one of my articles written for Chinese partners, The Newton on the giant's shoulder? Kyma on top of Kubernetes, I mentioned Gardener, an open source tool sponsored by SAP that creates Kubernetes clusters in a Kubernetes-as-a-Service way on Google Cloud Platform, Microsoft Azure and Amazon AWS.

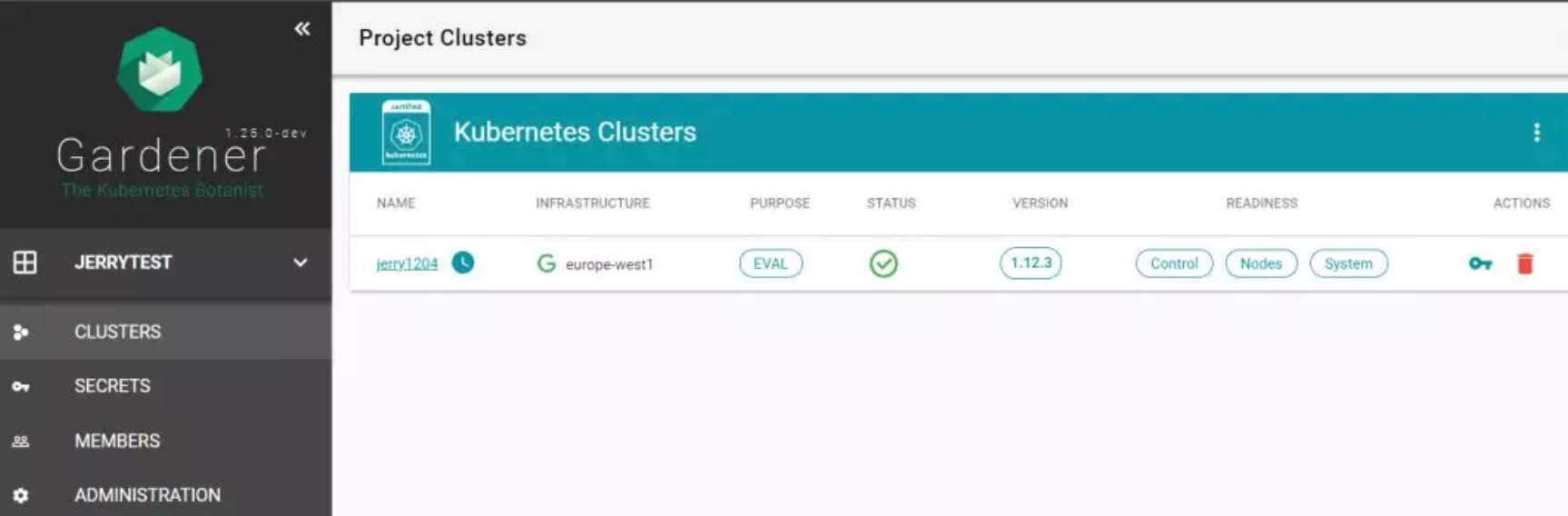

I have created a trial Kubernetes cluster named "jerry1204" via the SAP internal Gardener instance, based on Google Cloud Platform:

On December 3rd, 2018, the latest version, 1.13, was released. The screenshot below shows that the Kubernetes cluster created by Gardener runs version 1.12.3.

Click the Dashboard hyperlink in the Access tab of the above screenshot to perform various operations on this Kubernetes cluster via the dashboard. Of course you can use the command line as well, which will be introduced soon.

Since this is just a trial Kubernetes cluster, only one worker node is available.

The detailed information about this single worker node shown in the dashboard can also be retrieved with the command kubectl get node -o wide:

Two important concepts in Kubernetes: pod and deployment

Now we will use the command line tool kubectl to consume the Docker image i042416/ui5-nginx uploaded to Docker Hub in the previous blog, Step by step to run your UI5 application on Kubernetes – part 1.

kubectl run jerry-ui5 --image=i042416/ui5-nginx

Lots of Kubernetes artifacts are generated behind the scenes once this command is executed.

First of all, where does the magic of Kubernetes come from that enables high availability, fault tolerance and elastic scalability for applications running on top of it? The pod plays an important part.

From the Kubernetes architecture diagram above, we can see that a Kubernetes cluster consists of nodes, each node contains multiple pods, and each pod in turn encapsulates one or more containers.

Kubernetes Pods are the smallest deployable computing units in the open source Kubernetes container scheduling and orchestration environment. A Pod is a grouping of one or more containers that operate together.

Back to the command we executed: kubectl run jerry-ui5 --image=i042416/ui5-nginx

Once it is done, execute another command:

kubectl get pod

A new pod is found:

Use the describe command to inspect this pod:

kubectl describe pod jerry-ui5-6ffd46bb95-6bgpg

The value "docker://XXX" of the field "Container ID" below indicates that this pod contains a Docker container. Of course Docker is not the only container technology supported by Kubernetes; for example, CoreOS Rocket (rkt) is supported as well.

The data displayed in the Events area gives us the typical lifecycle event flow of a pod:

Scheduled->Pulling->Pulled->Created->Started

The "From" field of each event also conveys very important information:

Scheduled: default-scheduler.

The Kubernetes scheduler is in charge of scheduling pods onto nodes. Basically it works like this:

- You create a pod

- The scheduler notices that the new pod you created doesn’t have a node assigned to it

- The scheduler assigns a node to the pod
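The three steps above can be sketched in a few lines of Python. This is only an illustration of the idea, not how the real scheduler is implemented: the `Pod` class, the `schedule` function, the pod names and the node name `worker-1` are all hypothetical, and the real scheduler filters and scores nodes rather than picking them round-robin.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Pod:
    name: str
    node_name: Optional[str] = None  # None means "no node assigned yet"


def schedule(pods: List[Pod], nodes: List[str]) -> List[str]:
    """Assign a node to every pod that has none; return the names of the pods bound."""
    if not nodes:
        return []  # nothing to schedule onto; pods stay pending
    bound = []
    for pod in pods:
        if pod.node_name is None:  # the scheduler "notices" an unassigned pod
            # Hypothetical placement policy: simple round-robin over the nodes.
            pod.node_name = nodes[len(bound) % len(nodes)]
            bound.append(pod.name)
    return bound


# One freshly created pod and one pod that already runs on a node.
pods = [Pod("jerry-ui5-a"), Pod("jerry-ui5-b", node_name="worker-1")]
newly_bound = schedule(pods, ["worker-1"])
```

Only the pod without a node is touched; the pod that was already placed is left alone, which is why the scheduler can run repeatedly without disturbing running workloads.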

In my screenshot above, in the message "Successfully assigned XXX to shoot--jerrytest-jerry1204-worker-yamer-z1-XXX", the string with the prefix shoot--jerrytest is the name of the worker node in my trial cluster to which the pod is assigned and on which it runs.

The "From" fields of all the remaining events are "kubelet,shoot--jerrytest-jerry1204-worker-yamer-z1-XXX".

What is kubelet?

It is not the scheduler's responsibility to actually run the pod; that is the job of the kubelet, the primary "node agent" responsible for running pods and ensuring that their runtime status meets the user's expectation.

In the Kubernetes dashboard, we can see this running pod as well:

Besides the pod, another important concept in Kubernetes is the deployment.

Execute the command kubectl get deploy, and we can again find that a new deployment has been created by kubectl run.

A Kubernetes deployment is an abstraction layer on top of pods, whose main purpose is to keep the resources declared in its configuration in their desired state.

In my previous article we learned how to start a Docker image with the command docker run. In the Kubernetes world, however, we never deal with a Docker image directly; instead we expose the application running inside the pod via a Kubernetes service.

Executing kubectl get svc in my trial cluster returns no application-specific service instance; only one instance of the Kubernetes system service is listed.

We use the command kubectl expose to create a new service based on the deployment instance just created by kubectl run:

kubectl expose deployment jerry-ui5 --type=LoadBalancer --port=80 --target-port=80

Once expose has been executed, the get svc command returns a service instance with the same name as the deployment, together with the IP exposed to the external world.

Now we can use the URL 35.205.230.209/webapp to access the UI5 application running in the pod.
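How does the service know which pods to send traffic to? It selects them by label. The sketch below illustrates that matching rule only. It assumes the pods carry a label `run=jerry-ui5` (the exact label set on your pods may differ, so check with kubectl get pod --show-labels), and the second pod `other-app-1` is invented for contrast.

```python
from typing import Dict


def matches(selector: Dict[str, str], labels: Dict[str, str]) -> bool:
    """A pod matches when every key/value pair of the selector appears in its labels."""
    return all(labels.get(key) == value for key, value in selector.items())


# Pod name -> labels. The first name is the pod seen earlier; its label is an assumption.
pods = {
    "jerry-ui5-6ffd46bb95-6bgpg": {"run": "jerry-ui5"},
    "other-app-1": {"run": "other-app"},  # hypothetical unrelated pod
}

selector = {"run": "jerry-ui5"}  # assumed selector of the jerry-ui5 service

# The pods that would receive traffic sent to the service.
endpoints = sorted(name for name, labels in pods.items() if matches(selector, labels))
```

Because the selection is by label and not by pod name, pods that are added later by scaling are picked up by the same service automatically.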

Some first hand experience about high availability and easy-to-scale feature provided by Kubernetes

So far my UI5 application is running in only a single pod, which resides on the single worker node of my trial cluster. There is no significant difference compared with running it in Docker.

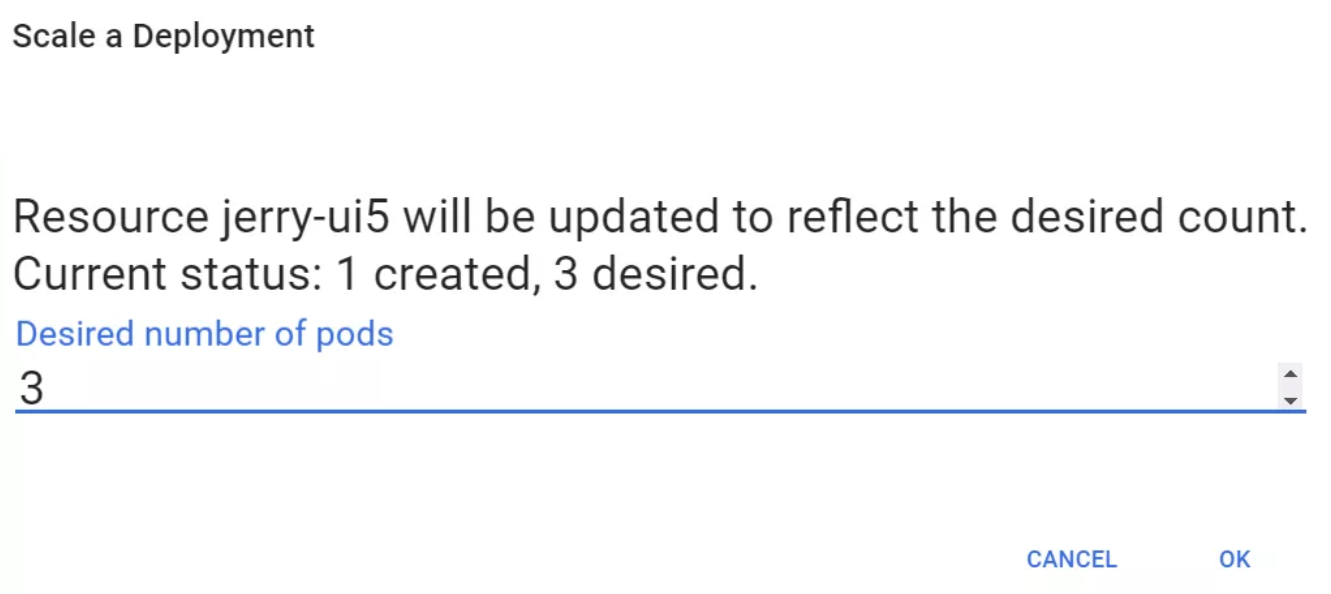

Now let's scale this UI5 application! Open the deployment instance and choose the Scale menu in the dashboard:

Set the target number of pods to 3, which means the deployment will try to ensure that the number of running pods equals 3 after this scale command is executed.

Of course we can also scale the application via command line, which will be introduced soon.

Now execute kubectl get pod, and we find that two new pods have been created, with Age equal to 12s.

Use kubectl describe to review the deployment details; the runtime state of the pods it controls can be found in the Replicas field:

3 desired | 3 updated | 3 total | 3 available | 0 unavailable

Now I delete one pod on purpose using the command kubectl delete, and find that a new pod is created automatically right away, so the total number of running pods still equals 3.
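The behavior observed here, scaling to 3 and then replacing a deleted pod, comes from a control loop that keeps comparing the desired state with the actual state. A minimal sketch of that idea follows; the `reconcile` function and the generated pod names are hypothetical and much simpler than the real controller.

```python
import itertools
from typing import Iterator, List


def reconcile(running: List[str], desired: int, new_names: Iterator[str]) -> List[str]:
    """Return a pod list whose length equals the desired replica count."""
    running = list(running)  # work on a copy, leave the input untouched
    while len(running) < desired:
        running.append(next(new_names))  # too few pods: create one
    while len(running) > desired:
        running.pop()  # too many pods: remove one
    return running


# Hypothetical name generator: jerry-ui5-1, jerry-ui5-2, ...
names = (f"jerry-ui5-{i}" for i in itertools.count(1))

pods = reconcile([], 3, names)    # scale to 3 replicas
pods.remove("jerry-ui5-2")        # delete one pod on purpose
pods = reconcile(pods, 3, names)  # the loop notices the gap and creates a replacement
```

Note that the replacement is a brand new pod with a new name; the deleted pod itself is never brought back.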

What does Rolling Update mean?

Rolling updates allow a Deployment's update to take place with zero downtime by incrementally replacing Pod instances with new ones. The new Pods will be scheduled on Nodes with available resources.
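To get a feeling for "incrementally", here is a small simulation of a rolling update with, for example, 10 replicas. It uses the default limits of the RollingUpdate strategy, maxSurge 25% (rounded up) and maxUnavailable 25% (rounded down). It is a simplified sketch: the `rolling_update` function is hypothetical, and it assumes every new pod becomes ready immediately, which is not the case in a real cluster.

```python
import math
from typing import List, Tuple


def rolling_update(replicas: int, max_surge_pct: int = 25,
                   max_unavailable_pct: int = 25) -> List[Tuple[int, int]]:
    """Return the (old_pods, new_pods) counts after each round of the update."""
    max_surge = math.ceil(replicas * max_surge_pct / 100)
    max_unavailable = math.floor(replicas * max_unavailable_pct / 100)
    if replicas > 0 and max_surge == 0 and max_unavailable == 0:
        raise ValueError("maxSurge and maxUnavailable cannot both be zero")

    old, new = replicas, 0
    steps = [(old, new)]
    while old > 0 or new < replicas:
        # Start new pods, but never exceed replicas + max_surge pods in total.
        new += min(replicas - new, replicas + max_surge - (old + new))
        # Stop old pods, but keep at least replicas - max_unavailable pods running.
        old -= min(old, (old + new) - (replicas - max_unavailable))
        steps.append((old, new))
    return steps


steps = rolling_update(10)
for old_pods, new_pods in steps:
    print(f"old={old_pods:2d} new={new_pods:2d}")
```

At every step the total stays between 8 and 13 pods, so the application keeps serving requests while the old version is phased out.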

The screenshot below is the output of kubectl describe deployment; the value of the field "StrategyType" indicates that the default update strategy of a deployment created by kubectl run is Rolling Update.

I designed a simple update scenario: my UI5 application runs on a worker node with 10 pods online based on version 1.0, and is then updated to version 2.0 via Rolling Update.

Because the image I uploaded to Docker Hub in the previous blog was tagged with the default value "latest", I need to generate two separate Docker images with the tags v1.0 and v2.0.

The command below pushes a Docker image with tag v1.0 to Docker Hub:

Append the string "(v2.0)" to the title of the detail page view to indicate that this is version 2.0:

Push this version 2.0 image to Docker Hub as well using the command below:

Both images, v1.0 and v2.0, are now ready in Docker Hub.

First run the UI5 application from the Docker image with tag v1.0:

kubectl run jerry-ui5 --image=i042416/ui5-nginx:v1.0

Scale the running pods from 1 to 10 using the following command:

kubectl scale --replicas=10 deployment/jerry-ui5

It is easy to verify with the get pod command that 10 pods are now running.

Trigger Rolling Update by changing the deployment image from v1.0 to v2.0:

kubectl set image deployment/jerry-ui5 jerry-ui5=i042416/ui5-nginx:v2.0

Check the real-time progress of the Rolling Update via the command:

kubectl rollout status deployment/jerry-ui5

The above screenshot shows the output of this command, which indicates how many pods of the old version are awaiting termination and how many new pods with version 2.0 are available at a given time.

Once the Rolling Update is finished, open a pod and verify that its Docker image is now based on v2.0:

Finally, double check the detail page title in the browser and see (v2.0) as expected: the Rolling Update was done successfully.

So far what I have introduced is just the tip of the iceberg. As the Kubernetes environment will be supported by SAP Cloud Platform soon, it's time for us to learn more about it!

SAP Managed Tags: SAP Fiori Cloud, JavaScript, SAPUI5, Cloud, SAP Business Technology Platform
