- Spring Boot Reactive vs. Node.js in SAP Cloud Plat...

vadimklimov

Active Contributor


08-03-2018

5:25 PM

Intro

Runtimes provided by SAP Cloud Platform, especially those available in the Cloud Foundry environment through buildpacks, leave technical architects and application developers with a wide variety of options when it comes to choosing a programming language, server runtime and framework. For certain runtimes, there are clear and well-recognized use cases, but for others the choice is not that straightforward. Probably one of the most intense debates, which has been going on for many years and is very much relevant for SAP Cloud Platform as well, is the choice between server-side JavaScript and Java. To be more specific, discussions commonly focus not on the programming languages as such, but on applications developed using frameworks for the Node.js runtime versus applications developed using the Java Spring framework.

In many materials that cover this topic, Java applications are considered better suited for complex operations that potentially require a high level of parallelization (thanks to the JVM’s multi-threading), at the price of higher resource consumption (especially memory consumption of the underlying JVM), whereas Node.js applications are considered more lightweight and better suited for non-blocking operations that are not CPU-intensive (thanks to the event-driven concurrency model and non-blocking I/O of Node.js). Java was commonly associated with the classic imperative (blocking) programming model running in a multi-threaded runtime, whereas Node.js was associated with a non-blocking programming model running in a single-threaded runtime that supports concurrency. But both sides have evolved over time: reactive (non-blocking) programming principles were introduced to Java and became supported in later versions of Java and its frameworks, while Node.js got modules that bring multi-threading support...

In this blog, I would like to reflect on this topic and make a brief comparison of two lightweight applications: one is written in Java, is based on Spring Boot and uses Spring reactive modules; the other is written in JavaScript, is based on Node.js and uses the Express framework. Both applications use the same persistence layer (a NoSQL database, MongoDB), implement the same querying logic and have been deployed to the SAP Cloud Platform Cloud Foundry environment using similarly sized containers and default buildpacks (java_buildpack and nodejs_buildpack, respectively). The applications expose a REST API that can be consumed to query documents stored in a MongoDB repository. For load testing, I’m going to use Apache JMeter, which will produce a large number of parallel requests against the applications under test.

This exercise does not aim to compare the applications’ resource footprint (as mentioned above, Java applications commonly have higher initial memory consumption than Node.js applications), nor will it compare the resource consumption patterns of these applications under load (that analysis alone deserves a separate thorough blog). Instead, I’m going to focus on one single metric: throughput.

The blog was inspired by comparisons of Node.js and Java application performance for applications deployed to Cloud Foundry (for example, refer to the blog written by mariusobert), as well as by other comparisons on the subject that get published in the Java and Node.js communities.

Overview and notes

A high-level overview and a component diagram of the components involved in the demo are illustrated below:

Comparing a non-blocking Node.js application with a classic thread-blocking Java application when it comes to I/O operations doesn’t seem right to me, so let’s stick to parity here and compare the Node.js application with a Java application based on reactive principles.

Another important aspect is that neither application implements complex application logic, and both remain very lightweight (which also means that some abstraction patterns commonly present in production-grade applications are absent). Ultimately, the applications only implement very basic router and controller logic for querying documents from a MongoDB repository.

Along with this, some important modules and functionality that should be present in production-grade applications (for example, authentication and authorization, logging, thorough exception handling, etc.) are intentionally left out to keep the demo applications as simple as possible.
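To make the distinction between thread-blocking and non-blocking I/O more tangible, here is a minimal sketch that uses only the Java standard library. It is not code from either demo application: the class name, the method names and the simulated 50 ms delay are all assumptions made for illustration, and `CompletableFuture` stands in for the Reactor types that Spring's reactive modules actually use.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

// Hypothetical sketch: names and the simulated delay are assumptions, not the blog's code.
final class BlockingVsNonBlocking {

    // Simulated I/O latency in milliseconds (an assumption for illustration).
    static final long IO_DELAY_MS = 50;

    // Blocking style: the calling thread is parked until the simulated I/O finishes.
    static String findBlocking(String code) {
        try {
            Thread.sleep(IO_DELAY_MS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return "document-" + code;
    }

    // Non-blocking style: returns immediately; the result is completed later
    // by the timer thread, so the caller stays free to accept other work.
    static CompletableFuture<String> findNonBlocking(String code, ScheduledExecutorService timer) {
        CompletableFuture<String> result = new CompletableFuture<>();
        timer.schedule(() -> { result.complete("document-" + code); },
                IO_DELAY_MS, TimeUnit.MILLISECONDS);
        return result;
    }

    public static void main(String[] args) {
        ScheduledExecutorService timer = Executors.newSingleThreadScheduledExecutor();
        try {
            // Blocking: the main thread waits for the whole delay.
            System.out.println(findBlocking("1"));

            // Non-blocking: ten lookups are in flight at once on a single timer thread.
            List<CompletableFuture<String>> pending = new ArrayList<>();
            for (int i = 0; i < 10; i++) {
                pending.add(findNonBlocking(String.valueOf(i), timer));
            }
            // join() is used here only so the demo can print the results before exiting.
            for (CompletableFuture<String> lookup : pending) {
                System.out.println(lookup.join());
            }
        } finally {
            timer.shutdown();
        }
    }
}
```

In the blocking variant, each waiting request occupies a thread for the duration of the I/O call. In the non-blocking variant, a single thread can keep many lookups in flight, which is the property the Node.js and Spring reactive stacks share.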

Applications under test

The Java Spring Boot Reactive application re-uses a sample application that was developed earlier to demonstrate migration of a Spring Boot application from the classic imperative model to the reactive model, so please refer to my earlier blog if you would like to get into the details of that application. The application is based on Spring Boot 2.0 and uses:

- Spring Web Reactive – to expose REST APIs using reactive model,

- Spring Data MongoDB Reactive – to interact with MongoDB database using reactive model,

- Spring Cloud Connectors – to interact with cloud provided services and in particular, with services that are bound to the application in Cloud Platform environment.

The Node.js application is based on Node.js version 10 and uses:

- Express web framework module – to expose REST APIs,

- Mongoose module – to interact with MongoDB database,

- cfenv module – to interact with application environment provided by Cloud Foundry.

The application is implemented using the promise pattern (to be more precise, the async/await pattern, which is built on promises) in order to avoid heavy usage of callbacks.

The application's source code and the manifest file used for deployment to Cloud Foundry can be found in the GitHub repository.

I encourage you to read the blog written by florian.pfeffer, which contains very detailed, step-by-step instructions on how a Node.js application can be developed and deployed to the Cloud Foundry environment.

Both applications have been deployed to the Cloud Foundry environment of SAP Cloud Platform and bound to a MongoDB service instance:

Test execution

A series of tests was conducted using the same test plan structure in JMeter:

Requests produced by the HTTP sampler and sent to both applications are similar to those used in the earlier referenced blog: these are HTTP GET requests that query documents matching the code provided in the request as a query parameter. A randomizer function is used to generate the code within an allowed interval, so that the produced requests are less static.

For every run, the test script executed 1000 loops of HTTP requests to each of the Spring Boot Reactive and Node.js applications, with an increasing number of virtual users to emulate an increasing number of concurrent calls to the API. A summary of the test runs was collected using a standard JMeter listener, with special attention paid to the measured throughput.
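The randomized request can be sketched outside JMeter as follows. This is only an illustration in plain Java: the base URL, the query parameter name `code` and the interval 1 to 100 are assumptions, since the actual endpoint, parameter name and interval are not stated here. Within JMeter itself, a built-in function such as `__Random` is one way to get the same effect, although the function actually used in the test plan is not named.

```java
import java.net.URI;
import java.util.concurrent.ThreadLocalRandom;

// Hypothetical sketch: URL, parameter name and interval are assumptions for illustration.
final class RandomizedRequest {

    // Builds a GET request URI whose "code" query parameter is drawn
    // uniformly from the closed interval [minCode, maxCode].
    static URI build(String baseUrl, int minCode, int maxCode) {
        if (minCode > maxCode) {
            throw new IllegalArgumentException("minCode must not exceed maxCode");
        }
        // nextInt's upper bound is exclusive, hence maxCode + 1.
        int code = ThreadLocalRandom.current().nextInt(minCode, maxCode + 1);
        return URI.create(baseUrl + "?code=" + code);
    }

    public static void main(String[] args) {
        // Prints three request URIs with differing codes (values vary per run).
        for (int i = 0; i < 3; i++) {
            System.out.println(build("https://app.example.com/documents", 1, 100));
        }
    }
}
```

Varying the code per request keeps the load test from repeatedly hitting one identical query, which could otherwise be served unrealistically fast by caches along the way.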

- 1 virtual user running 1000 loops of calls (1000 HTTP requests to each tested application):

- 5 concurrent virtual users running 1000 loops of calls (5000 HTTP requests to each tested application in total):

- 10 concurrent virtual users running 1000 loops of calls (10000 HTTP requests to each tested application in total):

- 20 concurrent virtual users running 1000 loops of calls (20000 HTTP requests to each tested application in total):

- 30 concurrent virtual users running 1000 loops of calls (30000 HTTP requests to each tested application in total):

- 40 concurrent virtual users running 1000 loops of calls (40000 HTTP requests to each tested application in total):

- 50 concurrent virtual users running 1000 loops of calls (50000 HTTP requests to each tested application in total):
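The request totals in the runs above follow directly from virtual users multiplied by loops, and throughput is the number of requests divided by the elapsed time of the run. A small sketch of that arithmetic is given below. The class and method names are made up for illustration, and the elapsed time passed to the throughput calculation is a hypothetical value chosen only to show the formula, not a measurement from these tests.

```java
// Hypothetical sketch of the load plan arithmetic; not part of the test tooling.
final class LoadPlan {

    // Loops per virtual user and the user counts of the seven runs, as listed above.
    static final int LOOPS = 1000;
    static final int[] VIRTUAL_USERS = {1, 5, 10, 20, 30, 40, 50};

    // Total requests sent to one application in a run.
    static long totalRequests(int users, int loops) {
        return (long) users * loops;
    }

    // Throughput in requests per second over the elapsed wall-clock time of a run.
    static double throughputPerSecond(long requests, long elapsedMillis) {
        if (elapsedMillis <= 0) {
            throw new IllegalArgumentException("elapsedMillis must be positive");
        }
        return requests * 1000.0 / elapsedMillis;
    }

    public static void main(String[] args) {
        for (int users : VIRTUAL_USERS) {
            System.out.printf("%d users x %d loops = %d requests per application%n",
                    users, LOOPS, totalRequests(users, LOOPS));
        }
        // Hypothetical elapsed time (80 s), used only to illustrate the formula.
        System.out.println(throughputPerSecond(20_000, 80_000)); // prints 250.0
    }
}
```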

Test results summary

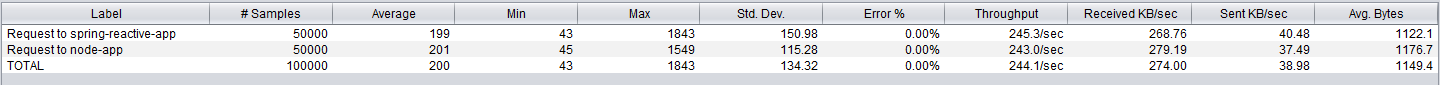

As can be seen from the test execution summary, the Spring Boot Reactive and Node.js applications performed well, with almost identical throughput, and reached their optimal throughput at 20+ concurrent requests, approaching approximately 240-250 requests per second:

There were some trials where the Spring Boot Reactive application tended to be slightly more robust than the Node.js application, but there were also other trials where the Node.js application handled slightly higher throughput. Given that the difference was negligibly small and cannot be seen as a repeatable trend, I tend to consider it an acceptable margin of error that can be ignored in the given circumstances.

Given that the applications under test don’t implement any sophisticated logic, the test can be considered an examination of the robustness of the Spring Boot Reactive and Node.js technology stacks. Applied to this particular setup, that means the robustness of the technologies that form the stack of a typical microservice application: the web framework, interaction with the persistence layer and, given the cloud focus of the application, interaction with the application context / environment when deployed to a cloud-provisioned container. Based on the obtained measurements, both the Spring Boot stack (when using reactive modules) and the Node.js stack used in application development demonstrate roughly equal robustness.

Outro

It has been demonstrated that we can achieve almost equal robustness when developing a Java / Spring Boot Reactive application and a JavaScript / Node.js application. In this particular setup, the applications were deployed to Cloud Foundry, but a very similar outcome might apply to on-premise applications or applications deployed to other cloud platforms. Now let’s reflect on the observations and measurements… Does this signify that Spring Boot Reactive is as high-performing as Node.js?

Does this imply that application developers remain uncertain about which is the better choice, Java or server-side JavaScript, when the choice has to be justified by application robustness for high-load applications? (If we think for a moment about the many other evaluation factors, such as application resource footprint and utilization, maintenance, learning curve, existing skill set and qualifications of developers and support teams, unification or diversity of programming languages applied to front end and back end, etc., then the choice might become very different.)

One key takeaway from reading this blog (as well as many other materials that compare various development technologies, runtimes and frameworks): never make definitive conclusions based on such tests and measurements taken alone. Every comparison summary similar to the one provided in this blog reflects a very specific use case placed in a certain environment and setup (of both the application under test and the test script used for load generation). Hence, be sure that the use case and environment used for the measurements match yours. Enterprise applications, including microservices, can vary significantly in their behavioral pattern and area of application: they can be memory-intensive (for example, processing large volumes of data), CPU-intensive (for example, involving complex calculations or highly recursive functions), I/O-intensive (for example, intensive interaction with other components such as the persistence layer or other services), combinations of the above, and so on. Unless you are very confident that the relevant conditions are the same, or that the difference between them is insignificant and is not going to invalidate the measurement results, any such comparison is good for educational purposes but should always be taken with a fair share of criticism.

This idea sounds very straightforward, but for some reason it is not uncommon to see it neglected in debates about which runtime is more robust, which framework is more suitable for applications with a high load profile, etc.

