- Hoeffding Tree Overview – Creating a Training Mod...


aaron_patkau


02-23-2018

6:59 PM

While going through the video library, we noticed our Hoeffding Tree machine learning series was a little out of date, so we decided it was time for a makeover. If you’re using streaming analytics version 1.0 SP 11 or newer and want to learn how to train and score data in SAP HANA studio, check out part 1 of this new video series. There are more videos to come, and each will have an associated blog post just like this one, so stay on the lookout.

Here's an overview of part 1 and a sneak peek of the videos to come:

Summary

Part 1 is the beginning of the training phase, which involves creating a training model that uses the Hoeffding Tree training machine learning function. As data streams in, this function continuously works to discover predictive relationships in the model. To make sure things go smoothly, you need to use specific Hoeffding Tree training input and output schemas.

Input Schema

[IDS] + S

In this notation, I, D, and S stand for integer, double, and string. You can have as many feature columns as you need, and each one can be an integer, double, or string, in any combination. The last column is always a string: the label, or classifier. The data we’re working with in this video is sample insurance data, so the classifier will be “Yes” for a fraudulent claim, or “No” for a legitimate claim.
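If you want to sanity-check a column layout before wiring it up, the schema rule can be sketched in a few lines of Python. This is only an illustration: `is_valid_training_input` is a hypothetical helper, not part of streaming analytics, and it assumes the notation requires at least one feature column before the label.

```python
import re

# Assumed type codes, read from the "[IDS] + S" notation:
# I = integer, D = double, S = string.
# One or more feature columns, followed by a string label column.
_TRAINING_INPUT = re.compile(r"[IDS]+S")


def is_valid_training_input(type_codes: str) -> bool:
    """Return True if the column codes look like features plus a string label.

    Hypothetical helper for illustration only; the real validation is
    done by streaming analytics when you build the model.
    """
    return _TRAINING_INPUT.fullmatch(type_codes) is not None


print(is_valid_training_input("IDSS"))  # True: three features, string label
print(is_valid_training_input("IDI"))   # False: last column is not a string
```

For example, a stream with an integer, a double, and a string feature followed by the “Yes”/“No” label would be written as `IDSS`.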

Output Schema

[D]

The output schema is simpler. There’s just one column, and it’s always a double. It holds a value between 0 and 1 that reports the accuracy of the model.
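To get a feel for what a value between 0 and 1 means here, the sketch below tracks accuracy as the fraction of predictions that matched the actual label so far. This is a common definition of accuracy, assumed for illustration; this post doesn't describe how the training function computes its output internally, and `RunningAccuracy` is a made-up name.

```python
class RunningAccuracy:
    """Track the fraction of correct predictions seen so far.

    Illustrative only: assumes accuracy = correct / total, which may
    differ from what the HoeffdingTreeTraining function does internally.
    """

    def __init__(self) -> None:
        self.correct = 0
        self.total = 0

    def update(self, predicted: str, actual: str) -> float:
        """Record one prediction and return the accuracy so far."""
        self.total += 1
        if predicted == actual:
            self.correct += 1
        return self.correct / self.total


accuracy = RunningAccuracy()
print(accuracy.update("Yes", "Yes"))  # 1.0
print(accuracy.update("No", "Yes"))   # 0.5
```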

You’re now ready to create a training model.

Creating and Building a Training Model

To create your model, first make sure you’re connected to an SAP HANA data service. Don’t have one? Learn how to add one from our documentation.

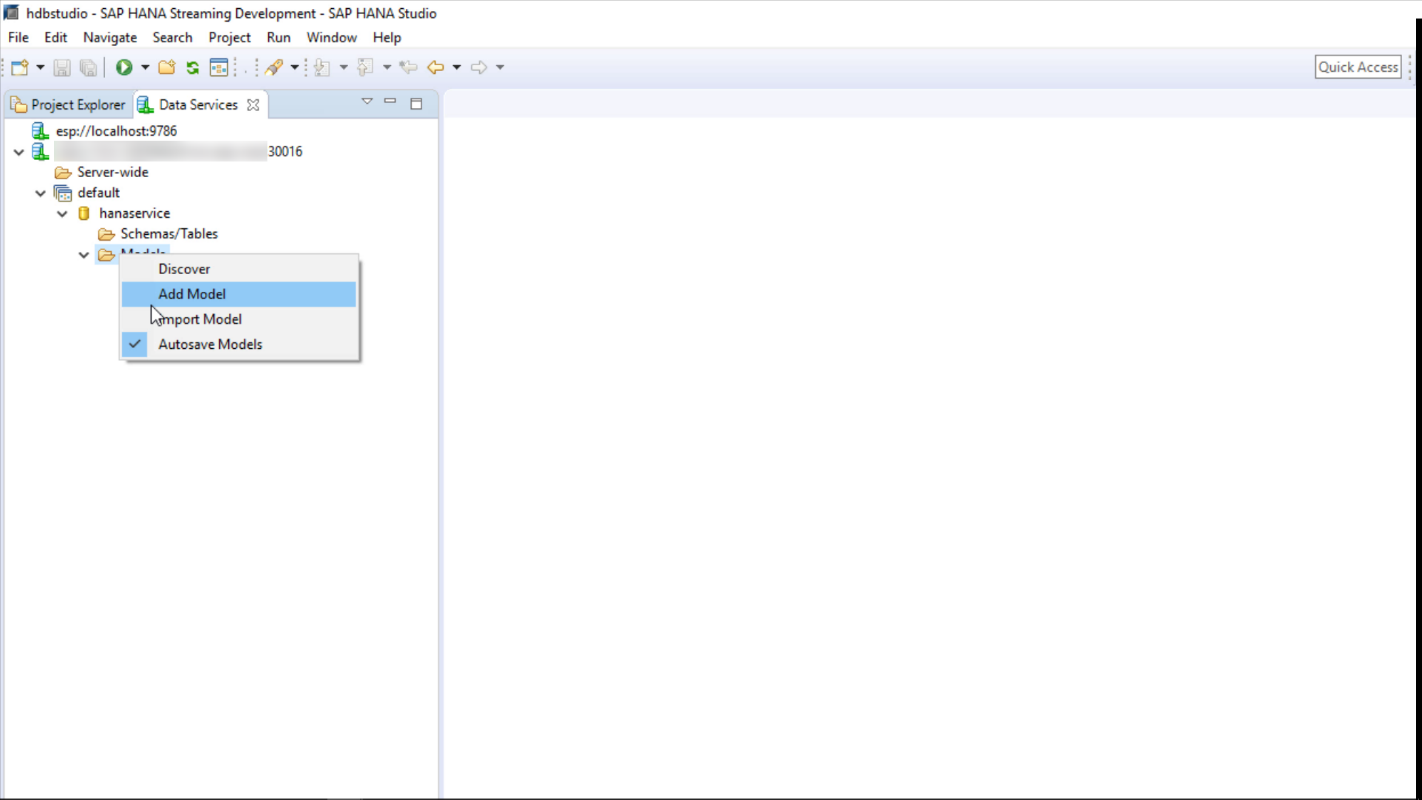

Once connected, drill down to the Models folder in the Data Services view and choose Add Model:

Then, open the model properties by selecting the model. In the General tab, fill in the fields:

Choose HoeffdingTreeTraining as the machine learning function. The input and output schemas shown above match the source data you’ll use in the next video (more on that in the next blog). Because this is a simple example, we set the sync point to 5 rows. This means that every time 5 rows are processed, the model is backed up to the HANA database. Of course, you would likely use a much higher interval in a production setting. If you want to fill in the specifics of the algorithm, switch to the Parameters tab. In the video, we just used the defaults.
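The sync point idea can be sketched as a simple row counter that triggers a backup every N rows. This is a conceptual model only: `SyncPointCounter` and its `backup` callback are hypothetical names, and the real backup to the HANA database is handled by streaming analytics.

```python
from typing import Callable


class SyncPointCounter:
    """Call a backup function every `sync_point` rows (conceptual sketch)."""

    def __init__(self, sync_point: int, backup: Callable[[int], None]) -> None:
        if sync_point < 1:
            raise ValueError("sync_point must be at least 1")
        self.sync_point = sync_point
        self.backup = backup  # stand-in for "back up the model to HANA"
        self.rows_seen = 0

    def on_row(self) -> None:
        """Count one incoming row and back up when the interval is reached."""
        self.rows_seen += 1
        if self.rows_seen % self.sync_point == 0:
            self.backup(self.rows_seen)


backups: list[int] = []
counter = SyncPointCounter(sync_point=5, backup=backups.append)
for _ in range(12):
    counter.on_row()
print(backups)  # [5, 10]
```

With a sync point of 5, twelve rows produce two backups. A larger interval means fewer backups, at the cost of more rows to relearn if the model has to be restored.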

To learn more about model properties and parameters, here's a handy reference.

Next, right-click the new model and rename it:

Note that you also have the option to save the model (if autosave isn’t enabled), delete it, or reset it. Deleting a model removes every trace of it, whereas resetting a model only removes its model content and evaluation data. This means that the model’s function will learn from scratch. The model itself remains in the data service, and the model metadata remains in the HANA database tables.
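The difference between resetting and deleting can be pictured with a toy in-memory model store. Everything here is hypothetical (the `Model` and `DataService` classes, their fields, and the sample values); it only mirrors the behavior described above and is not how the data service is implemented.

```python
from dataclasses import dataclass, field


@dataclass
class Model:
    """Toy stand-in for a model: metadata, learned content, evaluation data."""
    metadata: dict
    content: dict = field(default_factory=dict)
    evaluation: list = field(default_factory=list)


class DataService:
    """Toy stand-in for a data service that holds models by name."""

    def __init__(self) -> None:
        self.models: dict[str, Model] = {}

    def reset(self, name: str) -> None:
        # Reset: drop learned content and evaluation data only.
        # The model and its metadata stay in place.
        model = self.models[name]
        model.content.clear()
        model.evaluation.clear()

    def delete(self, name: str) -> None:
        # Delete: remove every trace of the model.
        del self.models[name]


service = DataService()
service.models["fraud"] = Model(
    metadata={"function": "HoeffdingTreeTraining"},
    content={"tree": "learned state"},
    evaluation=[0.8],
)
service.reset("fraud")
print(service.models["fraud"].metadata)  # metadata survives a reset
service.delete("fraud")
print("fraud" in service.models)         # False
```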

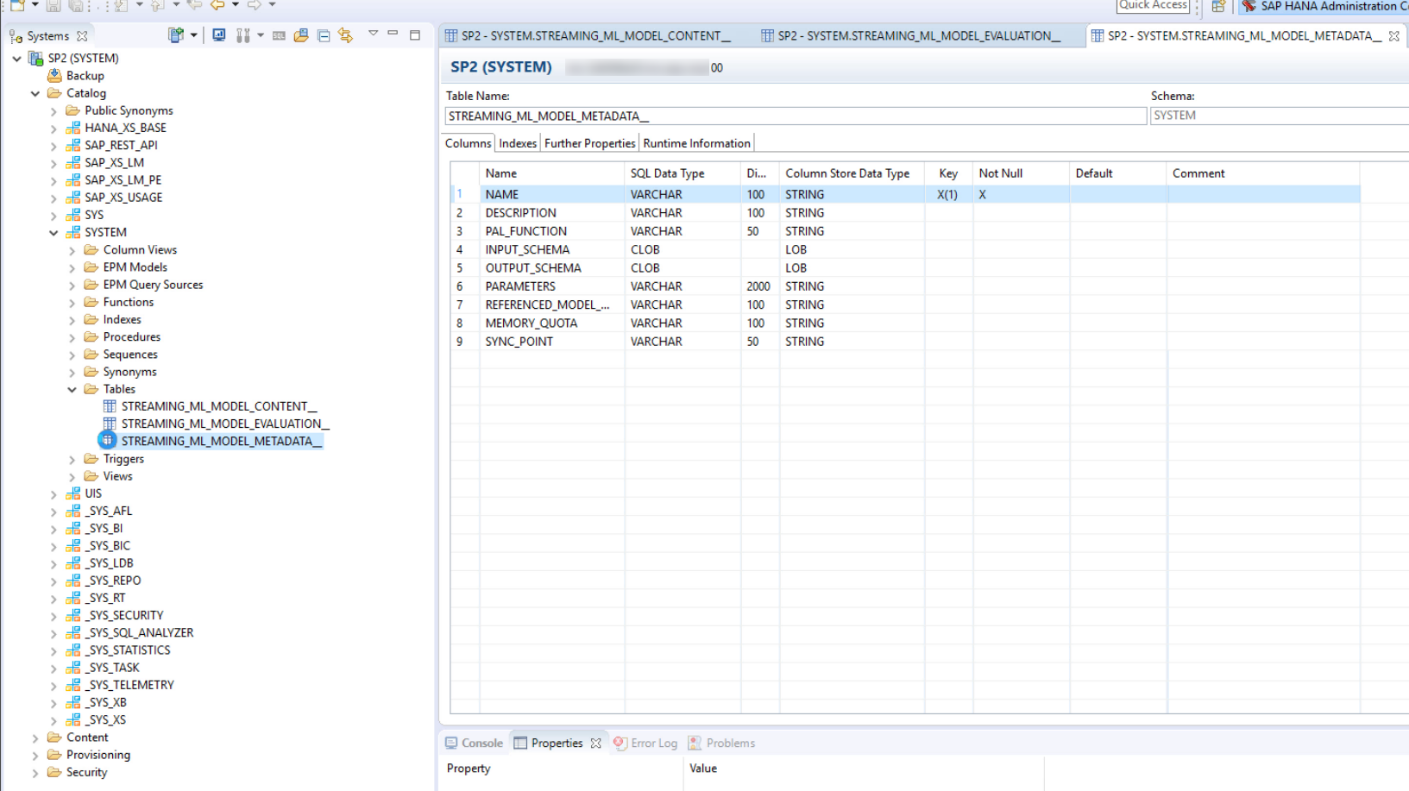

You can find the model metadata, along with the other tables that get created when you create a model, under the user schema in the SAP HANA Administration Console:

These tables store overview information about the models you create, and streaming analytics updates them whenever a model changes. They include a snapshot of the model properties, model performance stats, and so on. For more details, check out our table reference.

What’s Next?

Part two in the series is available here.

For more on machine learning models, check out the Model Management section of the SAP HANA Streaming Analytics: Developer Guide. If you’re interested in creating machine learning models in Web IDE, we've got a blog post for that too!

- SAP Managed Tags:

- SAP HANA streaming analytics,

- SAP Business Technology Platform
