- Table redistribution and repartitioning in a BW on...

former_member25

02-17-2018

2:33 AM

Dear Readers,

In this blog, I am providing step by step details about how to perform table redistribution and repartitioning in a BW on HANA system. Several steps described in this document are specific only to a BW on HANA system. This activity was performed in a HANA 1.0 SPS12 system.

In a scaled-out HANA system, tables and table partitions are spread across several hosts. The tables are distributed initially as part of the installation. Over the time when more and more data gets pumped into the system, the distribution may get distorted with some hosts holding very large amount of data whereas some other hosts holding much lesser amount of data. This leads to higher resource utilization in the overloaded hosts and may lead to various problems on those like higher CPU utilization, frequent out of memory dumps, increased redo log generation which in turn can cause problems in system replication to the DR site. If the rate of redo logs generation in the overloaded host is higher compared to the rate of transferring the logs to the DR site, it increases the buffer full counts and puts pressure on the replication network between the primary nodes and the corresponding nodes in secondary.

The table redistribution and repartitioning operation applies advanced algorithms to ensure tables are distributed optimally across all the active nodes and are partitioned appropriately taking into consideration several parameters mainly –

➢ Number of partitions

➢ Memory Usage of tables and partitions

➢ Number of rows of tables and partitions

➢ Table classification

Apart from these, there are several other parameters that are considered by the internal HANA algorithms to execute the redistribution and repartitioning task.

You can consider the following to decide whether a redistribution and repartitioning is needed in the system.

➢ Execute the SQL script "HANA_Tables_ColumnStore_TableHostMapping" from OSS note 1969700. From the output of this query, you can see the total size of the tables in disk across all the hosts. In our system, as you can see in the screen shot below the distribution was uneven with some nodes holding too much data compared to other nodes.

➢ If you observe frequent out of memory dumps are getting generated in few hosts due to high memory utilization of those hosts by column store tables. You can execute the following SQL statement to see the memory space occupied by the column store tables.

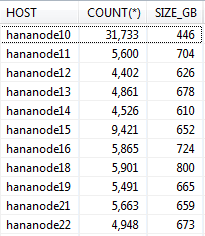

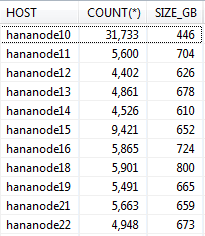

select host, count(*), round(sum(memory_size_in_total/1024/1024/1024)) as size_GB from m_cs_tables group by host order by host

As you can see in the below screen shot, in some hosts the memory space occupied by the column stores tables is much higher compared to other hosts.

➢ If new hosts are added to the system, tables are not automatically distributed to those. Only new tables created after the addition of hosts may get stored there. The optimize table distribution activity needs to be carried out to distribute tables from the existing hosts to the new hosts.

➢ If too many of the following error message are showing up in the indexserver trace file, the table redistribution and repartitioning activity also takes of these issues.

Potential performance problem: Table ABC and XYZ are split by unfavorable criteria. This will not prevent the data store from working but it may significantly degrade performance.

Potential performance problem: Table ABC is split and table XYZ is split by an appropriate criterion but corresponding parts are located in different servers. This will not prevent the data store from working but it may significantly degrade performance.

✓ Update table “table_placement”

✓ Maintain parameters

✓ Grant permissions to SAP schema user

✓ Run consistency check report

✓ Run stored procedure to check native HANA table consistency and catalog

✓ Run the cleanup python script to clean virtual files

✓ Check whether there are any business tables created in row store. Convert them to column store

✓ Run the memorysizing python script

✓ Take a database backup

✓ Suspend crontab jobs

✓ Save the current table distribution

✓ Increase the number of threads for the execution of table redistribution

✓ Unregister secondary system from primary (if DR is setup)

✓ Stop SAP application

✓ Lock users

✓ Execute “optimize table distribution” operation

✓ Startup SAP

✓ Run compression of tables

OSS note 1908075 provides an attachment which has several scripts for different HANA versions and different scale out scenarios. Download the attachment and navigate to the folder as per your HANA version, number of slave nodes and amount of memory per node.

In the SQL script, replace the $$PLACEHOLDER with the SAP schema name of your system. Execute the script. This will update the table “Table Placement” under SYS schema. This table will be referred by HANA algorithms to take decisions on the table redistribution and repartitioning activity.

Maintain HANA parameters as recommended in OSS note 1958216 according to your HANA version.

For HANA 1.0 SPS10 onwards, ensure that the SAP schema user (SAPBIW in our case) has the system privilege “Table Admin”.

SAP provides an ABAP report “rsdu_table_consistency” specifically for SAP systems on HANA database. First, ensure that you apply the latest version of this report and apply the OSS note 2175148 - SHDB: Regard TABLE_PLACEMENT in schema SYS (HANA SP100) if your HANA version is >=SPS10. Otherwise you may get short dumps while executing this report if you select the option “CL_SCEN_TAB_CLASSIFICATION”.

Execute this report from SA38 especially by selecting the options “CL_SCEN_PARTITION_SPEC” and “CL_SCEN_TAB_CLASSIFICATION”. (You can select all the other options as well). If any errors are reported, fix those by running the report in repair mode.

Note: This report should be run after the table “table_placement” is maintained as described in the first step. This report refers to that table to determine and fix errors related to table classification.

Execute the stored procedures check_table_consistency and check_catalog for the non-BW tables and ensure there are critical errors reported. If any critical errors are reported, fix those first.

If there are extra virtual files, the table redistribution and repartitioning operation may fail. Run the python script cleanupExtraFiles.py available in the python_support directory to determine whether there are any extra virtual files. Before you run the script, open it in VI editor and modify the following parameters as per your system.

self.host, self.port, self.user and self.passwd

Execute the following command first to determine whether there are any extra virtual files.

Python cleanupExtraFiles.py

If this command reports extra virtual files, execute the command again with remove option to cleanup those.

Python cleanupExtraFiles.py –removeAll

The table redistribution and repartitioning operation considers only column store tables and not row store tables. So, if anybody has created any business tables in row store (by mistake or without knowing the implications) those will not get considered for this activity. Big BW tables are not supposed to be created in row store in the first place. Convert those to column store using the below SQL

Alter table <table_name> column;

Before running the actual “optimize table distribution” task, execute the below command –

Call reorg_generate(6,’’)

This will generate the redistribution plan but not execute it. The number ‘6’ here is the algorithm id of “Balance landscape/Table” which gets executed by the “optimize table distribution” operation.

After the above procedure gets executed, in the same SQL console, execute the following query-

Create table REORG_LOCATIONS_EXPORT as (select * from #REORG_LOCATIONS)

This will create the table REORG_LOCATIONS_EXPORT in the schema of the user with which you executed this. Execute the query – Select memory_used from reorg_locations_export

If you see the memory_used column has several negative numbers like shown in the screen shot below, it indicates there is a problem.

You can also execute the below query and check the output.

select host, round(sum(memory_used/1024/1024/1024)) as memory_used from reorg_locations_export group by host order by host

If you get the output as shown in the below screen shot, this indicates that the memory statistics is not updated. If you execute the “optimize table distribution” operation now, the distribution won’t be even and some hosts may end up having far larger number of tables with high memory usage and whereas others will have very less number of tables and very less memory usage.

This is due to a HANA internal issue which has been fixed in HANA 2.0 where an internal housekeeping algorithm corrects these memory statistics. As a workaround for HANA 1.0 systems, SAP provides a python script that you can find in the standard python_support directory. (Check OSS note 1698281 for more details about this script).

Note: This script should be executed during low system load (preferably on weekends).

After the script run finishes, generate a new plan with the same method as described above and create a new table for reorg locations with a new name, say reorg_locations_export_1. Execute the query - Select memory_used from reorg_locations_export_1. Now you won’t be seeing

those negative numbers in the memory_used column. Executing the query - select host, round(sum(memory_used/1024/1024/1024)) as memory_used from reorg_locations_export_1 group by host order by host, will now show much better result. As you can see below, the values in the memory_used column is pretty much even across all nodes after executing the memorysizing script and there are no negative values.

Take a database backup before executing the redistribution activity.

Suspend jobs that you have scheduled in crontab, e.g. backup script.

From HANA studio, go to landscape --> Redistribution. Click on save. This will generate the current distribution plan and save it in case it is needed to restore back to the original table distribution.

For faster execution of the table redistribution and repartitioning operation, you can set the parameter indexserver.ini [table_redist] -> num_exec_threads to a high value like 100 or 200 based on the CPU capacity of your HANA system. This will increase the parallelization and speed up the operation. The value should not exceed the number of logical CPU cores of the hosts. The default value of this parameter is 20. Make sure to unset this parameter after the activity is completed.

If you have system replication setup between primary and secondary sites, you will need to unregister secondary from primary. If you perform table redistribution and repartitioning with system replication enabled, it will slow down the activity.

Stop SAP application servers

Lock all users in HANA except the system users SYS, _SYS_REPO, SYSTEM, SAP schema user, etc. Ensure there are no sessions active in the database before you proceed to the next step. This will also ensure that SLT replication cannot happen. Still if you want you can deactivate SLT replication separately.

From HANA studio, go to landscape --> Redistribution. Select “Optimize table distribution” and click on execute. In the next screen under “Parameters” leave the field blank. This will ensure that table repartitioning will also be taken care of along with redistribution. However, if you want to run only redistribution without repartitioning, enter “NO_SPLIT” in the parameters field. Click on next to generate the reorg plan and then click on execute.

Run the SQL script "HANA_Redistribution_ReorganizationMonitor" from OSS note 1969700 to monitor the redistribution and repartitioning activity. You can also execute the below command to monitor the reorg steps

select IFNULL("STATUS", 'PENDING'), count(*) from REORG_STEPS where reorg_id=(SELECT

MAX(REORG_ID) from REORG_OVERVIEW) group by "STATUS";

Start SAP application servers after the redistribution completes.

The changes in the partition specification of the tables as part of this activity leads to tables in uncompressed form. Though the “optimize table distribution” process carries out compression as part of this activity, due to some bug tables can still be in uncompressed form after this activity completes. This will lead to high memory usage. Compression will run automatically after the next delta merge happens on these tables. If you want you can perform it manually. Execute the SQL script "HANA_Tables_ColumnStore_TablesWithoutCompressionOptimization" and HANA_Tables_ColumnStore_ColumnsWithoutCompressionOptimization" from OSS note 1969700 to get the list of tables and columns that need compression. The output of this script provides the SQL query for executing the compression.

As an outcome of this activity, the distribution of tables evened out across all the hosts of the system. The memory space occupied by column store tables also became more or less even. Also you can see that the size of tables in the master node has reduced. This is because some of our BW projects had created big tables in the master node which have been moved to the slave nodes as part of this redistribution activity. This should be the ideal scenario.

Size of tables on disk (before)

Size of tables on disk (after)

Count and memory consumption of tables (before)

Count and memory consumption of tables (after)

I hope this article helps you all whoever is planning to perform this activity on your BW on HANA system.

Thanks,

Arindam

Referrence:

OSS note 1908075 - BW on SAP HANA: Table placement and landscape redistribution

OSS note 2143736 - FAQ: SAP HANA Table Distribution for BW

In this blog, I describe step by step how to perform table redistribution and repartitioning in a BW on HANA system. Several of the steps are specific to BW on HANA. The activity was performed on a HANA 1.0 SPS12 system.

In a scaled-out HANA system, tables and table partitions are spread across several hosts. The tables are distributed initially as part of the installation. Over time, as more and more data is loaded into the system, the distribution can become skewed, with some hosts holding a very large amount of data and others holding much less. This leads to higher resource utilization on the overloaded hosts and can cause problems there such as high CPU utilization, frequent out-of-memory dumps, and increased redo log generation, which in turn can cause problems in system replication to the DR site. If redo logs are generated on an overloaded host faster than they can be transferred to the DR site, the buffer-full counts increase and the replication network between the primary nodes and the corresponding secondary nodes comes under pressure.

The table redistribution and repartitioning operation applies advanced algorithms to ensure that tables are distributed optimally across all active nodes and are partitioned appropriately. The main parameters taken into consideration are:

➢ Number of partitions

➢ Memory Usage of tables and partitions

➢ Number of rows of tables and partitions

➢ Table classification

Apart from these, there are several other parameters that are considered by the internal HANA algorithms to execute the redistribution and repartitioning task.

DETERMINING WHETHER A REDISTRIBUTION AND REPARTITIONING IS NEEDED

You can consider the following to decide whether a redistribution and repartitioning is needed in the system.

➢ Execute the SQL script "HANA_Tables_ColumnStore_TableHostMapping" from OSS note 1969700. The output shows the total size of the tables on disk across all hosts. In our system, as the screenshot below shows, the distribution was uneven, with some nodes holding far more data than others.

➢ Frequent out-of-memory dumps are generated on a few hosts because column store tables use a large share of the memory on those hosts. You can execute the following SQL statement to see the memory occupied by column store tables on each host.

select host, count(*), round(sum(memory_size_in_total/1024/1024/1024)) as size_GB from m_cs_tables group by host order by host

As you can see in the screenshot below, on some hosts the memory occupied by column store tables is much higher than on others.

➢ If new hosts are added to the system, tables are not automatically distributed to those. Only new tables created after the addition of hosts may get stored there. The optimize table distribution activity needs to be carried out to distribute tables from the existing hosts to the new hosts.

➢ Too many of the following messages show up in the indexserver trace file. The table redistribution and repartitioning activity also takes care of these issues.

Potential performance problem: Table ABC and XYZ are split by unfavorable criteria. This will not prevent the data store from working but it may significantly degrade performance.

Potential performance problem: Table ABC is split and table XYZ is split by an appropriate criterion but corresponding parts are located in different servers. This will not prevent the data store from working but it may significantly degrade performance.
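The per-host figures returned by the M_CS_TABLES query above can be turned into a rough imbalance indicator. The following is a minimal Python sketch and not part of the original procedure. It assumes the result rows have already been fetched as (host, table_count, size_gb) tuples. The function name and the 1.5 threshold are illustrative assumptions, not SAP recommendations.

```python
from statistics import mean


def find_overloaded_hosts(rows, threshold=1.5):
    """Return hosts whose column store size exceeds threshold x the mean.

    rows: iterable of (host, table_count, size_gb) tuples, i.e. the shape
    returned by the M_CS_TABLES query in this section.
    threshold: illustrative cut-off (an assumption, not an SAP value).
    """
    rows = list(rows)
    if not rows:
        return []
    average = mean(size_gb for _, _, size_gb in rows)
    if average == 0:
        return []
    return [host for host, _, size_gb in rows if size_gb / average > threshold]


if __name__ == "__main__":
    # Made-up sample values, for illustration only.
    sample = [("hana01", 5200, 310), ("hana02", 4100, 950), ("hana03", 3900, 280)]
    print(find_overloaded_hosts(sample))
```

A host flagged by a check like this is only a hint. The decision to redistribute should still rest on the SAP scripts and trace messages described above.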

CHECKLIST

✓ Update table “table_placement”

✓ Maintain parameters

✓ Grant permissions to SAP schema user

✓ Run consistency check report

✓ Run stored procedure to check native HANA table consistency and catalog

✓ Run the cleanup python script to clean virtual files

✓ Check whether there are any business tables created in row store. Convert them to column store

✓ Run the memorysizing python script

✓ Take a database backup

✓ Suspend crontab jobs

✓ Save the current table distribution

✓ Increase the number of threads for the execution of table redistribution

✓ Unregister secondary system from primary (if DR is setup)

✓ Stop SAP application

✓ Lock users

✓ Execute “optimize table distribution” operation

✓ Startup SAP

✓ Run compression of tables

STEPS IN DETAIL

Update table “table_placement”

OSS note 1908075 provides an attachment containing several scripts for different HANA versions and scale-out scenarios. Download the attachment and navigate to the folder that matches your HANA version, number of slave nodes and amount of memory per node.

In the SQL script, replace $$PLACEHOLDER with the SAP schema name of your system, then execute the script. This updates the table TABLE_PLACEMENT in the SYS schema. The HANA algorithms refer to this table when making decisions about table redistribution and repartitioning.
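If you prefer not to edit the script by hand, the placeholder substitution can be scripted. This is a minimal Python sketch under stated assumptions: the function name is hypothetical, and the sample text is made up for illustration and is not the content of the script from the OSS note.

```python
def fill_schema_placeholder(script_text, schema_name, placeholder="$$PLACEHOLDER"):
    """Replace every occurrence of the placeholder with the SAP schema name.

    Raises ValueError if the placeholder is absent, so that an already
    edited or wrong file is not executed silently.
    """
    if placeholder not in script_text:
        raise ValueError("placeholder not found in script")
    return script_text.replace(placeholder, schema_name)


if __name__ == "__main__":
    # Made-up one-line sample, NOT the real script from the OSS note.
    sample = "-- table placement rules for schema $$PLACEHOLDER"
    print(fill_schema_placeholder(sample, "SAPBIW"))
```

Review the resulting text before executing it, since it changes the rules that the redistribution relies on.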

Maintain parameters

Maintain HANA parameters as recommended in OSS note 1958216 according to your HANA version.

Grant permissions to SAP schema user

From HANA 1.0 SPS10 onwards, ensure that the SAP schema user (SAPBIW in our case) has the system privilege TABLE ADMIN.
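The privilege check can be expressed as a small helper. This is a minimal Python sketch, assuming the privilege names of the schema user have already been read into a list (for example from a system view such as EFFECTIVE_PRIVILEGES, which is an assumption and not named in this blog). The function name is hypothetical.

```python
def grant_table_admin_statement(schema_user, granted_privileges):
    """Return the GRANT statement if TABLE ADMIN is missing, else None.

    granted_privileges: privilege names already held by the user, fetched
    beforehand by whatever means you use (assumed input).
    """
    held = {privilege.upper() for privilege in granted_privileges}
    if "TABLE ADMIN" in held:
        return None
    return f"GRANT TABLE ADMIN TO {schema_user}"


if __name__ == "__main__":
    print(grant_table_admin_statement("SAPBIW", ["CATALOG READ"]))
```

The statement has to be executed by a user who is allowed to grant this system privilege.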

Run consistency check report

SAP provides the ABAP report “rsdu_table_consistency” specifically for SAP systems on a HANA database. First, ensure that you have the latest version of this report, and apply OSS note 2175148 - SHDB: Regard TABLE_PLACEMENT in schema SYS (HANA SP100) if your HANA version is SPS10 or higher. Otherwise the report may short dump when you select the option “CL_SCEN_TAB_CLASSIFICATION”.

Execute this report from SA38, selecting in particular the options “CL_SCEN_PARTITION_SPEC” and “CL_SCEN_TAB_CLASSIFICATION” (you can select all the other options as well). If any errors are reported, fix them by running the report in repair mode.

Note: Run this report only after the table “table_placement” has been maintained as described in the first step. The report refers to that table to determine and fix errors related to table classification.

Run stored procedure to check native HANA table consistency and catalog

Execute the stored procedures check_table_consistency and check_catalog for the non-BW tables and ensure that no critical errors are reported. If any critical errors are reported, fix them first.

Run the cleanup python script to clean extra virtual files

If there are extra virtual files, the table redistribution and repartitioning operation may fail. Run the python script cleanupExtraFiles.py, available in the python_support directory, to determine whether there are any extra virtual files. Before you run the script, open it in the vi editor and set the following parameters for your system:

self.host, self.port, self.user and self.passwd

First execute the following command to determine whether there are any extra virtual files.

python cleanupExtraFiles.py

If this command reports extra virtual files, execute the command again with the remove option to clean them up.

python cleanupExtraFiles.py --removeAll

Check whether there are any business tables created in row store

The table redistribution and repartitioning operation considers only column store tables, not row store tables. If anybody has created business tables in row store (by mistake or without knowing the implications), those tables are not considered for this activity. Big BW tables are not supposed to be created in row store in the first place. Convert them to column store using the following SQL:

ALTER TABLE <table_name> COLUMN;
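When several row store tables need converting, the statements can be generated from a list. This is a minimal Python sketch, assuming you have already collected the affected tables as (schema, table) pairs. One possible source is the TABLES system view filtered on IS_COLUMN_TABLE, which is an assumption here and not part of the original steps. The table names in the example are hypothetical.

```python
def quote_identifier(name):
    """Wrap an identifier in double quotes, doubling any embedded quotes."""
    return '"' + name.replace('"', '""') + '"'


def column_store_conversion_statements(tables):
    """Build one ALTER TABLE ... COLUMN statement per (schema, table) pair."""
    return [
        f"ALTER TABLE {quote_identifier(schema)}.{quote_identifier(table)} COLUMN"
        for schema, table in tables
    ]


if __name__ == "__main__":
    # Hypothetical table names, for illustration only.
    for statement in column_store_conversion_statements([("SAPBIW", "ZSALES_HIST")]):
        print(statement)
```

Generating the statements first and reviewing them before execution keeps system tables that legitimately live in row store out of the conversion.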

Run the memorysizing python script

Before running the actual “optimize table distribution” task, execute the following command:

CALL REORG_GENERATE(6, '');

This generates the redistribution plan but does not execute it. The number 6 is the algorithm ID of “Balance landscape/Table”, which is what the “optimize table distribution” operation executes.

After the procedure has run, execute the following statement in the same SQL console:

CREATE TABLE REORG_LOCATIONS_EXPORT AS (SELECT * FROM #REORG_LOCATIONS)

This creates the table REORG_LOCATIONS_EXPORT in the schema of the user who executed it. Then execute the query: SELECT memory_used FROM reorg_locations_export

If the memory_used column contains several negative numbers, as shown in the screenshot below, there is a problem.

You can also execute the following query and check the output.

select host, round(sum(memory_used/1024/1024/1024)) as memory_used from reorg_locations_export group by host order by host

If you get output like that shown in the screenshot below, the memory statistics are not up to date. If you execute the “optimize table distribution” operation now, the distribution will not be even: some hosts may end up with far more tables and high memory usage, whereas others will have very few tables and very little memory usage.
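The two checks on REORG_LOCATIONS_EXPORT can be combined in one pass over the exported rows. This is a minimal Python sketch, assuming the rows were fetched as (host, memory_used) pairs with memory_used in bytes, matching the columns used in the queries above. The function name and the sample values are illustrative.

```python
from collections import defaultdict

GIB = 1024 ** 3


def summarize_memory_used(rows):
    """Return (per-host memory in GiB, number of negative entries).

    rows: iterable of (host, memory_used_bytes) pairs taken from the
    exported reorg locations table.
    """
    per_host = defaultdict(float)
    negative_entries = 0
    for host, memory_used in rows:
        if memory_used < 0:
            negative_entries += 1
        per_host[host] += memory_used / GIB
    rounded = {host: round(total) for host, total in sorted(per_host.items())}
    return rounded, negative_entries


if __name__ == "__main__":
    # Made-up sample values, for illustration only.
    sample = [("hana01", 2 * GIB), ("hana01", -1 * GIB), ("hana02", 3 * GIB)]
    print(summarize_memory_used(sample))
```

A non-zero count of negative entries corresponds to the situation described above, where the memory statistics need correcting before a plan is executed.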

This is due to a HANA internal issue that has been fixed in HANA 2.0, where an internal housekeeping algorithm corrects these memory statistics. As a workaround for HANA 1.0 systems, SAP provides a python script that you can find in the standard python_support directory. (Check OSS note 1698281 for more details about this script.)

Note: Execute this script during low system load (preferably at the weekend).

After the script finishes, generate a new plan in the same way as described above and create a new table for the reorg locations under a new name, say reorg_locations_export_1. Execute the query: SELECT memory_used FROM reorg_locations_export_1. The negative numbers in the memory_used column should no longer appear.

The query select host, round(sum(memory_used/1024/1024/1024)) as memory_used from reorg_locations_export_1 group by host order by host now shows a much better result. As you can see below, the values in the memory_used column are fairly even across all nodes after executing the memorysizing script, and there are no negative values.

Take a database backup

Take a database backup before executing the redistribution activity.

Suspend crontab jobs

Suspend jobs that you have scheduled in crontab, e.g. backup script.

Save the current table distribution

In HANA studio, go to Landscape --> Redistribution and click Save. This generates the current distribution plan and saves it in case you need to restore the original table distribution.

Increase the number of threads for the execution of table redistribution

To speed up the table redistribution and repartitioning operation, you can set the parameter indexserver.ini [table_redist] -> num_exec_threads to a high value such as 100 or 200, depending on the CPU capacity of your HANA system. This increases parallelization. The value should not exceed the number of logical CPU cores of the hosts. The default value of this parameter is 20. Make sure to unset the parameter after the activity is completed.
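Setting and later unsetting the parameter can also be done with SQL. This is a minimal Python sketch that builds both statements and caps the value at the number of logical cores, as advised above. It assumes the parameter is maintained at the SYSTEM layer with ALTER SYSTEM ALTER CONFIGURATION. The function name and the example numbers are illustrative.

```python
def thread_parameter_statements(requested_threads, logical_cores):
    """Return (set_sql, unset_sql) for table_redist/num_exec_threads.

    The requested value is capped at the number of logical CPU cores
    and never drops below 1.
    """
    threads = max(1, min(requested_threads, logical_cores))
    target = "('indexserver.ini', 'SYSTEM')"
    key = "('table_redist', 'num_exec_threads')"
    set_sql = (
        f"ALTER SYSTEM ALTER CONFIGURATION {target} "
        f"SET {key} = '{threads}' WITH RECONFIGURE"
    )
    unset_sql = (
        f"ALTER SYSTEM ALTER CONFIGURATION {target} "
        f"UNSET {key} WITH RECONFIGURE"
    )
    return set_sql, unset_sql


if __name__ == "__main__":
    # Example numbers only: 200 requested threads on hosts with 128 cores.
    for statement in thread_parameter_statements(200, 128):
        print(statement)
```

Keeping the unset statement next to the set statement makes it harder to forget the cleanup after the redistribution.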

Unregister secondary system from primary (if DR is setup)

If you have system replication set up between the primary and secondary sites, unregister the secondary from the primary. Performing table redistribution and repartitioning with system replication enabled slows down the activity.

Stop SAP application

Stop the SAP application servers.

Lock users

Lock all users in HANA except system users such as SYS, _SYS_REPO, SYSTEM and the SAP schema user. Ensure there are no active sessions in the database before you proceed to the next step. This also ensures that SLT replication cannot happen, although you can still deactivate SLT replication separately if you prefer.
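Locking many users by hand is error-prone, so the statements can be generated from a user list. This is a minimal Python sketch, assuming the user names have already been read from the database and that users are locked with ALTER USER ... DEACTIVATE USER NOW. The function name and the user names in the example are hypothetical.

```python
def quote_identifier(name):
    """Wrap an identifier in double quotes, doubling any embedded quotes."""
    return '"' + name.replace('"', '""') + '"'


def lock_user_statements(all_users, keep_active):
    """Build a lock statement for every user not in the keep_active list.

    keep_active: users that must stay unlocked, e.g. the system users
    and the SAP schema user (compared case-insensitively).
    """
    keep = {user.upper() for user in keep_active}
    return [
        f"ALTER USER {quote_identifier(user)} DEACTIVATE USER NOW"
        for user in all_users
        if user.upper() not in keep
    ]


if __name__ == "__main__":
    # Hypothetical user names, for illustration only.
    users = ["SYSTEM", "SAPBIW", "JDOE", "REPORT_USER"]
    for statement in lock_user_statements(users, ["SYS", "_SYS_REPO", "SYSTEM", "SAPBIW"]):
        print(statement)
```

Keep the generated list, because the same users have to be reactivated after the redistribution.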

Execute “optimize table distribution” operation

In HANA studio, go to Landscape --> Redistribution. Select “Optimize table distribution” and click Execute. On the next screen, leave the “Parameters” field blank. This ensures that table repartitioning is carried out along with redistribution. If you want to run only redistribution without repartitioning, enter “NO_SPLIT” in the parameters field. Click Next to generate the reorg plan and then click Execute.

Monitor the redistribution and repartitioning operation

Run the SQL script "HANA_Redistribution_ReorganizationMonitor" from OSS note 1969700 to monitor the redistribution and repartitioning activity. You can also execute the following statement to monitor the reorg steps:

select IFNULL("STATUS", 'PENDING'), count(*) from REORG_STEPS where reorg_id=(SELECT MAX(REORG_ID) from REORG_OVERVIEW) group by "STATUS";
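The status counts returned by the monitoring statement can be condensed into a single progress figure. This is a minimal Python sketch, assuming the rows were fetched as (status, count) pairs and that completed steps carry the status 'FINISHED'. That status value is an assumption, so check it against your own output. The function name is hypothetical.

```python
def reorg_progress(status_counts, finished_status="FINISHED"):
    """Return the percentage of reorg steps that have finished.

    status_counts: iterable of (status, count) rows from the monitoring
    query; finished_status is an assumed status value.
    """
    counts = {}
    for status, count in status_counts:
        counts[status] = counts.get(status, 0) + count
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return round(100.0 * counts.get(finished_status, 0) / total, 1)


if __name__ == "__main__":
    # Made-up sample values, for illustration only.
    print(reorg_progress([("FINISHED", 30), ("PENDING", 60), ("STARTED", 10)]))
```

Polling the query at intervals and printing this figure gives a simple view of how far the operation has advanced.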

Startup SAP

Start SAP application servers after the redistribution completes.

Run compression of tables

The changes to the partition specification of the tables made during this activity leave tables in uncompressed form. Although the “optimize table distribution” process carries out compression as part of this activity, a bug can leave some tables uncompressed after the activity completes, which leads to high memory usage. Compression runs automatically after the next delta merge on these tables, but you can also perform it manually. Execute the SQL scripts "HANA_Tables_ColumnStore_TablesWithoutCompressionOptimization" and "HANA_Tables_ColumnStore_ColumnsWithoutCompressionOptimization" from OSS note 1969700 to get the list of tables and columns that need compression. The output of these scripts provides the SQL statements for executing the compression.
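The scripts from the OSS note already output the statements to run, so the following is only an illustration of their general shape. It is a minimal Python sketch, assuming compression is forced per table with UPDATE ... WITH PARAMETERS ('OPTIMIZE_COMPRESSION' = 'FORCE'). The function name and the table name are hypothetical.

```python
def quote_identifier(name):
    """Wrap an identifier in double quotes, doubling any embedded quotes."""
    return '"' + name.replace('"', '""') + '"'


def compression_statements(tables):
    """Build one forced compression statement per (schema, table) pair."""
    return [
        f"UPDATE {quote_identifier(schema)}.{quote_identifier(table)} "
        "WITH PARAMETERS ('OPTIMIZE_COMPRESSION' = 'FORCE')"
        for schema, table in tables
    ]


if __name__ == "__main__":
    # Hypothetical table name, for illustration only.
    for statement in compression_statements([("SAPBIW", "ZSALES_HIST")]):
        print(statement)
```

Forced compression consumes CPU and memory, so running the statements in small batches during low load is the safer choice.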

Result

As an outcome of this activity, the distribution of tables evened out across all hosts of the system, and the memory occupied by column store tables also became more or less even. The size of the tables on the master node was also reduced, because some of our BW projects had created big tables on the master node, and these were moved to the slave nodes as part of the redistribution. This is the ideal scenario.

Size of tables on disk (before)

Size of tables on disk (after)

Count and memory consumption of tables (before)

Count and memory consumption of tables (after)

I hope this article helps anyone planning to perform this activity on a BW on HANA system.

Thanks,

Arindam

Reference:

OSS note 1908075 - BW on SAP HANA: Table placement and landscape redistribution

OSS note 2143736 - FAQ: SAP HANA Table Distribution for BW

- SAP Managed Tags:

- SAP HANA, platform edition

7 Comments

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.

Labels in this area

-

"automatische backups"

1 -

"regelmäßige sicherung"

1 -

505 Technology Updates 53

1 -

ABAP

14 -

ABAP API

1 -

ABAP CDS Views

2 -

ABAP CDS Views - BW Extraction

1 -

ABAP CDS Views - CDC (Change Data Capture)

1 -

ABAP class

2 -

ABAP Cloud

2 -

ABAP Development

5 -

ABAP in Eclipse

1 -

ABAP Platform Trial

1 -

ABAP Programming

2 -

abap technical

1 -

absl

1 -

access data from SAP Datasphere directly from Snowflake

1 -

Access data from SAP datasphere to Qliksense

1 -

Accrual

1 -

action

1 -

adapter modules

1 -

Addon

1 -

Adobe Document Services

1 -

ADS

1 -

ADS Config

1 -

ADS with ABAP

1 -

ADS with Java

1 -

ADT

2 -

Advance Shipping and Receiving

1 -

Advanced Event Mesh

3 -

AEM



