SAP Leonardo ML Foundation - Retraining part 1


02-15-2018

10:31 AM

Introduction

This blog continues the story from the last one, where we covered how to get access to SAP Leonardo ML Foundation and which steps are required before we are allowed to call the APIs.

| # | Blog |
|---|---|
| 1 | Getting started with SAP Leonardo ML Foundation on SAP Cloud Platform Cloud Foundry |
| 2 | SAP Leonardo ML Foundation - Retraining part 1 (this blog) |
| 3 | SAP Leonardo ML Foundation - Retraining part 2 |
| 4 | SAP Leonardo ML Foundation - Bring your own model (BYOM) |

SAP Leonardo ML Foundation Architecture:

In the following sections we focus on checking and executing the retraining of the "image" classifier with our own data.

In this blog I want to focus on the hands-on steps, not on ML in general. Also note that the "retraining" functionality is not available in a trial account!

Important: Currently only the "Image Classifier Service" can be used for the retraining.

Let's start!

In general, the retraining consists of the following four steps:

- Upload the training data

- Execute the retraining job

- Deploy the model

- Call the image classifier API

In detail, we want to walk through each of these steps. Please also check the SAP Help documentation.

Data, data, data

The first thing we need is, of course, some source data that we want to use to train our new model.

Since spring is hopefully not far away, we are simply using some nice flower data ;o)

Another (the real) reason is that TensorFlow provides an archive for it, and we want to start simple.

Other good resources for pictures are, of course, ImageNet or the Fatkun Batch Download Image plugin for Chrome.

As mentioned before, we start by downloading the flower archive (see "Tensorflow flowers dataset" under Helpful Links) to our local device.

Part of this data will later be used for our own "flower" model with the SAP Leonardo ML Image Classification service.

Get started and check the API

A good starting point is simply to enter the "retraining URL" in a browser and have a look at the Swagger UI to get a first idea of which options we have:

In general, the retraining API consists of three main parts:

- jobs

- deployments

- models

Data preparation

Before we can call any of the APIs, we need to prepare our data and upload it to AWS.

To start simple, I've decided to reduce the amount of data that comes with the TensorFlow archive. I think three categories of flowers are enough.

For this, create the following folder structure:

    +-- flowers
        +-- training
            +-- roses
            +-- sunflowers
            +-- tulips
        +-- test
            +-- roses
            +-- sunflowers
            +-- tulips
        +-- validation
            +-- roses
            +-- sunflowers
            +-- tulips

As documented, the data must be structured into the three folders "training", "test" and "validation".

Furthermore, we split our source data 80-10-10 (~80% training, ~10% test and ~10% validation).
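Splitting the images by hand works, but a small script makes the 80-10-10 split reproducible. The following is a minimal Python sketch, assuming the TensorFlow archive was extracted to a local `flower_photos` folder with one subfolder per category (the archive also contains other categories, which are simply skipped). The function name `split_dataset` and the fixed random seed are illustrative choices, not part of SAP's tooling.

```python
import random
import shutil
from pathlib import Path

# The three categories used in this blog; other folders in the archive are ignored.
CATEGORIES = ["roses", "sunflowers", "tulips"]
TRAIN_SHARE = 0.8
TEST_SHARE = 0.1  # validation receives the remainder (~10%)


def split_dataset(source: Path, target: Path, categories=CATEGORIES, seed: int = 42) -> dict:
    """Copy source/<category>/* into target/<split>/<category>/ using an 80-10-10 split.

    Returns a dict mapping (split, category) to the number of copied files.
    """
    rng = random.Random(seed)  # fixed seed, so the split is reproducible
    counts = {}
    for category in categories:
        images = sorted(p for p in (source / category).iterdir() if p.is_file())
        rng.shuffle(images)
        n = len(images)
        n_train = int(n * TRAIN_SHARE)
        n_test = int(n * TEST_SHARE)
        parts = {
            "training": images[:n_train],
            "test": images[n_train:n_train + n_test],
            "validation": images[n_train + n_test:],  # rounding leftovers land here
        }
        for split, files in parts.items():
            dest = target / split / category
            dest.mkdir(parents=True, exist_ok=True)
            for image in files:
                shutil.copy2(image, dest / image.name)
            counts[(split, category)] = len(files)
    return counts
```

Calling `split_dataset(Path("flower_photos"), Path("flowers"))` would create the `flowers/training|test|validation/<category>` structure shown above, ready for the upload step. Because `int()` rounds down, any leftover images end up in the validation folder.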

Access the AWS object store

To access the object store, which runs on Amazon Web Services (AWS), we can use "minio" to work directly with the S3 object store.

You can get the Minio client here (see "Minio Client" under Helpful Links).

Additionally, we can also access the data via a UI.

For the UI and also for the CLI access, we first need to initialize our file system (this only needs to be done once) by executing the following API call:

| Field | Value |
|---|---|
| HTTP Method | GET |
| URL | <JOB_SUBMISSION_API_URL> |
| PATH | /v1/storage/endpoint |
| HEADER | Authorization (OAuth2 Access Token) |
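Instead of a REST client, this initialization call can also be scripted. The following is a minimal sketch using only the Python standard library. It assumes the OAuth2 access token is sent as a standard `Bearer` token and that you substitute your own `<JOB_SUBMISSION_API_URL>`; the fields it reads (`status`, `endpoint`, `access_key`, `secret_key`) are those of the sample response in this blog. The helper names are hypothetical.

```python
import json
import urllib.request


def build_storage_endpoint_request(job_submission_api_url: str, access_token: str) -> urllib.request.Request:
    """Build GET <JOB_SUBMISSION_API_URL>/v1/storage/endpoint."""
    url = job_submission_api_url.rstrip("/") + "/v1/storage/endpoint"
    # Assumption: the OAuth2 access token is passed as a Bearer token.
    return urllib.request.Request(url, headers={"Authorization": f"Bearer {access_token}"}, method="GET")


def parse_storage_endpoint(body: str) -> dict:
    """Extract the S3 connection details; fail if the endpoint is not ready yet."""
    data = json.loads(body)
    if data.get("status") != "Ready":
        raise RuntimeError(f"Storage endpoint not ready: {data.get('message')}")
    return {key: data[key] for key in ("endpoint", "access_key", "secret_key")}


def init_storage(job_submission_api_url: str, access_token: str) -> dict:
    """Perform the actual HTTP call and return endpoint, access key and secret key."""
    request = build_storage_endpoint_request(job_submission_api_url, access_token)
    with urllib.request.urlopen(request) as response:
        return parse_storage_endpoint(response.read().decode("utf-8"))
```

The returned `endpoint`, `access_key` and `secret_key` are the values you then enter in the Minio UI or pass to the `mc` command below.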

As a response, we get something like this:

    {
      "access_key": "<access key>",
      "endpoint": "<endpoint>.files.eu-central-1.aws.ml.hana.ondemand.com",
      "message": "The endpoint is ready to use.",
      "secret_key": "<secret key>",
      "status": "Ready"
    }

The Minio UI

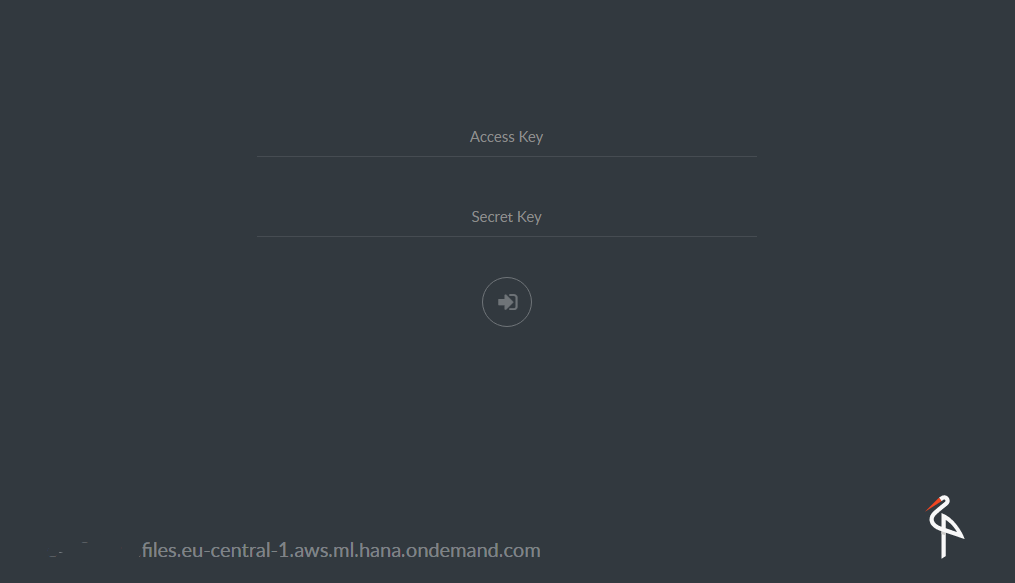

To access the S3 store via the Minio UI, enter the endpoint URL and log on with the "access key" and the "secret key":

Afterwards, we can see our bucket (data) with some data:

The CLI access

For CLI access, we again start with authentication:

    mc.exe config host add saps3 https://<your endpoint>.files.eu-central-1.aws.ml.hana.ondemand.com <access key> <secret key>
    Added `saps3` successfully.

Afterwards, we can use the "mc" command to, for example, list our data (buckets):

    mc.exe ls saps3/<bucket>/<directory>

Update: Using "Cyberduck"

In addition to the previous tools, you can also use "Cyberduck" to connect to your AWS S3 file system.

Create a new AWS S3 connection by entering the required data:

As a result, you can access the data here:

Upload our data

Now it's time to upload the "custom" data that we want to use for our retraining.

The easiest way is to copy our files with the cp command:

    mc.exe cp -r E:\0_SAPCP\8_ML\1_SAP_ML\0_Development\1_first_try\flowers saps3/data
    ...bc557236c7_n.jpg: 146.19 MB / 146.19 MB [================================================] 100.00% 484.60 KB/s 5m8s

Afterwards, we can see our uploaded data in our AWS S3 bucket:

In case something goes wrong, you can also use the following command to delete your data / bucket:

    mc.exe rm --recursive --dangerous --force saps3/data

A complete overview of all commands is available via the "--help" parameter:

    mc.exe --help

Time for the retraining: execute the job

Now that our data is in place, we can execute our training by calling the corresponding API:

Details:

| Field | Value |
|---|---|
| HTTP Method | POST |
| URL | <RETRAIN_API_URL> |
| PATH | /v1/jobs |
| HEADER | Authorization (OAuth2 Access Token) |

And the following body:

    {
      "mode": "image",
      "options": {
        "dataset": "flowers",
        "modelName": "flowers-demo"
      }
    }

As a response, we get the "job id":

    {
      "id": "flowers-2018-02-15t0851z"
    }

By calling the corresponding GET method, we can retrieve the details and the status of all jobs:

or only our new job:
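Submitting the job and polling its status can also be scripted. The following is a hedged sketch using only the Python standard library: it assumes a `Bearer` token header and assumes that a single job can be read via `GET /v1/jobs/<job id>` (verify the exact path in the Swagger UI). The status strings "Pending/Scheduled" and "Running" are taken from the responses in this blog; any other value is treated as a final state. All function names are hypothetical.

```python
import json
import time
import urllib.request
from typing import Callable, Dict

# Status values seen in this blog while a job is not finished yet.
ACTIVE_STATES = ("Pending/Scheduled", "Running")


def _auth_headers(token: str) -> Dict[str, str]:
    # Assumption: the OAuth2 access token is sent as a standard Bearer token.
    return {"Authorization": f"Bearer {token}"}


def submit_job_request(base_url: str, token: str, dataset: str, model_name: str) -> urllib.request.Request:
    """Build POST <RETRAIN_API_URL>/v1/jobs with the body used in this blog."""
    body = {"mode": "image", "options": {"dataset": dataset, "modelName": model_name}}
    headers = _auth_headers(token)
    headers["Content-Type"] = "application/json"
    return urllib.request.Request(
        base_url.rstrip("/") + "/v1/jobs",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def submit_job(base_url: str, token: str, dataset: str, model_name: str) -> str:
    """Send the job and return its id, e.g. "flowers-2018-02-15t0851z"."""
    with urllib.request.urlopen(submit_job_request(base_url, token, dataset, model_name)) as resp:
        return json.loads(resp.read().decode("utf-8"))["id"]


def http_get_status(base_url: str, token: str, job_id: str) -> dict:
    # Hypothetical single-job path: check it against the Swagger UI.
    req = urllib.request.Request(f"{base_url.rstrip('/')}/v1/jobs/{job_id}", headers=_auth_headers(token))
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))


def wait_for_job(
    get_status: Callable[[], dict],
    poll_seconds: float = 30.0,
    timeout_seconds: float = 3600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Poll until the job leaves the active states; return the final "status" object."""
    waited = 0.0
    while True:
        status = get_status()["status"]
        if status["status"] not in ACTIVE_STATES:
            return status  # e.g. {"status": "Succeeded", ...}
        if waited >= timeout_seconds:
            raise TimeoutError(f"Job {status['id']} is still {status['status']}")
        sleep(poll_seconds)
        waited += poll_seconds
```

For example, `job_id = submit_job(url, token, "flowers", "flowers-demo")` followed by `wait_for_job(lambda: http_get_status(url, token, job_id))` would block until the job reports "Succeeded" (or another final state). Passing the status function in as a parameter keeps the polling logic independent of the HTTP details.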

We get a response like this:

    {
      "processedTime": "2018-02-15T08:54:31.541131",
      "status": {
        "startTime": null,
        "submissionTime": null,
        "id": "flowers-2018-02-15t0851z",
        "finishTime": null,
        "status": "Pending/Scheduled"
      }
    }

A few minutes later, the job is running:

    {
      "processedTime": "2018-02-15T08:57:34.844304",
      "status": {
        "startTime": "2018-02-15T08:57:33Z",
        "submissionTime": "2018-02-15T08:55:36Z",
        "id": "flowers-2018-02-15t0851z",
        "finishTime": null,
        "status": "Running"
      }
    }

And finally, you can see it took a while:

    {
      "processedTime": "2018-02-15T09:03:15.181445",
      "status": {
        "submissionTime": "2018-02-15T08:55:36Z",
        "id": "flowers-2018-02-15t0851z",
        "startTime": "2018-02-15T08:57:33Z",
        "finishTime": "2018-02-15T09:02:32Z",
        "status": "Succeeded"
      }
    }

Let's check the logs

Before we start with the final deployment, let's take a short look at our AWS S3 file system.

There we can now see some additional folders:

    mc.exe ls saps3/data/
    [2018-02-15 10:04:34 CET] 0B flowers-2018-02-15t0851z\
    [2018-02-15 10:04:34 CET] 0B flowers\
    [2018-02-15 10:04:34 CET] 0B jobs\

Now let's display the content of our "job id" folder:

    mc.exe ls -r saps3/data/flowers-2018-02-15t0851z
    [2018-02-15 10:02:31 CET] 12KiB retraining.log

Taking a deeper look at the log file gives us the details of the retraining:

    mc.exe cat saps3/data/flowers-2018-02-15t0851z/retraining.log

    Scanning dataset flowers ...
    Dataset used: flowers
    Dataset has labels: ['roses', 'sunflowers', 'tulips']
    2228 images are used for training
    180 images are used for validation
    200 images are used for test
    ********** Summary for epoch: 0 **********
    2018-02-15 09:00:08: Step 0: Train accuracy = 87.5%%
    2018-02-15 09:00:08: Step 0: Cross entropy = 0.451392
    2018-02-15 09:00:09: Step 0: Validation accuracy = 86.1%% (N=180)
    2018-02-15 09:00:09: Step 0: Validation cross entropy = 0.437444
    Saving intermediate result.
    ********** Summary for epoch: 1 **********
    2018-02-15 09:00:13: Step 1: Train accuracy = 93.8%%
    2018-02-15 09:00:13: Step 1: Cross entropy = 0.291782
    2018-02-15 09:00:13: Step 1: Validation accuracy = 92.2%% (N=180)
    2018-02-15 09:00:13: Step 1: Validation cross entropy = 0.320360
    Saving intermediate result.
    .....
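Rather than scrolling through the whole file, the per-epoch validation accuracy can be extracted from `retraining.log` with a regular expression. This is a minimal Python sketch, assuming the line format shown above (including the doubled `%%` the log prints); the function names are hypothetical.

```python
import re

# Matches e.g. "2018-02-15 09:00:09: Step 0: Validation accuracy = 86.1%% (N=180)".
# "%+" accepts the doubled percent sign that appears in the log.
VALIDATION_ACCURACY = re.compile(r"Step (\d+): Validation accuracy = ([\d.]+)%+")


def validation_curve(log_text: str) -> dict:
    """Return {epoch step: validation accuracy in percent} parsed from a retraining.log."""
    return {int(step): float(acc) for step, acc in VALIDATION_ACCURACY.findall(log_text)}


def best_epoch(curve: dict) -> tuple:
    """Return (step, accuracy) with the highest accuracy; the earliest step wins on ties."""
    step = max(sorted(curve), key=lambda s: curve[s])
    return step, curve[step]
```

To use it, save the log locally first, for example with `mc.exe cat saps3/data/flowers-2018-02-15t0851z/retraining.log > retraining.log`, and pass the file content to `validation_curve`. The "Train accuracy" and "Validation cross entropy" lines are deliberately not matched.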

At the end of this file we get the "Summary" of our training:

    ##########################################
    ########### Retraining Summary ###########
    ##########################################
    Job id: flowers-2018-02-15t0851z
    Training batch size : 64
    Learning rate : 0.001000
    Total retraining epochs : 100
    Retraining is stopped after 10 consecutive epochs which show no improvement in accurracy.
    Epoch with best accuracy : 27
    Best validation accuracy : 1.000000
    Final test accuracy is : 0.985000
    The exported model will predict top 3 classifications
    Retraining started at: 2018-02-15 08:57:34
    Retraining ended at: 2018-02-15 09:01:59
    Restoring parameters from /home/model/interval-model-27
    No assets to save.
    No assets to write.
    SavedModel written to: /home/model/tfs/saved_model.pb
    TF Serving model saved.
    Retraining lasted: 0:04:25.357850
    Model is uploaded to repository with name flowers-demo and version 3.

A short explanation of the terms "epoch" and "batch size" can be found here (see "Epoch vs Batch Size vs Iterations" under Helpful Links):

Epoch: One epoch is when the ENTIRE dataset is passed forward and backward through the neural network only ONCE.

Batch size: The total number of training examples present in a single batch.
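To relate the two terms to this run: the log reports 2228 training images and a batch size of 64, so one epoch takes ceil(2228 / 64) = 35 iterations (batches). The small Python check below assumes that the last, partial batch (2228 - 34 × 64 = 52 images) is still processed rather than dropped; the log does not state this explicitly.

```python
import math


def iterations_per_epoch(num_examples: int, batch_size: int) -> int:
    """Number of batches needed to pass every training example through the network once."""
    # ceil: a final, smaller batch still counts as one iteration (assumption: it is not dropped)
    return math.ceil(num_examples / batch_size)


def last_batch_size(num_examples: int, batch_size: int) -> int:
    """Size of the final batch of an epoch."""
    remainder = num_examples % batch_size
    return remainder if remainder else batch_size


# Values from the retraining.log of this run: 2228 training images, batch size 64
print(iterations_per_epoch(2228, 64))  # → 35
print(last_batch_size(2228, 64))       # → 52
```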

In the next blog, we will continue the retraining by deploying the model and finally testing and executing our "new" model by adapting the standard "Image Classifier" API call.

cheers,

fabian

Helpful Links

SAP Leonardo ML Foundation: https://help.sap.com/viewer/product/SAP_LEONARDO_MACHINE_LEARNING_FOUNDATION/1.0/en-US

Tensorflow flowers dataset: http://download.tensorflow.org/example_images/flower_photos.tgz

Minio Client: https://docs.minio.io/docs/minio-client-quickstart-guide

Tensorflow: https://www.tensorflow.org

Image net: http://image-net.org

Fatkun Batch Download Image: https://chrome.google.com/webstore/detail/fatkun-batch-download-ima/nnjjahlikiabnchcpehcpkdeckfgnohf...

Epoch vs Batch Size vs Iterations: https://towardsdatascience.com/epoch-vs-iterations-vs-batch-size-4dfb9c7ce9c9
