- Your face is the key to unlocking multichannel ret...

Not a lot of people know this, but one of the most powerful and complicated features we possess is face detection. Deep in the back of our brain is a gyrus, or area, which is responsible for general recognition and recall. Located in this gyrus is the Fusiform Face Area (FFA), which specifically takes care of facial recognition.

Within milliseconds our FFAs can perceive gender, age and emotion while looking up any memories related to the face’s owner. They are just as fast at detecting familiar faces in a crowd. Look back at yearbooks and class photos and you’ll locate yourself almost immediately. Merely glancing at the faces around you will bring back old memories. We barely have to think about it.

The powers of the FFA predate language, fire and even sliced bread. Now, courtesy of a revolution which turned the tables in 2014, computers are getting them too.

Which is rather big news because in the digital realm we have been approximating identification for as long as computers have been around. Names, logins, membership numbers and session-cookies are all used to represent specific individuals. In many cases they work well as a proxy — but they leave huge gaps when the limit is reached.

The retail chasm

In the Innovation Lab we’ve been exploring those particularly poignant gaps in retail. There is a wide chasm between online commerce and in-store retail. Any cross-commerce manager will tell you of the deep insights they can browse for days about their online store using Google Analytics compared to the hordes of silent, nearly invisible zombies who are shuffling through their retail locations.

Much of this issue lies with the absence of a strong proxy for retail visitors. We all admit to selling our profile-souls when we sign up for a few-percent discount loyalty program. L2 calculates, by the way, that 86% of brand loyalty programs lack any rewards for profile signup.

Fundamentally, such programs only work to bring loyal (i.e. engaged and returning) people back to the store. They only provide the juicy insights right at the end of the purchasing-decision process, when transactions are made. The key question still remains: what are people doing between reading a newsletter and approaching the checkout? Right now, beyond a couple of doorway counters and some talented shop assistants, the in-store purchase funnel is drenched in fog.

So, back to the FFA.

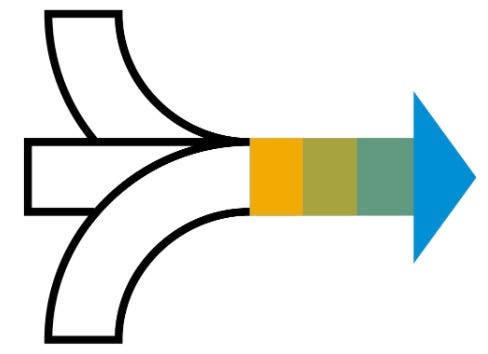

Integrate face-detection software with fixed cameras or smart glasses and we have the potential to start recognising individual customers. This unlocks four layers of capability with increasing levels of what we, slightly tongue-in-cheek, like to call ‘commerce omnipotency’.

Personalized experiences. Omnipotency level: Hive Mind.

If a customer has registered their profile along with a headshot they can be recognized in real-time using face detection. Connect a pair of smart glasses to a customer database and now every shop assistant can be armed with a hive-mind of shopper insights in the corner of their eye.

Recent purchases, specific preferences, open complaints and waitlisted items can all be flashed up on the screen to be used as conversation cues and meaningful interactions.
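As a rough illustration of the lookup behind this, the sketch below matches a face against registered profiles and returns the conversation cues for the best match. It assumes some face model has already turned each headshot into a numeric embedding; the embeddings, the `recognize` helper, the customer records and the 0.9 threshold are all hypothetical and are not taken from our product.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def recognize(embedding, registered, threshold=0.9):
    """Return the cues of the best-matching registered customer, or None.

    `threshold` is an illustrative value; a real system would tune it.
    """
    best_id, best_score = None, threshold
    for customer_id, profile in registered.items():
        score = cosine_similarity(embedding, profile["embedding"])
        if score >= best_score:
            best_id, best_score = customer_id, score
    return registered[best_id]["cues"] if best_id is not None else None

# Hypothetical registered customers with toy 3-dimensional embeddings.
REGISTERED = {
    "c-001": {
        "embedding": [1.0, 0.0, 0.0],
        "cues": {"recent_purchase": "running shoes", "open_complaints": 1},
    },
    "c-002": {
        "embedding": [0.0, 1.0, 0.0],
        "cues": {"recent_purchase": "rain jacket", "open_complaints": 0},
    },
}

match = recognize([0.9, 0.1, 0.0], REGISTERED)
print(match)
```

A face that is not close enough to any registered profile returns `None`, which matters here: only customers who registered a headshot should ever be recognised.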

That image above is from a product we built here in the Innovation Lab codenamed ‘Total Customer Recall’. It gives at-a-glance access to key customer metrics. It feels a little sci-fi right now, but our position is that smart eyewear has started to emerge from a cumbersome and underpowered ugly-duckling phase. It will very soon metamorphose into unobtrusive and natural eyewear which has significant applications.

Detect sessions. Omnipotency level: All-Seeing Eye.

Using a sequence of entryway, in-store and exit cameras, customers can be detected as soon as they step inside. Forget recognizing them as explicitly known customers and instead track them as sessions which can be used to build segmentation and sales-cycle analytics.

We’re quite used to defining online sales funnels with conversion paths which start with page views and proceed to add-to-carts and checkouts. The power here is to bring this in-store by using the face like a session cookie.

Sure, there are valid concerns about intrusion and privacy regulations, but follow the rules (such as full disclosure, opt-in and appropriately handled personal data storage) and the result will be a transparent system which only succeeds if it provides value to the individuals concerned.

Imagine a sequence of retail stages such as (i) glancing into a shop window, (ii) walking inside, (iii) spending longer than a minute, (iv) walking to different areas, (v) looking at particular product displays and then (vi) proceeding to checkout. Now capture exit rates for each stage and the funnel is rich with information which can be layered up with segmentation about age, gender, sentiment and returning behaviours. The result is an analytics suite for bricks and mortar. We built an iOS app recently (shown above) which connects to three remote cameras and builds naturally relevant store insights.
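To make the exit-rate idea concrete, here is a minimal sketch that takes the furthest stage each anonymous session reached and computes how many sessions dropped out at each step. It assumes a strictly linear funnel (reaching a stage implies passing through all earlier ones); the stage names and sample numbers are invented for illustration and are not output from our app.

```python
# Stage names are illustrative labels for stages (i) to (vi) in the text.
STAGES = [
    "window_glance",
    "entered",
    "stayed_over_1_min",
    "moved_between_areas",
    "viewed_display",
    "checkout",
]

def funnel_exit_rates(furthest_stages):
    """Return (reached, exit_rates) for a list of furthest-stage names.

    reached[i]  = number of sessions that got at least as far as STAGES[i]
    exit_rates  = share of sessions at each stage that did not reach the next
    """
    reached = [0] * len(STAGES)
    for stage in furthest_stages:
        last = STAGES.index(stage)
        for i in range(last + 1):
            reached[i] += 1

    exit_rates = {}
    for i in range(len(STAGES) - 1):
        if reached[i] == 0:
            exit_rates[STAGES[i]] = 0.0
        else:
            exit_rates[STAGES[i]] = 1 - reached[i + 1] / reached[i]
    return reached, exit_rates

# Ten made-up sessions.
sessions = (
    ["window_glance"] * 4
    + ["entered"] * 2
    + ["viewed_display"] * 2
    + ["checkout"] * 2
)
reached, exit_rates = funnel_exit_rates(sessions)
for stage, rate in exit_rates.items():
    print(f"{stage}: {rate:.0%} exit")
```

Segmenting by age, gender or sentiment would amount to running the same calculation on a filtered list of sessions.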

Detect individuals. Omnipotency level: Thought Inception.

Here is where things become very juicy. We’ve detected customer visits and personalized the experience for known customers. Now comes the potential to combine them into untapped realms of engagement.

Take a bookstore. It is not out of the ordinary to suggest information about known customers can be extrapolated to new visitors. For example, if 90% of profiled women who visit a given bookstore on Tuesday afternoons between 2–4pm are below the age of 20 and buy books on the topic of Classic History, then I have some confidence about the next visitor of a similar profile. Using screens or other forms of creative audio-visual content we can make very personalised recommendations. Back in 2013, McKinsey calculated that Amazon’s product recommendations were worth 35% of total eCommerce revenue.

Together with our SMB retail point-of-sale team we built a prototype in the Lab recently. On the screen is a carousel of books, and whenever anybody steps in front of the camera the rotation changes slightly to show a selection based on detectable features: gender, age and sentiment cues. The results are uncannily relevant despite our rudimentary algorithm, which includes assumptions like “people in their 30s like self-help books”.
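A rule-based selection of this kind can be sketched in a few lines. This is not the prototype’s code: only the “30s like self-help” rule comes from the text, while the other rules, the category names and the `carousel_order` helper are hypothetical placeholders.

```python
# Each rule is (predicate on detected visitor features, preferred category).
RULES = [
    (lambda v: 30 <= v["age"] < 40, "self-help"),         # assumption quoted above
    (lambda v: v["age"] < 20, "young-adult fiction"),     # hypothetical rule
    (lambda v: v["sentiment"] == "happy", "travel"),      # hypothetical rule
]
DEFAULT_CATEGORY = "bestsellers"

def carousel_order(visitor, catalogue):
    """Move books in the first matching category to the front.

    sorted() is stable, so the rest of the rotation keeps its order,
    which gives the 'changes slightly' behaviour.
    """
    preferred = next(
        (category for matches, category in RULES if matches(visitor)),
        DEFAULT_CATEGORY,
    )
    return sorted(catalogue, key=lambda book: book["category"] != preferred)

CATALOGUE = [
    {"title": "Book A", "category": "bestsellers"},
    {"title": "Book B", "category": "self-help"},
    {"title": "Book C", "category": "travel"},
    {"title": "Book D", "category": "self-help"},
]

visitor = {"age": 34, "gender": "female", "sentiment": "neutral"}
print([book["title"] for book in carousel_order(visitor, CATALOGUE)])
```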

We’re cautious about sprinkling the words ‘machine learning’ all over solutions like a magic wand, but with a tight set of measurable attributes, it’s not a significant stretch to use existing sales data to build a model which can gain confidence about new purchaser behaviours.
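Before reaching for anything heavier, the simplest such model is a frequency table: for each visitor segment, what share of past purchases fell into each category? The sketch below assumes sales history is available as (segment, category) pairs; the segment definition and the sample data are made up for illustration.

```python
from collections import Counter, defaultdict

def category_confidence(history):
    """Map each segment to {category: share of that segment's purchases}."""
    counts = defaultdict(Counter)
    for segment, category in history:
        counts[segment][category] += 1
    return {
        segment: {cat: n / sum(cats.values()) for cat, n in cats.items()}
        for segment, cats in counts.items()
    }

# Hypothetical history; a segment here is (gender, age band, time slot).
SEGMENT = ("female", "under_20", "tue_14_16")
HISTORY = [(SEGMENT, "classic history")] * 9 + [(SEGMENT, "cookery")]

model = category_confidence(HISTORY)
print(model[SEGMENT])
```

With so few attributes, a table like this is easy to inspect and to sanity-check against store staff intuition; a learned model would only be worth the effort once the number of attributes grows.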

Unify the channels. Omnipotency level: God Mode.

Enter a new world which blends together digital and physical dimensions.

By connecting profiles between online and offline sessions we can build insights and experiences which ignore the boundaries between channels. According to research by Wharton, 66% of purchases involve two or more channels. A GE Capital study on multichannel commerce found that 81% of shoppers researched their product online before making big purchases.

This effectively extends the sales funnel to the horizon in both directions. Someone may walk into the store having browsed online for inspiration and finally make the purchase over the counter. Another person might discover a product in store and then go home to research before ordering online. Both are very natural in the real world without the customer stopping to consider which channel they are using right now.
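Once online and in-store sessions share a customer identifier, stitching them into one journey is straightforward. The sketch below assumes that link already exists (which is the hard part) and that timestamps are ISO 8601 strings in a single format so that they sort correctly; the field names and events are hypothetical.

```python
from collections import defaultdict

def build_journeys(online_events, store_events):
    """Merge events from both channels into one time-ordered list per customer."""
    journeys = defaultdict(list)
    for channel, events in (("online", online_events), ("store", store_events)):
        for event in events:
            journeys[event["customer_id"]].append(
                (event["ts"], channel, event["action"])
            )
    return {cid: sorted(steps) for cid, steps in journeys.items()}

def is_multichannel(journey):
    """True if the journey touches two or more channels."""
    return len({channel for _, channel, _ in journey}) >= 2

ONLINE = [
    {"customer_id": "c-001", "ts": "2016-03-01T20:15", "action": "viewed product"},
    {"customer_id": "c-002", "ts": "2016-03-02T09:00", "action": "placed order"},
]
STORE = [
    {"customer_id": "c-001", "ts": "2016-03-03T12:30", "action": "purchased at counter"},
]

journeys = build_journeys(ONLINE, STORE)
for cid, steps in journeys.items():
    print(cid, is_multichannel(steps), steps)
```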

Knowing what we do about the fickleness of customer loyalty, if retailers are not alongside and keeping in touch when these transitions occur, the chance of disconnection is high.

Regardless of the level of omnipotence, face recognition has huge prospects for making commerce more natural, more effective and essentially more profitable. It is only just becoming a possibility thanks to the digital version of something we all take for granted — the lowly FFA.

Connect with Drew Bates at www.linkedin.com/in/drew-bates/

About the SAP SMB Innovation Lab

As part of SAP’s SMB Group based in Shanghai, China, we mold the hottest technology trends into inspiring innovations. We act as an internal startup poised on the shoulders of a world market leader to get our hands dirty in defining and creating new ways of getting business done.

SAP Managed Tags: Machine Learning, Digital Technologies, Retail