Part 7: SAP Cloud Platform Architecture Library

Technology Blogs by SAP



09-22-2017 1:17 PM

This is part 7 of my blog series about cloud architecture on SAP Cloud Platform. You can find the overview page here.

An architect should be able to reuse patterns and best practices, whether he or she created them or adopted them from others who have gained experience and shared it. Such a body of knowledge can be referred to as an architecture repository or architecture library. Common patterns help establish standardization and greatly reduce time to production, as the architect can rely on proven practices instead of evaluating several approaches before finding a working solution.

I believe that both beginners and professionals in SAP CP projects can profit from such guidance and can validate their own architectures against the suggested ones.

As you gain more experience as an architect in actual SAP CP projects, I encourage you to start creating your own architecture libraries and to share them with others. As it is good practice to lead by example, I will share my first release of architectures for SAP CP (tested only with the Neo environment of SAP CP) that I have worked with in the past and that I frequently recommend. I will start VERY trivial and then build up the complexity, so there is something easy to consume for everyone.

But be careful: all the suggestions are my personal recommendations and NOT SAP's "official references". There might also be situations in which the scenarios do not work for your specific project, maybe because of data security policies in your company or the lack of certain components. Still, I created this collection of architectures as carefully as possible, meaning that I have practical experience with all of these scenarios from the projects I support as an architect. You won't find a single suggestion from me that I only know by "word-of-mouth recommendation" or "read about it in the manual", or that I wouldn't recommend to my own customers. For official reference architectures, consider looking at the Blueprints that are currently released by my colleagues from development: https://www.sap.com/developer/blueprints.html

I think it would be great if we could all start sharing our scenarios and discuss them. Hopefully my suggestions help you in your projects, whether you are at the very start of the journey and have to understand the SAP Cloud Connector or whether you are advanced and have to deal with Principal Propagation, federated identity providers, and hybrid application landscapes.

I will present the following architectures in this blog:

So let's get started with the individual descriptions:

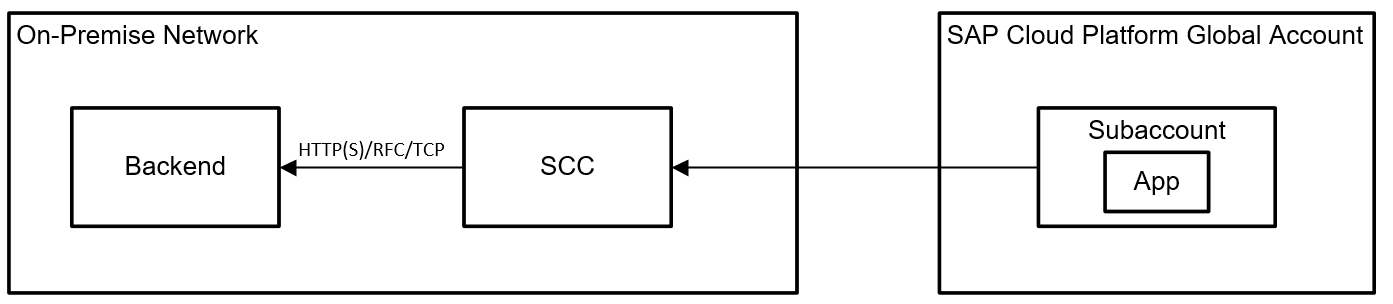

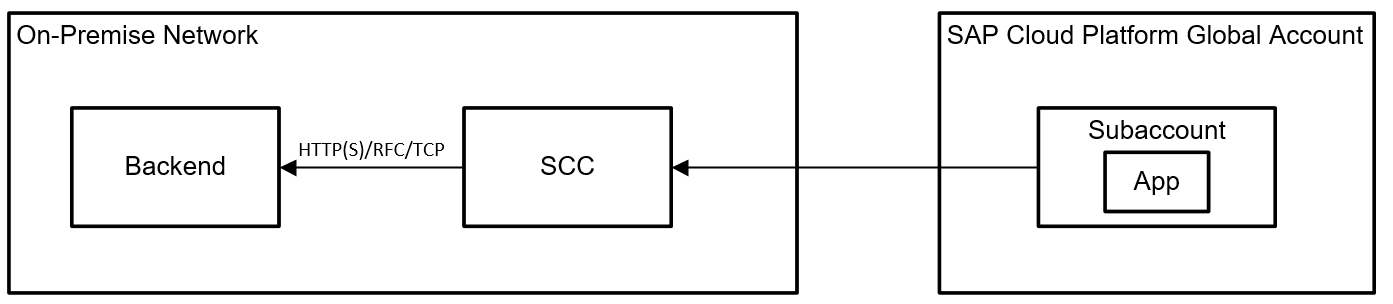

1) Cloud-2-On-Premise Integration via SAP Cloud Connector

Ok, so this is for complete beginners: if you want to expose on-premise resources to applications in SAP CP, you should do so by using the SAP Cloud Connector (SCC). The SCC is installed in your on-premise network and has direct network connectivity to your backend system. The SCC is then configured to open a tunnel to an individual subaccount of your Global Account (I explain the difference in my blog series https://blogs.sap.com/2016/10/21/part-1-understanding-hcp-global-accounts-sub-accounts-concept-means...). You then add the resources you want to expose to the SCC configuration (you can expose HTTP(S), RFC, and, since SCC 2.10.0.1, also TCP connections to the cloud). The app(s) in the subaccount then use Destinations (which are configured in the subaccount) to access the backend systems. You can also configure destinations that point to resources on the public internet; in this case, you do not need the SCC. Attention: access to the SCC is the most critical thing in your landscape. Control it tightly, as the SCC is the component that exposes resources to the outside world. Of course, a single SCC can expose multiple backends to your cloud.
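To make the routing decision more tangible, here is a minimal Python sketch that models it. It is purely illustrative and assumes a simplified world: the `ProxyType` values `OnPremise` and `Internet` mirror the proxy types of Neo destinations as I know them, while the `CloudConnector` class, its `expose` method, and all host and subaccount names are hypothetical stand-ins for what you configure in the SCC administration UI.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class CloudConnector:
    """Hypothetical model of an SCC: which virtual hosts it exposes to which subaccount."""
    name: str
    # subaccount -> set of "virtualhost:port" entries exposed to that subaccount
    exposed: dict[str, set[str]] = field(default_factory=dict)

    def expose(self, subaccount: str, virtual_host: str) -> None:
        self.exposed.setdefault(subaccount, set()).add(virtual_host)

    def is_exposed(self, subaccount: str, virtual_host: str) -> bool:
        return virtual_host in self.exposed.get(subaccount, set())


def route(destination: dict[str, str], subaccount: str, scc: CloudConnector) -> str:
    """Decide how a call through a destination would travel (illustration only)."""
    if destination["ProxyType"] == "Internet":
        # Public internet target: the SCC is not involved at all.
        return "direct"
    # OnPremise target: the host part of the URL must be exposed by the SCC
    # for exactly this subaccount, otherwise the call is rejected.
    host = destination["URL"].split("://", 1)[1].split("/", 1)[0]
    if scc.is_exposed(subaccount, host):
        return f"via {scc.name}"
    raise PermissionError(f"{host} is not exposed to {subaccount} by {scc.name}")


scc = CloudConnector("scc-01")                      # hypothetical SCC name
scc.expose("subaccount-a", "erp-virtual:443")       # hypothetical virtual host
print(route({"ProxyType": "OnPremise", "URL": "https://erp-virtual:443/sap/opu/odata"},
            "subaccount-a", scc))  # via scc-01
print(route({"ProxyType": "Internet", "URL": "https://api.example.com/v1"},
            "subaccount-a", scc))  # direct
```

The point of the model is the asymmetry: internet destinations never touch the SCC, while on-premise destinations only work if the SCC exposes that exact host to that exact subaccount.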

2) Cloud-2-On-Premise Integration via SCC, complete separation using subaccounts and a single SCC

In case you have multiple unrelated projects, you should separate them into different subaccounts (cf. https://blogs.sap.com/2016/10/21/part-1-understanding-hcp-global-accounts-sub-accounts-concept-means...). Still, you can share one SAP Cloud Connector among multiple subaccounts and multiple backends without them influencing each other, because almost all configurations in the SCC are maintained per subaccount. I tried to indicate this with the colors in the following picture. Backend A is exposed only to the subaccount hosting App A, while Backend B is only available to Apps B and C in a separate subaccount. So you do not need an individual SCC for every project. As SCCs should be installed on separate machines (do not install them on top of existing backend systems!), one SCC per project would otherwise increase the number of on-premise machines as your cloud landscape grows. By the way: even in an agile DevOps environment, I would not let developers from projects A and B configure those central SCCs! The SCC should be configured by a third party (e.g. your IT admins) who pay attention to all projects and make sure that no one influences configurations for subaccounts that do not belong to them.
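Because almost everything in the SCC is configured per subaccount, the isolation between projects can be expressed as a simple lookup. The sketch below is a hypothetical model, not an SCC API: `scc_config` stands for the per-subaccount resource lists an admin maintains, and `shared_backends` is the kind of check I would run when reviewing a shared SCC, to spot backends that are (deliberately or accidentally) exposed to more than one subaccount. All app, subaccount, and backend names are made up.

```python
from __future__ import annotations

from collections import Counter


def reachable_backends(app_subaccount: dict[str, str],
                       scc_config: dict[str, set[str]]) -> dict[str, set[str]]:
    """For each app, the backends that the shared SCC exposes to the app's subaccount."""
    return {app: set(scc_config.get(sub, set())) for app, sub in app_subaccount.items()}


def shared_backends(scc_config: dict[str, set[str]]) -> set[str]:
    """Backends exposed to more than one subaccount; worth a second look in a review."""
    counts = Counter(backend for backends in scc_config.values() for backend in backends)
    return {backend for backend, n in counts.items() if n > 1}


# Hypothetical landscape matching the picture: App A alone, Apps B and C together.
apps = {"App A": "subaccount-1", "App B": "subaccount-2", "App C": "subaccount-2"}
config = {"subaccount-1": {"Backend A"}, "subaccount-2": {"Backend B"}}
print(reachable_backends(apps, config))
print(shared_backends(config))  # set()
```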

3) On-Premise-2-Cloud Integration via Service Channels and the SCC

To access HANA databases that are hosted in SAP CP from your on-premise network, you should use the SCC as well. In the SCC, you configure a "Service Channel" to an individual database of a subaccount. The SCC then opens a port on its internal network interface. You can connect to this port from your internal network (e.g. from a backend system) with a JDBC/ODBC client and access the database. I have used this integration in the following scenarios:
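From the on-premise client's point of view, a Service Channel simply looks like a local HANA instance on the SCC host. The following sketch builds the JDBC URL such a client would use. Two assumptions you should verify against the SCC documentation for your version: that the Service Channel is addressed via a local instance number NN, and that the port then follows the usual HANA SQL port pattern 3NN15. The host name is hypothetical; `jdbc:sap://` is the standard HANA JDBC URL prefix.

```python
def service_channel_jdbc_url(scc_host: str, local_instance_number: int) -> str:
    """JDBC URL for reaching a cloud HANA database through an SCC Service Channel.

    Assumes the Service Channel listens on the HANA SQL port pattern 3<NN>15,
    where NN is the local instance number configured for the channel.
    """
    if not 0 <= local_instance_number <= 99:
        raise ValueError("local instance number must be between 00 and 99")
    port = 30015 + 100 * local_instance_number  # e.g. NN=01 -> 30115
    return f"jdbc:sap://{scc_host}:{port}"


# "scc.corp.example" is a hypothetical SCC host name in the internal network.
print(service_channel_jdbc_url("scc.corp.example", 1))  # jdbc:sap://scc.corp.example:30115
```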

4) SAP Cloud Connector Setup for staged application development

As explained in my other blogs (https://blogs.sap.com/2016/10/21/part-1-understanding-hcp-global-accounts-sub-accounts-concept-means...), you should use different subaccounts for staged application development. This way, you can achieve separate stages for development, QA, and production, comparable to the well-known paradigm that has been used for on-premise systems for as long as I can remember. But what does this mean for your SCC? At a minimum, you should distinguish between non-productive and productive Cloud Connectors, so that non-productive subaccounts never share an SCC with productive ones.

Your landscape will therefore look like the following picture. Keep in mind that there might be multiple subaccounts per stage and also many more backends attached to the SCCs. But in theory, this is what you should aim for:
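The rule "never let one SCC serve productive and non-productive subaccounts" is easy to check automatically if you keep an inventory of your landscape. Here is a minimal sketch, assuming a hypothetical inventory made of (SCC, subaccount) pairs and a stage label per subaccount; all names are invented for the example.

```python
from __future__ import annotations


def mixed_stage_connectors(connections: list[tuple[str, str]],
                           stage_of: dict[str, str]) -> list[str]:
    """Return SCCs that serve both productive and non-productive subaccounts."""
    kinds_per_scc: dict[str, set[bool]] = {}
    for scc, subaccount in connections:
        is_prod = stage_of[subaccount] == "prod"
        kinds_per_scc.setdefault(scc, set()).add(is_prod)
    # An SCC that has seen both True and False mixes productive and non-productive stages.
    return sorted(scc for scc, kinds in kinds_per_scc.items() if len(kinds) > 1)


# Hypothetical inventory: dev and QA share the non-prod SCC, prod has its own.
stages = {"dev-sub": "dev", "qa-sub": "qa", "prod-sub": "prod"}
landscape = [("scc-nonprod", "dev-sub"), ("scc-nonprod", "qa-sub"), ("scc-prod", "prod-sub")]
print(mixed_stage_connectors(landscape, stages))  # []
print(mixed_stage_connectors(landscape + [("scc-nonprod", "prod-sub")], stages))  # ['scc-nonprod']
```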

5) Integration to different, non-connected, on-premise networks

In the "early days", a subaccount could only be connected to a single SCC. Since version 2.9, an SCC can be configured with a location ID. In the destinations of your subaccounts, you can then point to different locations. This is useful when you want to connect to on-premise networks that are not interconnected themselves. Of course, here too you should have separate SCCs for the different stages in every network, if applicable.
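Conceptually, the location ID turns "the SCC of this subaccount" into a small lookup table. The sketch below models that lookup. `CloudConnectorLocationId` is the Neo destination property I know for this purpose (please verify the exact name in the destination documentation), while `pick_connector` and the SCC names are hypothetical. An empty location ID stands for an SCC registered without a location ID.

```python
from __future__ import annotations


def pick_connector(destination: dict[str, str], registered: dict[str, str]) -> str:
    """Select the SCC for a destination (illustrative model, not a platform API).

    `registered` maps location IDs to the SCCs connected to one subaccount;
    the empty string stands for the SCC connected without a location ID.
    """
    location = destination.get("CloudConnectorLocationId", "")
    try:
        return registered[location]
    except KeyError:
        raise LookupError(f"no Cloud Connector with location ID {location!r}") from None


# Hypothetical: headquarters network without location ID, a plant network with one.
sccs = {"": "scc-headquarters", "PLANT_1": "scc-plant-1"}
print(pick_connector({"Name": "erp_hq"}, sccs))  # scc-headquarters
print(pick_connector({"Name": "mes_plant", "CloudConnectorLocationId": "PLANT_1"}, sccs))  # scc-plant-1
```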

6) Using SAP CP in multiple geographies

A global account is always bound to a data center within a region; there are no global accounts that span regions. So let's say you have a global customer with networks in two geographies, e.g. Europe and the US. Some applications might be for the US only, while others must keep all cloud data within the European region for data security and compliance reasons. For performance reasons (latency) or because of organizational structures, you also might want to spread your applications across regions. For those requirements, you will need separate Global Accounts in different regions. Still, you might want to integrate on-premise data across regions into both Global Accounts and their respective subaccounts. The following architecture depicts such a setup. You can install SCCs in the different regional on-premise networks and connect them to subaccounts in multiple Global Accounts of SAP CP as needed. The location IDs make sure that you always address the correct network. Keep in mind that you also need multiple SCCs for the different stages.

7) Using Smart Data Integration (SDI) for data integration scenarios

When you want to integrate data with replication or virtualized access, Smart Data Integration is one option from SAP's large EIM portfolio. SDI can only be used for integrations that involve HANA as a target or a source. SDI is available in SAP CP as well: you can deploy the DP Server on your HANA (via HALM) and activate it in the HANA configuration. Then, you'll also need an on-premise component. In the SDI use case, this is not the SCC but the SDI Data Provisioning Agent (DPA). The DPA is installed on-premise and, like the SCC, has direct network connectivity to the resource you want to integrate. I have also seen DPAs installed on the same machine as SCCs, which can make sense for your scenario as well (although, when I last checked, the official recommendation was to keep the SCC on a separate machine). The DPA then connects to the HANA database in the cloud via an encrypted connection. For the connection to the resource, adapters that match the type of resource you want to integrate need to be deployed on the DPA. You can also develop your own adapters if nothing appropriate is available in the standard. For available adapters, check the respective PAM. There is already a large number of adapters, even including integrations with Hadoop to incorporate your Big Data scenarios (although you should look at Vora or the SAP Big Data Services as well!).

8) Using Smart Data Access (SDA) for virtualized access to SAP CP HANA tables

The Service Channels I described for the third architecture can also be used to realize data virtualization scenarios with Smart Data Access (SDA). SDA can be used to include tables from a cloud-based HANA in an on-premise HANA. Attention: the reverse is not possible! So you can "enrich" your on-premise databases with virtual tables but not the other way around, which means this scenario cannot be used if you want to access on-premise data in the cloud. Using SDA is very useful if, for example, you already have large on-premise data warehouse environments built with SDA and want to include data from cloud-based HANA databases as well. Technically, this is realized via a Service Channel (JDBC/ODBC protocol) and the SCC, as depicted in the following picture.

9) Using the SAP Cloud Platform Portal Service with an Embedded Gateway (External Access Point Scenario)

In this scenario, the backend system exposes its OData services via an Embedded Gateway and the SCC to SAP CP. Within the cloud subaccount, the Portal Service can host multiple sites, for example, Fiori Launchpad sites. The apps within the Fiori Launchpad(s) access the OData services via destinations in the subaccount. I would recommend this setup in case a customer does not have an existing Gateway Hub deployment on-premise and also does not need a central on-premise Gateway to combine services from multiple backend systems. I would insist on using Principal Propagation as the authentication method for the destination towards the backend system, because otherwise you cannot guarantee that a user of the cloud apps sees exactly the data in the cloud that he or she would be allowed to see on-premise (e.g. via SAP GUI). I will describe principal propagation in another architecture. Also, notice that I am depicting an external access point scenario here. For the difference between external and internal access points, see the documentation (https://help.sap.com/viewer/596eae6331ac4637af13a327a1e340a5/2.0%202017-07/en-US/47565c1f67d5466cba9...) and this blog (https://blogs.sap.com/2017/07/06/fiori-cloud-and-supported-landscape-scenarios/). As the external access point scenario makes mobile usage of apps much easier and does not need additional reverse proxies in the on-premise network, I recommend this scenario to my customers first. Only if customers insist on keeping all of their business data network traffic on-premise, or if latency does not allow otherwise (which I haven't seen yet for well-designed Fiori apps, as bottlenecks are typically somewhere else), would I choose an internal access point scenario.
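Since I insist on Principal Propagation towards the backend, it helps to audit the destinations of a subaccount for it. The sketch assumes the destinations have been exported as simple name/value dictionaries. `Authentication`, `ProxyType`, and the values `PrincipalPropagation`, `BasicAuthentication`, `NoAuthentication`, and `OnPremise` follow the Neo destination properties as I know them; the destination names are hypothetical.

```python
from __future__ import annotations


def missing_principal_propagation(destinations: list[dict[str, str]]) -> list[str]:
    """Names of on-premise destinations that do not use Principal Propagation."""
    return sorted(
        d["Name"]
        for d in destinations
        if d.get("ProxyType") == "OnPremise" and d.get("Authentication") != "PrincipalPropagation"
    )


# Hypothetical destinations of one subaccount.
subaccount_destinations = [
    {"Name": "ERP_ODATA", "ProxyType": "OnPremise", "Authentication": "PrincipalPropagation"},
    {"Name": "ERP_TEST", "ProxyType": "OnPremise", "Authentication": "BasicAuthentication"},
    {"Name": "WEATHER_API", "ProxyType": "Internet", "Authentication": "NoAuthentication"},
]
print(missing_principal_propagation(subaccount_destinations))  # ['ERP_TEST']
```

Internet destinations are deliberately ignored here, because the concern is bypassing backend permissions, which only applies to on-premise systems.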

10) Using the SAP Cloud Platform Portal Service with a Gateway Hub (External Access Point Scenario)

In comparison to the previous setup, the SAP Gateway is used in a Hub deployment in this case. As before, the Gateway exposes the OData services to the SCC and therefore to SAP CP. All other comments from the previous scenario about Principal Propagation and internal vs. external access point scenarios remain valid here. The following key factors determine whether this architecture suits your situation:

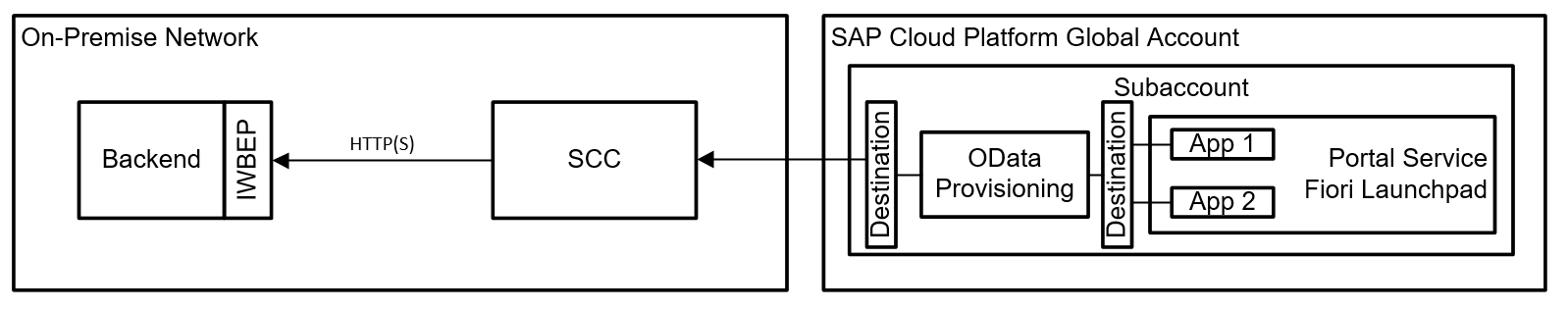

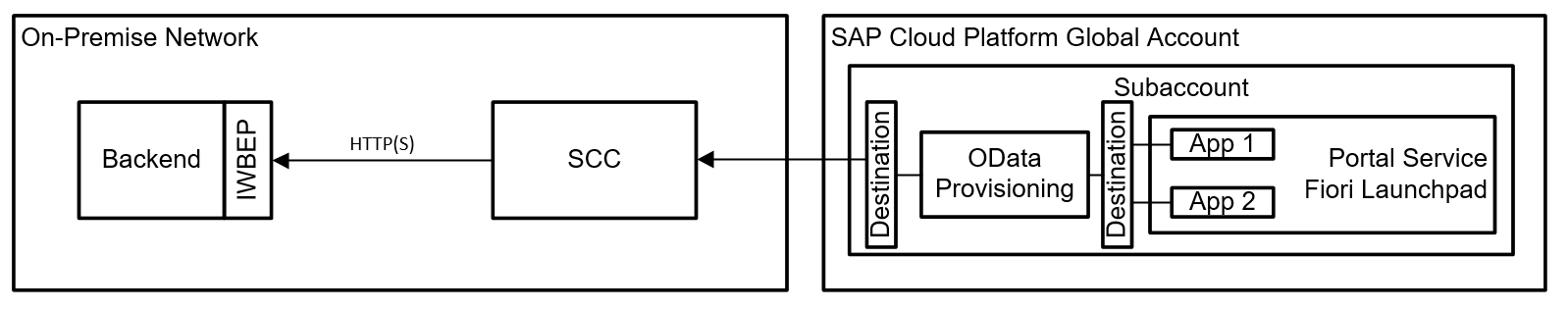

11) Using the SAP Cloud Platform Portal Service with the OData Provisioning Service

In case there is no on-premise Gateway available or it cannot be used for other reasons, the OData Provisioning Service can take over the task of exposing backend services as OData services to cloud applications. The setup is fairly straightforward and requires the IWBEP service to be activated on the backend system. A good quick start guide can be found here (https://help.sap.com/doc/301058c7809f45b68418dc1b124cc9d3/SAP%20Fiori%20Cloud/en-US/SFC_ImplQuickGui...). In SAP CP, it's important to structure the destinations properly. On subaccount configuration level, you specify the destination that the apps use to access the OData Provisioning Service. In the configuration of the OData Provisioning Service, you configure the destination that points to your backend system. Keep in mind that you should definitely use Principal Propagation as the authentication mechanism for the backend destination so that you do not bypass the backend permissions (of course, you can start configuring the scenario with Basic Authentication just to make sure it works before switching to Principal Propagation). The destination between the apps and the OData Provisioning Service will use App2AppSSO as the authentication mechanism.
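The two-level destination structure is where configuration mistakes are easy to make, so here is a sketch of the checks I would apply to it. It assumes destinations given as name/value dictionaries. The authentication value `AppToAppSSO` is how I know App2AppSSO to appear in Neo destination settings, which you should verify; the function and destination names are hypothetical.

```python
from __future__ import annotations


def check_odata_provisioning_chain(app_to_odp: dict[str, str],
                                   odp_to_backend: dict[str, str]) -> list[str]:
    """Return findings for the app -> OData Provisioning -> backend destinations."""
    findings = []
    # Hop 1: apps call the OData Provisioning Service with App2AppSSO.
    if app_to_odp.get("Authentication") != "AppToAppSSO":
        findings.append(f"{app_to_odp['Name']}: use AppToAppSSO towards OData Provisioning")
    # Hop 2: OData Provisioning reaches the backend through the Cloud Connector.
    if odp_to_backend.get("ProxyType") != "OnPremise":
        findings.append(f"{odp_to_backend['Name']}: backend should be reached via the SCC")
    auth = odp_to_backend.get("Authentication")
    if auth == "BasicAuthentication":
        findings.append(f"{odp_to_backend['Name']}: fine for a first test, "
                        "then switch to PrincipalPropagation")
    elif auth != "PrincipalPropagation":
        findings.append(f"{odp_to_backend['Name']}: unexpected authentication {auth!r}")
    return findings


# Hypothetical destinations during the initial "does it work at all?" phase.
apps_dest = {"Name": "ODP", "Authentication": "AppToAppSSO"}
backend_dest = {"Name": "ERP", "ProxyType": "OnPremise", "Authentication": "BasicAuthentication"}
print(check_odata_provisioning_chain(apps_dest, backend_dest))
```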

12) Using the SAP Fiori Cloud Service with the Cloud Portal Service

The SAP Fiori Cloud Service allows you to subscribe to application content from SAP, either for S/4HANA or for Business Suite backends. The on-premise integration and Gateway deployment method are not influenced by the Fiori Cloud Service, so you can use all approaches that we discussed before (Embedded Gateway, Gateway Hub, OData Provisioning). By subscribing to the service and the application content, you get the predefined application descriptors, roles, catalogs, and groups for the Fiori Launchpad. The available applications are not limited to the Fiori technology. The service also includes Web Dynpro ABAP and SAP GUI for HTML apps; those classic apps still run on the backend system and are integrated securely into the cloud environment. Fiori apps from the application content are included as subscriptions in your subaccounts and can therefore be maintained and even extended in your subaccount. Another hint: once you import the content from the Fiori Cloud Service, a certain number of apps is added to your Launchpad site. Keep in mind that this is currently only a subset of all applications that could in principle be added to your Fiori Launchpad… it's just that SAP currently does not deliver all catalogs, roles, groups, and application descriptors via the Fiori Cloud Service. So you can still add 'missing' apps to your Fiori Launchpad manually if you know the parameters. To add other classic apps, see this documentation https://help.sap.com/viewer/3ca6847da92847d79b27753d690ac5d5/Cloud/en-US/d36ea2f36ae443289d9968c7e1f.... Also explore the Fiori Apps Library for a complete list of available applications. There, you can also see which apps are delivered automatically via the Fiori Cloud Service and which apps you will probably have to add manually to your Fiori Launchpad in the cloud.

13) Principal Propagation, SSO, Corporate User Store, and Permission Management

This rather complex scenario combines multiple related components to achieve a secure and consistent authentication and authorization concept in a hybrid environment. It incorporates Single Sign-On authentication for a cloud application using a corporate user, corporate user groups for permission management in the cloud, and principal propagation to authorize communication with a backend. The architecture shows one possibility for this complete picture, including all components that are needed to set up such an environment. I have used this setup successfully at customers where certain prerequisites are met; it will, however, not be applicable to every customer landscape. Basically, the customer will need an SAP Cloud Connector, a corporate user store, and a SAML identity provider that is integrated with the user store. The SAML endpoint has to be reachable from the network that is used by the users of the cloud applications: if your users access the applications via the internet, the SAML endpoint also has to be available via the internet, because the browser or mobile device is forwarded to the authentication endpoint and therefore has to be able to reach it from a network perspective. If your users only access the applications from inside the on-premise corporate network, it is also possible to use a SAML endpoint that is not available externally. You can find more details in another blog of my series https://blogs.sap.com/2016/10/21/hana-cloud-platform-thoughts-cloud-architecture/#.

Concerning authentication: in many cases, a company already has some sort of SSO mechanism in place, often also working with SAML. For example, all devices could have a certificate installed that allows certificate-based authentication with the SAML endpoint. The user accesses an application, is redirected to the identity provider, a certificate-based authentication is conducted automatically, and the user's browser returns to the application with a valid authentication ticket, allowing him or her to access the application.

Concerning authorization: SAP CP allows assigning users to groups based on SAML assertion attributes (or other attributes). So your SAML IdP will not only validate the users' identities but also return the groups that the user is a member of in the corporate user store. Once you establish a trust relationship between the SAML IdP and the application IdP configuration in SAP CP, you can use those assertion attributes to map the corporate user groups to user groups in SAP CP (those groups are maintained manually in the subaccount, as depicted in the architecture). Now you have a mapping between the corporate user groups and the cloud user groups via the mapping functions of the IdP configuration. Next, you map the cloud groups to roles. Roles in SAP CP are collections of permissions, so the mapping of groups to roles is an important mechanism for structuring authorizations. Permissions are introduced by the application developers, who define them in the source code. Once the application is deployed on SAP CP, the platform 'detects' those permissions and makes them available in the subaccount so that they can be assigned to roles.
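The chain from SAML assertion to effective permissions is essentially three lookups in a row. The following sketch models them with plain dictionaries to show how they compose. All group, role, and permission names are hypothetical, and in SAP CP the mappings live in the IdP configuration and the subaccount's authorization settings rather than in code.

```python
from __future__ import annotations

from collections.abc import Iterable


def effective_permissions(assertion_groups: Iterable[str],
                          idp_group_mapping: dict[str, list[str]],
                          group_roles: dict[str, list[str]],
                          role_permissions: dict[str, list[str]]) -> set[str]:
    """Resolve corporate groups -> cloud groups -> roles -> permissions."""
    # 1) IdP configuration: assertion attribute values map to cloud user groups.
    cloud_groups = {cg for g in assertion_groups for cg in idp_group_mapping.get(g, [])}
    # 2) Subaccount: cloud groups are assigned to roles.
    roles = {r for cg in cloud_groups for r in group_roles.get(cg, [])}
    # 3) Roles bundle the permissions that developers defined in the application.
    return {p for r in roles for p in role_permissions.get(r, [])}


# Hypothetical mappings for a user whose assertion carries only CORP_SALES.
perms = effective_permissions(
    assertion_groups=["CORP_SALES"],
    idp_group_mapping={"CORP_SALES": ["SalesUsers"], "CORP_IT": ["Admins"]},
    group_roles={"SalesUsers": ["OrderViewer"], "Admins": ["OrderAdmin"]},
    role_permissions={"OrderViewer": ["readOrders"], "OrderAdmin": ["readOrders", "deleteOrders"]},
)
print(perms)  # {'readOrders'}
```

Because every step is driven by the corporate groups in the assertion, removing a user from a corporate group is enough to remove the corresponding permissions in the cloud.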

Concerning principal propagation: in this setup, the user ID of the user accessing the cloud application and the user in the backend system are identical, which allows setting up principal propagation. Several certificate-based trust relationships are needed to achieve this: a trust relationship between the SAP Cloud Connector and the backend, a trust relationship between the SCC and the SAML IdP, and of course the trust relationship we created earlier between the SAML IdP and the CP subaccount. Once the destination towards the backend is configured to use principal propagation, the SAML ticket is forwarded to the SCC, which then issues a short-lived X.509 certificate containing the user ID of the end user. The backend then has to validate and interpret this X.509 certificate and map the user ID of the cloud user to the backend user (which is the same in our scenario, because the user base is maintained in the corporate user store and both backend and cloud are integrated with this user store).
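To illustrate the last hop, here is a heavily simplified model of the short-lived certificate and its mapping in the backend. It is a sketch under clear assumptions: there is no real cryptography or signature check, the subject pattern `CN=${name}` and the ten-minute lifetime are example settings chosen for illustration, and `ShortLivedCert`, `issue_cert`, and `backend_user` are hypothetical names. In reality, the SCC signs the certificate with a CA that the backend trusts, and the backend applies its own certificate-to-user mapping.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ShortLivedCert:
    """Stand-in for the X.509 certificate the SCC issues per request (no crypto here)."""
    subject: str
    not_before: datetime
    not_after: datetime


def issue_cert(user_id: str, now: datetime,
               lifetime: timedelta = timedelta(minutes=10),
               subject_pattern: str = "CN=${name}") -> ShortLivedCert:
    """SCC side: put the end user's ID into the subject of a short-lived certificate."""
    return ShortLivedCert(subject_pattern.replace("${name}", user_id), now, now + lifetime)


def backend_user(cert: ShortLivedCert, now: datetime, user_mapping: dict[str, str]) -> str:
    """Backend side: check validity, then map the subject's CN to a backend user."""
    if not (cert.not_before <= now <= cert.not_after):
        raise PermissionError("certificate expired or not yet valid")
    # Assumes the subject contains only a CN, as produced by issue_cert above.
    cn = cert.subject[3:] if cert.subject.startswith("CN=") else cert.subject
    # In the scenario above, cloud and backend user IDs are identical,
    # so IDs without an explicit mapping entry are used unchanged.
    return user_mapping.get(cn, cn)


t0 = datetime(2017, 9, 22, 12, 0, tzinfo=timezone.utc)
cert = issue_cert("JDOE", t0)  # "JDOE" is a hypothetical corporate user ID
print(backend_user(cert, t0 + timedelta(minutes=5), {}))  # JDOE
```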

Once all those components are configured, you have consistent user and permission management for your hybrid landscape, because all permissions are controlled from the central corporate user store and your end users can access your cloud applications with the "normal corporate domain users" they are used to.

14) Web IDE Development with ABAP Repository

Not all customers want to have their application runtime in the cloud yet, or the scenario might not profit from a runtime in the cloud. Even so, SAP Cloud Platform can be a very effective extension platform thanks to the development tools it provides, even if only the development tools are based in the cloud and the complete application runs on-premise. SAP Cloud Platform Web IDE can therefore be integrated with an on-premise ABAP Repository in order to create or modify application frontends. The connection has to be established via the Cloud Connector and additional properties of the HTTP destination used in the subaccount (documentation https://help.sap.com/viewer/825270ffffe74d9f988a0f0066ad59f0/Cloud/en-US/5c3debce758a470e8342161457f...). Although you could also install Web IDE in an on-premise setup, you would lose the benefit of SAP updating and running your Web IDE, as well as the advantage of having one (version-)consistent frontend development environment for all Fiori UIs. For multiple different projects developing with Web IDE, I would recommend separating them by using different subaccounts, so that the projects do not influence each other, Git repositories are not shared between projects (unless you want that), and backend systems are only available as needed. Once deployed to your on-premise ABAP Repository, the developments can be transported as usual with an on-premise Solution Manager, as indicated in my architecture.
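For the Web IDE integration, the relevant part is the set of additional properties on the HTTP destination. The sketch renders such a destination as name=value lines for review and checks whether it is usable for deploying to the ABAP Repository. The property names `WebIDEEnabled`, `WebIDESystem`, and `WebIDEUsage` and the usage values are written from my memory of the linked documentation, so please double-check them there; the destination name, virtual host, and system ID are hypothetical.

```python
from __future__ import annotations


def render_destination(props: dict[str, str]) -> str:
    """Render destination properties as name=value lines (no escaping, for review only)."""
    return "".join(f"{key}={value}\n" for key, value in props.items())


def usable_for_abap_deployment(props: dict[str, str]) -> bool:
    """True if Web IDE is enabled for the destination and ABAP development is among its usages."""
    usages = {u.strip() for u in props.get("WebIDEUsage", "").split(",")}
    return props.get("WebIDEEnabled") == "true" and "dev_abap" in usages


abap_dev = {
    "Name": "ABAP_DEV",                       # hypothetical destination name
    "Type": "HTTP",
    "URL": "http://abap-dev-virtual:8000",    # hypothetical virtual host exposed in the SCC
    "ProxyType": "OnPremise",
    "Authentication": "BasicAuthentication",
    "WebIDEEnabled": "true",
    "WebIDESystem": "DEV",                    # hypothetical system ID
    "WebIDEUsage": "odata_abap,dev_abap,ui5_execute_abap",
}
print(render_destination(abap_dev), end="")
print(usable_for_abap_deployment(abap_dev))  # True
```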

15) Using the Solution Manager to transport Multi-Target Applications and monitor applications

Your on-premise Solution Manager can be used in combination with SAP Cloud Platform as well. Using CTS+, your SolMan can transport Multi-Target Applications (MTAs) to target subaccounts. Although MTAs currently cannot be exported automatically (you have to add them to your transport manually), SolMan can be used to deploy the MTA to the target subaccount. This way, you can benefit from a controlled and audited release environment if you are required to use one, for example for compliance reasons. An MTA consists of multiple modules, which you can package and transport together as a combined release. HANA XS classic developments can also be transported with CTS+ and attached to the same transport request (but not as part of the MTA).

On top of transporting applications, your SolMan can also help you monitor cloud applications on SAP CP. Although SAP development has many plans to further extend the monitoring capabilities, good features are already available today, such as end-user experience monitoring, exception monitoring for cloud applications, and monitoring options for your integrations via the Integration Service or the Cloud Connector.

16) Managing hybrid applications with Solution Manager

A very interesting extension of scenario 15 is an application that also has on-premise components in addition to the cloud-based part. Let's assume you develop your Fiori frontend in Web IDE and deploy it to a subaccount in SAP CP. The Fiori application most likely depends on the availability (and correct version!) of certain backend services in your on-premise systems. When you transport and release new versions of the overall hybrid application (= on-premise and cloud components), you will want all parts of the application to be in sync (and not, for example, some backend version 2 being called by frontend version 1). To achieve this, your Solution Manager can combine development fragments from all required components and systems and transport them within one large transport request. It would transport, for example, the ABAP components in the on-premise system line and your cloud-based MTA with the method described in scenario 15. In addition, you probably also have Cloud Connectors integrating your systems (and as I showed in scenario 4, you should at least distinguish between non-productive and productive Cloud Connectors). In the end, your entire scenario will look like this:

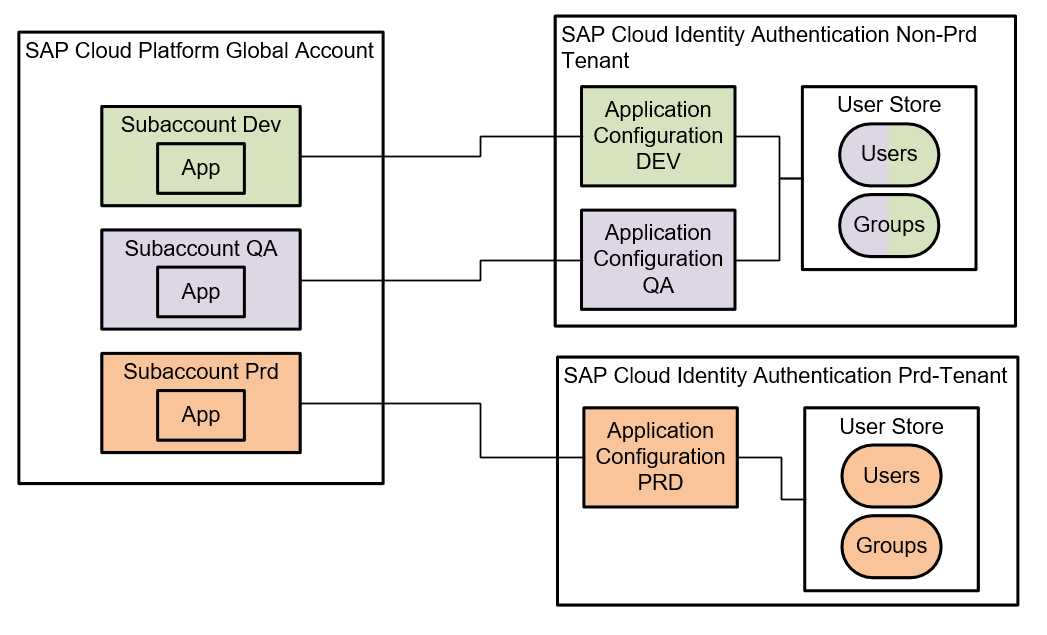

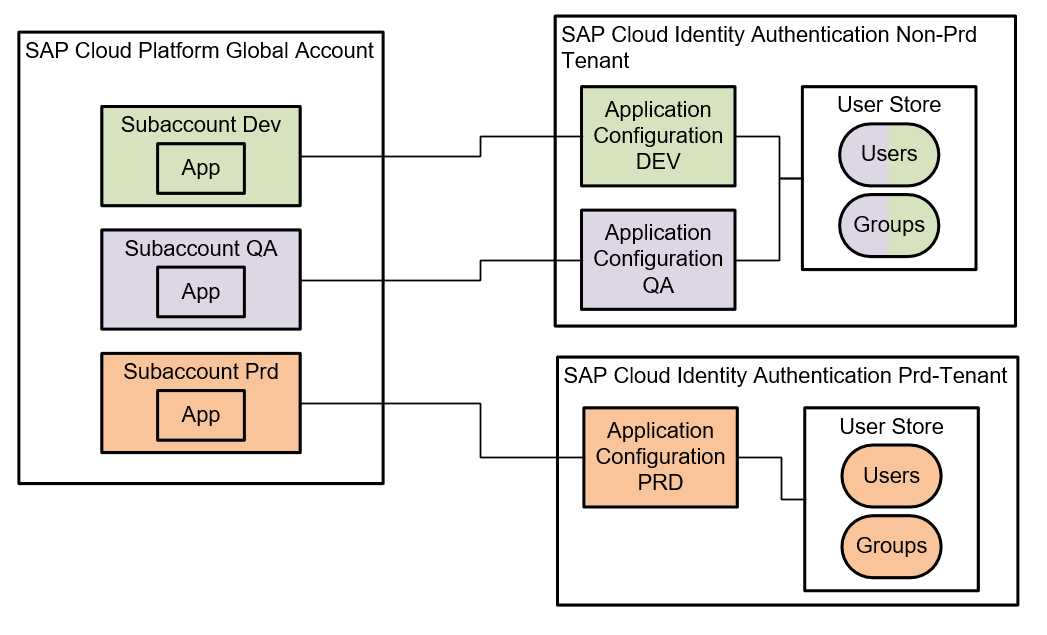
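The version-sync problem from scenario 16 (a backend version 2 being called by a frontend version 1, or the other way around) can be caught before a release with a simple comparison. The sketch assumes, hypothetically, that your release bookkeeping records which backend service versions a frontend release expects and which versions are deployed in the target system line; the function and service names are invented for the example.

```python
from __future__ import annotations

from typing import Optional


def version_mismatches(frontend_requires: dict[str, str],
                       deployed: dict[str, str]) -> dict[str, tuple[str, Optional[str]]]:
    """Map each backend service whose deployed version differs from the required one
    to (required version, deployed version or None if the service is missing)."""
    return {
        service: (required, deployed.get(service))
        for service, required in frontend_requires.items()
        if deployed.get(service) != required
    }


# Hypothetical: frontend release 2 needs version 2 of the sales order service.
frontend_v2 = {"ZSALES_ORDER_SRV": "2", "ZCUSTOMER_SRV": "1"}
production = {"ZSALES_ORDER_SRV": "1", "ZCUSTOMER_SRV": "1"}
print(version_mismatches(frontend_v2, production))  # {'ZSALES_ORDER_SRV': ('2', '1')}
```

An empty result is the condition under which the combined transport can be released; anything else means a component is still missing from the transport request.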

17) Understanding the internal structure of the SAP Identity Authentication Service

A question that I am repeatedly asked concerns the structure and components of the SAP Identity Authentication Service and its relation to subaccounts. I will therefore show, with two architecture diagrams, how the service is structured. First, you can think of the SAP Identity Authentication Service as a SaaS offering for user management. This means that you can license one or more tenants of this SAML identity provider. Each tenant has its own configuration, user base, reporting, and administrator users. A single tenant is, however, meant to serve multiple different applications in parallel as their identity provider. It is important to understand that each tenant has ONE user base and group base. This means that if you want completely separate sets of users, each maintained in its own tenant, you will need multiple tenants. On the other hand, as the user base can be used for multiple applications, different cloud applications can be used with the same user credentials. Access to the cloud applications (authorization) should be controlled via user groups or other user properties (we talked about the SAML assertion attributes earlier). Normally, each subaccount is integrated via one so-called "Application" in the Identity Authentication Service. This "Application" is basically a configuration of the respective SAML endpoint. You can, for example, configure the login screen, password policy, and terms of use of this "Application". You can also choose whether the Application should take its users from the internal user store (and group store) or whether authentication should be forwarded to another identity provider (which is a very powerful feature of SAML, as I explain in this blog https://blogs.sap.com/2017/03/10/part-5-beta-sap-cp-member-provisioning-via-microsoft-adfs/).
Concerning structure, I already said that in the standard setup you often find one Identity Authentication Application connected to one subaccount hosting one application. If you have multiple different cloud applications hosted in a single subaccount (which might be a good or a bad idea depending on your scenario; consider reading this blog https://blogs.sap.com/2016/10/21/part-1-understanding-hcp-global-accounts-sub-accounts-concept-means... if you are not sure), you could have different Identity Authentication Applications or share the same one for all applications, depending on your needs. If you choose to integrate multiple Identity Authentication Applications with a single subaccount, you will need a URL parameter to specify that you are calling a cloud application with an identity provider that is different from the default identity provider of the subaccount (there can only be one default). Despite all those possibilities, I would say that the most common structure and relation between different subaccounts and Identity Authentication tenants looks like this:

With the colors, I tried to indicate that the different Identity Authentication Applications belong to separate Cloud Platform subaccounts but share a common set of users and groups.
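For the case of several Identity Authentication Applications trusted by one subaccount, the application URL has to carry the name of the non-default identity provider. The sketch below appends it with Python's standard `urllib.parse`. The parameter name `saml2idp` is what I remember from the Neo documentation on multiple trusted identity providers, so treat it as an assumption and verify it; the function name, URLs, and IdP names are hypothetical.

```python
from __future__ import annotations

from typing import Optional
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_identity_provider(app_url: str, idp_name: Optional[str]) -> str:
    """Return the app URL, adding the IdP selection parameter for a non-default IdP."""
    if not idp_name:
        return app_url  # the subaccount's default IdP needs no parameter
    parts = urlsplit(app_url)
    query = parse_qsl(parts.query, keep_blank_values=True)
    query.append(("saml2idp", idp_name))  # assumed parameter name, see lead-in
    return urlunsplit(parts._replace(query=urlencode(query)))


print(with_identity_provider("https://myapp.example.com/index.html", "partners.example.com"))
# https://myapp.example.com/index.html?saml2idp=partners.example.com
```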

18) Using multiple tenants of SAP Identity Authentication Service

As an extension to scenario 17, you could (and probably should) introduce multiple tenants of the Identity Authentication Service in your cloud landscape. I typically suggest this so that you are able to distinguish between productive and non-productive tenants. This sometimes makes development and operations easier when there is a completely separate tenant for productive scenarios only which is maintained by the "normal" user administrators of a company and another non-productive tenant where developers have more freedom to experiment, create test users, and so on. Still, the structure of having Identity Authentication Applications within the tenant remains valid. The difference is that you have separate tenants with separate administrators, reporting, a separate user base, and a separate set of available groups. Your scenario with separate tenants for productive and non-productive authentication and user administration is shown in the following picture:

19) User Management: Different ways of integrating the corporate user store via SAML

When your company or client has an external SAML endpoint available for authentication and you plan to use this endpoint, there are different ways of integrating it, which have implications for the scalability and functionality of the solution. Let's first look at the architecture and then explain the options and their differences:

For SAML, it's important that the endpoint is reachable from the network of the browser of the user who is accessing an application. Therefore, an externally available SAML endpoint makes user authentication much easier in public cloud scenarios; I would consider this state of the art. If you look at Subaccount C in the picture first, you can see that Subaccount C is directly integrated with the Corporate Identity Provider. This 'direct' integration via exchanging SAML metadata is definitely the easiest, but it can cause scalability issues in the long run: for every new subaccount, you would need to touch your Corporate Identity Provider and configure a new SAML endpoint (or at least exchange the metadata, because every SAP CP subaccount has its own metadata). Typically, this involves cross-department interaction and might slow you down when you want to add new subaccounts to your global account. I would therefore recommend an alternative solution: Subaccount A and Subaccount B are both integrated with an SAP Cloud Identity Authentication tenant. This integration is a one-click setup that establishes the trust connection between the two… so it happens very fast. In the Identity Authentication tenant, as we learned earlier, this creates a new "Application". For every Application within the tenant, you can configure the identity provider, meaning the user base that is used for authentication. In Identity Authentication, you can configure your application to use a "Corporate Identity Provider" as its identity provider. This Corporate Identity Provider is configured once, by establishing a trust relationship between the Corporate IdP and your Identity Authentication tenant, and can then be reused for every application.
The Identity Authentication tenant will then redirect all authentication requests to the 'generic' SAML endpoint (highlighted in blue) which means all Subaccounts can make use of the same authentication forwarding mechanism. Of course, the trust configuration between the corporate identity provider and the Identity Authentication tenant requires a little bit of work and you have to select carefully which SAML attributes should be exposed by the endpoint of the corporate identity provider so that all cloud scenarios have the required attributes but once the configuration is set up like for Subaccount A and B, you can quickly add new subaccount without ever touching your corporate identity provider.
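To make the scaling argument concrete, here is a minimal Python sketch that counts which SAML trusts the corporate IdP has to maintain in each option. It is a hypothetical model, not an SAP API: the class name, the `use_ias_proxy` flag, and the trust labels are illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Landscape:
    """Hypothetical model of which SAML trusts the corporate IdP must maintain."""
    use_ias_proxy: bool
    subaccounts: list[str] = field(default_factory=list)

    def corporate_idp_trusts(self) -> set[str]:
        if not self.use_ias_proxy:
            # Direct integration (Subaccount C): every subaccount has its own
            # SAML metadata, so the corporate IdP needs one entry per subaccount.
            return {f"subaccount:{name}" for name in self.subaccounts}
        # Proxy (Subaccounts A and B): the corporate IdP only trusts the
        # Identity Authentication tenant, however many subaccounts exist.
        return {"ias-tenant"} if self.subaccounts else set()

    def ias_applications(self) -> set[str]:
        # Each subaccount that trusts the tenant shows up as one "Application" there.
        return set(self.subaccounts) if self.use_ias_proxy else set()
```

Adding a new subaccount to the proxy landscape grows the tenant's list of applications but leaves the corporate IdP's trust list unchanged, which is exactly why this option scales better.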

I also want to point you to an important detail when it comes to members: members can only be provisioned from an Identity Authentication tenant when using the Platform Identity Provider Service. But when you configure the forwarding as I describe here, the members of your subaccounts can also be provisioned by your corporate identity provider. This is a huge improvement for administration, operations, and compliance, but sadly it does not yet work smoothly for all scenarios (more details can be found in this blog https://blogs.sap.com/2017/03/10/part-5-beta-sap-cp-member-provisioning-via-microsoft-adfs/).

20) User Management: Internal and External Application Users for the same application

Let's imagine the following scenario: your cloud application is to be used both by internal employees of your company and by external partners. Most likely, your internal employees can be authenticated and authorized by your corporate SAML identity provider, while your external partners have no user accounts in your corporate IdP. Partners often have their user accounts in separate identity providers, which are kept separate for good reasons. I will now explain my approach for letting both groups of users use the same application while federating authentication and authorization correctly.

Because you can add multiple application identity providers to a subaccount (although you have to choose one default IdP), you could, for example, add both your corporate identity provider for your internal users and SAP Identity Authentication Service as the IdP for your external users to the subaccount where the application in question is deployed. Internal users (blue) then authenticate against the corporate IdP, and external users (orange) authenticate against the Identity Authentication Service. If your corporate IdP is only available from within the internal network, authentication will only be possible while users are in the internal network themselves. If you want to allow full mobile access from anywhere without VPNs and other measures, you'll also need an external SAML endpoint for your internal users. Your external users will most likely not be in your internal network, so they need an external SAML endpoint anyway; this makes the Identity Authentication Service the ideal location to maintain those users. Authorization is often based on a user's group membership. Those groups can be maintained in your on-premise corporate IdP for internal users and in the Identity Authentication tenant for your external users. Mapping rules are then defined individually for each application IdP configuration in the subaccount, so that the groups in the subaccount map to the ones from the IdPs.
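The per-IdP mapping rules can be pictured with a small Python sketch. It is a hypothetical model, not the SAP CP configuration format: the IdP keys, attribute names (`groups`, `Groups`), and group names are all invented for illustration. The point it shows is that each IdP configuration carries its own rules, so an assertion is only evaluated against the rules of the IdP that issued it.

```python
# Hypothetical mapping rules, one set per application IdP configured in the subaccount.
# Key: (assertion attribute name, attribute value) -> subaccount group.
MAPPING_RULES: dict[str, dict[tuple[str, str], str]] = {
    "corporate-idp": {
        ("groups", "CORP_SALES"): "SalesUsers",
        ("groups", "CORP_ADMINS"): "AppAdmins",
    },
    "ias-tenant": {
        ("Groups", "PartnerSales"): "SalesUsers",
    },
}


def subaccount_groups(idp: str, assertion: dict[str, list[str]]) -> set[str]:
    """Map SAML assertion attributes to subaccount groups, using only the
    rules of the IdP that authenticated the user."""
    rules = MAPPING_RULES.get(idp, {})
    result: set[str] = set()
    for attribute, values in assertion.items():
        for value in values:
            group = rules.get((attribute, value))
            if group is not None:
                result.add(group)
    return result
```

Internal and external users can end up in the same subaccount group ("SalesUsers" here) even though their groups are maintained in different places. A partner assertion that claims a corporate group name maps to nothing, because the Identity Authentication rules do not contain it.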

Compared to architecture 13, you can see that I introduced a new location for "roles" in the subaccount. In SAP CP, roles can be configured either on subaccount level (the "role" box on the right) or within an application (the "role" box on the left). The difference is that subaccount-level roles can be shared between applications, whereas roles that exist only within an application cannot be shared and therefore cannot be reused by multiple applications. Both types of roles have their justification: in some cases shared roles make sense, whereas for other roles (often the more application-specific ones), a shareable role does not add value. It's up to you to decide…
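The visibility rule for the two role locations can be sketched in a few lines of Python. The `Role` class, the application names, and the role names are hypothetical and only model the sharing behavior described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Role:
    name: str
    app: Optional[str] = None  # None: subaccount-level role, shareable between apps


def assignable_roles(roles: list[Role], app: str) -> set[str]:
    """Roles usable by one application: all subaccount-level roles plus its own."""
    return {r.name for r in roles if r.app is None or r.app == app}


ROLES = [
    Role("Viewer"),                   # shared by every application in the subaccount
    Role("Approver", app="travel"),   # application-specific, not reusable
    Role("Auditor", app="expenses"),  # application-specific, not reusable
]
```

Both hypothetical applications see the shared "Viewer" role, while "Approver" and "Auditor" stay local to the application that defines them.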

21) SAP Cloud Platform Integration Service (former HANA Cloud Integration, HCI)

In case you want to use the SAP Cloud Platform Integration Service (former HANA Cloud Integration, HCI) and you are not sure how many subaccounts, tenants, and SCCs you will need, the following scenario can probably serve as a good blueprint for you:

In this scenario, an application is developed in SAP Cloud Platform that could, for example, extend a SaaS solution and include data from two backend systems. Integration should be done by the Integration Service.

In such a scenario, you probably already have different SCCs, separating the productive from the non-productive communication. You could extend this by also separating the middleware into a productive and a non-productive middleware layer (there are multiple reasons to do this: load/latency, separate administrators, more freedom on dev tenants, …). The Integration Service always comes as a tenant within a separate subaccount. So if you want to separate the middleware, you will need to license two tenants, which you will automatically get in two separate subaccounts ("Subaccount IS_PRD" and "Subaccount IS_DEV" in my figure). The cloud application itself should most likely be developed in yet another subaccount, so that you have a clean separation between application and middleware and can scale better (for example, with multiple applications using the same middleware; if you did all of that in a single subaccount, you might end up in terrible chaos).

I get asked a lot about Integration Service tenants, so to make it clear again and avoid misconceptions: the tenant as such is like an application that is operated by SAP. You subscribe to this tenant within a dedicated subaccount. From this subaccount, you can then configure destinations to the SCC for on-premise integration or go to the tenant configuration of the Integration Service. But you still get only one tenant runtime and NOT individual tenants in every subaccount that you have (which is what some people think). You can license additional tenants and, of course, you can use the tenant for integrations between all of your subaccounts… but not every subaccount automatically gets a separate tenant once you license the Integration Service.

This concludes my first release of the SAP Cloud Platform Cloud Architecture Library. Remember, the architectures are not official reference architectures released by SAP but my personal recommendations, based on the experience I gained in my projects. So they might be biased towards my preferred ways of doing things, but I have had good experiences with them and hope that sharing my recommendations will help you with your projects.

Let me know whether you need guidance for other scenarios and whether you agree with my suggestions, or start posting your own patterns as well so that we can all profit as a community!

All the best,

Jakob

I think it would be great if we could all start sharing our scenarios and discussing them. Hopefully my suggestions help you in your projects, whether you are at the very start of the journey and have to understand the SAP Cloud Connector, or whether you are advanced and have to deal with Principal Propagation, federated identity providers, and hybrid application landscapes.

I will present the following architectures in this blog:

- Cloud-2-On-Premise Integration via SAP Cloud Connector

- Cloud-2-On-Premise Integration via SCC, complete separation using subaccounts and a single SCC

- On-Premise-2-Cloud Integration via Service Channels and the SCC

- SAP Cloud Connector Setup for staged application development

- Integration to different, non-connected, on-premise networks

- Using SAP CP in multiple geographies

- Using Smart Data Integration (SDI) for data integration scenarios

- Using Smart Data Access (SDA) for virtualized access to SAP CP HANA tables

- Using the SAP Cloud Platform Portal Service with an Embedded Gateway (External Access Point Scenario)

- Using the SAP Cloud Platform Portal Service with a Gateway Hub (External Access Point Scenario)

- Using the SAP Cloud Platform Portal Service with the OData Provisioning Service

- Using the SAP Fiori Cloud Service with the Cloud Portal Service

- Principal Propagation, SSO, Corporate User Store, and Permission Management

- Web IDE Development with ABAP Repository

- Using the Solution Manager to transport Multi-Target Applications and monitor applications

- Managing hybrid applications with Solution Manager

- Understanding the internal structure of the SAP Identity Authentication Service

- Using multiple tenants of SAP Identity Authentication Service

- User Management: Different ways of integrating the corporate user store via SAML

- User Management: Internal and External Application Users for the same application

- SAP Cloud Platform Integration Service (former HANA Cloud Integration, HCI)

So let's get started with the individual descriptions:

1) Cloud-2-On-Premise Integration via SAP Cloud Connector

Ok, so this one is for complete beginners: if you want to expose on-premise resources to applications in SAP CP, you should do so by using the SAP Cloud Connector (SCC). The SCC is installed in your on-premise network and has direct network connectivity to your backend system. The SCC is then configured to open a tunnel to an individual subaccount of your Global Account (the difference is explained in my blog series https://blogs.sap.com/2016/10/21/part-1-understanding-hcp-global-accounts-sub-accounts-concept-means...). You then add the resources you want to expose to the SCC configuration (you can expose HTTP(S), RFC, and, since SCC 2.10.0.1, also TCP connections to the cloud). The app(s) in the subaccount then use destinations (which are configured in the subaccount) to access the backend systems. You can also configure destinations that point to resources on the public internet; in this case, you do not need the SCC. Attention: access to the SCC is the most critical thing in your landscape. Control it tightly, as the SCC is the component that exposes resources to the outside world. Of course, a single SCC can expose multiple backends to your cloud.
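The decision of whether a destination needs the SCC can be sketched as a small validation function. The property names (`Name`, `Type`, `URL`, `ProxyType`, `Authentication`) and the `ProxyType` values `Internet` and `OnPremise` are modeled on SAP CP destinations, but the validation rules themselves are my own simplification, not the platform's logic. The `exposed_hosts` parameter is a hypothetical stand-in for the virtual hosts that the SCC maps for this subaccount.

```python
from urllib.parse import urlparse

REQUIRED = ("Name", "Type", "URL", "ProxyType", "Authentication")


def needs_cloud_connector(dest: dict[str, str]) -> bool:
    """Only destinations that point into the on-premise network use the SCC."""
    return dest.get("ProxyType") == "OnPremise"


def check_destination(dest: dict[str, str], exposed_hosts: set[str]) -> list[str]:
    """Return configuration problems; an empty list means the sketch found none.

    exposed_hosts: virtual hosts (host:port) the SCC exposes to this subaccount.
    """
    problems = [f"missing property {key}" for key in REQUIRED if key not in dest]
    if problems:
        return problems
    if dest["ProxyType"] not in ("Internet", "OnPremise"):
        problems.append(f"unknown ProxyType {dest['ProxyType']}")
    url = urlparse(dest["URL"])
    if dest["Type"] == "HTTP" and url.scheme not in ("http", "https"):
        problems.append("HTTP destination needs an http(s) URL")
    # An on-premise destination only works if the SCC exposes its virtual host.
    if needs_cloud_connector(dest) and url.netloc not in exposed_hosts:
        problems.append(f"{url.netloc} is not exposed by the Cloud Connector")
    return problems
```

For example, a hypothetical backend destination with `URL=http://erp-virtual:44300/sap/opu/odata` and `ProxyType=OnPremise` passes only if the SCC exposes `erp-virtual:44300`, while an `Internet` destination to a public API never touches the SCC.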

2) Cloud-2-On-Premise Integration via SCC, complete separation using subaccounts and a single SCC

In case you have multiple unrelated projects, you should separate them into different subaccounts (cf. https://blogs.sap.com/2016/10/21/part-1-understanding-hcp-global-accounts-sub-accounts-concept-means...). Still, you can share a single SAP Cloud Connector between multiple subaccounts and multiple backends without them influencing each other, because in the SCC almost all configurations are maintained per subaccount. I tried to indicate this with the colors in the following picture: Backend A is exposed only to the subaccount hosting App A, while Backend B is only available to Apps B and C in a separate subaccount. So you do not need individual SCCs for every project. As SCCs are to be installed on separate machines (do not install them on top of existing backend systems!), individual SCCs would otherwise increase the number of on-premise machines as your cloud grows. By the way: even in an agile DevOps environment, I would not let developers from projects A & B configure those central SCCs! The SCC should be configured by a third party (e.g. your IT admins) who pay attention to all projects and make sure they do not influence configurations for subaccounts that do not belong to them.
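The per-subaccount separation inside one SCC can be modeled as an access control table keyed by subaccount. This is a hypothetical Python sketch: the subaccount names, virtual hosts, and internal hosts are invented, and the real SCC configuration is maintained in its administration UI, not in code.

```python
# Hypothetical model of one SCC serving two subaccounts: the mapping
# virtual host -> internal backend is maintained separately per subaccount.
SCC_ACCESS_CONTROL: dict[str, dict[str, str]] = {
    "subaccount-app-a": {"backend-a:443": "erp-a.corp.local:44300"},
    "subaccount-apps-bc": {"backend-b:443": "crm-b.corp.local:44300"},
}


def resolve(subaccount: str, virtual_host: str) -> str:
    """Return the internal host the tunnel forwards to, or raise if this
    subaccount has no mapping for the requested virtual host."""
    try:
        return SCC_ACCESS_CONTROL[subaccount][virtual_host]
    except KeyError:
        raise PermissionError(f"{virtual_host} is not exposed to {subaccount}") from None
```

Because the lookup always starts from the calling subaccount, App A cannot reach Backend B through the shared SCC, even though both tunnels end on the same machine.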

3) On-Premise-2-Cloud Integration via Service Channels and the SCC

To access HANA databases located in SAP CP from your on-premise network, you should use the SCC as well. In the SCC, you configure a "Service Channel" to an individual database in a subaccount. The SCC then opens a port on its internal network interface. You can connect to this port from your internal network (e.g. from a backend system) with a JDBC/ODBC client and access the database. I have used this integration in the following scenarios:

- Controlling a HANA database in the cloud. Typically, your IT department also controls certain functionality of on-premise HANA systems via JDBC (e.g. users and permissions). The same can be done for SAP CP HANAs via Service Channels.

- Accessing data in SAP CP HANAs from on-premise systems. For example, in data warehouse scenarios or with your in-house analytics software, you might want to incorporate data from your cloud databases into your reports as well. Service Channels could be the way to go. (SDA access is also possible to some extent; I will explain this in a separate architecture.)

- If you only have HANA Studio or Eclipse without the cloud plugins, you can also access and develop on the database via Service Channels. Sometimes, HANA Studios are maintained centrally and installing the cloud plugins in order to connect to the database might take some time. Service Channels could be your jumpstart then…

4) SAP Cloud Connector Setup for staged application development

As explained in my other blogs (https://blogs.sap.com/2016/10/21/part-1-understanding-hcp-global-accounts-sub-accounts-concept-means...), you should use different subaccounts for staged application development. This way, you can achieve different stages for development, QA, and production, comparable to the well-known paradigm that has been used for on-premise systems for as long as I can remember. But what does this mean for your SCC?

- If you have the resources, you could think about having separate SCC instances/machines for every stage.

- If that is not possible, you should at least separate the productive from the non-productive scenarios.

- At least for the productive stage, your SCC should be redundant. The SCC has its own high availability function built in, so I highly recommend using it for production!
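The rules in the list above can be expressed as a small landscape check. This Python sketch is hypothetical: the stage names and SCC names are invented, and `has_shadow_instance` stands for the SCC's built-in high availability setup, in which a master instance is paired with a shadow instance.

```python
from dataclasses import dataclass, field


@dataclass
class CloudConnector:
    name: str
    subaccount_stages: set[str] = field(default_factory=set)  # e.g. {"dev", "qa"}
    has_shadow_instance: bool = False  # HA: master instance plus shadow instance


def landscape_issues(sccs: list[CloudConnector]) -> list[str]:
    """Flag SCCs that violate the staging recommendations above."""
    issues = []
    for scc in sccs:
        stages = scc.subaccount_stages
        if "prod" in stages and len(stages) > 1:
            issues.append(f"{scc.name}: mixes productive and non-productive subaccounts")
        if "prod" in stages and not scc.has_shadow_instance:
            issues.append(f"{scc.name}: productive SCC without a shadow instance")
    return issues
```

A landscape with one non-productive SCC for dev and QA plus a redundant productive SCC passes; a single SCC serving both dev and prod without a shadow instance is flagged twice.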

Your landscape will therefore look as depicted in the following picture. Keep in mind that there might be multiple subaccounts per stage and also many more backends attached to the SCCs. But in principle, this is what you should aim for:

5) Integration to different, non-connected, on-premise networks

In the "early days", a subaccount could only be connected to a single SCC. Since version 2.9, an SCC can be configured with a location ID. In your subaccounts, you can then point to different locations in your destinations. This is useful when you want to connect to on-premise networks that are not interconnected themselves. Of course, here too you should have multiple SCCs for the different stages in every network, if applicable.
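The routing by location ID can be sketched as a lookup from the destination's location ID to the SCC connected with that ID. `CloudConnectorLocationId` is the destination property name I assume SAP CP uses for this (please verify against the current documentation); the registry, the location IDs, and the SCC names are hypothetical.

```python
from typing import Optional

# Hypothetical registry of Cloud Connectors connected to one subaccount,
# keyed by location ID ("" stands for an SCC without a location ID).
CONNECTED_SCCS: dict[str, str] = {
    "": "scc-headquarters",
    "PLANT_DE": "scc-plant-germany",
    "PLANT_US": "scc-plant-us",
}


def route(destination: dict[str, str]) -> Optional[str]:
    """Pick the SCC tunnel for a destination based on its location ID."""
    if destination.get("ProxyType") != "OnPremise":
        return None  # Internet destinations do not use a Cloud Connector
    location = destination.get("CloudConnectorLocationId", "")
    if location not in CONNECTED_SCCS:
        raise LookupError(f"no Cloud Connector with location ID {location!r}")
    return CONNECTED_SCCS[location]
```

Each destination names the network it wants to reach, so two on-premise networks that are not connected to each other can both be served by the same subaccount.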

6) Using SAP CP in multiple geographies

A global account is always bound to a data center within a region; there are no global accounts that span regions. So let's say you have a global customer with networks in two geographies, e.g. Europe and the US. Some applications might be for the US only, while others must keep all of their cloud data within the European region for data security and compliance reasons. For reasons of performance, latency, or organizational structure, you might also want to spread your applications across regions. For those requirements, you will need separate Global Accounts in different regions. Still, you might want to integrate on-premise data across regions with both Global Accounts and their respective subaccounts. The following architecture depicts such a setup. You can install SCC(s) in the different regional on-premise networks and connect them to subaccounts in multiple Global Accounts of SAP CP as you like. The location IDs make sure that you always address the correct network. Keep in mind that you also need multiple SCCs for the different stages.

7) Using Smart Data Integration (SDI) for data integration scenarios

When you want to integrate data with replication or virtualized access, Smart Data Integration is one option from SAP's large EIM portfolio. SDI can only be used for integrations that involve HANA as a target or a source. SDI is available in SAP CP as well: you can deploy the DP Server on your HANA (via HALM) and activate it in the HANA configuration. Then, you'll also need an on-premise component. In the SDI use case, this is not the SCC but the SDI Data Provisioning Agent (DPA). The DPA is installed on-premise and, like the SCC, has direct network connectivity to the resource you want to integrate. I have also seen DPAs installed on the same machine as SCCs, which can make sense for your scenario as well (although, when I last checked, the official recommendation was to keep the SCC separate). The DPA then connects to the HANA database in the cloud via an encrypted connection. For the connection to the resource, adapters that match the type of resource you want to integrate need to be deployed on the DPA. You can also develop your own adapters if nothing appropriate is available in the standard. For available adapters, check the respective PAM. There is already a large number of adapters, even including integrations with Hadoop for your Big Data scenarios (although you should look at Vora or the SAP Big Data Services as well!).

8) Using Smart Data Access (SDA) for virtualized access to SAP CP HANA tables

The Service Channels I described in the third architecture can also be used to realize data virtualization scenarios with Smart Data Access (SDA). SDA can be used to include tables from a cloud-based HANA in an on-premise HANA. Attention: the reverse is not possible! You can "enrich" your on-premise databases with virtual tables but not the other way around, so this scenario cannot be used if you want to access on-premise data in the cloud. Using SDA is very useful if you already have, for example, large on-premise data warehouse environments built with SDA and you want to include data from cloud-based HANA databases as well. Technically, this is realized via a Service Channel (JDBC/ODBC protocol) and the SCC, as depicted in the following picture.

9) Using the SAP Cloud Platform Portal Service with an Embedded Gateway (External Access Point Scenario)

In this scenario, the backend system exposes its OData services via an Embedded Gateway and, via the SCC, to SAP CP. Within the cloud subaccount, the Portal Service can host multiple sites, for example Fiori Launchpad sites. The apps within the Fiori Launchpad(s) access the OData services via destinations in the subaccount. I would recommend this setup if a customer does not have an existing Gateway Hub deployment on-premise and also does not need a central on-premise Gateway to combine services from multiple backend systems. I would insist on using Principal Propagation as the authentication method for the destination towards the backend system, because otherwise you cannot guarantee that a user of the cloud apps sees in the cloud exactly the data that he or she would be allowed to see on-premise (e.g. via SAP GUI). I will describe principal propagation in another architecture. Also, notice that I am depicting an external access point scenario here. For the difference between external and internal access points, see the documentation (https://help.sap.com/viewer/596eae6331ac4637af13a327a1e340a5/2.0%202017-07/en-US/47565c1f67d5466cba9...) and this blog (https://blogs.sap.com/2017/07/06/fiori-cloud-and-supported-landscape-scenarios/). As the external access point scenario makes mobile usage of apps much easier and does not need additional reverse proxies in the on-premise network, I recommend this scenario to my customers first. Only if customers insist on keeping all of their business data network traffic on-premise, or if latency does not allow otherwise (which I haven't seen yet for well-designed Fiori apps, as bottlenecks are typically somewhere else), would I choose an internal access point scenario.

10) Using the SAP Cloud Platform Portal Service with a Gateway Hub (External Access Point Scenario)

Compared to the previous setup, in this case the SAP Gateway is used in a Hub deployment. As before, the Gateway exposes the OData services towards the SCC and therefore to SAP CP. All other comments from the previous scenario about Principal Propagation and internal vs. external access point scenarios remain valid here. The following key factors determine whether this architecture suits your situation:

- In case an existing on-premise Gateway Hub landscape is already installed

- In case services from the various on-premise backend systems need to be orchestrated via the sophisticated methods the SAP Gateway provides

- More considerations from this resource: https://help.sap.com/saphelp_gateway20sp12/helpdata/en/88/889a8cbf6046378e274d6d9cd04e4d/frameset.ht...

11) Using the SAP Cloud Platform Portal Service with the OData Provisioning Service

In case there is no on-premise Gateway available, or it cannot be used for other reasons, the OData Provisioning Service can take over the task of exposing backend services as OData services to cloud applications. The setup is fairly straightforward and requires the IWBEP service to be activated on the backend system. A good quick start guide can be found here (https://help.sap.com/doc/301058c7809f45b68418dc1b124cc9d3/SAP%20Fiori%20Cloud/en-US/SFC_ImplQuickGui...). In SAP CP, it's important to structure the destinations in the subaccount properly. On subaccount configuration level, you should specify the destination that the apps use to access the OData Provisioning Service. In the configuration of the OData Provisioning Service, you can configure the destination that points to your backend system. Keep in mind that you should definitely use principal propagation as the authentication mechanism for this destination in order not to bypass the backend permissions (of course, you can start configuring the scenario with Basic Authentication just to make sure it works before switching to Principal Propagation). The destination between the apps and the OData Provisioning Service uses App2AppSSO as its authentication mechanism.
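The two-destination structure can be checked with a short Python sketch. The authentication values `AppToAppSSO`, `PrincipalPropagation`, and `BasicAuthentication` follow the naming of SAP CP destination authentication types as I understand it; the destination names, the `initial_setup` flag, and the check itself are hypothetical illustrations of the rules in the paragraph above.

```python
# Hypothetical destinations for the scenario described above.
APP_TO_ODP = {"Name": "odp", "ProxyType": "Internet", "Authentication": "AppToAppSSO"}
ODP_TO_BACKEND = {"Name": "erp", "ProxyType": "OnPremise",
                  "Authentication": "PrincipalPropagation"}


def check_odp_chain(app_dest: dict[str, str], backend_dest: dict[str, str],
                    initial_setup: bool = False) -> list[str]:
    """Flag authentication settings that would bypass backend permissions."""
    problems = []
    if app_dest["Authentication"] != "AppToAppSSO":
        problems.append("app -> OData Provisioning should use AppToAppSSO")
    allowed = {"PrincipalPropagation"}
    if initial_setup:
        # Basic Authentication only to prove connectivity before switching over.
        allowed.add("BasicAuthentication")
    if backend_dest["Authentication"] not in allowed:
        problems.append("OData Provisioning -> backend should use PrincipalPropagation")
    if backend_dest["ProxyType"] != "OnPremise":
        problems.append("backend destination should go through the Cloud Connector")
    return problems
```

The `initial_setup` switch mirrors the advice to start with Basic Authentication: the same destination that passes during the first connectivity test is flagged once the flag is turned off.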

12) Using the SAP Fiori Cloud Service with the Cloud Portal Service

The SAP Fiori Cloud Service allows you to subscribe to application content from SAP, for either S/4HANA or Business Suite backends. The on-premise integration and Gateway deployment method is not influenced by the Fiori Cloud Service, so you can use all approaches that we discussed before (Embedded Gateway, Gateway Hub, OData Provisioning). By subscribing to the service and the application content, you get the predefined application descriptors, roles, catalogs, and groups for the Fiori Launchpad. The available applications are not limited to Fiori technology. The service also includes Web Dynpro ABAP and SAP GUI for HTML apps; those classic apps still run on the backend system and are integrated securely into the cloud environment. Fiori apps from the application content are included as subscriptions in your subaccounts and can therefore be maintained and even extended in your subaccount. Another hint: once you import the content from the Fiori Cloud Service, a certain number of apps is added to your Launchpad site. Keep in mind that this is currently only a subset of all the applications that could in principle be added to your Fiori Launchpad… it's just that SAP is currently not delivering all catalogs, roles, groups, and application descriptors via the Fiori Cloud Service. So you can still add 'missing' apps to your Fiori Launchpad manually if you know the parameters. To add other classic apps, see this documentation https://help.sap.com/viewer/3ca6847da92847d79b27753d690ac5d5/Cloud/en-US/d36ea2f36ae443289d9968c7e1f.... Also explore the Fiori Apps Library for a complete list of available applications. There, you can also see which apps are delivered automatically via the Fiori Cloud Service and which apps you will probably have to add manually to your Fiori Launchpad in the cloud.

13) Principal Propagation, SSO, Corporate User Store, and Permission Management

This rather complex scenario combines multiple related components in order to achieve a secure and consistent authentication and authorization concept in a hybrid environment. It incorporates Single Sign-On authentication for a cloud application using a corporate user, corporate user groups for permission management in the cloud, and principal propagation to authorize communication with a backend. The architecture shows one possibility for this complete picture, including all components that are needed to set up such an environment. I have used this setup successfully at customers where certain prerequisites are met; it will, however, not be applicable to every customer landscape. Basically, the customer will need an SAP Cloud Connector, a corporate user store, and a SAML identity provider that is integrated with the user store. The SAML endpoint has to be reachable via the network that is used by the users of the cloud applications: if your users access the applications via the internet, the SAML endpoint also has to be available via the internet, because the browser or mobile device is forwarded to the authentication endpoint and therefore has to be able to reach it from a network perspective. If your users only access the applications from inside the on-premise corporate network, it is also possible to use a SAML endpoint that is not available externally. You can find more details in another blog of my series https://blogs.sap.com/2016/10/21/hana-cloud-platform-thoughts-cloud-architecture/#.

Concerning authentication: in many cases, a company already has some sort of SSO mechanism in place, often also working with SAML. For example, all devices could have a certificate installed that allows certificate-based authentication with the SAML endpoint. The user accesses an application, is redirected to the identity provider, certificate-based authentication is conducted automatically, and the user's browser returns to the application with a valid authentication ticket, allowing him or her to access the application.

Concerning authorization: SAP CP allows assigning users to groups on the basis of SAML assertion attributes (or other attributes). So your SAML IdP will not only validate the users' identities but also return the groups that the user is a member of in the corporate user store. Once you establish a trust relationship between the SAML IdP and the application IdP configuration in SAP CP, you can use those assertion attributes to map the corporate user groups to user groups in SAP CP (those groups are maintained manually in the subaccount, as depicted in the architecture). Now you have a mapping between the corporate user groups and the cloud user groups via the mapping functions of the IdP configuration. Next, you map the cloud groups to roles. Roles in SAP CP are collections of permissions, so the mapping of groups to roles is an important mechanism to structure authorizations. Permissions are introduced by the developers of the applications, who define them in the source code. Once an application is deployed on SAP CP, the platform 'detects' those permissions in the code and makes them available in the subaccount to be assigned to roles.
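The chain from corporate groups to effective permissions can be traced with a small Python sketch. All group names, role names, and permission strings are hypothetical, and the dictionaries only stand in for configuration that actually lives in the IdP configuration, the subaccount, and the application code.

```python
# Hypothetical configuration of one subaccount.
IDP_GROUP_MAPPING = {          # assertion attribute value -> cloud group
    "CN=Sales,OU=Groups": "SalesTeam",
    "CN=Managers,OU=Groups": "Managers",
}
GROUP_ROLES = {                # cloud group (maintained in the subaccount) -> roles
    "SalesTeam": {"OrderViewer"},
    "Managers": {"OrderViewer", "OrderApprover"},
}
ROLE_PERMISSIONS = {           # role -> permissions declared by developers in code
    "OrderViewer": {"order.read"},
    "OrderApprover": {"order.approve"},
}


def effective_permissions(assertion_groups: list[str]) -> set[str]:
    """Corporate groups -> cloud groups -> roles -> permissions."""
    cloud_groups = {IDP_GROUP_MAPPING[g] for g in assertion_groups if g in IDP_GROUP_MAPPING}
    roles = set().union(*(GROUP_ROLES.get(g, set()) for g in cloud_groups))
    return set().union(*(ROLE_PERMISSIONS.get(r, set()) for r in roles))
```

Removing a user from the corporate "Managers" group is enough to drop the approval permission in the cloud, which is the central-control benefit of this setup.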

Concerning principal propagation: in this setup, the user ID of the user accessing the cloud application and the user in the backend system are identical, which allows setting up principal propagation. Basically, several certificate-based trust relationships are needed to achieve this: a trust relationship between the SAP Cloud Connector and the backend, a trust relationship between the SCC and the SAML IdP, and of course the trust relationship we created earlier between the SAML IdP and the CP subaccount. Once the destination towards the backend is configured to use principal propagation, the SAML ticket is forwarded to the SCC, which then issues a short-lived X.509 certificate including the user ID of the end user. The backend then has to validate and interpret this X.509 certificate and map the user ID of the cloud user to the backend user (which is the same in our scenario, because the user base is maintained in the corporate user store and both backend and cloud are integrated with this user store).
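The identity part of this flow can be illustrated with a non-cryptographic Python sketch: the SCC side fills a subject pattern with the user ID, and the backend side checks validity and maps the subject back to a user. The `${name}` placeholder is modeled on the subject pattern setting of the SCC (verify the exact syntax in the SCC documentation), and the 10-minute lifetime is a hypothetical value. Signing, CA trust, and certificate parsing are deliberately left out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from string import Template
from typing import Optional

# Hypothetical lifetime for illustration; the real validity is an SCC setting.
CERT_LIFETIME = timedelta(minutes=10)


@dataclass(frozen=True)
class ShortLivedCert:
    subject: str
    not_after: datetime


def issue_cert(user_id: str, subject_pattern: str = "CN=${name}",
               now: Optional[datetime] = None) -> ShortLivedCert:
    """SCC side: fill the subject pattern with the user ID from the SAML assertion."""
    now = now or datetime.now(timezone.utc)
    subject = Template(subject_pattern).substitute(name=user_id)
    return ShortLivedCert(subject=subject, not_after=now + CERT_LIFETIME)


def backend_user(cert: ShortLivedCert, now: datetime) -> str:
    """Backend side: reject expired certificates, then map CN=<user ID> to a user."""
    if now > cert.not_after:
        raise PermissionError("certificate expired")
    if not cert.subject.startswith("CN="):
        raise PermissionError("unexpected subject format")
    # Cloud and backend user IDs are identical in this scenario,
    # so the mapping rule is simply "CN value = backend user".
    return cert.subject[len("CN="):]
```

The short lifetime is what makes forwarding identities acceptable: a certificate captured on the wire becomes useless within minutes.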

Once all those components are configured, you have great user and permission management for your hybrid landscape, because basically all permissions are controlled from the central corporate user store, and your end users can access your cloud applications with the "normal corporate domain users" they are used to.

14) Web IDE Development with ABAP Repository

Not all customers want to have their application runtime in the cloud yet, or the scenario might not profit from a runtime in the cloud. Even so, SAP Cloud Platform can be a very effective extension platform thanks to the development tools it provides, even if only the development tools are in the cloud and the complete application runs on-premise. SAP Cloud Platform Web IDE can therefore be integrated with an on-premise ABAP Repository in order to create or modify application frontends. The connection has to be established via the Cloud Connector and additional properties of the HTTP destination used in the subaccount (documentation https://help.sap.com/viewer/825270ffffe74d9f988a0f0066ad59f0/Cloud/en-US/5c3debce758a470e8342161457f...). Although you could also install Web IDE in an on-premise setup, you would lose the charm of SAP updating and running your Web IDE, as well as the advantage of having one (version-)consistent frontend development environment for all Fiori UIs. For multiple different projects developing with Web IDE, I would recommend separating them by using different subaccounts, so that the projects do not influence each other, git repositories are not shared between projects (unless you want that), and backend systems are only available as needed. Once deployed to your on-premise ABAP Repository, the developments can be transported as usual with an on-premise Solution Manager, as indicated in my architecture.

15) Using the Solution Manager to transport Multi-Target Applications and monitor applications

Your on-premise Solution Manager can be used in combination with SAP Cloud Platform as well. Using CTS+, SolMan can transport Multi-Target Applications (MTAs) to target subaccounts. Although MTAs currently cannot be exported automatically (you have to add them to your transport manually), SolMan can deploy the MTA to the target subaccount. This way, you benefit from a controlled and audited release process if you are required to, for example for compliance reasons. An MTA consists of multiple modules that you can package and transport together as a combined release. HANA XS classic developments can also be transported using CTS+ and attached to the same transport request, but not as part of the same MTA.
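To show what "multiple modules packed together as a combined release" means physically, here is a simplified Python sketch that builds an MTA archive: a ZIP file containing a META-INF/MANIFEST.MF that links each packed file to its module, a META-INF/mtad.yaml deployment descriptor, and the module archives themselves. This follows the general MTA archive layout as I understand it; the function name, module names, and module types are hypothetical placeholders, and real archives are normally produced by the MTA build tooling rather than by hand.

```python
import io
import zipfile


def build_mtar(mta_id: str, version: str, modules: dict) -> bytes:
    """Pack several module archives into one MTA archive (simplified sketch).

    modules maps a module name to (module type, bytes of its built archive).
    Module types are placeholders; use the type that matches your module.
    """
    manifest = ["Manifest-Version: 1.0", ""]
    descriptor = ["_schema-version: '2.0'", f"ID: {mta_id}",
                  f"version: {version}", "modules:"]
    for name, (module_type, _) in modules.items():
        # One manifest section per module links the file to its MTA module
        manifest += [f"Name: {name}.zip", f"MTA-Module: {name}",
                     "Content-Type: application/zip", ""]
        descriptor += [f"  - name: {name}", f"    type: {module_type}"]
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        archive.writestr("META-INF/MANIFEST.MF", "\n".join(manifest) + "\n")
        archive.writestr("META-INF/mtad.yaml", "\n".join(descriptor) + "\n")
        for name, (_, content) in modules.items():
            archive.writestr(f"{name}.zip", content)
    return buffer.getvalue()
```

The resulting single file is what you would attach to the CTS+ transport request manually, so that all modules move through the landscape as one release.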

Besides transporting applications, SolMan can also help monitor cloud applications on SAP CP. Although SAP development has many plans to extend the monitoring capabilities further, good features are already available today, such as end-user experience monitoring, exception monitoring for cloud applications, and monitoring of your integrations via the Integration Service or the Cloud Connector.

16) Managing hybrid applications with Solution Manager

A very interesting extension of scenario 15 is an application that has on-premise components in addition to its cloud-based part. Let's assume you develop your Fiori frontend in Web IDE and deploy it to a subaccount in SAP CP. The Fiori application most likely depends on the availability (and correct version!) of certain backend services in your on-premise systems. When you transport and release new versions of the overall hybrid application (on-premise and cloud components), you want all parts of the application to be in sync, and not, for example, backend version 2 being called by frontend version 1. To achieve this, your Solution Manager can combine development fragments from all required components and systems in one large transport request. It would transport, for example, the ABAP components along the on-premise system line and your cloud-based MTA with the method described in scenario 15. In addition, you probably also have Cloud Connectors integrating your systems (and as I showed in scenario 4, you should at least distinguish between non-productive and productive Cloud Connectors). In the end, your entire scenario looks like this:
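The "keep everything in sync" rule can be expressed as a simple release gate. The following Python sketch is a hypothetical model, not a Solution Manager API: one combined transport request carries the cloud and on-premise components, and it is only considered ready for import when every component has the release's version.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    name: str      # e.g. "Fiori UI (MTA)" or "OData service (ABAP)"
    target: str    # e.g. "CP subaccount" or "ERP system line"
    version: str


@dataclass
class TransportRequest:
    """Hypothetical model of one combined transport for a hybrid application."""
    release: str
    components: list = field(default_factory=list)

    def add(self, component: Component) -> None:
        self.components.append(component)

    def out_of_sync(self) -> list:
        """Names of components whose version differs from the release."""
        return [c.name for c in self.components if c.version != self.release]

    def ready_for_import(self) -> bool:
        # Import only if every on-premise and cloud part has the same version
        return bool(self.components) and not self.out_of_sync()
```

For example, a request for release "2" that contains the Fiori MTA in version 2 but the ABAP OData service still in version 1 would report the OData service as out of sync and would not be released.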

17) Understanding the internal structure of the SAP Identity Authentication Service

A question I am repeatedly asked concerns the structure and components of the SAP Identity Authentication Service and how it relates to subaccounts. I will therefore use two architecture diagrams to show how the service is structured. First, think of the SAP Identity Authentication Service as a SaaS offering for user management: you can license one or more tenants of this SAML identity provider. Each tenant has its own configuration, user base, reporting, and administrator users, and a single tenant is meant to serve multiple applications in parallel as identity provider. It is important to understand that each tenant has ONE user base and ONE group base. If you want completely separate sets of users, you therefore need multiple tenants. On the other hand, because one user base can serve multiple applications, users can access different cloud applications with the same credentials. Access to the cloud applications (authorization) should be controlled via user groups or other user properties (we talked about the SAML assertion attributes earlier). Normally, each subaccount is integrated via one so-called "Application" in the Identity Authentication Service. This "Application" is essentially a configuration of the respective SAML endpoint: you can, for example, configure its login screen, password policy, and terms of use. You can also choose whether the Application takes its users from the internal user store (and group store) or forwards authentication to another identity provider (a very powerful feature of SAML, as I explain in this blog https://blogs.sap.com/2017/03/10/part-5-beta-sap-cp-member-provisioning-via-microsoft-adfs/).
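The relationship between tenant, user base, and Applications can be summarized in a small data model. The Python sketch below is hypothetical and heavily simplified (no passwords, no real SAML messages): one tenant holds one user and group base, each Application points to a subaccount and either uses the tenant's own store or forwards to a corporate IdP, and authorization is left to the cloud application based on the group attribute. All names are placeholders.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Application:
    """One SAML endpoint configuration ("Application") inside a tenant."""
    name: str
    subaccount: str
    corporate_idp: Optional[str] = None  # None = use the tenant's own user store


@dataclass
class Tenant:
    """One tenant: ONE user base and ONE group base shared by all Applications."""
    name: str
    users: dict = field(default_factory=dict)         # user ID -> set of groups
    applications: dict = field(default_factory=dict)  # name -> Application

    def login(self, app_name: str, user_id: str) -> dict:
        app = self.applications[app_name]
        if app.corporate_idp is not None:
            # Authentication is forwarded to another SAML identity provider
            return {"forward_to": app.corporate_idp}
        if user_id not in self.users:
            raise PermissionError("unknown user")
        # Simplified "SAML assertion": user ID plus group attribute
        return {"name_id": user_id, "groups": sorted(self.users[user_id])}


def authorized(assertion: dict, required_group: str) -> bool:
    """Authorization happens in the cloud application, based on groups."""
    return required_group in assertion.get("groups", [])
```

Because both Applications in a tenant read from the same `users` dictionary, the same user can log on to applications in different subaccounts with the same credentials, which is exactly the behavior described above.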
Concerning structure, I already said that in the standard setup, you often find one Identity Authentication Application connected to one subaccount hosting one application. If you host multiple cloud applications in a single subaccount (which might be a good or a bad idea depending on your scenario; consider reading this blog https://blogs.sap.com/2016/10/21/part-1-understanding-hcp-global-accounts-sub-accounts-concept-means... if you are not sure), you can use different Identity Authentication Applications or share one for all applications, depending on your needs. If you choose to integrate multiple Identity Authentication Applications with a single subaccount, you need a URL parameter to specify that you are calling a cloud application with an identity provider other than the subaccount's default identity provider (there can only be one default). Despite all those possibilities, I would say that the most common structure and relation between subaccounts and Identity Authentication tenants looks like this:
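For the non-default IdP case, the application URL simply carries an extra query parameter. To my knowledge, the Neo environment uses the parameter `saml2idp` with the name of the trusted IdP as its value; please double-check this in the documentation for your environment. The following Python sketch only builds such a URL with the standard library; the application URL and IdP name are placeholders.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_idp(app_url: str, idp_name: str) -> str:
    """Add (or replace) the saml2idp parameter selecting a non-default IdP."""
    parts = urlsplit(app_url)
    query = [(key, value)
             for key, value in parse_qsl(parts.query, keep_blank_values=True)
             if key != "saml2idp"]
    query.append(("saml2idp", idp_name))
    return urlunsplit(parts._replace(query=urlencode(query)))


if __name__ == "__main__":
    print(with_idp("https://myapp-sub.hana.ondemand.com/index.html?sap-language=EN",
                   "partners.accounts.ondemand.com"))
```

Existing query parameters are kept, and a previously set `saml2idp` value is replaced rather than duplicated.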

With the colors, I tried to indicate that the different Identity Authentication Applications belong to separate Cloud Platform subaccounts but share a common set of users and groups.

18) Using multiple tenants of SAP Identity Authentication Service

As an extension to scenario 17, you could (and probably should) introduce multiple tenants of the Identity Authentication Service in your cloud landscape. I typically suggest this to distinguish between productive and non-productive tenants. Development and operations become easier when one tenant serves productive scenarios only and is maintained by the company's "normal" user administrators, while a separate non-productive tenant gives developers more freedom to experiment, create test users, and so on. The structure of Identity Authentication Applications within each tenant remains valid; the difference is that each tenant has its own administrators, reporting, user base, and set of available groups. Your scenario with separate tenants for productive and non-productive authentication and user administration is shown in the following picture:
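With separate tenants, one simple rule is worth checking regularly: productive subaccounts trust only the productive tenant, and non-productive subaccounts only the non-productive one. The Python sketch below is a hypothetical consistency check over a landscape description; tenant host names and subaccount names are placeholders.

```python
# Hypothetical landscape; tenant host names are placeholders.
TENANT_STAGE = {
    "corp-prod.accounts.ondemand.com": "productive",
    "corp-test.accounts.ondemand.com": "non-productive",
}


def misconfigured(subaccounts: dict, tenant_stage: dict) -> list:
    """Return subaccounts whose stage differs from their trusted tenant's stage.

    subaccounts maps a subaccount name to (stage, trusted tenant host).
    Unknown tenants also count as misconfigured.
    """
    return sorted(
        name
        for name, (stage, tenant) in subaccounts.items()
        if tenant_stage.get(tenant) != stage
    )
```

A productive subaccount accidentally trusting the test tenant, where developers may create test users freely, would show up in the result.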

19) User Management: Different ways of integrating the corporate user store via SAML

When your company or client has an externally available SAML endpoint for authentication and you plan to use it, there are different ways to integrate it, with different implications for the scalability and functionality of the solution. Let's first look at the architecture options and then explain the differences:

For SAML, it's important that the identity provider endpoint is reachable from the network of the browser of the user who is accessing an application, because the browser is redirected to this endpoint during login. Therefore, an externally available SAML endpoint makes user authentication in public cloud scenarios much easier; I would consider this state of the art. If you look at Subaccount C first in the picture, you can see that it is directly integrated with the Corporate Identity Provider. This 'direct' integration via exchanging SAML metadata is definitely the easiest, but it can cause scalability issues in the long run: for every new subaccount, you need to touch your Corporate Identity Provider and configure a new SAML endpoint (or at least exchange the metadata, because every SAP CP subaccount has its own metadata). Typically, this involves cross-department interaction and might slow you down when you want to add new subaccounts to your global account. I therefore recommend an alternative solution: Subaccount A and Subaccount B are both integrated with an SAP Cloud Identity Authentication tenant. Establishing this trust connection is a one-click integration, so it happens very fast. As we learned earlier, this creates a new "Application" in the Identity Authentication tenant. For every Application within the tenant, you can configure the identity provider, meaning the user base used for authentication. In Identity Authentication, you can configure your Application to use a "Corporate Identity Provider" as its identity provider. This Corporate Identity Provider is configured once, by establishing a trust relationship between the Corporate IdP and your Identity Authentication tenant, and can then be reused for every Application.
The Identity Authentication tenant then redirects all authentication requests to the 'generic' SAML endpoint (highlighted in blue), which means all subaccounts use the same authentication forwarding mechanism. Of course, the trust configuration between the corporate identity provider and the Identity Authentication tenant requires a bit of work, and you have to select carefully which SAML attributes the corporate identity provider's endpoint exposes so that all cloud scenarios receive the attributes they need. But once the configuration is set up as for Subaccounts A and B, you can quickly add new subaccounts without ever touching your corporate identity provider.
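The scalability argument boils down to counting trust relationships on the corporate IdP side. The following hypothetical Python sketch models a landscape where each subaccount is integrated either "direct" or via the Identity Authentication tenant ("ias") and returns the set of service providers the corporate IdP must trust, plus the browser's redirect chain for each mode. It is a counting model only, not an API.

```python
def corporate_idp_trusts(subaccounts: dict) -> set:
    """Service providers the corporate IdP must be configured to trust.

    subaccounts maps a subaccount name to "direct" (metadata exchanged with
    the corporate IdP) or "ias" (trust only with the Identity Authentication
    tenant, which forwards to the corporate IdP).
    """
    trusts = set()
    for name, mode in subaccounts.items():
        if mode == "direct":
            trusts.add(name)          # each subaccount has its own SAML metadata
        elif mode == "ias":
            trusts.add("ias-tenant")  # one trust, reused by every Application
        else:
            raise ValueError(f"unknown integration mode: {mode}")
    return trusts


def login_hops(mode: str) -> list:
    """Redirect chain of the user's browser during SAML login (simplified)."""
    if mode == "direct":
        return ["subaccount", "corporate-idp"]
    return ["subaccount", "ias-tenant", "corporate-idp"]
```

For the pictured landscape (A and B via the tenant, C direct), the corporate IdP needs two trusts; adding ten more subaccounts via the tenant still leaves it at two, while each additional direct subaccount adds one more. The price is one extra redirect hop during login.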

I also want to point out an important detail concerning members: when using the Platform Identity Provider Service, members can only be provisioned from an Identity Authentication tenant. But when you configure the forwarding as described here, the members of your subaccounts can also be provisioned by your corporate identity provider. This is a major improvement for administration, operations, and compliance, but unfortunately it does not yet work smoothly for all scenarios (more details can be found in this blog https://blogs.sap.com/2017/03/10/part-5-beta-sap-cp-member-provisioning-via-microsoft-adfs/).

20) User Management: Internal and External Application Users for the same application

Let's imagine the following scenario: your cloud application is used both by your company's internal employees and by external partners. Most likely, your internal employees can be authenticated and authorized by your corporate SAML identity provider, while your external partners have no user accounts in your corporate IdP. Often, partners' user accounts are kept in separate identity providers, for good reasons. I will now explain my approach to letting both groups of users use the same application while federating authentication and authorization correctly.

As you can add multiple application identity providers to a subaccount (although you have to choose one default IdP), you could, for example, add both your corporate identity provider (for your internal users) and the SAP Identity Authentication Service (for your external users) to the subaccount where the application in question is deployed. Internal users (blue) then authenticate against the corporate IdP, and external users (orange) authenticate against the Identity Authentication Service. If your corporate IdP is only available from within the internal network, internal users can only authenticate while they are in the internal network themselves. If you want to allow full mobile access from anywhere without VPNs or similar measures, you also need an external SAML endpoint for your internal users. Your external users will most likely not be in your internal network, so they need an external SAML endpoint anyway, which makes the Identity Authentication Service the ideal place to maintain those users. Authorization is often based on a user's group membership. Those groups can be maintained in your on-premise corporate IdP for internal users and in the Identity Authentication tenant for your external users. Mapping rules are then defined individually for each application IdP configuration in the subaccount, so that the groups in the subaccount map to the groups coming from each IdP.
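Why the mapping rules are defined per IdP becomes clear in a small example. In the hypothetical Python sketch below, rules are keyed by the pair (IdP, IdP group), so a group name asserted by the partner IdP can never be mapped to an internal-only subaccount group, even if a partner administrator created a group with the same name as an internal one. IdP names, group names, and the rule table are placeholders.

```python
# Hypothetical mapping rules: (application IdP, group in assertion) -> subaccount group
MAPPING_RULES = {
    ("corporate-idp", "GRP_SALES_EMPLOYEES"): "SalesUser",
    ("corporate-idp", "GRP_SALES_ADMINS"): "SalesAdmin",
    ("ias-partners", "PartnerSales"): "SalesUser",
}


def subaccount_groups(idp: str, assertion_groups: list) -> set:
    """Map the groups asserted by one IdP to subaccount groups.

    Only rules defined for this particular IdP are applied; unmapped groups
    are ignored.
    """
    return {MAPPING_RULES[(idp, group)]
            for group in assertion_groups
            if (idp, group) in MAPPING_RULES}
```

Internal and external users can end up in the same subaccount group (here "SalesUser"), while the admin group can only be reached through the corporate IdP.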

Compared to architecture 13, you can see that I introduced a new location for "roles" in the subaccount. In SAP CP, roles can be configured either on subaccount level (the "role" box on the right) or within an application (the "role" box on the left). The difference is that subaccount-level roles can be shared between applications, whereas roles that exist only within an application cannot be shared and therefore cannot be reused by multiple applications. Both types of roles have their reason to exist: in some cases shared roles make sense, whereas for other roles (often more application-specific ones) shareability does not add value. It's up to you to decide.
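The difference between the two role locations can be sketched as a visibility rule. In this hypothetical Python model of the description above (not the SAP CP role API), an application sees all subaccount-level roles plus its own application roles, but never another application's roles. Application and role names are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Subaccount:
    # Subaccount-level roles: shareable, reusable by every application
    shared_roles: set = field(default_factory=set)
    # Application-level roles: visible only inside the owning application
    app_roles: dict = field(default_factory=dict)  # app name -> set of roles

    def roles_visible_to(self, app: str) -> set:
        """Roles an application can use: shared ones plus its own."""
        return self.shared_roles | self.app_roles.get(app, set())
```

A generic role such as "Employee" fits the shared level, while something like "HRAdmin" that only makes sense inside one application can stay application-specific.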

21) SAP Cloud Platform Integration Service (formerly HANA Cloud Integration, HCI)

If you want to use the SAP Cloud Platform Integration Service (formerly HANA Cloud Integration, HCI) and you are not sure how many subaccounts, tenants, and SCCs you will need, the following scenario may serve as a good blueprint:

In this scenario, an application developed on SAP Cloud Platform could, for example, extend a SaaS solution and include data from two backend systems. Integration is handled by the Integration Service.