- Analytical Business Rules with HANA and R – Foreca...

ttrapp

Active Contributor

10-09-2016

11:39 PM

This blog series is about analytical business rules. I will focus on advanced techniques using R in BRFplus rule systems. Together with PAL, R is one of the tools that enhance HANA with techniques from predictive analytics.

Operational vs. Analytical Decision Management

Operational Decision Management is related to digitalization using business rules. With business rules we automate processes by doing automated decisions based on calculations. Usually the rule systems have a simple structure as algorithms specified by business process experts.

Analytical decision management has a different flavor since it uses methods from statistics and data mining to detect business rules. In many cases this is done by statisticians or data analysts and typical example are calculations of risks f.e. of a financial product.

Sometimes data analysts doing analytical business rules management find rules having a simple structure and sometimes the rules contain difficult calculations having their origin from analytical models. From now on I call those rules analytical rules.

How to find Analytical Rules?

Let’s look at a hypothetical and much simplified example. A revenue analyst wants to analyze the revenue of a certain business unit. This process should be automated and a rule system should warn a business analyst if the revenue gets critical. I will present two (simplified) answers which show you what I mean.

As I wrote above usual a specialist or sometimes a specialized organizational will define the rules. Those specialists will usually at first look at the data since visualization is very important for a data analyst or data scientist. SAP offers many tools for this task: SAP Lumira is perfect for this task, but you can also develop your own analytical apps using frameworks like APF for exploratory data analysis. HANA experts can also use HDB studios and people with R skills can use the Outside-In approach described here. So R offers a workbench that can be used by data scientists for everything that can’t be done with a dashboard. This includes specials charts for visualization which are described in this blog: https://www.r-bloggers.com/interactive-visualizations-with-r-a-minireview/ but also statistical tests.

The following diagram shows a time series in R imported in a data frame. If you look at the picture you will see that it is hard to see any pattern. You may argue that this is no realistic example since you need would expect a visible trend (perhaps linear), seasonality (regular and predictable changes which recur in a certain time interval) and a cyclic component (regular fluctuations).

We will come back the question of the trend later. At first we start with a very simple analytical rule: we want to find outliers.

Outlier Detection – Checking of Thresholds

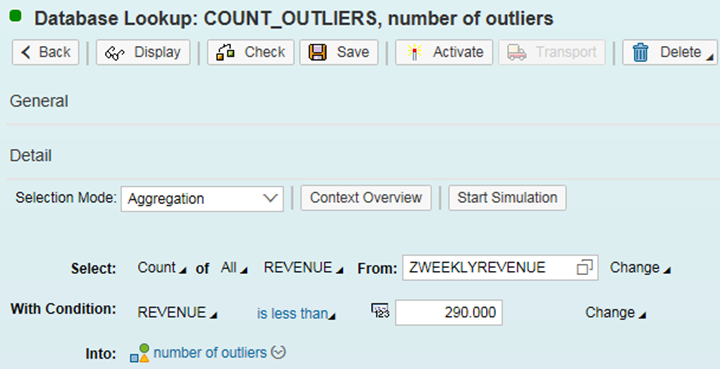

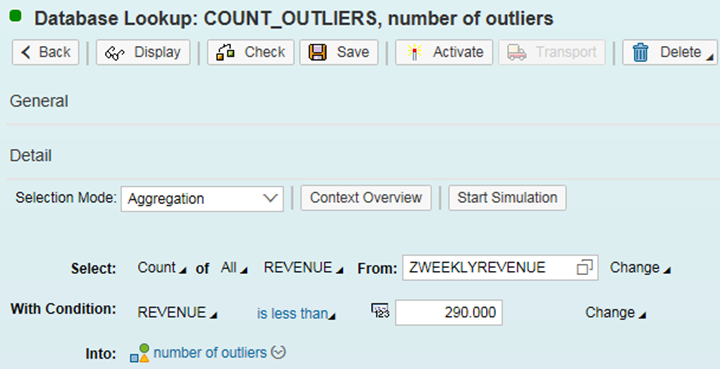

Outliers are observations that you don’t expect. Usually those are very big or small values which can occur by chance. In our context we mean something different and ask how many revenues are smaller than a certain threshold – say less or equal 290.000. In the time series above you find 9 of those values which can be easily checked using the following DB Lookup expression in BRFplus.

This is a very simple analytical rule: we ask how many times the value is under a certain limit. I call this an analytical rule since I don’t check the properties of one business object – I check a huge number of values. With BRFplus DB Lookup working on transparent tables or CDS views you can check a huge amount of line items efficiently especially using the HANA database.

Finding Trends and Forecasts

In the following I will present a simplified example for a more advanced analytical rule that is using prediction. If you look at the example an example of time series above a natural question is, whether we can detect a trend and can even make predictions. A typical question is whether in the next time in the future there will be some outliers.

The example above is a typical example that in most cases it is hard to detect a trend. When a statistician analyses a time series instance usually he will try to decompose the time series to detect seasonal and cyclic behavior. Without those components the time series consists of a smooth component (trend) and random fluctuations (“white noise”). A well know technique is smoothing which removes irregularities to provide a clearer view of the true underlying pattern in the series. This can be done very easily using R in combination with HANA which I described in a previous blog: http://scn.sap.com/community/hana-in-memory/blog/2016/07/31/hana-and-r-inside-out-and-outside-in In the following I show a very simplified example of a prediction. In contrast to the last blog now I using the Inside-Out scenario where R is called from SQLscript procedures.

Supposed we selected the value of above time series using SQL we can create create a time series object in R:

R provides all state-of-the-art algorithms for smoothing, f.e. Holt Winters smoothing:

In this example we use an automated estimation of the smoothing parameters. They are most important since in exponential smoothing methods. All exponential smoothing methods usually look at the latest values in the time series where older values have less influence. The smoothing parameters define exactly the influence of older values.

With the

When smoothing is performed you can make a prediction using the forecast package in R:

Here R calculated a prediction for eight weeks. The result can be printed out with the following command:

In the picture you can see the values in 80% (middle blue) and 95% (light blue) prediction interval.

The prediction intervals can be used in predictive analytical rules. If they f.e. the lower values of the 95% prediction interval go below a certain value for a number of times, this can lead to a clearing case where an official in charge will be alerted using a business workflow for example.

It is very easy to implement this feature in BRFplus and in R-Inside-Out szenarion. Here a SQLscript procedure is using R. The following procedure has the time series as input, performs smooting, makes a forecast and return the lower values of the 95% prediction intervals. You will easily understand that I reused the code snippets above.

This procedure can be called using a SQL Script procedure in HANA

This SQLscript procedure can be called from an AMDP or with a Database Procedure Proxy and both artifact can the called from BRFplus .At the moment you can’t use R in AMDP which makes the software logistics a little bit painful.

Some Remarks about Forecast Automation

Usually forecasting is very difficult. There is no single algorithm that will provide reasonable results for all problem instances. Usually a data scientist will have to visualize data, perform time series decomposition, will try out different smoothing parameters and finally will use statistical checks to analyze the output of the algorithms. At least this was what I learned in the 90s of the last century at university. This is true but in the last years that science has made progress. If you want to learn about this I recommend the book “Forecasting with Exponential Smoothing - The State Space Approach” written by Rob Hyndman and others: http://exponentialsmoothing.net/ The R forecast packages used above was written by Rob Hyndman, too. So let me describe some highlights of the book:

Also any researchers believe that with the above mentioned approach machines usually perform better doing predictions.

I recommend to test the quality of predictions. With HANA this can be done in real time: just calculate predictions of past values and compare them with actual values. This is of course a huge computational effort that can be done easily using In-Memory technology. Those computations should be revisited by statisticians who will use it to optimize the prediction algorithms.

Summary

I discussed two simple examples for analytical business rules. For a simple forecast scenarion I used the HANA/R integration since the R library provides all state-of-the-art algorithms for predictive analytics. Those can be called from ABAP and so from BRFplus to enhance Business Rules with complex calculations. I consider this as a giant step forward to intelligent decision making,

Operational vs. Analytical Decision Management

Operational decision management is related to digitalization using business rules. With business rules we automate processes by making automated decisions based on calculations. Usually the rule systems have a simple structure: they are algorithms specified by business process experts.

Analytical decision management has a different flavor since it uses methods from statistics and data mining to detect business rules. In many cases this is done by statisticians or data analysts; a typical example is the calculation of risks, e.g. of a financial product.

Sometimes data analysts doing analytical business rules management find rules with a simple structure, and sometimes the rules contain difficult calculations that originate from analytical models. From now on I call those rules analytical rules.

How to find Analytical Rules?

Let’s look at a hypothetical and much simplified example. A revenue analyst wants to analyze the revenue of a certain business unit. This process should be automated, and a rule system should warn a business analyst if the revenue becomes critical. I will present two (simplified) answers which show what I mean.

As I wrote above, usually a specialist or sometimes a specialized organizational unit will define the rules. Those specialists will usually look at the data first, since visualization is very important for a data analyst or data scientist. SAP offers many tools for this task: SAP Lumira is well suited, but you can also develop your own analytical apps for exploratory data analysis using frameworks like APF. HANA experts can also use HDB Studio, and people with R skills can use the Outside-In approach described here. So R offers a workbench that data scientists can use for everything that can’t be done with a dashboard. This includes special charts for visualization, which are described in this blog: https://www.r-bloggers.com/interactive-visualizations-with-r-a-minireview/ but also statistical tests.

The following diagram shows a time series imported into a data frame in R. If you look at the picture you will find it hard to see any pattern. You may argue that this is not a realistic example, since you would expect a visible trend (perhaps linear), seasonality (regular and predictable changes which recur in a certain time interval) and a cyclic component (regular fluctuations).

We will come back to the question of the trend later. First we start with a very simple analytical rule: we want to find outliers.

Outlier Detection – Checking of Thresholds

Outliers are observations that you don’t expect. Usually those are very big or very small values which can occur by chance. In our context we mean something different and ask how many revenues are smaller than a certain threshold, say less than or equal to 290,000. In the time series above you find 9 of those values, which can easily be checked using the following DB Lookup expression in BRFplus.

This is a very simple analytical rule: we ask how many times the value is under a certain limit. I call this an analytical rule since I don’t check the properties of one business object; I check a huge number of values. With a BRFplus DB Lookup working on transparent tables or CDS views you can check a huge number of line items efficiently, especially on the HANA database.
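While exploring the data, the same threshold count can be reproduced in R before it is modeled as a DB Lookup. The following is only a minimal sketch: the revenue values are synthetic (the data from this post is not reproduced here), and `max_allowed` is a hypothetical escalation limit that does not come from the original rule.

```r
# Synthetic weekly revenues (assumption: illustrative values only)
set.seed(42)
revenues <- round(rnorm(52, mean = 300000, sd = 8000))

threshold <- 290000   # limit taken from the text

# Rule: count the observations at or below the threshold
n_below <- sum(revenues <= threshold)

# Hypothetical escalation limit: warn when more than 5 weeks hit the threshold
max_allowed <- 5
warn <- n_below > max_allowed

cat("Weeks at or below threshold:", n_below, "\n")
cat("Warning raised:", warn, "\n")
```

In a productive rule system the count itself would stay in the database; the R version is mainly useful for checking a candidate threshold against historical data.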

Finding Trends and Forecasts

In the following I will present a simplified example of a more advanced analytical rule that uses prediction. Looking at the time series above, a natural question is whether we can detect a trend and even make predictions. A typical question is whether there will be outliers in the near future.

The example above shows that in most cases it is hard to detect a trend. When a statistician analyses a time series, he will usually try to decompose it to detect seasonal and cyclic behavior. Without those components the time series consists of a smooth component (trend) and random fluctuations (“white noise”). A well-known technique is smoothing, which removes irregularities to provide a clearer view of the true underlying pattern in the series. This can be done very easily using R in combination with HANA, which I described in a previous blog: http://scn.sap.com/community/hana-in-memory/blog/2016/07/31/hana-and-r-inside-out-and-outside-in In the following I show a very simplified example of a prediction. In contrast to the last blog, I am now using the Inside-Out scenario, where R is called from SQLScript procedures.

Suppose we have selected the values of the time series above using SQL. We can then create a time series object in R:

```r
revenueseries <- ts(revenues)
```

R provides state-of-the-art algorithms for smoothing, e.g. Holt-Winters smoothing:

```r
revenueseriessmooth <- HoltWinters(revenueseries, beta=FALSE, gamma=FALSE)
```

In this example the smoothing parameter is estimated automatically: with beta=FALSE and gamma=FALSE the trend and seasonal components are switched off, so only the level parameter alpha is fitted. The smoothing parameters are the most important setting in exponential smoothing methods. All exponential smoothing methods weight the latest values in the time series most heavily, while older values have less influence. The smoothing parameters define exactly how much influence the older values have.

With the `plot(revenueseriessmooth)` command in R you can see the result in the following diagram.
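To make the role of the smoothing parameter more concrete, here is a small sketch that recomputes the smoothed level by hand and lists the weights given to older observations. It assumes synthetic revenue data and relies on the documented default of `HoltWinters` for simple exponential smoothing, where the start level is the first observation.

```r
# Synthetic weekly revenues (assumption: illustrative values only)
set.seed(1)
revenues <- round(rnorm(60, mean = 300000, sd = 8000))
revenueseries <- ts(revenues)

# Simple exponential smoothing; alpha is estimated automatically
fit <- HoltWinters(revenueseries, beta = FALSE, gamma = FALSE)
alpha <- as.numeric(fit$alpha)

# Recursion: level[t] = alpha * x[t] + (1 - alpha) * level[t - 1],
# started with level[1] = x[1]
level <- numeric(length(revenues))
level[1] <- revenues[1]
for (t in 2:length(revenues)) {
  level[t] <- alpha * revenues[t] + (1 - alpha) * level[t - 1]
}

# Weight of an observation that is k steps old: alpha * (1 - alpha)^k
weights <- alpha * (1 - alpha)^(0:5)

print(alpha)
print(round(weights, 4))
print(c(manual = level[length(level)],
        holtwinters = unname(fit$coefficients["a"])))
```

An alpha close to 1 means that almost only the latest value counts, while an alpha close to 0 spreads the weight over a long history.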

Once smoothing has been performed, you can make a prediction using the forecast package in R:

```r
library("forecast")
# forecast() dispatches to the method for HoltWinters objects
revenueseriesforecasts <- forecast(revenueseriessmooth, h=8)
```

Here R calculates a prediction for eight weeks. The result can be plotted with the following command:

```r
plot(revenueseriesforecasts)
```

In the picture you can see the 80% (middle blue) and 95% (light blue) prediction intervals.

The prediction intervals can be used in predictive analytical rules. If, for example, the lower values of the 95% prediction interval fall below a certain value a number of times, this can lead to a clearing case in which an official in charge is alerted, for example using a business workflow.

It is very easy to implement this feature with BRFplus and the R Inside-Out scenario. Here a SQLScript procedure uses R. The following procedure takes the time series as input, performs smoothing, makes a forecast and returns the lower values of the 95% prediction intervals. You will easily see that I reused the code snippets above.
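Such a rule on the prediction interval could look like the following sketch. To stay self-contained it uses `predict()` from base R instead of the forecast package, works on synthetic data, and the values of `threshold` and `max_hits` are hypothetical.

```r
# Synthetic weekly revenues (assumption: illustrative values only)
set.seed(7)
revenues <- round(rnorm(60, mean = 300000, sd = 8000))
fit <- HoltWinters(ts(revenues), beta = FALSE, gamma = FALSE)

# Base R alternative to the forecast package:
# predict() returns the columns "fit", "upr" and "lwr"
pred <- predict(fit, n.ahead = 8, prediction.interval = TRUE, level = 0.95)
lower95 <- as.numeric(pred[, "lwr"])

threshold <- 290000   # hypothetical critical revenue
max_hits  <- 3        # hypothetical number of tolerated weeks

# Rule: open a clearing case if the lower bound falls below the
# threshold in at least max_hits of the forecasted weeks
hits <- sum(lower95 < threshold)
open_clearing_case <- hits >= max_hits

cat("Forecast weeks below threshold:", hits, "\n")
cat("Open clearing case:", open_clearing_case, "\n")
```

In BRFplus the comparison against the threshold would typically be modeled as a rule on the returned table, so that business experts can change the limits without touching the R code.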

```sql
DROP PROCEDURE "MY_SCHEMA"."REVENUE_FORECAST";
CREATE PROCEDURE "MY_SCHEMA"."REVENUE_FORECAST" (IN revenue "MY_SCHEMA"."T_SERIES", OUT result "MY_SCHEMA"."T_SERIES")
LANGUAGE RLANG
READS SQL DATA AS
BEGIN
  # The input table arrives as a data frame named like the parameter;
  # this assumes T_SERIES has a single column REVENUE
  revenueseries <- ts(revenue$REVENUE)
  revenueseriessmooth <- HoltWinters(revenueseries, beta=FALSE, gamma=FALSE)
  library("forecast")
  revenueseriesforecasts <- forecast(revenueseriessmooth, h=8)
  # Return the lower bounds of the 95% prediction interval
  result <- as.data.frame(matrix(revenueseriesforecasts$lower[,"95%"]))
  colnames(result) <- c("REVENUE")
END;
```

This procedure can be called from a SQLScript procedure in HANA:

```sql
/********* Begin Procedure Script ************/
BEGIN
  lt_threshold_low = SELECT REVENUE FROM "SAPDAT"."ZWEEKLYREVENUE"
                     WHERE PARTNER = :IV_PARTNER AND CLIENT = :IV_CLIENT
                     ORDER BY FISCYEAR ASC, WEEK ASC;
  CALL "MY_SCHEMA"."REVENUE_FORECAST"(:lt_threshold_low, :OUTPUT_TABLE);
END
```

This SQLScript procedure can be called from an AMDP or with a Database Procedure Proxy, and both artifacts can be called from BRFplus. At the moment you can’t use R in AMDP, which makes the software logistics a little bit painful.

Some Remarks about Forecast Automation

Usually forecasting is very difficult. There is no single algorithm that provides reasonable results for all problem instances. Usually a data scientist has to visualize the data, perform a time series decomposition, try out different smoothing parameters and finally use statistical checks to analyze the output of the algorithms. At least this is what I learned at university in the 1990s. This is still true, but in recent years the science has made progress. If you want to learn about this, I recommend the book “Forecasting with Exponential Smoothing - The State Space Approach” written by Rob Hyndman and others: http://exponentialsmoothing.net/ The R forecast package used above was written by Rob Hyndman, too. So let me describe some highlights of the book:

- Many methods of exponential smoothing are special cases of the so-called state space approach.

- There are stochastic models for the state space approach, so that you can calculate the above-mentioned prediction intervals for a forecast.

- With so-called information criteria you can assess the predictive ability of each model.

- You can use those techniques to automate forecasting: fit each of the above-mentioned models, use the information criteria for model selection, and then choose the best model for the calculation.

- In the literature you find many studies showing that combinations of different models perform very well.

Also, many researchers believe that with the above-mentioned approach machines usually perform better at making predictions.
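The automated model selection described in the list above can be tried out with the `ets()` function of the forecast package, which fits several exponential smoothing state space models and by default picks one using an information criterion. This is only a sketch on synthetic data, and it assumes the forecast package is installed; otherwise the selection step is skipped.

```r
# Synthetic weekly revenues (assumption: illustrative values only)
set.seed(11)
revenues <- round(rnorm(60, mean = 300000, sd = 8000))
revenueseries <- ts(revenues)

if (requireNamespace("forecast", quietly = TRUE)) {
  # ets() compares candidate state space models and keeps the best one
  fit <- forecast::ets(revenueseries)
  cat("Selected model:", fit$method, "\n")
  cat("AICc of selected model:", fit$aicc, "\n")

  # Forecast eight periods with 80% and 95% prediction intervals
  fc <- forecast::forecast(fit, h = 8, level = c(80, 95))
  print(fc$lower[, "95%"])
} else {
  message("Package 'forecast' is not installed; skipping model selection.")
}
```

Which model is selected depends on the data, so it is worth logging the chosen model together with the forecast when this runs unattended.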

I recommend testing the quality of predictions. With HANA this can be done in real time: just calculate predictions for past values and compare them with the actual values. This is of course a huge computational effort, but one that can be handled easily using In-Memory technology. Those computations should be reviewed by statisticians, who will use them to optimize the prediction algorithms.
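One possible way to compare predictions of past values with the actual values is a rolling-origin backtest: refit the model on the first n observations, predict observation n + 1, and repeat for growing n. The sketch below uses synthetic data and base R only; `min_train` is a hypothetical minimum history length.

```r
# Synthetic weekly revenues (assumption: illustrative values only)
set.seed(3)
revenues <- round(rnorm(60, mean = 300000, sd = 8000))

min_train <- 30   # hypothetical minimum number of weeks used for fitting

# For each origin n: fit on weeks 1..n, predict week n + 1
origins <- min_train:(length(revenues) - 1)
errors <- sapply(origins, function(n) {
  fit <- HoltWinters(ts(revenues[1:n]), beta = FALSE, gamma = FALSE)
  pred <- as.numeric(predict(fit, n.ahead = 1))
  revenues[n + 1] - pred
})

# Summary measures of the one-step forecast errors
actuals <- revenues[(min_train + 1):length(revenues)]
mae  <- mean(abs(errors))
mape <- mean(abs(errors) / actuals) * 100

cat("Mean absolute error:", round(mae), "\n")
cat("Mean absolute percentage error:", round(mape, 2), "%\n")
```

Tracking these error measures over time shows whether the chosen smoothing method still fits the data or should be revisited.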

Summary

I discussed two simple examples of analytical business rules. For a simple forecast scenario I used the HANA/R integration, since the R libraries provide state-of-the-art algorithms for predictive analytics. Those can be called from ABAP, and therefore from BRFplus, to enhance business rules with complex calculations. I consider this a giant step forward towards intelligent decision making.

- SAP Managed Tags:

- SAP HANA,

- SAP Decision Service Management,

- NW ABAP Business Rule Framework (BRFplus)
