- SAP Lumira Cloud with Data Service Enabled - Part ...

In Part 1 of the blog, we introduced the business scenario of Data Service for SAP Lumira Cloud and the basic workflow.

In practice, as an IT admin I don’t always want to load the full source data into the target, especially when the data volume is large. Often I only want to insert the records that are new since my last update. And if existing records have changed values (e.g. the sales revenue of a record has changed), how can I update just those records?

Advanced workflow using enhanced HCI upload settings

In advanced HCI upload settings, SAP Lumira Cloud introduced Primary Key to help fulfill these needs.

In the new example, we have a story called Best Run Corp Margin Analysis.

The end user created a story board based on initial data and published it to SAP Lumira Cloud.

(1) The visualization has a filter that selects records for Q4 2013 only. In this example, we will not add new records for the filtered quarter; instead, we will update the values for that quarter.

Steps for using advanced upload settings

1. As team admin, find the dataset to which you want to apply advanced settings, and click “HCI Upload Settings” in its action menu.

In the pop-up dialog, select one or more dimensions that will form a primary key.

Validate the Primary Key – while selecting the dimensions, you can click the Validate button to check whether the chosen combination of dimensions has unique values and can therefore serve as the primary key. Keep selecting and validating until the validation passes, which is indicated by a green check.

Apply the Primary Key – when you pass the validation, click on the Apply button so the primary key is applied to the dataset.
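To make the validation step concrete, here is a minimal Python sketch of what a uniqueness check on a combination of dimensions could look like. It is only an illustration of the idea, not how SAP Lumira Cloud implements the Validate button; the function name `validate_primary_key` and the sample columns (`Quarter`, `Region`, `Revenue`) are assumptions made for the example.

```python
from collections import Counter


def validate_primary_key(rows, key_columns):
    """Return (is_unique, duplicates) for the chosen combination of columns."""
    # Count how often each combination of key values occurs in the dataset.
    counts = Counter(tuple(row[col] for col in key_columns) for row in rows)
    duplicates = [key for key, n in counts.items() if n > 1]
    return len(duplicates) == 0, duplicates


# Hypothetical sample rows; the column names are illustrative only.
rows = [
    {"Quarter": "Q3 2013", "Region": "East", "Revenue": 100},
    {"Quarter": "Q3 2013", "Region": "West", "Revenue": 120},
    {"Quarter": "Q4 2013", "Region": "East", "Revenue": 90},
]

# A single dimension is not unique here, so validation would fail.
ok_single, dups_single = validate_primary_key(rows, ["Quarter"])

# Adding a second dimension makes every combination unique.
ok_pair, dups_pair = validate_primary_key(rows, ["Quarter", "Region"])
```

In this sketch, selecting only `Quarter` fails because two rows share the same quarter, while `Quarter` plus `Region` passes. This mirrors the "keep selecting and validating" loop described above.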

2. As team admin, log on to HCI again and create a new task that leverages the primary key.

Note that in this example, we are using a HANA DB as the source for the data refresh.

Then, just as in the basic workflow, you need to select the Lumira Cloud datastore as the target in the next step.

3. Next, you will start to define the dataflow following the wizard.

Make sure you import the dataset that has the primary key you set previously.

In the next step, it is critical that you select the option “Auto correct load based on primary key correlation”.

Unlike in the basic data upload workflow, this option is now enabled because HCI detects that the dataset has a primary key. Selecting it enables UPSERT for the data upload: records whose key already exists in the target are updated, and all other records are inserted.
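The UPSERT behavior can be illustrated with a short Python sketch. This is a simplified in-memory model under assumed names (`upsert`, and the sample columns `Quarter`, `Region`, `Revenue`); it does not reflect how HCI or SAP Lumira Cloud performs the load internally.

```python
def upsert(target, incoming, key_columns):
    """Update rows whose key already exists in target; insert the rest.

    Returns a tuple (inserted, updated) with the number of affected rows.
    """
    # Map each existing key to its position in the target list.
    index = {tuple(row[c] for c in key_columns): i for i, row in enumerate(target)}
    inserted = updated = 0
    for row in incoming:
        key = tuple(row[c] for c in key_columns)
        if key in index:
            target[index[key]] = dict(row)  # same key: overwrite the old values
            updated += 1
        else:
            index[key] = len(target)        # new key: append as a new record
            target.append(dict(row))
            inserted += 1
    return inserted, updated


# Hypothetical data: one changed record for Q4 2013 and one new record.
target = [
    {"Quarter": "Q4 2013", "Region": "East", "Revenue": 90},
    {"Quarter": "Q4 2013", "Region": "West", "Revenue": 110},
]
incoming = [
    {"Quarter": "Q4 2013", "Region": "East", "Revenue": 95},
    {"Quarter": "Q1 2014", "Region": "East", "Revenue": 70},
]

inserted, updated = upsert(target, incoming, ["Quarter", "Region"])
```

Without a primary key, there would be no way to tell that the first incoming row is a correction of an existing record rather than a new one, which is why the option is only available once the key has been applied.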

4. As team admin, you don’t want to load the full data set to the target. Instead, you want to filter for the desired data, e.g. all new records since Q4 2013. To do this, you need to define a variable for the data flow.

Close the previous data flow screen. Edit the task by going to the EXECUTION PROPERTIES tab, and then click the “+” button to add a variable.

Be sure to define the name, data type, and value of the variable.

5. Edit the data flow again to finish the task creation

From the screen above, click OK to close the variable definition. Click on the DATA FLOWS tab. Select the data flow and select Edit.

In the visual data flow screen, use drag and drop to:

(1) Add an intermediate transformation

(2) Link the source table to the transformation

Double-click Transform1 to do the intermediate mapping and filtering.

When the intermediate mapping is done, close the screen and continue with the final mapping as before: double-click the Target_Query icon in the visual data flow, use the output columns from Transform1 as the new input, and map them to the target columns.
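The effect of filtering on a variable in the intermediate transformation can be sketched in Python as follows. This is an assumption-laden illustration, not HCI expression syntax: the helper names `parse_quarter` and `filter_since`, the `Quarter` column, the `"Q4 2013"` label format, and the variable `start_quarter` are all hypothetical.

```python
def parse_quarter(label):
    """Turn a label such as 'Q4 2013' into a sortable (year, quarter) tuple."""
    quarter, year = label.split()
    return int(year), int(quarter[1:])


def filter_since(rows, start_label, column="Quarter"):
    """Keep only rows whose quarter is on or after the start quarter."""
    start = parse_quarter(start_label)
    return [row for row in rows if parse_quarter(row[column]) >= start]


# Stands in for the data flow variable defined in the execution properties.
start_quarter = "Q4 2013"

# Hypothetical source rows.
source_rows = [
    {"Quarter": "Q3 2013", "Revenue": 100},
    {"Quarter": "Q4 2013", "Revenue": 95},
    {"Quarter": "Q1 2014", "Revenue": 70},
]

filtered = filter_since(source_rows, start_quarter)
```

Keeping the cut-off in a variable rather than hard-coding it in the filter means the same task can be rerun later with a different starting point by changing only the variable's value.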

6. Run the task and check the result

When the task is finished, go back to SAP Lumira Cloud.

As the end user, you can open the story again to compare the data.

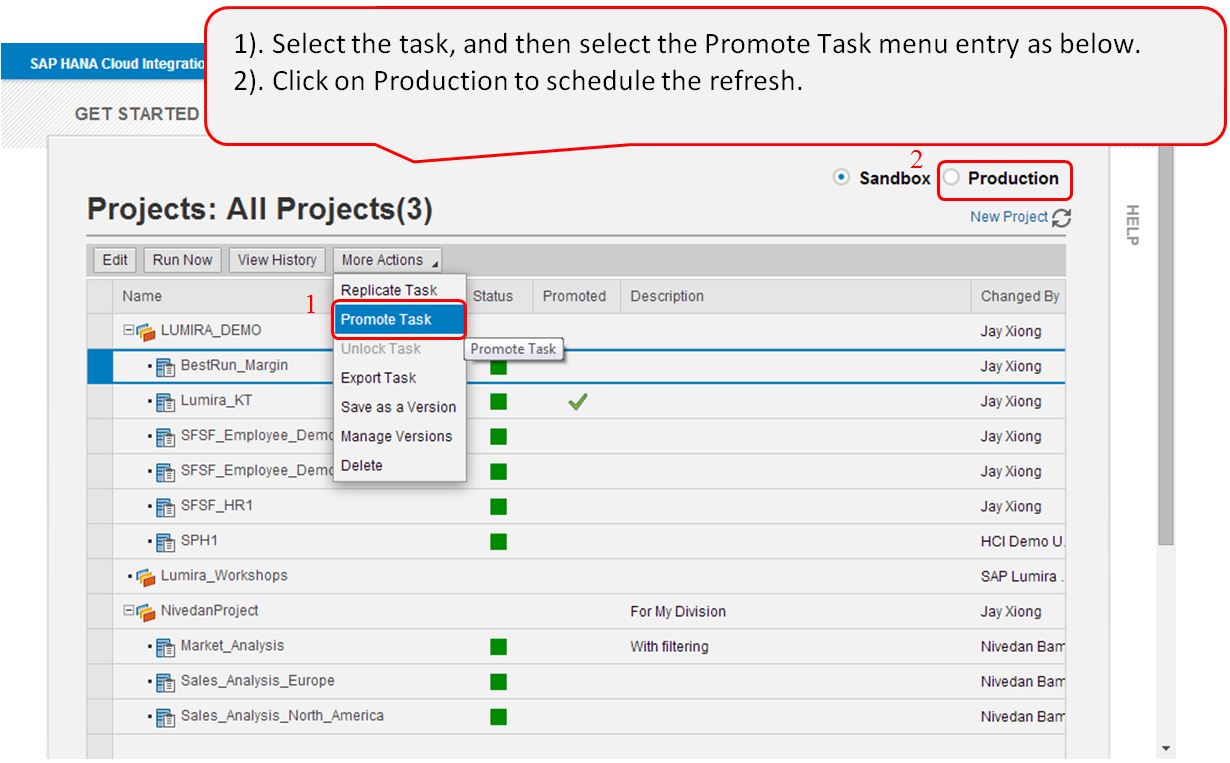

7. Promote the task to production for scheduled refresh

In the Production environment, select the task you want to schedule, and click the Schedule button.

In the pop-up screen, enter the scheduling details.

Summary

With data services introduced through integration with HCI, SAP Lumira Cloud enterprise users can now upload and refresh their cloud data directly from their enterprise database systems in a more scalable way.

The data upload and refresh job is designed mainly for IT admin users, so end users can delegate data refresh tasks to the team admin and concentrate on their own business.

Once the data upload and refresh task is set up, future refreshes can be scheduled, which also makes the IT admin’s job easier.

In short, the new data service integration empowers enterprise users on SAP Lumira Cloud by keeping their data up to date with enterprise data sources.
