- Federation vs. Data Warehousing

Just recently, I got dragged - yet again - into a debate on whether data warehousing is outdated or not. I tried to boil it down to one of the many problems that data warehousing solves. As that helped to steer the discussion toward a constructive and less ideological debate, I've put it into this short blog.

The problem is trivial and very old: if you need data from multiple sources, why not access the data directly in those sources whenever it is needed? That guarantees real-time data. Let's assume that the sources are powerful, that network bandwidth is state of the art, and that overall query performance is excellent. So: why not? In fact, this is absolutely valid, but there is one more thing to consider: all sources to be accessed need to be available. What is the mathematical probability of that? Even small analytic systems (aka data marts) access 30, 40 or 50 data sources. For bigger data warehouses, this goes into the hundreds. That does not mean that every query accesses all those sources; naturally, a query touches a significantly smaller subset. However, from an admin perspective, it is clearly not viable to continuously translate source availability into query availability. One must assume that end users want to be able to access all sources at any time, as required.

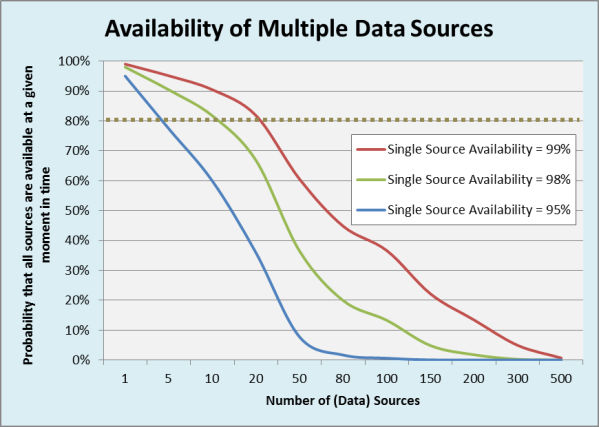

Figure 1 shows three graphs of the probability that all sources (= all data) are available, one for each assumed average availability of a single source. For the latter, 99%, 98% and 95% were considered, to cater for planned and unplanned downtimes as well as network and other infrastructure failures. Even if a service-level agreement (SLA) of only 80% availability (see dotted line) is assumed, it becomes obvious that such an SLA can be achieved only for a modest number of sources. Note that this applies even when data is synchronously replicated into an RDBMS, because replication will obviously fail if the source is down or not accessible.

Fig. 1: Probability that all (data) sources are available given an average availability for a single source.
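
The curves in Figure 1 can be approximated with a few lines of Python. This is a minimal sketch, not the calculation used to produce the figure: it assumes that source outages are independent of each other, so that the probability of all n sources being up is p^n for an average single-source availability p. The function names `all_available` and `max_sources` are made up for this illustration.

```python
def all_available(p: float, n: int) -> float:
    """Probability that all n sources are up at the same time.

    Assumption (not stated in the blog): outages are independent,
    so the individual availabilities simply multiply.
    """
    return p ** n


def max_sources(p: float, target: float) -> int:
    """Largest number of sources for which all_available(p, n) >= target."""
    if not (0.0 < p < 1.0 and 0.0 < target <= 1.0):
        raise ValueError("expected 0 < p < 1 and 0 < target <= 1")
    n = 0
    # Add one source at a time until the combined availability drops
    # below the target (e.g. the 80% SLA line in Figure 1).
    while all_available(p, n + 1) >= target:
        n += 1
    return n


if __name__ == "__main__":
    for p in (0.99, 0.98, 0.95):
        print(
            f"availability {p:.0%}: "
            f"30 sources -> {all_available(p, 30):.1%}, "
            f"80% SLA holds for up to {max_sources(p, 0.80)} sources"
        )
```

Under the independence assumption, an 80% SLA holds for at most 22 sources at 99% availability, 11 sources at 98% and 4 sources at 95%, which is well below the 30 to 50 sources of even a small data mart.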

In a data warehouse (DW), this problem is addressed by regularly and asynchronously copying (extracting) data from the source into the DW. This is a controlled, managed and monitored process that can be made transparent to the admin of a source system, who can then cater for downtimes or any other non-availability of their system. As such, a big problem for one admin - i.e. the availability of all sources - is broken down into smaller chunks that can be managed in a simpler, decentralized way. Once the data is in the DW, it is available independently of planned or unplanned downtimes and network failures of the source systems.
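
The difference between the two approaches can be illustrated with a toy model. The following is only a sketch under simplifying assumptions: `Source`, `Warehouse`, `federated_query` and `SourceUnavailable` are hypothetical names invented for this example and do not correspond to any SAP product API. A federated query reads every source at query time, whereas the warehouse answers from the snapshot of the last successful extraction.

```python
from dataclasses import dataclass, field


class SourceUnavailable(Exception):
    """Raised when a (hypothetical) source system cannot be reached."""


@dataclass
class Source:
    name: str
    rows: list
    up: bool = True

    def read(self) -> list:
        if not self.up:
            raise SourceUnavailable(self.name)
        return list(self.rows)


def federated_query(sources: list) -> list:
    # Reads every source at query time: one outage fails the whole query.
    return [row for source in sources for row in source.read()]


@dataclass
class Warehouse:
    snapshots: dict = field(default_factory=dict)

    def extract(self, source: Source) -> bool:
        """Copy a source into the warehouse; keep the old snapshot on failure."""
        try:
            self.snapshots[source.name] = source.read()
            return True
        except SourceUnavailable:
            return False  # the extraction can be retried later

    def query(self) -> list:
        # Served from local snapshots, independent of source availability.
        return [row for rows in self.snapshots.values() for row in rows]


if __name__ == "__main__":
    erp = Source("erp", ["order-1", "order-2"])
    crm = Source("crm", ["lead-1"])
    dw = Warehouse()
    for s in (erp, crm):
        dw.extract(s)

    crm.up = False  # simulate a downtime of one source
    print(dw.query())  # still answers, from the last extracted snapshot
    try:
        federated_query([erp, crm])
    except SourceUnavailable as exc:
        print(f"federated query failed: source {exc} is down")
```

The price of this decoupling is visible in the sketch as well: the warehouse returns data as of the last extraction rather than real-time data.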

Please do not read this blog as an argument against federation. I simply intend to create awareness of a problem that is solved by a data warehouse and that must not be underestimated or neglected.

This blog has been cross-published here. You can follow me on Twitter under @tfxz.
