- Handling Deleted Records from Flat File as Source ...

Applies to: SAP BI 7.X

Summary:

Flat file loads into SAP BW, whether into a DSO or an Info Provider, usually assume that each file contains only delta changes. When that is not the case, we drop and reload the DSO/Info Providers every time a file is received. Both approaches work well when the file is small enough for the loads to finish quickly. With large data volumes and additional upward layers fed from the same data, drop and reload becomes time-consuming and process-intensive. This document discusses a design option for handling records that were nullified or deleted in the source, so that they are deleted in the upward Info Providers as well.

Author(s): Anil Kumar Puranam, Peesu Sudhakar and Sougata Singha.

Company: Deloitte

Created on: 27th January, 2014

Authors Bio:

Sudhakar Reddy Peesu is an SAP Technology professional with more than 15 years of experience leading SAP BW/ABAP implementations, developing custom applications, and developing IT strategy. He is a PMP-certified manager and has led BW/ABAP teams in hardware and landscape planning, design, installation and support, as well as development teams through all phases of the SDLC using the onsite/offshore delivery model. He has strong communication, project management, and organization skills. His industry project experience includes the Banking, Consumer/Products, and Public Sector industries.

Anil Kumar Puranam is working as a Senior BW/BI Developer in Deloitte Consulting. He has more than 9 years of SAP BW/BI/BO experience. He has worked on various support and implementation projects at consulting companies such as Deloitte, IBM India, and TCS.

Sougata Singha is working as a business technology analyst in Deloitte Consulting. He has 2 years of experience in SAP BW/BI/BO and has been working on different support and implementation projects.

Business Scenario:

In many BW implementations, ECC is the source for transactional data loads. During the transition to SAP (moving the operational system from legacy applications to ECC), however, data extraction from legacy systems through flat files becomes important: both historical and day-to-day transactions have to be brought into BW this way. Unless the source application can generate deltas, the legacy data usually arrives in BW as full loads. When the source tools cannot provide deltas the way ECC does, we build a DSO layer as the staging layer. The staging DSO generates before images and after images, which supply the delta to the next layer. A single DSO alone, however, cannot identify records that were deleted in the source and are therefore missing from the files we receive.

Traditional Designs

Below are two common designs that we implement:

1. Full load from flat file to Info Provider and upwards to other Info Providers for various reporting

a. Easy to implement

b. Assumes that the files received contain delta records from the source

c. Drop and reload if the legacy system cannot send delta files

d. Drop and reload is time-consuming, especially when the file is large

e. Additional load processes and time if the base Info Provider feeds upward reporting-layer cubes

f. If the data feeds external systems, those systems also need an additional mechanism/design to handle the data feeds

2. Flat file load first to a staging DSO and then to the upward Info Provider layers

a. Relatively easy to implement

b. This design processes either delta or full file loads

c. Only delta records are pushed to the upward Info Provider layers

d. This data flow helps to generate the delta records from the staging layer DSO to the next layer(s).

e. Assumes that the delta/full file sent to BW contains zeroed-out records (for deleted records, zero is posted rather than the record being removed).

The side-by-side data flow picture below illustrates the two design approaches:

Problem with Traditional Design:

The second design has one major problem when records are deleted in the source legacy system and are therefore absent from the next file extract. Because we do not drop and reload the staging layer DSO, it cannot detect the deleted records, and they remain in the staging DSO and in the upward layers. These records are then pulled into the reports, which display inaccurate data and can lead to wrong decisions.

Sample data

To illustrate the business scenario, the example below uses sample data to show the behavior of the delta mechanism.

Note: The sample data is provided only to explain the requirement; it does not contain any actual client data.

Technical Challenge:

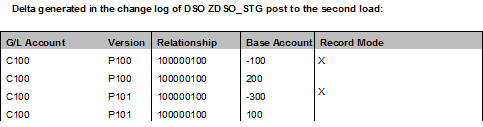

In the second load, one of the records is missing. This record will nevertheless still be available in the DSO ZDSO_STG and in the Info Providers above it. The table below shows the delta records generated in the change log of ZDSO_STG.

To delete such records from the DSO/Info Providers and show correct values in reports, the traditional option is a complete drop and reload of the flat file data. This is time-consuming, depending on the number of records to be dropped and reloaded and the number of data targets to be processed. In some cases drop and reload is not possible for all Info Providers, depending on the applications built on top of them. The step-by-step approach below addresses these technical challenges.

Approach to overcome the Challenge:

To overcome the above challenge, the following steps need to be implemented:

Step 1: Parallel DSO with the same transformation but with one additional Info Object

Introduce another DSO, ZDSO_DEL, between the PSA and the current DSO (i.e. between PSA and ZDSO_STG). ZDSO_DEL is exactly the same as ZDSO_STG, but with one extra Info Object, ZTIMESTMP, as a data field. Below is the definition of this Info Object:

Step 2: Transformation between the newly introduced DSO and regular DSO

Create a transformation from the new DSO ZDSO_DEL to ZDSO_STG. The mapping between source and target is 1:1, except for ZTIMESTMP of the source, which is not mapped to any field of the target DSO.

Step 3: Create a custom control table to store the timestamp values

Create a Z-table (or use an existing custom table) to store the ZTIMESTMP value that is written to every record of one data load into ZDSO_DEL. As a result, all data packages of that load carry the same value in ZDSO_DEL. In this example, the table is ZTAB_CTRL.

Step 4: ABAP program to insert/modify the load date and time into the control table

Create a small program that updates the entry in table ZTAB_CTRL with NAME, say, “ZTIMESTAMP_VAL”; the value stored for this entry is the concatenation of the system date and time. See Appendix A for the sample code. Here is the sample record after updating the table:

Step 5: End Routine program in the transformation of newly introduced DSO

Create an end routine in the transformation from the PSA to ZDSO_DEL (the transformation created in Step 1) that updates the ZTIMESTMP Info Object for all records of ZDSO_DEL. In the end routine, pass the value of the above table entry to ZTIMESTMP.

Here is the code that we need to use in the end routine.
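The end routine logic described in this step can be sketched as follows. This is a minimal sketch, not the original listing: it assumes the standard BW 7.x end routine frame, where RESULT_PACKAGE and the field symbol <RESULT_FIELDS> are generated by the system, and it assumes that ZTAB_CTRL has the fields NAME and VALUE used in Appendix A and that the result structure exposes the Info Object as /BIC/ZTIMESTMP.

```abap
* End routine body for the transformation PSA -> ZDSO_DEL (sketch).
* Assumption: RESULT_PACKAGE and <RESULT_FIELDS> come from the
* generated routine frame; ZTAB_CTRL has the fields NAME and VALUE.
DATA lv_value TYPE ztab_ctrl-value.

* Read the load stamp once per data package, not once per record.
SELECT SINGLE value FROM ztab_ctrl INTO lv_value
  WHERE name = 'ZTIMESTAMP_VAL'.

IF sy-subrc = 0.
* Stamp every record of the package with the same value.
  LOOP AT RESULT_PACKAGE ASSIGNING <result_fields>.
    <result_fields>-/bic/ztimestmp = lv_value.
  ENDLOOP.
ENDIF.
```

Reading the control entry before the loop keeps the routine to one database access per data package, and every package of the same load receives the same stamp because the process chain updates the table only once, before the load starts.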

Step 6: Transformation between the two DSOs and ABAP logic to set Record Mode to ‘R’

Create a start routine in the transformation from ZDSO_DEL to ZDSO_STG (the transformation created in Step 2). In the start routine, compare the value of ZTIMESTMP with the entry in table ZTAB_CTRL. If the value in the record differs, set the record mode to “R”.

In addition, filter on ZTIMESTMP in the DTP, using a few lines of ABAP code, so that only the records whose value is not equal to the value in table ZTAB_CTRL are fetched. The record mode still has to be set to “R” for all of these records. See Appendix B for the code to be used in the DTP.

Here is the code that we need to use in the start routine.
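The start routine logic described in this step can be sketched as follows. This is a minimal sketch, not the original listing: it assumes the standard BW 7.x start routine frame, where SOURCE_PACKAGE and the field symbol <SOURCE_FIELDS> are generated by the system, and it assumes that the source structure of ZDSO_DEL exposes the fields /BIC/ZTIMESTMP and RECORDMODE and that RECORDMODE is mapped to 0RECORDMODE of ZDSO_STG.

```abap
* Start routine body for the transformation ZDSO_DEL -> ZDSO_STG
* (sketch). Assumption: SOURCE_PACKAGE and <SOURCE_FIELDS> come from
* the generated routine frame; the field names are as described above.
DATA lv_value TYPE ztab_ctrl-value.

SELECT SINGLE value FROM ztab_ctrl INTO lv_value
  WHERE name = 'ZTIMESTAMP_VAL'.

IF sy-subrc = 0.
  LOOP AT SOURCE_PACKAGE ASSIGNING <source_fields>.
*   A record that was not stamped by the current load was missing
*   from the latest file, so it is passed on as a reverse image.
    IF <source_fields>-/bic/ztimestmp <> lv_value.
      <source_fields>-recordmode = 'R'.
    ENDIF.
  ENDLOOP.
ENDIF.
```

With the DTP filter from Appendix B in place, only stale records reach this routine, so the comparison acts as a safeguard in case the DTP is ever run without the filter.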

Step 7: Process Chain design for automation

Create a process chain as shown below. Its first step is the ABAP program that updates the entry in table ZTAB_CTRL with NAME = “ZTIMESTAMP_VAL” to the concatenation of the system date and system time.

Note: Each time, we need a full load from the PSA to the new DSO ZDSO_DEL and also to ZDSO_STG. We also need a full load from the new DSO (ZDSO_DEL) to the existing DSO (ZDSO_STG), but this load takes only the records whose timestamp is not equal to the entry in table ZTAB_CTRL.

Data in the targets after each load:

The 4th record of ZDSO_STG is unchanged even though it is no longer present in the source file. The first record is overwritten with the new value 200 (previously 100), and the 3rd record is overwritten with the new value 100 (previously 300). The 2nd record is unchanged.

Transaction Data in ZDSO_DEL after the Second load:

Assume that the load happened at around 20131115010101.

Transaction Data in ZDSO_STG after moving the data from ZDSO_DEL:

When we trigger the load from ZDSO_DEL to ZDSO_STG, the 4th record is picked up from ZDSO_DEL and moved to ZDSO_STG with record mode ‘R’.
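The selection and marking behavior can be reproduced outside BW with a small stand-alone report. This is only an illustrative sketch: the report name, the row layout, the document numbers, the earlier load stamp and the amounts of the 2nd and 4th records are hypothetical; only the values 200 and 100 and the stamp 20131115010101 come from the example above.

```abap
REPORT zdemo_mark_deleted.

* Hypothetical row layout standing in for the active table of ZDSO_DEL.
TYPES: BEGIN OF ty_row,
         doc_no     TYPE c LENGTH 10,
         amount     TYPE i,
         timestmp   TYPE c LENGTH 14,
         recordmode TYPE c LENGTH 1,
       END OF ty_row.

DATA: lt_del     TYPE STANDARD TABLE OF ty_row,
      ls_row     TYPE ty_row,
      lv_current TYPE c LENGTH 14 VALUE '20131115010101',
      lv_old     TYPE c LENGTH 14 VALUE '20131114010101'. "hypothetical

FIELD-SYMBOLS <ls_row> TYPE ty_row.

START-OF-SELECTION.
* Records 1 to 3 arrived in the second file and carry the current stamp.
  ls_row-doc_no = '0001'. ls_row-amount = 200. ls_row-timestmp = lv_current.
  APPEND ls_row TO lt_del.
  ls_row-doc_no = '0002'. ls_row-amount = 50.  ls_row-timestmp = lv_current.
  APPEND ls_row TO lt_del.
  ls_row-doc_no = '0003'. ls_row-amount = 100. ls_row-timestmp = lv_current.
  APPEND ls_row TO lt_del.
* Record 4 was missing from the second file and keeps the older stamp.
  ls_row-doc_no = '0004'. ls_row-amount = 400. ls_row-timestmp = lv_old.
  APPEND ls_row TO lt_del.

* Equivalent of the DTP filter: exclude rows stamped by the current load.
  DELETE lt_del WHERE timestmp = lv_current.

* Equivalent of the start routine: the remaining rows become reversals.
  LOOP AT lt_del ASSIGNING <ls_row>.
    <ls_row>-recordmode = 'R'.
    WRITE: / <ls_row>-doc_no, <ls_row>-amount, <ls_row>-recordmode.
  ENDLOOP.
```

Only the row with the older stamp survives the filter, which is why the load from ZDSO_DEL to ZDSO_STG stays small as long as few records are deleted per load.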

Conclusion:

The deleted record is removed from the target, and the deletion is passed on as a delta to the upper layers as well.

Advantage/Disadvantage of new design:

Advantages:

1) Deletions are handled properly in the staging layer DSO, and a delta is generated for the deleted records to the upper layers.

2) Development effort is low. The new DSO has the same structure as the old DSO, so the structure can be copied when creating the new DSO.

3) The start routine, end routine and DTP filter routine are small and easy to write.

4) No further control table maintenance is required.

Disadvantages:

1) The same set of data is kept in two DSOs, so the additional storage is a concern.

2) If more than 50% of the data is expected to be deleted in each load, the load from ZDSO_DEL to ZDSO_STG will take a long time; in that case drop and reload is the better option.

Appendix A

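* This program updates the VALUE field of the ZTAB_CTRL entry

* given in ZNAME with the current date and time as load stamp.

PARAMETERS zname TYPE ztab_ctrl-name.

* No explicit length here: it is taken from the data element.

DATA lv_timestamp TYPE /bic/oiztimestmp.

DATA wa_tab TYPE ztab_ctrl.

* sy-datlo and sy-timlo are the local date and time of the user.

CONCATENATE sy-datlo sy-timlo INTO lv_timestamp.

wa_tab-name = zname.

wa_tab-value = lv_timestamp.

* MODIFY inserts the entry or updates it if it already exists.

MODIFY ztab_ctrl FROM wa_tab.

IF sy-subrc = 0.

COMMIT WORK AND WAIT.

ENDIF.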

Appendix B

* DTP filter on ZTIMESTMP between ZDSO_DEL and ZDSO_STG.

DATA: s_ztab_ctrl TYPE ztab_ctrl.

DATA temp TYPE /bic/oiztimestmp.

* Read the stamp of the current load from the control table.

SELECT SINGLE * INTO s_ztab_ctrl FROM ztab_ctrl

WHERE name = 'ZTIMESTAMP_VAL'.

IF sy-subrc = 0.

temp = s_ztab_ctrl-value.

* Sign 'E' with option 'EQ' excludes records with the current stamp.

l_t_range-iobjnm = 'ZTIMESTMP'.

l_t_range-fieldname = '/BIC/ZTIMESTMP'.

l_t_range-sign = 'E'.

l_t_range-option = 'EQ'.

l_t_range-low = temp.

APPEND l_t_range.

ENDIF.
