- Continuous Integration - Automated ABAP Unit Tests...

adam_krawczyk1


05-23-2013

2:49 PM

In this document I would like to share my lessons learned and show that it is possible to run automated Unit Tests in ABAP and integrate them with a Hudson (or Jenkins) Continuous Integration server. With some development effort we can have all Unit Tests running every day, see test results with code coverage in Hudson, and look at nice graphs and a historical data summary.

Document overview:

- 1. Introduction

- 2. Continuous Integration

- 3. Framework presentation

- 4. Implementation overview

- 5. Summary

- 6. Update - source code

1. Introduction

When I started with SAP development almost 2 years ago, I looked for ways to automate Unit Tests. Unfortunately, Continuous Integration is not a strong side of ABAP development. You can in fact schedule and run tests regularly, but the way the results are presented was not enough for me. As I already had experience with Hudson projects built for Java software (easy integration, by the way), I tried to find the same possibilities in the ABAP world. Unfortunately, I found no plugins for SAP integration with Hudson and not even one integration example in the forums. So I took the initiative into my own hands.

2. Continuous Integration

Before I go into details, let me explain how I understand the Continuous Integration process in the ABAP context. It must be fully automated and consist of the following steps:

1. Compile and build code - in ABAP it is actually done for free with activation.

2. Run tests, different levels possible:

- Unit Tests - only these are currently supported in my framework.

- Functional, Performance, Availability tests and others.

3. Collect and present statistics on the Continuous Integration server - test results and code coverage are available in ABAP.

4. Notify users if at least one test has failed.

5. Optionally deploy code - send to quality / production system - not included in my framework.

In the framework I focused only on ABAP Unit Test automation, run in the development system rather than the quality system, because:

- It reflects the current status of the development system (we tend to keep requests open for a long time before they are sent to quality).

- Running Unit Tests is disabled for quality systems in our landscapes.

The Continuous Integration process should be triggered after each committed change, or at least once per day. For me once per day is enough (there are many developers and changes during the day), and the framework is configured that way.

In fact, the framework fulfills the criteria from points 1-4, just with a scope limited to Unit Tests in the development system. Even so, that is a lot.

3. Framework presentation

So let's look at what we have. The framework runs all Unit Tests in the system from custom packages (Z* or Y* packages). As I do not want to present statistics for all development in my company, I just show example results from the ZCAGS_CI package, which is actually the package containing the framework development itself.

Hudson projects are defined for two development landscapes, D83 and D87:

- From this overview we quickly see the status: S - blue means the last build succeeded; W - project condition, reflecting stability, test results and code coverage quality (sun or storm icon).

- Projects are integrated so that they can be started from the Hudson server - just by clicking the clock with green arrow button on the right.

- The Unit Tests Full Run Hudson project starts a batch job in the SAP system which runs the tests. It then periodically tries to download the result files, and once they are found, the code coverage and Unit Tests files are imported from SAP to the Hudson server.

- After that, the subsequent jobs Unit Tests - Package and Unit Tests - User are run. These jobs just read the downloaded files and present them as graphs and statistics.

- As you can see, all Unit Tests run quickly: just over 6 minutes for D83 and 3 minutes for D87. There are hundreds of Unit Tests available.

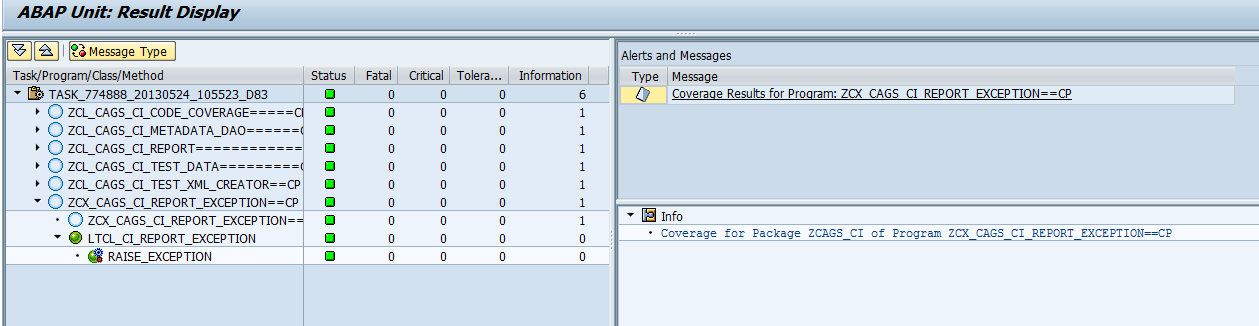
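The polling step described in the list above (start the batch job, then periodically try to download the result files) can be sketched roughly as follows. This is only an illustration in Python, not the code of my framework: the function name `wait_for_files`, the timeout and interval values, and the example file names are all assumptions.

```python
import os
import time


def wait_for_files(paths, timeout_s=600.0, interval_s=10.0, sleep=time.sleep):
    """Poll until every path in `paths` exists as a file.

    Returns True once all files are present, or False if `timeout_s`
    seconds pass first. `sleep` is injectable so the loop can be tested
    without real waiting.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        if all(os.path.isfile(p) for p in paths):
            return True
        if time.monotonic() >= deadline:
            return False
        sleep(interval_s)


if __name__ == "__main__":
    # Hypothetical result file names; the real names are set in the report.
    expected = ["unit_tests.xml", "code_coverage.xml"]
    ready = wait_for_files(expected, timeout_s=5, interval_s=1)
    print("results ready" if ready else "timed out waiting for results")
```

A timeout matters here: if the batch job in SAP fails and never writes its files, the Hudson build should end with an error instead of hanging.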

Example ZCAGS_CI package preview with Unit Tests results (real duration on test level is not implemented):

Anyone who already knows Hudson is familiar with the package hierarchy and the links to nested levels. We can click on an example row to see its details. Let's preview the ZCL_CAGS_CI_CODE_COVERAGE test results:

We see all 8 tests run from the local class LCL_CAGS_CI_CODE_COVERAGE, and all of them passed. If there were an error, we could see it by clicking the test method link.

In addition, Hudson keeps a history of all builds and test statistics. The graph below shows the history of Unit Tests for all packages (I have hidden the number of tests, which is normally shown on the Y axis):

As you can see from the graph, there were some failing tests that nobody cared about (red color). Having daily automated Unit Tests in place exposed that clearly, so the tests were finally fixed. All tests that are run should have success status - a daily green build is the goal. In addition, we see the number of tests increasing thanks to Continuous Integration awareness and team engagement.

Now let's look at the code coverage statistics for the ZCAGS_CI package. We see the historical progress since the beginning; line-level code coverage eventually increased to 71.2%:

Code coverage shows which classes in the package were touched/entered during execution of Unit Tests. It is one of the helpful statistics for measuring test quality.

We see a graph with historical data. 4 levels of code coverage are available:

- Class - how many classes are covered

- Method - how many methods are covered

- Block - how many blocks/statements are covered. A block may be a WHILE loop or an IF statement.

- Line - most detailed value, how many lines of code are run by Unit Tests.

Let me describe coverage using two classes as examples:

1. ZCL_CAGS_CI_HTTP_REQ_HANDLER

- We see that there is not even one Unit Test case that uses this class.

- That is because the request handler reads the HTTP request and starts a batch job or operates on the file system, which is why I skipped Unit Tests there.

2. ZCL_CAGS_CI_REPORT

- Main class handling report features.

- 20 methods of this class were executed during the Unit Tests run; the class has 31 methods in total.

- 113 lines of code out of 180 were executed during the Unit Tests run.

- We see that class coverage is 1/1. It is simple on class level, but on package level (header line) the value is 8/9.
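To make the numbers above concrete, here is a small Python sketch that turns the covered/total counts into percentages. The helper name `coverage_percent` and the rounding to one decimal place are my assumptions for illustration; Hudson calculates and displays these values itself.

```python
def coverage_percent(covered, total):
    """Return coverage as a percentage rounded to one decimal place.

    An element with nothing to cover (total == 0) is reported as 0.0
    here; that convention is an assumption of this sketch.
    """
    if total == 0:
        return 0.0
    return round(100.0 * covered / total, 1)


# Counts quoted above for ZCL_CAGS_CI_REPORT and the ZCAGS_CI package.
print(coverage_percent(20, 31))    # method level  -> 64.5
print(coverage_percent(113, 180))  # line level    -> 62.8
print(coverage_percent(8, 9))      # package class level -> 88.9
```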

Code coverage and unit test views are available for all custom packages in the SAP system that have executable code (top level view).

All these statistics can be extracted with SAP standard tools. Hudson integration just adds another, extended GUI view on top of the results and makes test run management easier. I can run tests at any time from Hudson. By default tests are scheduled on every working day. This is configurable in the Hudson project.

It is worth mentioning that we can configure the Hudson project so that it sends automatic email notifications when at least one test fails. We can have a single person who monitors the daily status, or include the whole team in the notifications - it is up to us.
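The rule "notify when at least one test fails" is applied by Hudson itself, so nothing has to be coded for it. Just to illustrate the rule, the following Python sketch counts failed test cases in a JUnit-style result file. The function name `count_failed_tests` and the sample XML are hypothetical and not taken from the framework.

```python
import xml.etree.ElementTree as ET


def count_failed_tests(junit_xml):
    """Count <testcase> elements that contain a <failure> or <error> child."""
    root = ET.fromstring(junit_xml)
    return sum(
        1
        for case in root.iter("testcase")
        if case.find("failure") is not None or case.find("error") is not None
    )


# Hypothetical result file content with one passing and one failing test.
SAMPLE = """
<testsuite name="ZCAGS_CI" tests="2" failures="1">
  <testcase classname="ZCAGS_CI.LCL_EXAMPLE" name="passing_test"/>
  <testcase classname="ZCAGS_CI.LCL_EXAMPLE" name="failing_test">
    <failure message="assertion failed">assertion failed</failure>
  </testcase>
</testsuite>
"""

if count_failed_tests(SAMPLE) > 0:
    print("at least one test failed - notify the team")
```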

Hudson offers even more options and plugins, and for me it is a good candidate for a Continuous Integration server for daily automated tests.

4. Implementation overview

The main report looks like this:

We can specify a defined list of packages or load all Y* and Z* packages for test execution. The second option is the default when run from Hudson - we want to run all tests for custom packages in the system.
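The default selection (all Y* and Z* packages) is simple name pattern matching. In the report this happens in ABAP, so the Python sketch below only illustrates the idea. The function name `select_custom_packages` and all package names in the example except ZCAGS_CI are made up.

```python
from fnmatch import fnmatchcase


def select_custom_packages(packages, patterns=("Y*", "Z*")):
    """Keep only the package names that match one of the given patterns.

    Names are compared in upper case, because ABAP package names are
    upper case.
    """
    return [
        name
        for name in packages
        if any(fnmatchcase(name.upper(), pattern) for pattern in patterns)
    ]


# ZCAGS_CI comes from this article; the other names are hypothetical.
all_packages = ["ZCAGS_CI", "STANDARD_PKG", "YEXAMPLE", "$TMP"]
print(select_custom_packages(all_packages))  # ['ZCAGS_CI', 'YEXAMPLE']
```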

Code coverage is measured only for classes; programs are excluded. The fact is that reports often do not need Unit Tests or cannot even be tested. We also have many historical reports from the time when there were no Unit Tests yet. As a result, code coverage statistics that include programs are very low. That is why we measure code quality only for classes - it is easier to focus and monitor improvements.

Two levels of results are possible - package level and user level. Package level is the default; we see how well each package is covered with tests. User level is just for one's own overview and history monitoring. We cannot get exact per-user coverage, because a class and all its test methods are "owned" by its creator; further updates from another user just add to the statistics of the original class creator.

After the report is run, all results are saved to files with the given names and path. The files follow the JUnit format, which is a simple XML file with a predefined schema and hierarchy. This is needed for integration with Hudson - we need to transfer the files and read them on Hudson to show the final results.
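For readers who have not seen a JUnit result file, here is a minimal Python sketch that builds one. It is not the ABAP code of the report. The function name `build_junit_xml`, the test method names and the dotted `classname` convention (package name, dot, test class) are assumptions for illustration; as far as I know, the JUnit report views derive the package hierarchy from that dotted prefix.

```python
import xml.etree.ElementTree as ET


def build_junit_xml(suite_name, results):
    """Build a JUnit-style <testsuite> document as a string.

    `results` is a list of (classname, test_name, failure_message)
    tuples; failure_message is None for a passed test.
    """
    failures = sum(1 for _, _, message in results if message is not None)
    suite = ET.Element(
        "testsuite",
        name=suite_name,
        tests=str(len(results)),
        failures=str(failures),
    )
    for classname, test_name, message in results:
        case = ET.SubElement(suite, "testcase", classname=classname, name=test_name)
        if message is not None:
            failure = ET.SubElement(case, "failure", message=message)
            failure.text = message
    return ET.tostring(suite, encoding="unicode")


# Hypothetical test method names for the local test class shown earlier.
print(build_junit_xml("ZCAGS_CI", [
    ("ZCAGS_CI.LCL_CAGS_CI_CODE_COVERAGE", "first_example_test", None),
    ("ZCAGS_CI.LCL_CAGS_CI_CODE_COVERAGE", "second_example_test", "expected 1, got 2"),
]))
```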

How are the results calculated? That is the core of the report, and here I need to say thank you to SAP standard and its developers.

SAP development is going in a good direction: the Unit Tests framework is being extended and new automated tools are available. One of them is the report rs_aucv_runner, which can be run from SE80 -> right click menu on class -> Execute -> Unit Tests With -> Job Scheduling.

It is a very good report that allows the user to run Unit Tests for any packages, including subpackages. My custom report is built on top of it - it submits the standard program, reads the required data through implicit enhancements at runtime, and saves the results to files. In fact we can get Unit Tests and code coverage results from the standard report itself, but to be honest the results are not very informative - no information about test count, code coverage shown separately for each class only after selecting it, and finally no history, just current results:

That is why I chose Hudson. It presents the same data in a different and better way.

It is also possible to schedule daily automated Unit Tests from SCI Code Inspector. However, there is mainly one useful piece of information there - failed tests. That is basically enough to be notified in case of test failures. Still, code quality statistics and a historical progress overview fit well into Agile development, where reviews and retrospectives are part of life.

5. Summary

I hope this document will be an inspiration for those who are searching for ways to automate Unit Tests, like I was before. I believe that Unit Tests are important and improve both software quality and development efficiency. To encourage people to use them, it is worth establishing daily automated tests in the first place. Adding another layer of status visualization and progress history helps even more. Good quality software development requires automated tests, which is why it is worth building motivation with supportive tools.

Hudson ABAP integration is one of the options. I started with Unit Tests, but I guess it would not be difficult to add another level of tests and present the results on the same server. Personally I found Hudson more user friendly. It is enough to open an HTML link instead of an SAP transaction. Results are available all the time, calculated at night. They expose the two most important statistics: Unit Tests failure/success rate and code coverage values, for all packages that we specify. If we have many teams, each can focus on monitoring its own package. Together with the history and statistics kept in Hudson, that is a good help for tracking progress and improving ourselves.

It would be good if SAP could provide built-in integration with the Hudson server as a plugin. If someone is interested in the details of the development, I can share them, or maybe even post a new document or publish the code as a project - I need to investigate the possible options for code sharing and publishing rights if needed.

Good luck with automated tests in ABAP!

6. Update - source code

Many people asked me to publish the source code. Finally, after a long time, I found some capacity to do it - here it is. I put it on an external server because a zip file was not allowed on this forum:

ABAP Hudson integration.zip - Google Drive

Go to Readme.txt and try to implement the framework in your system. You can contact me in case of any doubts. Good luck!

I retain the rights to the code as mine, but you can use it for your own or your company's purposes, as long as you mention that the source comes from me.
