- SAP Community

- Products and Technology

- Technology

- Technology Blogs by Members

- ASE 15.7: THREADED VS. PROCESS KERNEL MODE.

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

So, the threaded or the process? Which one would you select on your next migration?

There is a clear recommendation in Sybase documentation to use the threaded kernel mode. This recommendation, though, is precisely the opposite of what Sybase ASE DBAs would have naturally selected. I have spoken to several customers that consider to move to 15.7. Most feel more comfortable moving to 15.7 running in process kernel mode. Little wonder: we are used to ASE running this way. For those who struggled with new optimizer upgrading from 12.x to 15.x to face yet another revolution in ASE code looks formidable.

And yet, setting worries aside, perhaps there are good reasons we are pushed into the new kernel? I am not talking about the improved way the threaded kernel mode treats IO requests (which is publicized by the official documentation) and more steady query performance. I am talking about things less straightforward. Is not the process mode a sort of a "compatibility mode" for the 15.7 new kernel version - similar to the "compatibility mode" available for the 15.x new optimizer version. The official "compatibility mode" was never really recommended for use. It was an option to choose only if there is absolutely no way to let the new optimizer do its work (incidentally, it is rather curious why this mode should have been though of at all: if the new optimizer makes more intelligent decisions, why preserving something less optimal in the same code). What about the process mode kernel? Is this option really an alternative to the "default" threaded kernel mode (and if it is, say for certain HW platforms, why is it not clearly stated as such)?

I have set up a test to check. In fact, this is not a vane intellectual foible. I am facing a real migration project in the very close future which will involve moving into 15.7 and which will also involve making a tested decision as to which kernel mode to choose. If I heed to recommendations - I should choose the threaded mode. If I heed to the DBA vox populi - I would choose the process mode (threaded mode code is quite new, less than 5 years of real customer experience - how many real issues were found and fixed, if at all?).

I have the following settings for the test. ASE 15.7 ESD#2 (I'll move on to #3 & #4 later on), running on Solaris x64 VM environment (not very optimal, but since I compare rather than analyze, it is no as bad). Really small ASEs. ~2GB RAM, no separate cache for tempdb. Relatively small procedure cache and statement cache. 3 engines or threads out of 4 VM cores available (2 physical chips). Something that may be setup and tested by anyone. I am generating load using very simple code JAVA run from the same host. The client code selects a couple of rows from a syscomments - each time dynamically generating unique select statement. I will deliberately submit these requests as prepared statement. "Deliberately" because JAVA community loves prepared statements and will continue to use them indiscriminately whether this is a waste of resources or not. "Deliberately," also, because I know this code has a strong potential to destabilize ASE 15.x. What I want to check is whether 15.7 version is more stable for this type of "bad" client code than it's previous 15.x variations. I want also to check which kernel mode performs better - if there is a difference at all. At last, I want to check which of ASE/JDBC configuration parameters should be used/avoided in for this type of code?

Below are the configuration settings (and times) which are behind the graphs that I site throughout this paper.

For the threaded mode kernel:

| "14:56" | S157T_2LWP_DYN1_ST0_STR0_PLA0_ST0M |

| "14:58" | S157T_2LWP_DYN1_ST0_STR1_PLA0_ST0M |

| "15:01" | S157T_2LWP_DYN1_ST0_STR1_PLA1_ST0M |

| "15:03" | S157T_2LWP_DYN0_ST0_STR0_PLA0_ST0M |

| "15:06" | S157T_2LWP_DYN0_ST0_STR1_PLA0_ST0M |

| "15:08" | S157T_2LWP_DYN0_ST0_STR1_PLA1_ST0M |

| "15:12" | S157T_2LWP_DYN1_ST1_STR0_PLA0_ST20M |

| "15:14" | S157T_2LWP_DYN1_ST1_STR1_PLA0_ST20M |

| "15:17" | S157T_2LWP_DYN1_ST1_STR1_PLA1_ST20M |

| "15:19" | S157T_2LWP_DYN0_ST1_STR0_PLA0_ST20M |

| "15:21" | S157T_2LWP_DYN0_ST1_STR1_PLA0_ST20M |

| "15:24" | S157T_2LWP_DYN0_ST1_STR1_PLA1_ST20M |

For the process mode:

| "12:41" | S157P_2LWP_DYN1_ST0_STR0_PLA0_ST0M |

| "12:44" | S157P_2LWP_DYN1_ST0_STR1_PLA0_ST0M |

| "12:47" | S157P_2LWP_DYN1_ST0_STR1_PLA1_ST0M |

| "12:51" | S157P_2LWP_DYN0_ST0_STR0_PLA0_ST0M |

| "12:54" | S157P_2LWP_DYN0_ST0_STR1_PLA0_ST0M |

| "12:56" | S157P_2LWP_DYN0_ST0_STR1_PLA1_ST0M |

| "13:02" | S157P_2LWP_DYN1_ST1_STR0_PLA0_ST20M |

| "13:05" | S157P_2LWP_DYN1_ST1_STR1_PLA0_ST20M |

| "13:07" | S157P_2LWP_DYN1_ST1_STR1_PLA1_ST20M |

| "13:10" | S157P_2LWP_DYN0_ST1_STR0_PLA0_ST20M |

| "13:13" | S157P_2LWP_DYN0_ST1_STR1_PLA0_ST20M |

| "13:15" | S157P_2LWP_DYN0_ST1_STR1_PLA1_ST20M |

A bit cryptic but what the table above specifies is that we have 2 LWP generating processes, running with the JDBC setting of DYNAMIC_PREPARE set to true or false {DYN1/DYN0}, with the statement cache either turned on or off on the connection level {ST1/ST0}, with the streamlined sql options set on or off {STR0/STR1}, with the plan sharing option turned on or off {PLA0/PLA1} and with the statement cache turned off (0 MB) or configured at 20MB {ST0M/ST20M}. S157P is Sybase 15.7 process mode. S157T is Sybase 15.7 threaded mode.

Again, these are preliminary tests. I will be performing similar test on a large SPARC server tomorrow, so more data will be available with time and more insights. Insights may change. For now I post only things discovered in this simplistic, home-grown "lab".

So here are the numbers: we are running an endless loop creating unique select statements from JAVA client. We always submit them as prepared statements, but we change settings: first we run with statement cache turned off and the "functionality group" parameters turned off. We turn each of these on and off, than do the same with submitting the statements with DYNAMIC_PREPARE set to false. Then we run the same tests again but with the statement cache configured 20MB.

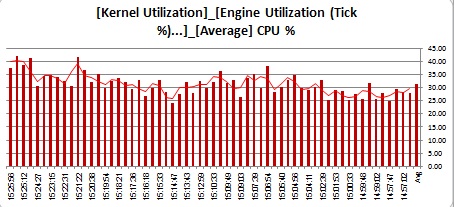

CPU load:

Process mode:

Threaded mode:

I don't really know what is the throughput here, but I know that the same code generates slightly greater load on ASE running the threaded kernel (either because it has a greater throughput or because it has a greater weight). An average for the process mode stands on 28% CPU, while on the threaded mode it gets to 32%. The same is true for peaks: 35% vs. 42%. What was the throughput? We will compare TPS and procedure requests per second (actually, both TPS and PPS are the result of compiling the prepared statements we send to the ASE into LWPs - we don't have any DML statements in the code and we do not execute procedures either - so this is ASE's way to handle our code).

TPS in process mode:

TPS in threaded mode:

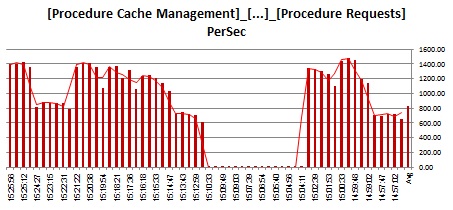

TPS is more or less the same. What about procedure requests per second?

Process:

Threaded:

Actually, the process mode has generated 200 more procedure requests per second. This is the speed at which the code is executed (the lacuna in graph is when both the statement cache and the DYNAMIC_PREPARE setting are turned off).

In general, the behavior is the same but there are certain unexpected differences. From right to left: we start with statement cache turned off (and set statement_cache off in the code). We get ~700 SPS (SP executions per second). We turn the streamlined option on - the throughput goes twice up to ~1400 SPS, plan sharing seems to add a bit more. We turn the DYNAMIC_PREPARE off - no LWPs. Then we do the same with statement cache turned on on ASE (and set statement_cache on in the code). The behavior is similar, except that when we set DYNAMIC_PREPARED to off - we again generate LWPs at high rate (incidentally, CPU fares worst in both cases when the prepared statements generated by the code meet the DYNAMIC_PREPARED = false JDBC setting). And with the statement cache set to zero the CPU load is slightly lower for this type of code.

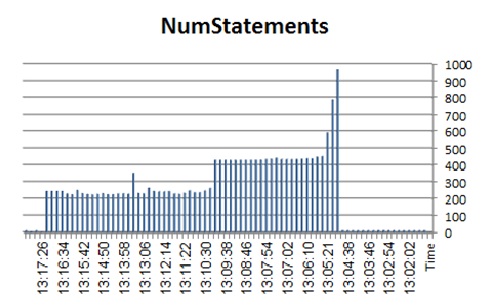

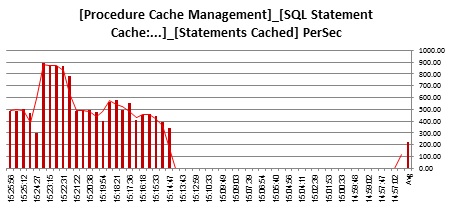

The statement cache data (statements cached per second from sysmon & number of statements in the cache from the monStatementCache).

Process mode:

Threaded mode:

More or less similar, with some anomaly.

So far, it seems there is no great difference. Moreover, the process mode fares a bit better.

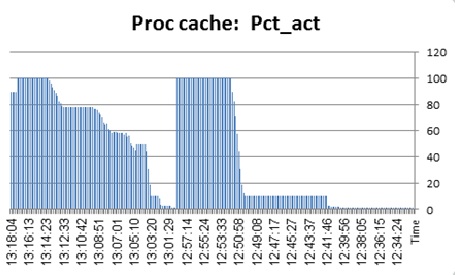

However, this is what we see when we monitor the procedure cache usage with sp_monitorconfig.

Process mode:

Threaded mode:

Oups. There seems to be a problem here. With the ASE running in the process kernel mode, procedure cache gets exhausted as soon as we run prepared statements and turn the DYNAMIC_PREPARE off for own JDBC client. The only way to reclaim space in the procedure cache for the process mode kernel is.... to bounce the server. Urgh. Pretty bad. Procedure cache in the process kernel mode is very sensitive and seems to be wasted on something.

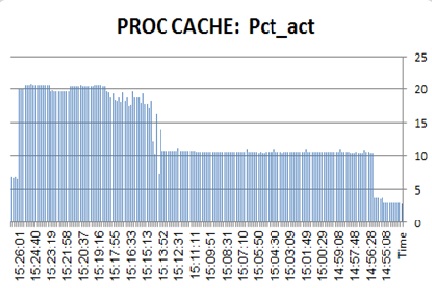

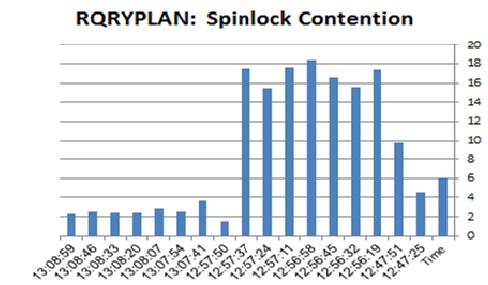

There is also a difference with compiled plan treatment in the two modes:

Process mode (query plans spinlock contention):

Threaded kernel:

This spinlock contention arises only when the statement cache is configured and the statements are submitted with DYNAMIC_PREPARED set to true in the threaded mode. In process mode it climbs all the way up to 20%.

Process mode - statements recompiled due to plans being flushed out of the cache:

Threaded mode:

For some reason, statements are recompiled almost twice more frequently in the process kernel mode.

To throw a stream of optimism here: procedure manager spinlock contention. This was the nasty spinlock that wrought real havoc in previous 15.x versions (and brought at least one upgrade attempt down). This one now is pretty well treated in 15.7.

Process mode:

Threaded mode:

But this too, seems to be treated a bit better in the threaded kernel than in the process kernel mode.

So, what have we learned from this simple test, if at all? The process kernel mode seems to give more steady throughput for this kind of code: lower CPU load, higher proc/sec ratio. At the same time, in process kernel mode, procedure cache functions very badly: sp_monitorconfig either does not report correct data or reports on procedure cache being exhausted if we run prepared statements and set JDBC to DYNAMIC_PREPARE = FALSE. Moreover, there is no way to cleanup the procedure cache mysteriously wasted. Even when all the activity in the server is halted and the procedure cache is cleaned up using the available dbcc commands, it remains 99% full. I did not test the same issues with later ESDs, but I have seen that on SPARC platform too, process mode seems to yield more steady throughput, but often at the price of getting into issues that the threaded kernel does not have (I hit stack traces on adding engines online under heavy stress, the procedure cache reported zero utilization by sp_monitorconfig; in threaded kernel mode resource memory was dynamically adjusted by ASE at startup, in process kernel mode ASE failed to online engines if the kernel resource memory was missing &c).

So process or threaded kernel, which one would you choose?

Tomorrow I will be running similar tests on 15.7 ESD#3 running on SPARC. I will test to see if similar issues arise there.

To be continued....

ATM.

- SAP Managed Tags:

- SAP Adaptive Server Enterprise

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.

-

"automatische backups"

1 -

"regelmäßige sicherung"

1 -

"TypeScript" "Development" "FeedBack"

1 -

505 Technology Updates 53

1 -

ABAP

15 -

ABAP API

1 -

ABAP CDS Views

2 -

ABAP CDS Views - BW Extraction

1 -

ABAP CDS Views - CDC (Change Data Capture)

1 -

ABAP class

2 -

ABAP Cloud

3 -

ABAP Development

5 -

ABAP in Eclipse

1 -

ABAP Platform Trial

1 -

ABAP Programming

2 -

abap technical

1 -

abapGit

1 -

absl

2 -

access data from SAP Datasphere directly from Snowflake

1 -

Access data from SAP datasphere to Qliksense

1 -

Accrual

1 -

action

1 -

adapter modules

1 -

Addon

1 -

Adobe Document Services

1 -

ADS

1 -

ADS Config

1 -

ADS with ABAP

1 -

ADS with Java

1 -

ADT

2 -

Advance Shipping and Receiving

1 -

Advanced Event Mesh

3 -

Advanced formula

1 -

AEM

1 -

AI

8 -

AI Launchpad

1 -

AI Projects

1 -

AIML

10 -

Alert in Sap analytical cloud

1 -

Amazon S3

1 -

Analytic Models

1 -

Analytical Dataset

1 -

Analytical Model

1 -

Analytics

1 -

Analyze Workload Data

1 -

annotations

1 -

API

1 -

API and Integration

4 -

API Call

2 -

API security

1 -

Application Architecture

1 -

Application Development

5 -

Application Development for SAP HANA Cloud

3 -

Applications and Business Processes (AP)

1 -

Artificial Intelligence

1 -

Artificial Intelligence (AI)

5 -

Artificial Intelligence (AI) 1 Business Trends 363 Business Trends 8 Digital Transformation with Cloud ERP (DT) 1 Event Information 462 Event Information 15 Expert Insights 114 Expert Insights 76 Life at SAP 418 Life at SAP 1 Product Updates 4

1 -

Artificial Intelligence (AI) blockchain Data & Analytics

1 -

Artificial Intelligence (AI) blockchain Data & Analytics Intelligent Enterprise

1 -

Artificial Intelligence (AI) blockchain Data & Analytics Intelligent Enterprise Oil Gas IoT Exploration Production

1 -

Artificial Intelligence (AI) blockchain Data & Analytics Intelligent Enterprise sustainability responsibility esg social compliance cybersecurity risk

1 -

AS Java

1 -

ASE

1 -

ASR

2 -

ASUG

1 -

Attachments

1 -

Authentication

1 -

Authorisations

1 -

Automating Processes

1 -

Automation

2 -

aws

2 -

Azure

2 -

Azure AI Studio

1 -

Azure API Center

1 -

Azure API Management

1 -

B2B Integration

1 -

Backorder Processing

1 -

Backpropagation

1 -

Backup

1 -

Backup and Recovery

1 -

Backup schedule

1 -

BADI_MATERIAL_CHECK error message

1 -

Bank

1 -

Bank Communication Management

1 -

BAS

1 -

basis

2 -

Basis Monitoring & Tcodes with Key notes

2 -

Batch Management

1 -

BDC

1 -

Best Practice

1 -

BI

1 -

bitcoin

1 -

Blockchain

3 -

bodl

1 -

BOP in aATP

1 -

BOP Segments

1 -

BOP Strategies

1 -

BOP Variant

1 -

BPC

1 -

BPC LIVE

1 -

BTP

14 -

BTP AI Launchpad

1 -

BTP Destination

2 -

Business AI

1 -

Business and IT Integration

1 -

Business application stu

1 -

Business Application Studio

1 -

Business Architecture

1 -

Business Communication Services

1 -

Business Continuity

2 -

Business Data Fabric

3 -

Business Fabric

1 -

Business Partner

13 -

Business Partner Master Data

11 -

Business Technology Platform

2 -

Business Trends

4 -

BW4HANA

1 -

CA

1 -

calculation view

1 -

CAP

4 -

Capgemini

1 -

CAPM

1 -

Catalyst for Efficiency: Revolutionizing SAP Integration Suite with Artificial Intelligence (AI) and

1 -

CCMS

2 -

CDQ

13 -

CDS

2 -

Cental Finance

1 -

Certificates

1 -

CFL

1 -

Change Management

1 -

chatbot

1 -

chatgpt

3 -

CICD

1 -

CL_SALV_TABLE

2 -

Class Runner

1 -

Classrunner

1 -

Cloud ALM Monitoring

1 -

Cloud ALM Operations

1 -

cloud connector

1 -

Cloud Extensibility

1 -

Cloud Foundry

4 -

Cloud Integration

6 -

Cloud Platform Integration

2 -

cloudalm

1 -

communication

1 -

Compensation Information Management

1 -

Compensation Management

1 -

Compliance

1 -

Compound Employee API

1 -

Configuration

1 -

Connectors

1 -

Consolidation

1 -

Consolidation Extension for SAP Analytics Cloud

3 -

Control Indicators.

1 -

Controller-Service-Repository pattern

1 -

Conversion

1 -

Cosine similarity

1 -

CPI

1 -

cryptocurrency

1 -

CSI

1 -

ctms

1 -

Custom chatbot

3 -

Custom Destination Service

1 -

custom fields

1 -

Custom Headers

1 -

Customer Experience

1 -

Customer Journey

1 -

Customizing

1 -

cyber security

4 -

cybersecurity

1 -

Data

1 -

Data & Analytics

1 -

Data Aging

1 -

Data Analytics

2 -

Data and Analytics (DA)

1 -

Data Archiving

1 -

Data Back-up

1 -

Data Flow

1 -

Data Governance

5 -

Data Integration

2 -

Data Quality

13 -

Data Quality Management

13 -

Data Synchronization

1 -

data transfer

1 -

Data Unleashed

1 -

Data Value

9 -

Database and Data Management

1 -

database tables

1 -

Databricks

1 -

Dataframe

1 -

Datasphere

3 -

datenbanksicherung

1 -

dba cockpit

1 -

dbacockpit

1 -

Debugging

2 -

Defender

1 -

Delimiting Pay Components

1 -

Delta Integrations

1 -

Destination

3 -

Destination Service

1 -

Developer extensibility

1 -

Developing with SAP Integration Suite

1 -

Devops

1 -

digital transformation

1 -

Disaster Recovery

1 -

Documentation

1 -

Dot Product

1 -

DQM

1 -

dump database

1 -

dump transaction

1 -

e-Invoice

1 -

E4H Conversion

1 -

Eclipse ADT ABAP Development Tools

2 -

edoc

1 -

edocument

1 -

ELA

1 -

Embedded Consolidation

1 -

Embedding

1 -

Embeddings

1 -

Employee Central

1 -

Employee Central Payroll

1 -

Employee Central Time Off

1 -

Employee Information

1 -

Employee Rehires

1 -

Enable Now

1 -

Enable now manager

1 -

endpoint

1 -

Enhancement Request

1 -

Enterprise Architecture

1 -

Entra

1 -

ESLint

1 -

ETL Business Analytics with SAP Signavio

1 -

Euclidean distance

1 -

Event Dates

1 -

Event Driven Architecture

1 -

Event Mesh

2 -

Event Reason

1 -

EventBasedIntegration

1 -

EWM

1 -

EWM Outbound configuration

1 -

EWM-TM-Integration

1 -

Existing Event Changes

1 -

Expand

1 -

Expert

2 -

Expert Insights

2 -

Exploits

1 -

Fiori

16 -

Fiori Elements

2 -

Fiori SAPUI5

13 -

first-guidance

1 -

Flask

2 -

FTC

1 -

Full Stack

9 -

Funds Management

1 -

gCTS

1 -

GenAI hub

1 -

General

2 -

Generative AI

1 -

Getting Started

1 -

GitHub

11 -

Google cloud

1 -

Grants Management

1 -

groovy

2 -

GTP

1 -

HANA

6 -

HANA Cloud

2 -

Hana Cloud Database Integration

2 -

HANA DB

2 -

Hana Vector Engine

1 -

HANA XS Advanced

1 -

Historical Events

1 -

home labs

1 -

HowTo

1 -

HR Data Management

1 -

html5

9 -

HTML5 Application

1 -

Identity cards validation

1 -

idm

1 -

Implementation

1 -

Infuse AI

1 -

input parameter

1 -

instant payments

1 -

Integration

3 -

Integration Advisor

1 -

Integration Architecture

1 -

Integration Center

1 -

Integration Suite

1 -

intelligent enterprise

1 -

iot

1 -

Java

1 -

JMS Receiver channel ping issue

1 -

job

1 -

Job Information Changes

1 -

Job-Related Events

1 -

Job_Event_Information

1 -

joule

4 -

Journal Entries

1 -

Just Ask

1 -

Kerberos for ABAP

10 -

Kerberos for JAVA

9 -

KNN

1 -

Launch Wizard

1 -

Learning Content

2 -

Life at SAP

5 -

lightning

1 -

Linear Regression SAP HANA Cloud

1 -

Loading Indicator

1 -

local tax regulations

1 -

LP

1 -

Machine Learning

4 -

Marketing

1 -

Master Data

3 -

Master Data Management

15 -

Maxdb

2 -

MDG

1 -

MDGM

1 -

MDM

1 -

Message box.

1 -

Messages on RF Device

1 -

Microservices Architecture

1 -

Microsoft

1 -

Microsoft Universal Print

1 -

Middleware Solutions

1 -

Migration

5 -

ML Model Development

1 -

MLFlow

1 -

Modeling in SAP HANA Cloud

9 -

Monitoring

3 -

MPL

1 -

MTA

1 -

Multi-factor-authentication

1 -

Multi-Record Scenarios

1 -

Multilayer Perceptron

1 -

Multiple Event Triggers

1 -

Myself Transformation

1 -

Neo

1 -

Neural Networks

1 -

New Event Creation

1 -

New Feature

1 -

Newcomer

1 -

NodeJS

3 -

ODATA

2 -

OData APIs

1 -

odatav2

1 -

ODATAV4

1 -

ODBC

1 -

ODBC Connection

1 -

Onpremise

1 -

open source

2 -

OpenAI API

1 -

Oracle

1 -

PaPM

1 -

PaPM Dynamic Data Copy through Writer function

1 -

PaPM Remote Call

1 -

Partner Built Foundation Model

1 -

PAS-C01

1 -

Pay Component Management

1 -

PGP

1 -

Pickle

1 -

PLANNING ARCHITECTURE

1 -

Popup in Sap analytical cloud

1 -

PostgrSQL

1 -

POSTMAN

1 -

Prettier

1 -

Process Automation

2 -

Product Updates

6 -

PSM

1 -

Public Cloud

1 -

Python

5 -

python library - Document information extraction service

1 -

Qlik

1 -

Qualtrics

1 -

RAP

3 -

RAP BO

2 -

React

1 -

Record Deletion

1 -

Recovery

1 -

recurring payments

1 -

redeply

1 -

Release

1 -

Remote Consumption Model

1 -

Replication Flows

1 -

research

1 -

Resilience

1 -

REST

1 -

REST API

1 -

Retagging Required

1 -

Risk

1 -

rolandkramer

2 -

Rolling Kernel Switch

1 -

route

1 -

rules

1 -

S4 HANA

1 -

S4 HANA Cloud

1 -

S4 HANA On-Premise

1 -

S4HANA

4 -

S4HANA Cloud

1 -

S4HANA_OP_2023

2 -

SAC

11 -

SAC PLANNING

10 -

SAP

4 -

SAP ABAP

1 -

SAP Advanced Event Mesh

1 -

SAP AI Core

10 -

SAP AI Launchpad

9 -

SAP Analytic Cloud

1 -

SAP Analytic Cloud Compass

1 -

Sap Analytical Cloud

1 -

SAP Analytics Cloud

5 -

SAP Analytics Cloud for Consolidation

3 -

SAP Analytics cloud planning

1 -

SAP Analytics Cloud Story

1 -

SAP analytics clouds

1 -

SAP API Management

1 -

SAP Application Logging Service

1 -

SAP BAS

1 -

SAP Basis

6 -

SAP BO FC migration

1 -

SAP BODS

1 -

SAP BODS certification.

1 -

SAP BODS migration

1 -

SAP BPC migration

1 -

SAP BTP

25 -

SAP BTP Build Work Zone

2 -

SAP BTP Cloud Foundry

8 -

SAP BTP Costing

1 -

SAP BTP CTMS

1 -

SAP BTP Generative AI

1 -

SAP BTP Innovation

1 -

SAP BTP Migration Tool

1 -

SAP BTP SDK IOS

1 -

SAP BTPEA

1 -

SAP Build

12 -

SAP Build App

1 -

SAP Build apps

1 -

SAP Build CodeJam

1 -

SAP Build Process Automation

3 -

SAP Build work zone

11 -

SAP Business Objects Platform

1 -

SAP Business Technology

2 -

SAP Business Technology Platform (XP)

1 -

sap bw

1 -

SAP CAP

2 -

SAP CDC

1 -

SAP CDP

1 -

SAP CDS VIEW

1 -

SAP Certification

1 -

SAP Cloud ALM

4 -

SAP Cloud Application Programming Model

1 -

SAP Cloud Integration

1 -

SAP Cloud Integration for Data Services

1 -

SAP cloud platform

9 -

SAP Companion

1 -

SAP CPI

3 -

SAP CPI (Cloud Platform Integration)

2 -

SAP CPI Discover tab

1 -

sap credential store

1 -

SAP Customer Data Cloud

1 -

SAP Customer Data Platform

1 -

SAP Data Intelligence

1 -

SAP Data Migration in Retail Industry

1 -

SAP Data Services

1 -

SAP DATABASE

1 -

SAP Dataspher to Non SAP BI tools

1 -

SAP Datasphere

9 -

SAP DRC

1 -

SAP EWM

1 -

SAP Fiori

3 -

SAP Fiori App Embedding

1 -

Sap Fiori Extension Project Using BAS

1 -

SAP GRC

1 -

SAP HANA

1 -

SAP HANA PAL

1 -

SAP HANA Vector

1 -

SAP HCM (Human Capital Management)

1 -

SAP HR Solutions

1 -

SAP IDM

1 -

SAP Integration Suite

10 -

SAP Integrations

4 -

SAP iRPA

2 -

SAP LAGGING AND SLOW

1 -

SAP Learning Class

1 -

SAP Learning Hub

1 -

SAP Master Data

1 -

SAP Odata

2 -

SAP on Azure

2 -

SAP PAL

1 -

SAP PartnerEdge

1 -

sap partners

1 -

SAP Password Reset

1 -

SAP PO Migration

1 -

SAP Prepackaged Content

1 -

sap print

1 -

SAP Process Automation

2 -

SAP Process Integration

2 -

SAP Process Orchestration

1 -

SAP Router

1 -

SAP S4HANA

2 -

SAP S4HANA Cloud

2 -

SAP S4HANA Cloud for Finance

1 -

SAP S4HANA Cloud private edition

1 -

SAP Sandbox

1 -

SAP STMS

1 -

SAP successfactors

3 -

SAP SuccessFactors HXM Core

1 -

SAP Time

1 -

SAP TM

2 -

SAP Trading Partner Management

1 -

SAP UI5

1 -

SAP Upgrade

1 -

SAP Utilities

1 -

SAP-GUI

9 -

SAP_COM_0276

1 -

SAPBTP

1 -

SAPCPI

1 -

SAPEWM

1 -

sapfirstguidance

3 -

SAPHANAService

1 -

SAPIQ

2 -

sapmentors

1 -

saponaws

2 -

saprouter

1 -

SAPRouter installation

1 -

SAPS4HANA

1 -

SAPUI5

5 -

schedule

1 -

Script Operator

1 -

Secure Login Client Setup

9 -

security

10 -

Selenium Testing

1 -

Self Transformation

1 -

Self-Transformation

1 -

SEN

1 -

SEN Manager

1 -

Sender

1 -

service

2 -

SET_CELL_TYPE

1 -

SET_CELL_TYPE_COLUMN

1 -

SFTP scenario

2 -

Simplex

1 -

Single Sign On

9 -

Singlesource

1 -

SKLearn

1 -

Slow loading

1 -

SOAP

2 -

Software Development

1 -

SOLMAN

1 -

solman 7.2

2 -

Solution Manager

3 -

sp_dumpdb

1 -

sp_dumptrans

1 -

SQL

1 -

sql script

1 -

SSL

9 -

SSO

9 -

Story2

1 -

Substring function

1 -

SuccessFactors

1 -

SuccessFactors Platform

1 -

SuccessFactors Time Tracking

1 -

Sybase

1 -

Synthetic User Monitoring

1 -

system copy method

1 -

System owner

1 -

Table splitting

1 -

Tax Integration

1 -

Technical article

1 -

Technical articles

1 -

Technology Updates

15 -

Technology Updates

1 -

Technology_Updates

1 -

terraform

1 -

Testing

1 -

Threats

2 -

Time Collectors

1 -

Time Off

2 -

Time Sheet

1 -

Time Sheet SAP SuccessFactors Time Tracking

1 -

Tips and tricks

2 -

toggle button

1 -

Tools

1 -

Trainings & Certifications

1 -

Transformation Flow

1 -

Transport in SAP BODS

1 -

Transport Management

1 -

TypeScript

3 -

ui designer

1 -

unbind

1 -

Unified Customer Profile

1 -

UPB

1 -

Use of Parameters for Data Copy in PaPM

1 -

User Unlock

1 -

VA02

1 -

Validations

1 -

Vector Database

2 -

Vector Engine

1 -

Vectorization

1 -

Visual Studio Code

1 -

VSCode

2 -

VSCode extenions

1 -

Vulnerabilities

1 -

Web SDK

1 -

Webhook

1 -

work zone

1 -

workload

1 -

xsa

1 -

XSA Refresh

1

- « Previous

- Next »

- Analyze Expensive ABAP Workload in the Cloud with Work Process Sampling in Technology Blogs by SAP

- Explore Business Continuity Options for SAP workload using AWS Elastic DisasterRecoveryService (DRS) in Technology Blogs by Members

- Sybase Issue: Segmentation Fault during SUSE OS Upgrade in Technology Q&A

- Sybase Issue: Segmentation Fault during SUSE OS Upgrade in Technology Q&A

- Integration of a SAP MaxDB into CCMS of an SAP System : Part 1 in Technology Blogs by Members

| User | Count |

|---|---|

| 53 | |

| 5 | |

| 4 | |

| 4 | |

| 4 | |

| 4 | |

| 4 | |

| 3 | |

| 3 | |

| 3 |