- Data Migration: Installing Data Services and Best...


In a previous blog, First look at SAP's Data Migration Solution, I discussed the options for data migration and the technologies used. I stated that SAP delivers software and content for data migration projects. In this blog I'll discuss the installation of the Data Services software and the loading of the best practice content.

To get started you can use the online help or the bp-migration alias in SMP. For this blog I used the best practices link in online help. There is a lot of great collateral there to take advantage of. When I was ready to install, the combination of the content download (bp-migration) and the online help gave me everything I needed to get started. It takes you to the ERP quick guide, which literally walks through what you need, including a silent installation. I tried the silent installation with no luck, so I went to the appendix and followed the steps for the manual installation. I'm sure the silent installation works if everything is set up correctly, but I wasn't too worried about it since I wanted to see the required steps for the manual installation.

Experience with the manual installation

With the manual installation you install Microsoft SQL Express and Data Services, create specific repositories, load the best practice content into Data Services, and ensure everything is ready to run. I had to do the manual installation between meetings, so it took me a couple of days, but in man-hours it probably only took half a day, and a lot of that was me reading the guide to ensure it was done correctly. There are a lot of scripts that you need to run, so I'd suggest setting the passwords to the same values that the silent installation would use.

The installation of Microsoft SQL Express and Data Services is very straightforward. I did both on my laptop, which has other SAP software, and didn't run into any problems. I couldn't find a trial download of Data Services on SDN. Hopefully your company has already purchased the product. Someone mentioned you can download it and then get a temporary key from http://service.sap.com/licensekey, but I'm not sure. I also think there is a version of BI-Edge that includes it, but not the basic BI-Edge; you need at least the "BI Edge with Integrator Kit". If you have experience with using Data Services as a trial, please post a response and share!

Once the basic installation is done you start importing the content into Data Services. The content is grouped into rar files, which you download and unzip. The import goes into a couple of Data Services repositories. The first repository holds all the lookups that are required during the migration. The first content I unzipped had spreadsheets for IDOC mapping and files that will be used for lookups to validate data. All of this goes into the lookup repository. One example is the following screenshot, which is for HCM data. For each field it has a description, whether a lookup is required, and whether the field itself is required. There's other information as well.
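To make the idea of the mapping sheet concrete, here is a minimal Python sketch of how the "field is required" and "lookup is required" flags could drive a check on one source record. This is not how Data Services implements it; the `FieldSpec` layout, the field names, and the lookup values are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    """One row of a mapping sheet (hypothetical layout, not the SAP format)."""
    name: str
    description: str
    required: bool
    lookup_required: bool


def check_record(record, specs, lookups):
    """Return a list of readable problems found in one source record."""
    problems = []
    for spec in specs:
        value = record.get(spec.name, "")
        if not value:
            # Empty values only matter when the field is flagged as required.
            if spec.required:
                problems.append(f"{spec.name}: required field is empty")
            continue
        # Filled values are checked against the lookup set when one is required.
        if spec.lookup_required and value not in lookups.get(spec.name, set()):
            problems.append(f"{spec.name}: value {value!r} not in lookup table")
    return problems


# Hypothetical field names and values, for illustration only.
specs = [
    FieldSpec("PERSONNEL_AREA", "Personnel area", required=True, lookup_required=True),
    FieldSpec("LAST_NAME", "Last name", required=True, lookup_required=False),
    FieldSpec("NICKNAME", "Nickname", required=False, lookup_required=False),
]
lookups = {"PERSONNEL_AREA": {"1000", "2000"}}

record = {"PERSONNEL_AREA": "9999", "LAST_NAME": ""}
for problem in check_record(record, specs, lookups):
    print(problem)
```

The sketch reports the unknown personnel area and the empty last name, and stays silent about the optional nickname.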

At this point I wanted to know what else is delivered to help understand the IDOCs, so I explored a couple of things. The first is the documentation that comes with the best practices in online help. There you'll find sample project plans and links to the ASAP methodology, which has data migration content. I haven't yet explored the project management content in detail; I'll do that and blog about it soon. You can also download the documentation from SWDC on the Service Marketplace. The documentation is the same in online help and in the download area on SWDC. It includes a Word document for each of the objects. For the HCM example mentioned above, the Word document is 32 pages and includes descriptions of the IDOC segments, number ranges, and process steps.

After the import I had datastores, jobs, and project content in Data Services. Examples are below:

A project and jobs were created for building lookup tables in the staging area for the relevant migration objects. These lookup tables will be used to validate the data when the migration jobs execute.
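As a rough picture of what validating against a staging lookup table means, here is a small Python sketch that finds staged rows whose value is missing from a lookup table. It uses an in-memory SQLite database as a stand-in for the SQL Express staging area, and the table and column names (`lkp_country`, `stg_vendor`) are hypothetical, not the names the best practice content creates.

```python
import sqlite3

# In-memory SQLite stands in for the staging database; the setup described
# in this blog uses Microsoft SQL Express. All table names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE lkp_country (country_code TEXT PRIMARY KEY);
    CREATE TABLE stg_vendor (vendor_id TEXT, country_code TEXT);
    INSERT INTO lkp_country VALUES ('DE'), ('US');
    INSERT INTO stg_vendor VALUES ('V1', 'DE'), ('V2', 'XX'), ('V3', 'US');
""")


def find_invalid_rows(conn):
    """Return staged rows whose country code has no match in the lookup table."""
    return conn.execute("""
        SELECT s.vendor_id, s.country_code
        FROM stg_vendor AS s
        LEFT JOIN lkp_country AS l ON l.country_code = s.country_code
        WHERE l.country_code IS NULL
        ORDER BY s.vendor_id
    """).fetchall()


print(find_invalid_rows(conn))
```

Rows that come back from a query like this are the ones you would want to fix in the source data before sending anything to the target system.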

Data flows created in Data Services for the lookup table creation jobs:

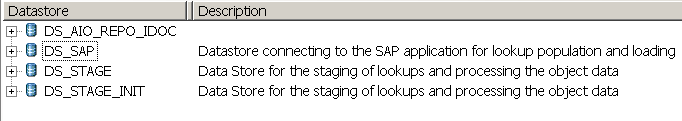

Datastores were also created. They provide local storage for temporary data during the migration and link to the SAP target system. I haven't yet created a datastore for the source system. The next step will be to update the DS_SAP datastore so that it points to the actual SAP system that will be the target of the migration.

The second repository holds all the migration jobs that send the IDOCs to the SAP system. Once the import for this repository was completed I had a project with jobs related to the IDOC structures:

From this screenshot you can see the jobs for some of the content objects, such as accounts payable, bill of material, and cost elements.

That was pretty much it for the manual installation. The last piece was to add the repositories to the Data Services Management Console. The Management Console is a web-based way to schedule and monitor the jobs. OK, now I'm ready for the post-installation work! Look for the next installment, where I'll discuss post-installation and start using the content!
