- SAP Community

- Products and Technology

- Additional Blogs by Members

- The Transaction In The Lake (Part 3)

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

Previously:

Before a detour into transactional profiling, I was looking at the smoking gun at the end of Episode 1 of this story of a runaway program. I started looking at something simple, alias "VL02", and ended up looking at a suspicious SQL trace.

I got a tipoff from my Basis squad contact that the evidence was buried in the old case logs, commonly known as (cue Dragnet theme), "OSS".

Detective's Log, Wednesday Nov. 26, 2008 9:00 AM

The day before Thanksgiving (in the U.S.), a perfect time to browse through old mug shot files. My tip-off led me to a note called Profitability Analysis: Technical Documentation, dated way back to the prior century. Talk about cold case files!

Sure enough, the symptom read, "Performance problems on table CE4xxxx when posting data into CO-PA" - can't get much of a better match than that.

Fingerprint of the SQL statement:

SELECT * FROM CE4xxxx

WHERE AKTBO = 'X'

AND KNDNR = ...

AND ARTNR = ...

AND FKART = fixed value

AND (all other characteristics specified)

Yup, that's our suspect, all right. Then the note talks about the standard secondary index, containing:

MANDT

AKTBO

KNDNR

ARTNR

BUKRS

WERKS

VTWEG

It goes on to say this is only an example, or guess, as it were, and customers should customize an index to suit their design. There is further discussion of what to include in the secondary index, and why.

I don't agree with a lot of that discussion. For example:

"The fields MANDT and AKTBO must be the first fields in the secondary index, otherwise the database system reads the primary index instead."

This is definitely an urban myth, established so long ago no one can explain why. You'll see later on this article why we can throw that myth out the optimizing window.

And:

"Use as few fields as possible in the index. Each additional field takes disk space and worsens performance. The sequence of fields in the index is not relevant for finding segment numbers. However, by choosing the sequence carefully, you may be able to support some other read access."

I'd amend this to something like "use the proper fields in the index". Again, you'll see what we chose shortly.

There are important warnings that tuning the secondary index for the CO/PA write function could have side effects on other reports. Always a good thing to keep in mind.

The note concludes with a review of 1990s (or earlier) technology to improve I/O performance by "striping" data on disks. I'll let that one alone.

DBA squad

I waited to get back with the DBA squad until after the 4-day weekend, and a copyback was completed.

Detective's Log, Wednesday Dec. 3, 2008 1:00 PM

After analyzing the indexable column frequency distribution, our lead DBA built a secondary index in the following order:

KNDNR

ARTNR

BUKRS

WERKS

WWCAM

PRCTR

PAREG

AUART

Each column has more than 1 distinct value; in other words, we've skipped MANDT and AKTBO. While the note warns about size of index objects, both the standard ~1 and custom ~Z1 secondary indexes are about the same size (5.5 GB vs 5.4GB) and our new one is smaller! At the moment, anyway.

The preliminary explain plans showed the suspect statement would use the new index (how could it not?). The next step was testing our hypothesis that this will reduce VL02 runtime.

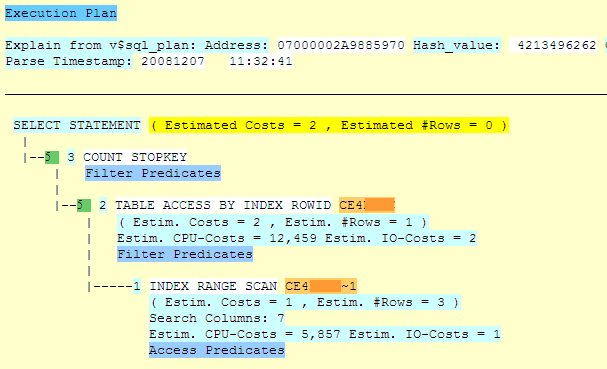

The top part of the diagram below is the explain plan (SQL statement cache analysis) showing the optimizer picks index 1. It's from our test system.

|

|

(chopped off for improved formatting?)

The bottom part of the image above shows our production system, with the same SQL statement hash value, and the optimizer picking the 1 index.

The image above shows the test explain plan, after the new index was created.

It's not obvious from these plans that the I/O savings will be significant, as the only major difference might be estimated I/O costs of 2 vs. 1.

Testing

Detective's Log, Monday Dec. 8, 2008 9:00 AM

After communicating the test requirements, I looked in the SQL cache in our test system on Monday morning, finding these results:

| Disk reads | Reads /Exec | Buffer gets | Bgets /exec | Proc. rows | Rproc /Exe | Bgets /row | Program name |

|---|---|---|---|---|---|---|---|

| 148 | 1.2 | 1,216 | 9.7 | 53 | 0.4 | 22.9 | RK2Axxxx_POST |

Production looked like this:

| Disk reads | Reads /Exec | Buffer gets | Bgets /exec | Proc. rows | Rproc /Exe | Bgets /row | Program name |

|---|---|---|---|---|---|---|---|

| 785,249 | 41.4 | 5,805,240 | 306 | 13,873 | 0.7 | 418.5 | RK2Axxxx_POST |

Given the reduction in disk reads and buffer gets per execution, and buffer gets per row, we think we have a winner.

Business Process Optimization

Time is a precious commodity, and building an index on a 50 million row table is not instantaneous. What our DBAs plan to do is build the production index using a "parallel 8" clause, prior to the actual transport hitting production. That gets the index built in a hurry, and avoids slowing down the total import process (meaning business down time) because Oracle recognizes the index pre-exists when the transport hits, and signals success immediately. We haven't found a way to include parallel 8 in the transport, and running it in a quiet time is quick (under 10 minutes in test, with fewer CPUs than production has).

Archiving

We suspect that the increase in run time has been gradual, as the CO-PA objects have grown for yet-undetermined reasons. The archiving documentation say these can't be deleted unless all related records have been deleted, so we've got work ahead to get this growth curve under control.

Leftovers

(images that didn't wrap well)

|

|

- Debugging transaction MD04 in SAP S/4HANA in Enterprise Resource Planning Blogs by SAP

- Quick Start guide for PLM system integration 3.0 Implementation in Product Lifecycle Management Blogs by SAP

- CIG transaction tracker handling in Technology Q&A

- Quick Start guide for PLM system integration 3.0 Implementation/Installation in Enterprise Resource Planning Blogs by SAP

- Comprehensive Guideline to SAP VMS in Technology Blogs by Members