- SAP Community

- Products and Technology

- Additional Blogs by Members

- RDBMS system integration using XI 3.0 JDBC Sender/...

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

The J2EE-based Adapter Engine of SAP XI 3.0 provides various adapters that can be used to connect external systems to the Integration Engine. These adapters convert XML and HTTP-based messages to the specific protocols and formats of the respective external systems and the other way around. The XI JDBC Sender adapter enables content (data) to be sent from databases to the integration server/PCK.Let us look at the various architecture approaches possible in this scenario.

Integration approaches for JDBC Sender adapter

- Direct approach where in the XI JDBC adapter is configured to poll the source table

- Indirect approach where in the XI JDBC adapter is configured to use polling/staging table

Direct approach

In this approach, the XI JDBC sender adapter regularly polls the actual source table for data to be posted to XI, based on the user configured polling parameters (poll interval, retry interval etc).The result set that matches the SELECT statement specified in the adapter configuration (Query SQL Statement parameter) is converted into a valid XML document and sent to the integration engine for further processing. The adapter then executes the SQL statement specified in the Update SQL Statement parameter which will probably update a FLAG/STATUS column in the source table so that the data that was polled during the current cycle is marked as READ.

This approach has some disadvantages that are listed below.

- Requires modification of the source table definition to add the STATUS column that is required to prevent polling duplicate data.

- May lead to database deadlock/inconsistency, if other business users also have direct (from the back-end) or indirect (say, using a J2EE application) access to the source table.

- This approach is more intrusive since it operates directly on the customer’s business data.

Indirect approach

In this approach, the actual source table is shielded from XI JDBC adapter by using a polling/staging table. Results of DML operation performed on the source table is reflected to the staging tables by means of database triggers deployed on the source table. The XI JDBC sender adapter polls the staging table for new data and the result of the Query SQL statement is converted to XML document structure and sent to the integration engine.

The various database objects involved in this approach are listed and explained below

- Staging/Polling Table: Staging table is a temporary table that is a replica of the actual table from where data has to be polled and posted to XI. This table, preferably, should be created in a separate schema and should be available for exclusive use by the JDBC Sender adapter of XI. The database scripts to create this staging table can be obtained by exporting table definition of the actual source table. This can be done using third party tools (e.g, TOAD which has provision to export table definition scripts of Oracle database tables as .sql files). These scripts can then be manually modified to include a flag/STATUS column which will be used by the adapter to differentiate data which have already been polled and posted to XI from the data which has not yet been polled and posted to XI.

- Trigger scripts: Trigger scripts are required to move data from actual tables (where DML operations will be performed by business users) to the staging tables (polled by XI JDBC adapter). These triggers should be created and deployed on the actual table thereby enabling on-the-fly transfer of data to staging table (AFTER INSERT/AFTER UPDATE/AFTER DELETE triggers based on the user requirement).

- Purge Scripts: Purge scripts are database scripts that will purge data from the staging table and can be scheduled to run at regular intervals. Usage of purge scripts again depends on the significance of data which is being posted to XI. In some scenarios, it may be required to retain the data in the staging tables (for audit and troubleshooting purposes).

- Access Control Scripts: These scripts are common scripts that have to be created and deployed in the RDBMS system prior to all the above steps. These scripts provide appropriate access (select/update) to the XI JDBC adapter (user name/password are specified as part of adapter connection parameters) on the necessary database schemas.

The pros and cons of the above approach are below

Pros

- The advantages of using this approach is that it is non intrusive (customers do not prefer exposing direct access to their business data), since JDBC adapter polls the staging tables and not the source tables.

- No modification to Source table definition is required.

- Loose coupling between the integration logic and the actual business data thus facilitating flexibility for future modifications/enhancements.

Cons

- Creation of additional database objects/scripts is involved thereby increasing the effort involved for integration.

Integration approaches for JDBC Receiver adapter

- Direct approach where in the XI JDBC adapter is configured to directly save data in the destination table

- Indirect approach using staging tables and database triggers

- Indirect approach using stored procedures and ccBPM

Direct Approach

In this approach, the JDBC receiver adapter is configured to directly update the destination table. SAP XI provides predefined canonical formats based on which schemas (integration metadata) have to be created and made available in the repository. The XI JDBC receiver adapter performs various DML operations (INSERT / UPDATE / DELETE / UPSERT) based on the action keyword specified in the schema.

This approach has certain disadvantages that are listed below

- More intrusive and may lead to reduced performance/deadlocks if other business users have simultaneous access to the same destination tables used by the adapter.

- Integration logic and business logic are tightly coupled there by reducing the flexibility for future enhancements/modifications

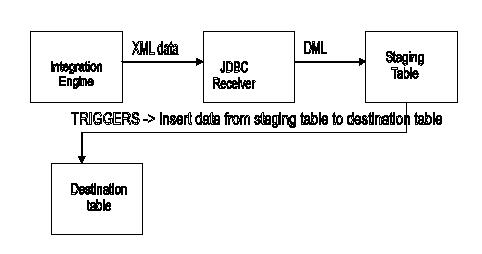

Indirect approach using staging tables and database triggers

In this approach, the JDBC receiver adapter fetches XML data from the integration engine, based on the canonical format used; appropriate DML operation is performed on the staging table. Database triggers deployed on the staging table move the data to the destination tables (AFTER INSERT/AFTER UPDATE/AFTER DELETE triggers).

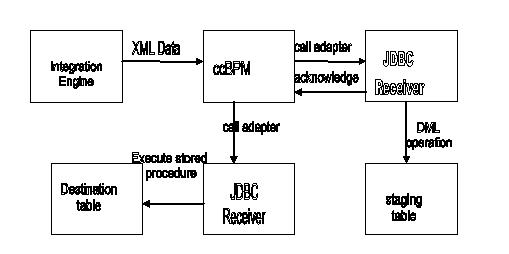

Indirect approach using stored procedures and ccBPM

In this approach, ccBPM is used along with the JDBC receiver adapter. This approach is more flexible and exploits significant features of the JDBC adapter and ccBPM. XML data from the integration engine is fetched by the integration process and passed on to the JDBC receiver adapter which then performs a DML operation on the staging table. The response is sent back to BPM which then executes a call to the PL/SQL stored procedure deployed on the database system. This stored procedure moves data from staging table to the destination table.

Observations

- If the database table involved in the receiver configuration is accessed by other business users, who are using other front-end applications (say for instance, a J2EE web application) or if there are already deployed database scripts (batch scheduled/event based scripts) on these tables for use by other business users, then having XI JDBC adapter directly access this tables is not a good option. Having it directly access the destination tables may adversely impact the performance, be more intrusive, or may lead to database deadlocks/crashes because of database locks by multiple systems

- The business data to be posted from XI to a relational database is collected in an integration process, which then passes on the data to JDBC adapter. At the end of a customer-defined threshold , the integration process can be made to execute a database script( a PL/SQL procedure) which will move the data from temporary staging tables to destination tables(using database Cursor scripts)

- There can be scenarios where in direct manipulation of destination tables is not allowed by the System itself since it may lead to inconsistencies. For instance, consider an Oracle Application 11.5.x system where in Oracle Applications Business objects reside in various schemas distributed across the 11.5.x instance. Though the underlying database of the Apps system can be directly accessed using JDBC, directly manipulating the Base tables may result in data inconsistency and data integrity will be lost. In this case, XI JDBC adapter saves data to temporary staging tables in the database, and then a wrapper PL/SQL stored procedure can be used that calls an Oracle API. This API call will take care of moving data to destination tables in a consistent and vendor recommended manner.

- Creation and deployment of additional database objects has some disadvantages like possibilities of data overflow if staging tables are not purged at regular intervals and troubleshooting is difficult when the API call in wrapper stored procedure fails

- Top Picks: Innovations Highlights from SAP Business Technology Platform (Q1/2024) in Technology Blogs by SAP

- How to build SOAP service in SAP Cloud Integration in Technology Blogs by Members

- HTTP receiver adapter - inbuilt retry option in Technology Q&A

- How to Extend Order Integration from SAP Order Management Foundation to SAP S/4HANA in CRM and CX Blogs by SAP

- Nested JSON to SAP HANA Tables with SAP Integration Suite in Technology Blogs by Members